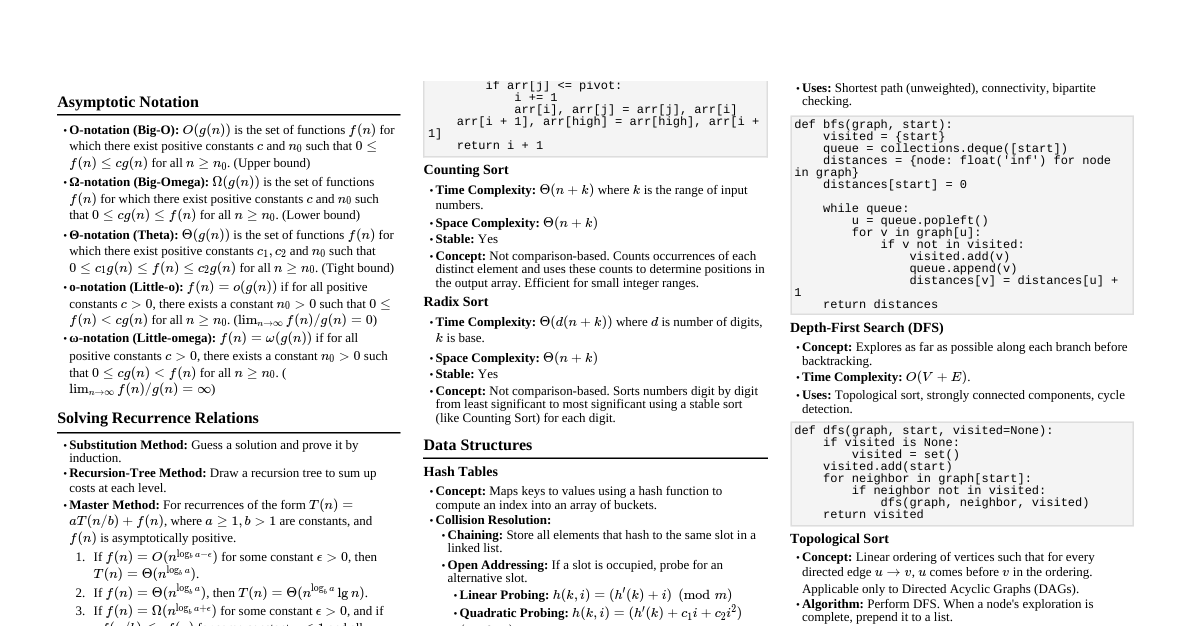

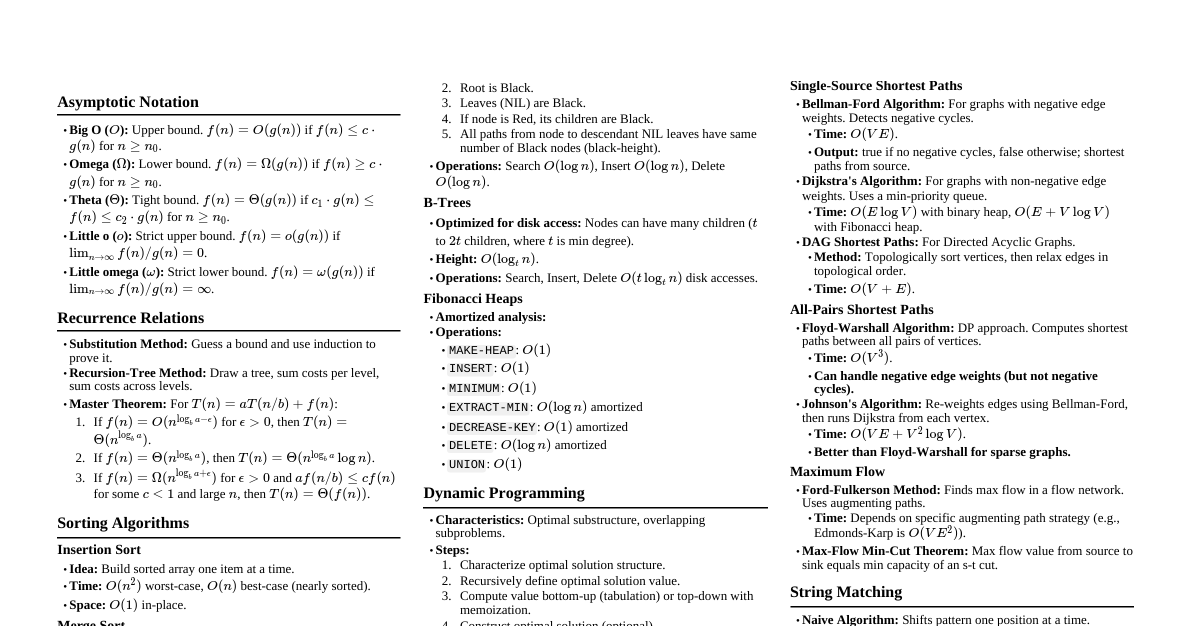

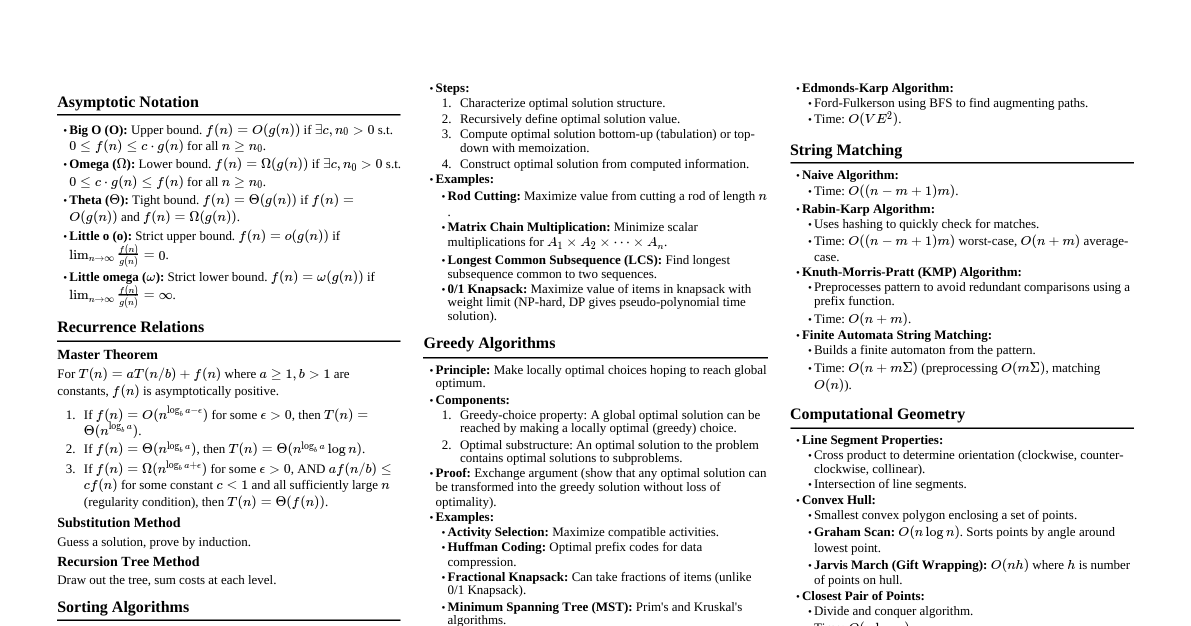

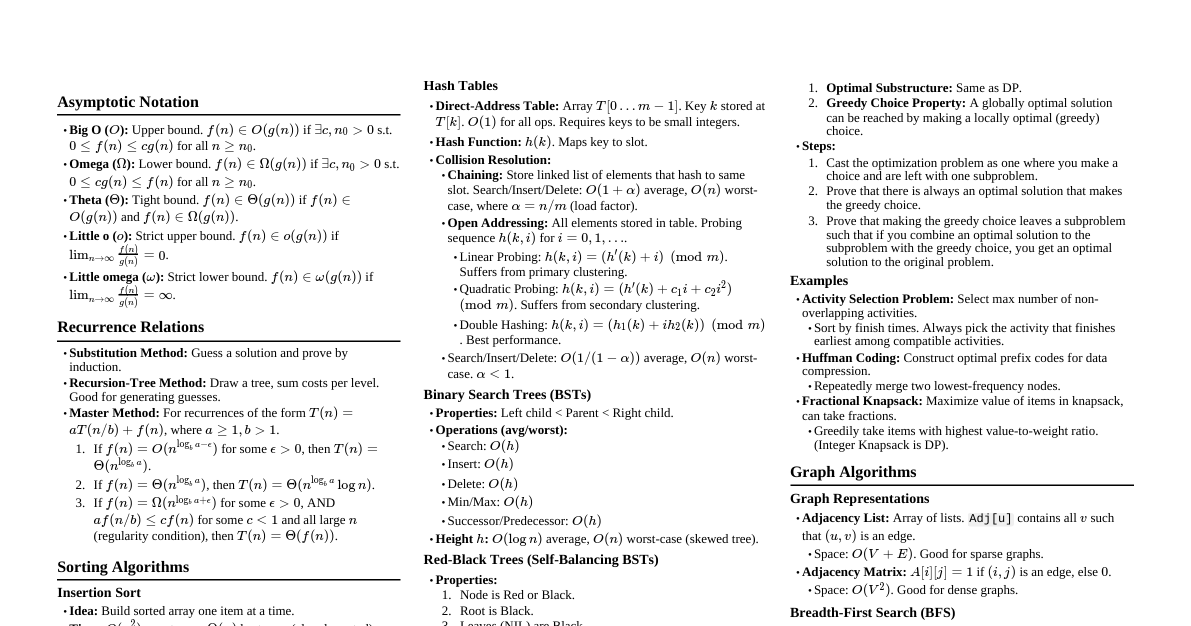

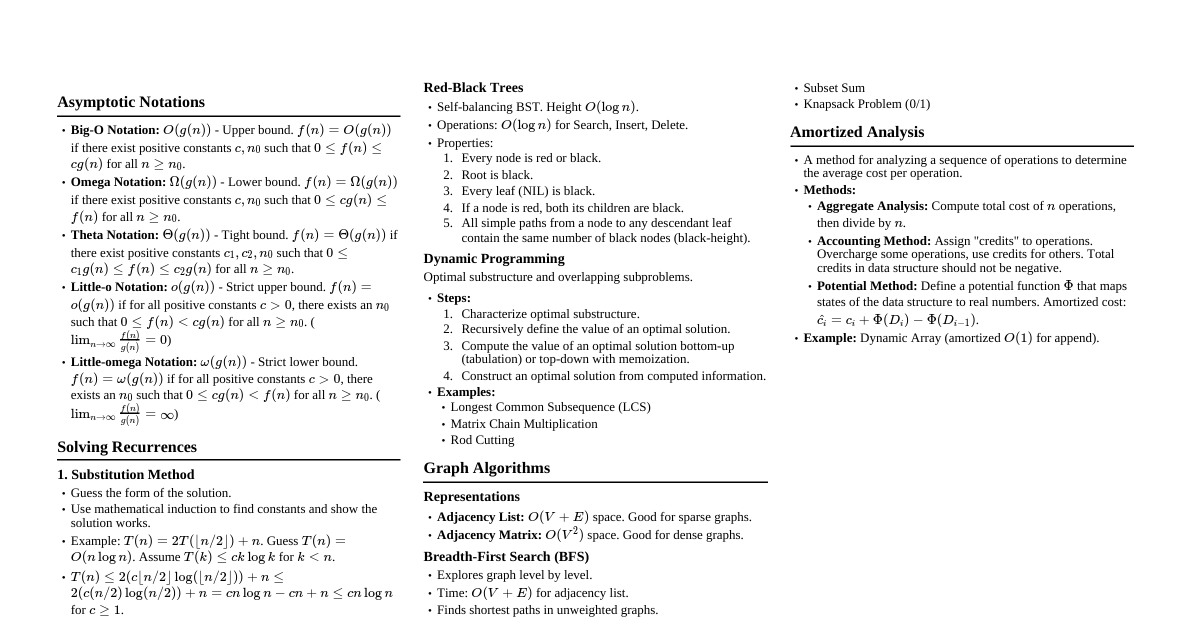

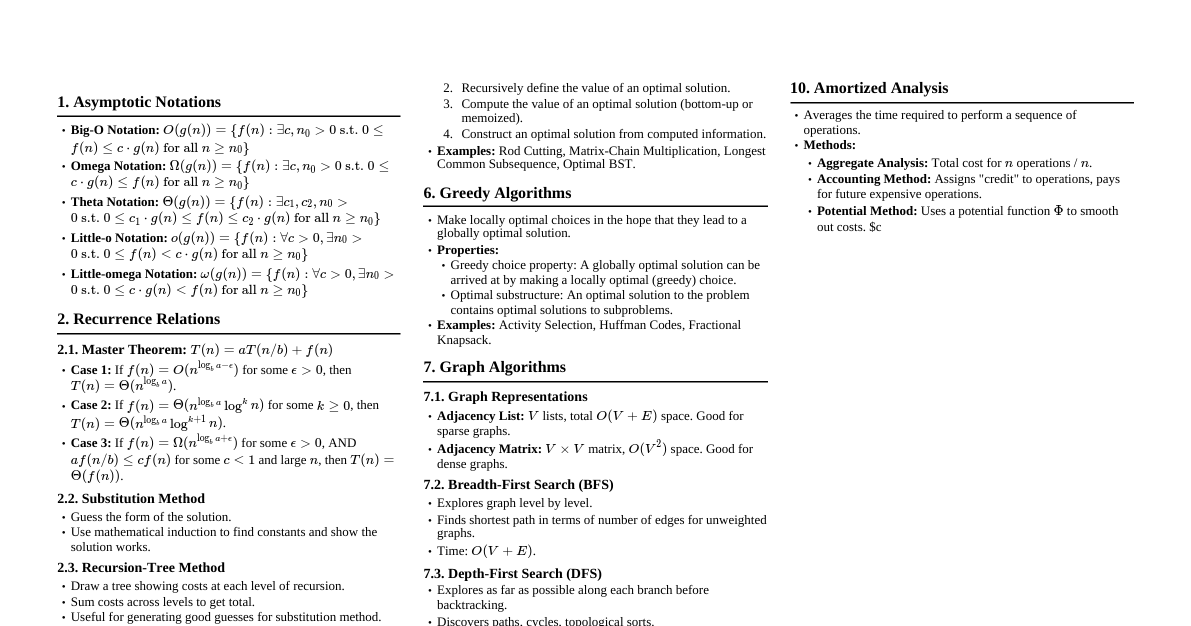

### Asymptotic Notation - **Big O ($O$):** Upper bound. $f(n) \in O(g(n))$ if $\exists c, n_0 > 0$ s.t. $0 \le f(n) \le c g(n)$ for all $n \ge n_0$. - **Omega ($\Omega$):** Lower bound. $f(n) \in \Omega(g(n))$ if $\exists c, n_0 > 0$ s.t. $0 \le c g(n) \le f(n)$ for all $n \ge n_0$. - **Theta ($\Theta$):** Tight bound. $f(n) \in \Theta(g(n))$ if $f(n) \in O(g(n))$ and $f(n) \in \Omega(g(n))$. - **Little o ($o$):** Strict upper bound. $f(n) \in o(g(n))$ if $\lim_{n \to \infty} f(n)/g(n) = 0$. - **Little omega ($\omega$):** Strict lower bound. $f(n) \in \omega(g(n))$ if $\lim_{n \to \infty} f(n)/g(n) = \infty$. ### Recurrences - **Substitution Method:** Guess a solution and prove by induction. - **Recursion-Tree Method:** Draw a tree, sum costs per level, sum all levels. - **Master Method:** For $T(n) = aT(n/b) + f(n)$ 1. If $f(n) \in O(n^{\log_b a - \epsilon})$ for some $\epsilon > 0$, then $T(n) \in \Theta(n^{\log_b a})$. 2. If $f(n) \in \Theta(n^{\log_b a})$, then $T(n) \in \Theta(n^{\log_b a} \log n)$. 3. If $f(n) \in \Omega(n^{\log_b a + \epsilon})$ for some $\epsilon > 0$, and if $a f(n/b) \le c f(n)$ for some $c ### Sorting Algorithms - **Insertion Sort:** - Time: $O(n^2)$ worst-case, $O(n)$ best-case. - Space: $O(1)$. - Stable: Yes. - In-place: Yes. - **Merge Sort:** - Time: $O(n \log n)$ always. - Space: $O(n)$. - Stable: Yes. - In-place: No. - Recurrence: $T(n) = 2T(n/2) + \Theta(n)$. - **Heap Sort:** - Time: $O(n \log n)$ always. - Space: $O(1)$. - Stable: No. - In-place: Yes. - Uses a max-heap. `BUILD-MAX-HEAP`: $O(n)$. `HEAP-EXTRACT-MAX`: $O(\log n)$. - **Quick Sort:** - Time: $O(n \log n)$ average-case, $O(n^2)$ worst-case. - Space: $O(\log n)$ average-case (due to recursion stack). - Stable: No. - In-place: Yes. - `PARTITION` procedure is key. Random pivot helps avoid worst-case. - **Counting Sort:** - Time: $O(n+k)$ where $k$ is range of input numbers. - Space: $O(n+k)$. - Stable: Yes. - Not comparison sort. - **Radix Sort:** - Time: $O(d(n+k))$ where $d$ is number of digits. - Space: $O(n+k)$. - Stable: Yes, if underlying sort (e.g., Counting Sort) is stable. ### Data Structures #### Hash Tables - **Direct-address table:** $O(1)$ without collisions. - **Collision Resolution:** - **Chaining:** Linked lists for collisions. Average $O(1+\alpha)$ for search/insert/delete, where $\alpha=n/m$ (load factor). Worst $O(n)$. - **Open Addressing:** Probe for next empty slot. - Linear Probing: $h(k, i) = (h'(k) + i) \pmod m$. Suffers from primary clustering. - Quadratic Probing: $h(k, i) = (h'(k) + c_1 i + c_2 i^2) \pmod m$. Suffers from secondary clustering. - Double Hashing: $h(k, i) = (h_1(k) + i h_2(k)) \pmod m$. Best performance. #### Binary Search Trees (BST) - **Properties:** Left child ### Dynamic Programming - **Steps:** 1. Characterize optimal substructure. 2. Recursively define the value of an optimal solution. 3. Compute the value of an optimal solution (bottom-up). 4. Construct an optimal solution. - **Key Idea:** Overlapping subproblems and optimal substructure. - **Examples:** - **Rod Cutting:** $R_n = \max_{1 \le i \le n} (p_i + R_{n-i})$. - **Matrix-Chain Multiplication:** $m[i,j] = \min_{i \le k ### Greedy Algorithms - **Properties:** 1. **Greedy-choice property:** A globally optimal solution can be reached by making a locally optimal (greedy) choice. 2. **Optimal substructure:** An optimal solution to the problem contains optimal solutions to subproblems. - **Examples:** - **Activity Selection:** Always pick the activity that finishes earliest. - **Huffman Codes:** Build tree by repeatedly merging two lowest-frequency nodes. - **Fractional Knapsack Problem:** Take items with highest value-to-weight ratio. (0/1 Knapsack is DP). ### Graph Algorithms #### Graph Representations - **Adjacency List:** $O(V+E)$ space. Good for sparse graphs. - **Adjacency Matrix:** $O(V^2)$ space. Good for dense graphs. #### Breadth-First Search (BFS) - **Purpose:** Find shortest path in unweighted graph, traverse layers. - **Time:** $O(V+E)$. - **Data Structure:** Queue. #### Depth-First Search (DFS) - **Purpose:** Traverse all reachable vertices, detect cycles, topological sort. - **Time:** $O(V+E)$. - **Data Structure:** Stack (or recursion). - **Output:** Discovery and finishing times, forest of trees. #### Topological Sort - **Purpose:** Linear ordering of vertices such that for every directed edge $(u,v)$, $u$ comes before $v$. Only for DAGs. - **Algorithm:** Run DFS, then list vertices in decreasing order of finishing times. - **Time:** $O(V+E)$. #### Minimum Spanning Trees (MST) - **Properties:** - **Cut Property:** Any cut (partition of vertices into two sets) has a minimum-weight edge crossing it (unless all edges crossing it have same weight). - **Cycle Property:** The maximum-weight edge on any cycle is not in any MST. - **Prim's Algorithm:** - Builds MST by adding cheapest edge from current tree to a vertex not yet in tree. - Time: $O(E \log V)$ with binary heap, $O(E + V \log V)$ with Fibonacci heap. - **Kruskal's Algorithm:** - Sorts all edges by weight, adds cheapest edge that doesn't form a cycle. - Time: $O(E \log E)$ or $O(E \log V)$ using Disjoint Set Union. #### Single-Source Shortest Paths - **Bellman-Ford Algorithm:** - Works with negative edge weights (detects negative cycles). - Time: $O(VE)$. - Relaxes edges $V-1$ times. - If after $V$-th relaxation, any distance changes, a negative cycle exists. - **Dijkstra's Algorithm:** - Works only with non-negative edge weights. - Time: $O(E \log V)$ with binary heap, $O(E + V \log V)$ with Fibonacci heap. - Uses a min-priority queue. - **DAG Shortest Paths:** - Compute topological sort, then relax edges in that order. - Time: $O(V+E)$. - Works with negative edge weights. #### All-Pairs Shortest Paths - **Floyd-Warshall Algorithm:** - Dynamic programming approach. - Time: $O(V^3)$. - Works with negative edge weights (detects negative cycles). - $d^{(k)}_{ij} = \min(d^{(k-1)}_{ij}, d^{(k-1)}_{ik} + d^{(k-1)}_{kj})$. - **Johnson's Algorithm:** - Re-weights graph edges to be non-negative using Bellman-Ford, then runs Dijkstra $V$ times. - Time: $O(VE + V^2 \log V)$ with binary heap, $O(VE + V^2 \log V)$ with Fibonacci heap. - Good for sparse graphs ($E \ll V^2$). #### Maximum Flow - **Max-Flow Min-Cut Theorem:** The maximum value of an s-t flow is equal to the minimum capacity of an s-t cut. - **Ford-Fulkerson Method:** - Repeatedly find augmenting paths in the residual graph and push flow. - Time: Can be exponential without good path selection. - **Edmonds-Karp Algorithm:** Uses BFS to find augmenting paths. Time: $O(VE^2)$. ### String Matching - **Naive Algorithm:** $O((n-m+1)m)$. - **Rabin-Karp Algorithm:** - Uses hashing to quickly check for matches. - Average Time: $O(n+m)$. Worst-case: $O((n-m+1)m)$. - Requires modular arithmetic. - **Knuth-Morris-Pratt (KMP) Algorithm:** - Uses a precomputed `prefix function` to avoid re-matching characters. - Time: $O(n+m)$. - Preprocessing `prefix function`: $O(m)$. ### NP-Completeness - **P (Polynomial Time):** Problems solvable in polynomial time. - **NP (Nondeterministic Polynomial Time):** Problems whose solutions can be verified in polynomial time. - **NP-Hard:** A problem $H$ is NP-hard if for every problem $L \in NP$, $L \le_P H$ (L can be reduced to H in polynomial time). - **NP-Complete:** A problem $C$ is NP-complete if $C \in NP$ and $C$ is NP-hard. - **Common NP-Complete Problems:** - **SAT (Satisfiability):** Given a boolean formula, is there an assignment that makes it true? - **3-SAT:** SAT where each clause has exactly 3 literals. - **Clique:** Does a graph contain a clique of size $k$? - **Vertex Cover:** Does a graph have a vertex cover of size $k$? - **Hamiltonian Cycle:** Does a graph contain a Hamiltonian cycle? - **Traveling Salesperson Problem (TSP):** Find shortest tour visiting all cities. - **Subset Sum:** Is there a subset of numbers that sums to $k$? - **Knapsack (0/1):** Maximize value of items with weight constraint. ### Approximation Algorithms - **Purpose:** Find near-optimal solutions for NP-hard problems in polynomial time. - **Approximation Ratio ($\rho(n)$):** - For minimization problem: $\rho(n) = C / C^* \ge 1$. - For maximization problem: $\rho(n) = C^* / C \ge 1$. - Where $C$ is solution found by algorithm, $C^*$ is optimal solution. - **Examples:** - **Vertex Cover:** Greedy 2-approximation: repeatedly pick an edge and add both its endpoints to the cover, then remove all incident edges. Ratio = 2. - **Traveling Salesperson Problem (TSP) with Triangle Inequality:** 1. Compute MST. 2. Perform DFS traversal of MST, listing vertices in order visited. 3. Create tour by connecting vertices in DFS order. - Ratio = 2.