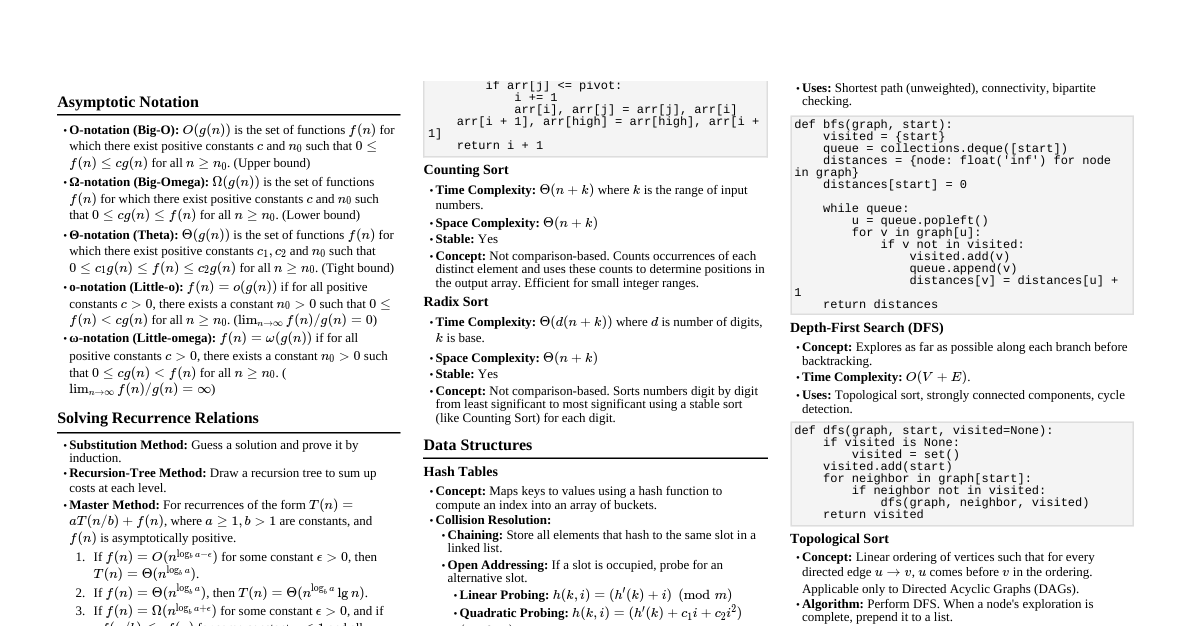

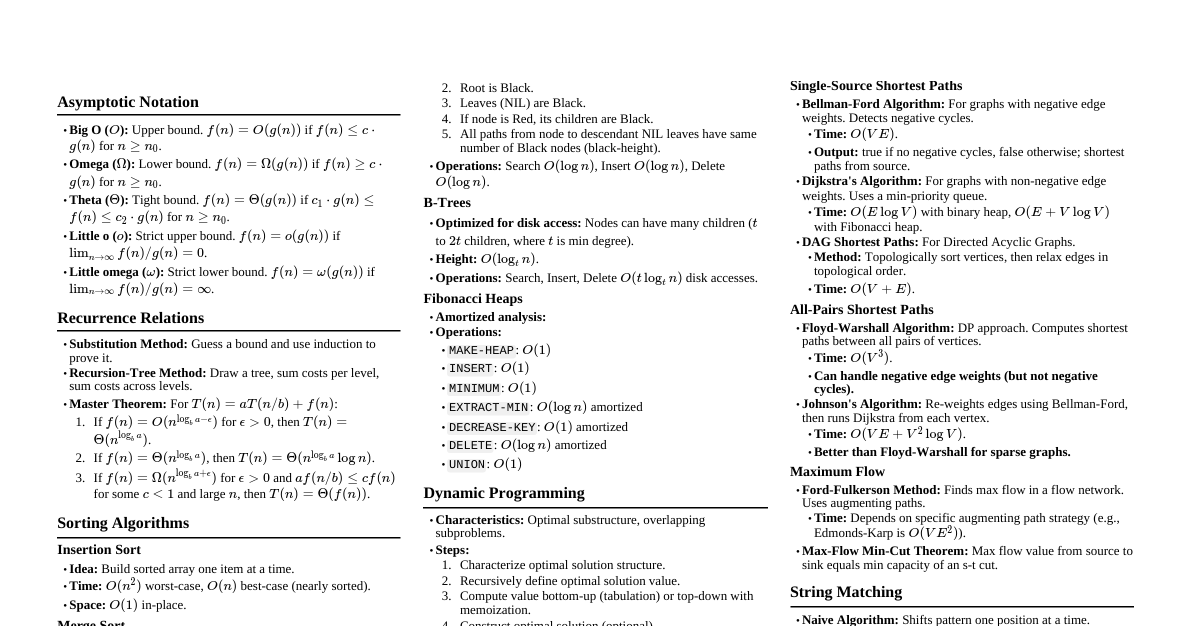

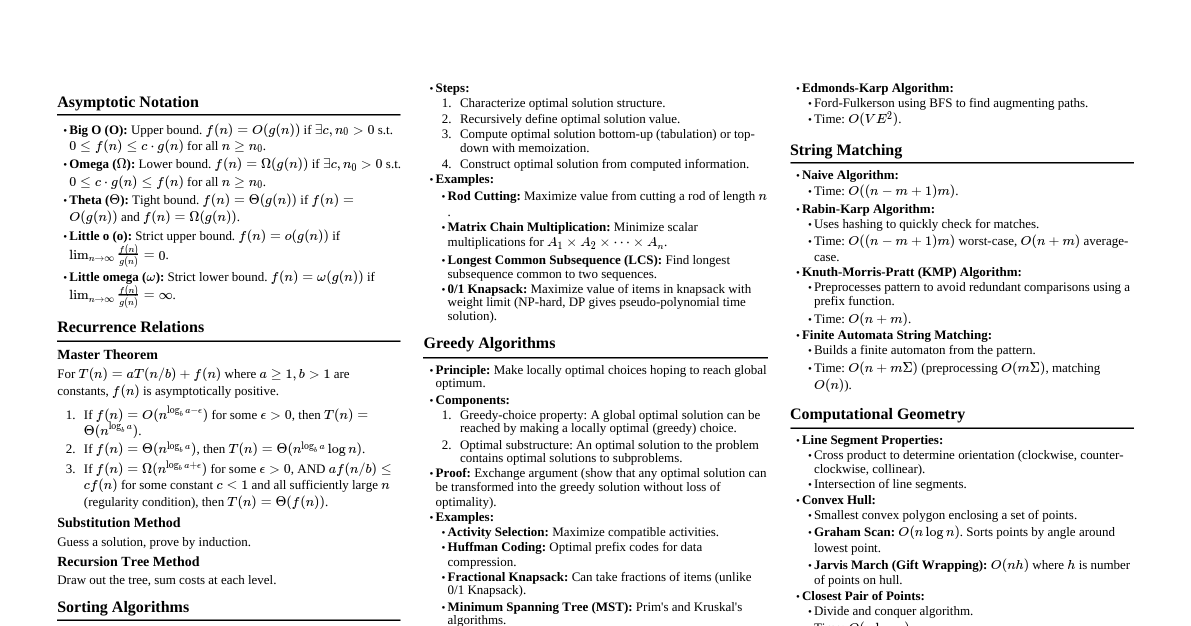

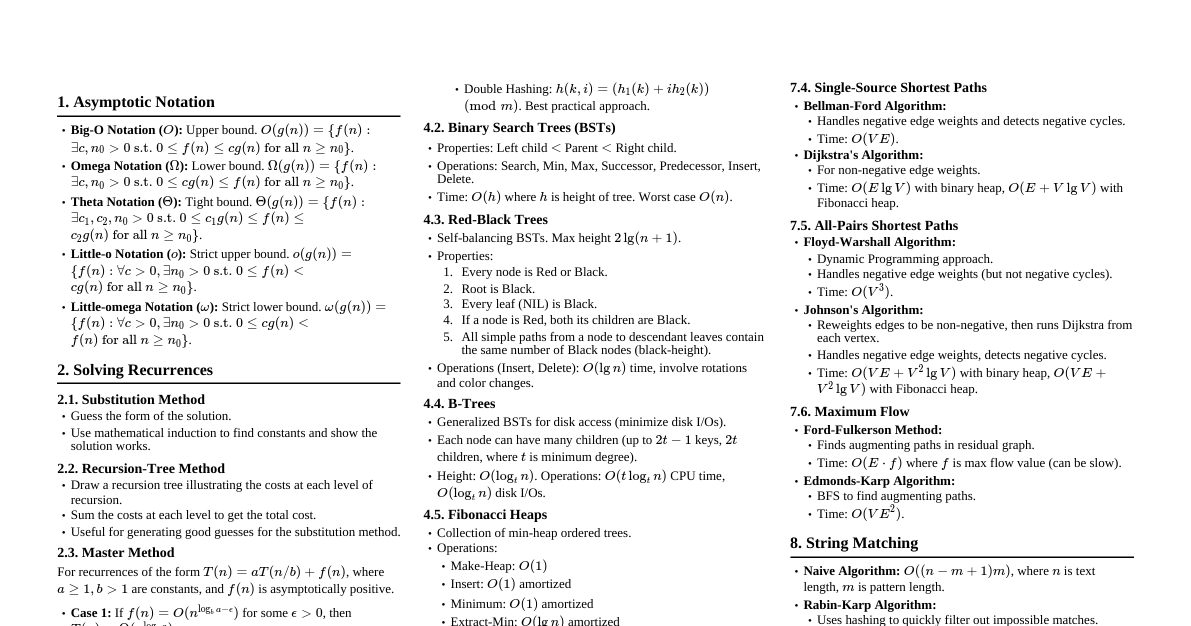

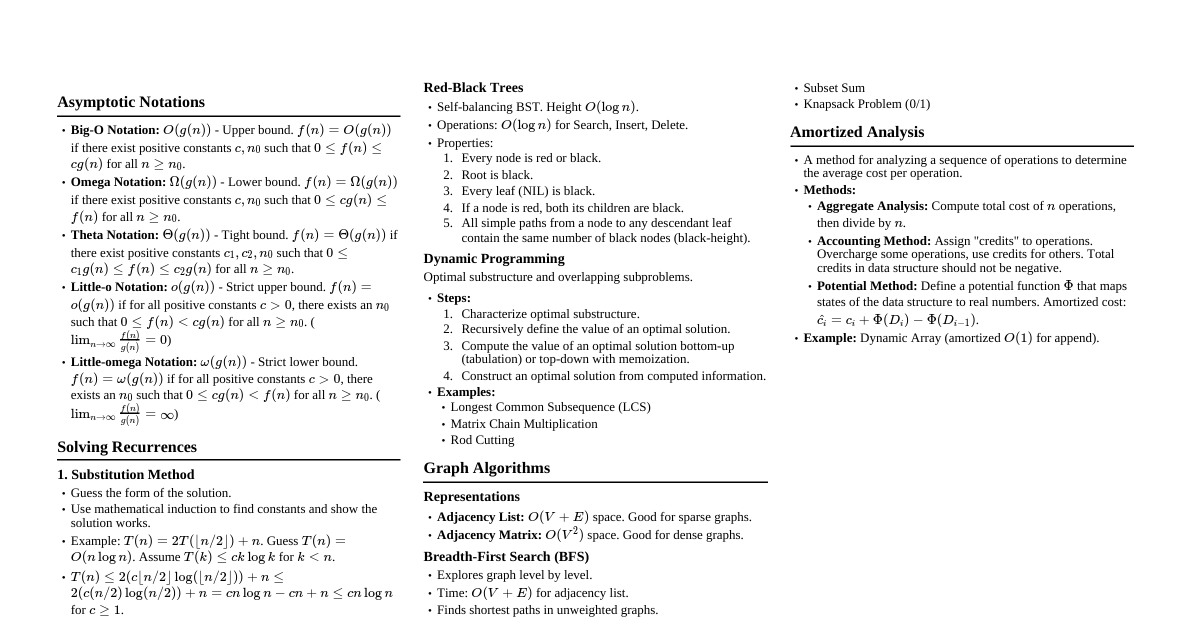

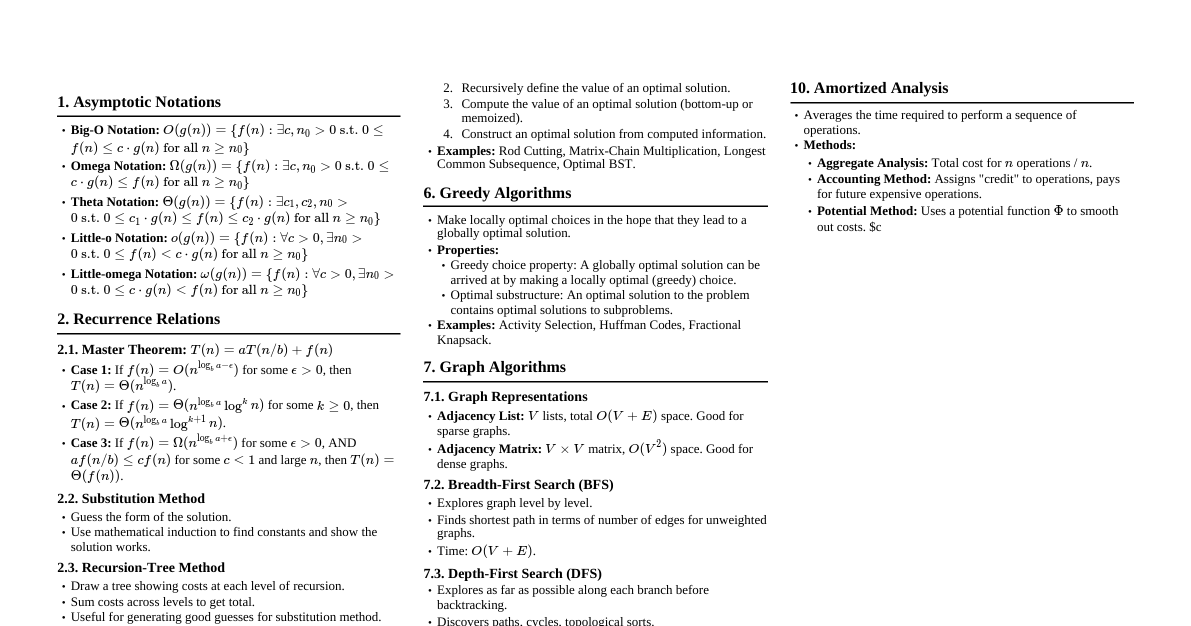

### Asymptotic Notation - **Big O ($O$):** Upper bound. $f(n) \in O(g(n))$ if $\exists c, n_0 > 0$ s.t. $0 \le f(n) \le c g(n)$ for all $n \ge n_0$. - **Omega ($\Omega$):** Lower bound. $f(n) \in \Omega(g(n))$ if $\exists c, n_0 > 0$ s.t. $0 \le c g(n) \le f(n)$ for all $n \ge n_0$. - **Theta ($\Theta$):** Tight bound. $f(n) \in \Theta(g(n))$ if $f(n) \in O(g(n))$ and $f(n) \in \Omega(g(n))$. - **Little o ($o$):** Strict upper bound. $f(n) \in o(g(n))$ if $\lim_{n \to \infty} \frac{f(n)}{g(n)} = 0$. - **Little omega ($\omega$):** Strict lower bound. $f(n) \in \omega(g(n))$ if $\lim_{n \to \infty} \frac{f(n)}{g(n)} = \infty$. ### Recurrence Relations - **Substitution Method:** Guess a solution and prove by induction. - **Recursion-Tree Method:** Draw a tree, sum costs per level. Good for generating guesses. - **Master Method:** For recurrences of the form $T(n) = aT(n/b) + f(n)$, where $a \ge 1, b > 1$. 1. If $f(n) = O(n^{\log_b a - \epsilon})$ for some $\epsilon > 0$, then $T(n) = \Theta(n^{\log_b a})$. 2. If $f(n) = \Theta(n^{\log_b a})$, then $T(n) = \Theta(n^{\log_b a} \log n)$. 3. If $f(n) = \Omega(n^{\log_b a + \epsilon})$ for some $\epsilon > 0$, AND $a f(n/b) \le c f(n)$ for some $c ### Sorting Algorithms #### Insertion Sort - **Idea:** Build sorted array one item at a time. - **Time:** $O(n^2)$ worst-case, $\Theta(n)$ best-case (already sorted). - **Space:** $O(1)$ in-place. - **Stable:** Yes. #### Merge Sort - **Idea:** Divide array in half, recursively sort each half, then merge. - **Time:** $\Theta(n \log n)$ worst-case. - **Space:** $O(n)$ aux space for merging. - **Stable:** Yes. - **Recurrence:** $T(n) = 2T(n/2) + \Theta(n)$. #### Heap Sort - **Idea:** Build a max-heap, then repeatedly extract max and rebuild heap. - **Time:** $\Theta(n \log n)$ worst-case. - **Space:** $O(1)$ in-place. - **Stable:** No. - **Build-Max-Heap:** $O(n)$. - **Heapify:** $O(\log n)$. #### Quick Sort - **Idea:** Divide and conquer. Pick a pivot, partition array around pivot, recursively sort sub-arrays. - **Time:** $O(n \log n)$ average-case, $O(n^2)$ worst-case (bad pivot choice). - **Space:** $O(\log n)$ average-case (recursion stack), $O(n)$ worst-case. - **Stable:** No. - **Partition:** $\Theta(n)$. - **Randomized Quick Sort:** Guarantees $O(n \log n)$ expected time. #### Counting Sort - **Idea:** For integers in a small range $[0, k]$. Count occurrences of each number, then use counts to place elements. - **Time:** $\Theta(n+k)$. - **Space:** $\Theta(n+k)$. - **Stable:** Yes. - **Limitations:** Only for integers, range $k$ must not be too large. #### Radix Sort - **Idea:** Sorts numbers by processing individual digits. Stable sort (like Counting Sort) is used for each digit. - **Time:** $\Theta(d(n+k))$ where $d$ is number of digits, $k$ is max digit value. - **Space:** $\Theta(n+k)$ - **Limitations:** Works best when numbers have similar number of digits and digit range $k$ is small. ### Data Structures #### Linked Lists - **Singly:** Each node points to next. - **Doubly:** Each node points to next and previous. - **Circular:** Last node points to first. - **Operations:** - Search: $O(n)$ - Insert: $O(1)$ (at head/tail if pointers maintained) - Delete: $O(1)$ (given pointer to node), $O(n)$ (given key) #### Stacks & Queues - **Stack (LIFO):** Push, Pop, Peek. All $O(1)$. - **Queue (FIFO):** Enqueue, Dequeue, Peek. All $O(1)$. #### Hash Tables - **Direct-Address Table:** Array $T[0 \dots m-1]$. Key $k$ stored at $T[k]$. $O(1)$ for all ops. Requires keys to be small integers. - **Hash Function:** $h(k)$. Maps key to slot. - **Collision Resolution:** - **Chaining:** Store linked list of elements that hash to same slot. Search/Insert/Delete: $O(1 + \alpha)$ average, $O(n)$ worst-case, where $\alpha = n/m$ (load factor). - **Open Addressing:** All elements stored in table. Probing sequence $h(k, i)$ for $i=0, 1, \dots$. - Linear Probing: $h(k, i) = (h'(k) + i) \pmod m$. Suffers from primary clustering. - Quadratic Probing: $h(k, i) = (h'(k) + c_1 i + c_2 i^2) \pmod m$. Suffers from secondary clustering. - Double Hashing: $h(k, i) = (h_1(k) + i h_2(k)) \pmod m$. Best performance. - Search/Insert/Delete: $O(1/(1-\alpha))$ average, $O(n)$ worst-case. $\alpha ### Dynamic Programming - **Characteristics:** 1. **Optimal Substructure:** Optimal solution to problem contains optimal solutions to subproblems. 2. **Overlapping Subproblems:** Recursive algorithm revisits same subproblems repeatedly. - **Steps:** 1. Characterize optimal substructure. 2. Recursively define value of optimal solution. 3. Compute value of optimal solution (bottom-up typically). 4. Construct optimal solution from computed information. #### Examples - **Rod Cutting:** Maximize revenue by cutting rod into pieces. - $R[i] = \max_{1 \le j \le i} (p_j + R[i-j])$ - **Matrix-Chain Multiplication:** Parenthesize chain of matrices to minimize scalar multiplications. - $m[i,j] = \min_{i \le k ### Greedy Algorithms - **Characteristics:** 1. **Optimal Substructure:** Same as DP. 2. **Greedy Choice Property:** A globally optimal solution can be reached by making a locally optimal (greedy) choice. - **Steps:** 1. Cast the optimization problem as one where you make a choice and are left with one subproblem. 2. Prove that there is always an optimal solution that makes the greedy choice. 3. Prove that making the greedy choice leaves a subproblem such that if you combine an optimal solution to the subproblem with the greedy choice, you get an optimal solution to the original problem. #### Examples - **Activity Selection Problem:** Select max number of non-overlapping activities. - Sort by finish times. Always pick the activity that finishes earliest among compatible activities. - **Huffman Coding:** Construct optimal prefix codes for data compression. - Repeatedly merge two lowest-frequency nodes. - **Fractional Knapsack:** Maximize value of items in knapsack, can take fractions. - Greedily take items with highest value-to-weight ratio. (Integer Knapsack is DP). ### Graph Algorithms #### Graph Representations - **Adjacency List:** Array of lists. `Adj[u]` contains all $v$ such that $(u,v)$ is an edge. - Space: $O(V+E)$. Good for sparse graphs. - **Adjacency Matrix:** $A[i][j] = 1$ if $(i,j)$ is an edge, else $0$. - Space: $O(V^2)$. Good for dense graphs. #### Breadth-First Search (BFS) - **Explores:** All vertices at depth $k$ before depth $k+1$. - **Uses:** Queue. - **Applications:** Shortest path in unweighted graphs, connectivity, bipartite graphs. - **Time:** $O(V+E)$. #### Depth-First Search (DFS) - **Explores:** As far as possible along each branch before backtracking. - **Uses:** Stack (or recursion). - **Applications:** Topological sort, strongly connected components, cycle detection. - **Time:** $O(V+E)$. #### Topological Sort - **Applies to:** Directed Acyclic Graphs (DAGs). - **Idea:** Linear ordering of vertices such that for every directed edge $(u,v)$, $u$ comes before $v$. - **Algorithm:** 1. Run DFS. 2. As each vertex finishes (all its descendants visited), insert it onto front of a linked list. - **Time:** $O(V+E)$. #### Minimum Spanning Trees (MST) - **Goal:** Find a subset of edges that connects all vertices, has no cycles, and has minimum total edge weight. - **Properties:** - **Cut Property:** For any cut (partition of vertices into two non-empty sets), if an edge $(u,v)$ is a light edge crossing the cut, it can be part of some MST. - **Cycle Property:** If a light edge $(u,v)$ is not in a cycle, then it can be part of some MST. ##### Prim's Algorithm - **Idea:** Grow MST from a starting vertex. Always add the cheapest edge connecting a vertex in the MST to a vertex outside. - **Implementation:** Priority queue (min-heap) for edges. - **Time:** $O(E \log V)$ with binary heap, $O(E + V \log V)$ with Fibonacci heap. ##### Kruskal's Algorithm - **Idea:** Sort all edges by weight. Add edges in increasing order, skipping edges that would form a cycle. - **Implementation:** Disjoint-set data structure to detect cycles. - **Time:** $O(E \log E)$ or $O(E \log V)$ (since $E \le V^2$, $\log E = O(\log V)$) using union-find. #### Single-Source Shortest Paths ##### Bellman-Ford Algorithm - **Applies to:** Graphs with negative edge weights (but no negative cycles reachable from source). - **Idea:** Relax all edges $V-1$ times. Then check for negative cycles. - **Time:** $O(VE)$. ##### Dijkstra's Algorithm - **Applies to:** Graphs with non-negative edge weights. - **Idea:** Greedily select the vertex with the smallest shortest-path estimate that has not yet been processed. - **Implementation:** Priority queue (min-heap). - **Time:** $O(E \log V)$ with binary heap, $O(E + V \log V)$ with Fibonacci heap. #### All-Pairs Shortest Paths ##### Floyd-Warshall Algorithm - **Idea:** Dynamic programming. Iteratively updates shortest path between all pairs using intermediate vertices $k$. - **$D^{(k)}_{ij} = \min(D^{(k-1)}_{ij}, D^{(k-1)}_{ik} + D^{(k-1)}_{kj})$** - **Time:** $O(V^3)$. - **Can handle:** Negative edge weights (but no negative cycles). ##### Johnson's Algorithm - **Idea:** Reweights edges to be non-negative, then runs Dijkstra from each vertex. - **Steps:** 1. Add new vertex $s$, add 0-weight edges from $s$ to all other vertices. 2. Run Bellman-Ford from $s$ to find $h(v)$ for all $v$. If negative cycle, stop. 3. Reweight edges: $w'(u,v) = w(u,v) + h(u) - h(v)$. New weights are non-negative. 4. Run Dijkstra from each vertex $u$ using $w'$. 5. Convert back to original weights: $\delta(u,v) = \delta'(u,v) - h(u) + h(v)$. - **Time:** $O(VE + V^2 \log V)$ with binary heap, $O(VE + V^2 \log V)$ with Fibonacci heap. Better than Floyd-Warshall for sparse graphs. ### Maximum Flow #### Max-Flow Min-Cut Theorem - The value of a max flow is equal to the capacity of a min cut. - **Cut $(S,T)$:** Partition of vertices into $S$ (containing source $s$) and $T$ (containing sink $t$). - **Capacity of cut:** $\sum_{u \in S, v \in T} c(u,v)$. #### Ford-Fulkerson Method - **Idea:** Repeatedly find an augmenting path in the residual graph and push flow along it until no more paths exist. - **Augmenting Path:** Path from $s$ to $t$ in residual graph with positive residual capacity. - **Residual Graph:** $G_f = (V, E_f)$. $E_f$ contains edges $(u,v)$ if $c_f(u,v) > 0$. - **Residual Capacity:** $c_f(u,v) = c(u,v) - f(u,v)$. - **Time:** $O(E \cdot f_{max})$ (if capacities are integers) or can be infinite (if capacities are irrational). - **Edmonds-Karp Algorithm:** Ford-Fulkerson using BFS to find shortest augmenting path. - **Time:** $O(VE^2)$. ### String Matching #### Naive Algorithm - **Idea:** Slide pattern $P$ over text $T$ and check for match at each position. - **Time:** $O((n-m+1)m)$, worst-case $O(nm)$. #### Rabin-Karp Algorithm - **Idea:** Uses hashing to quickly filter out impossible shifts. Compares hash values of pattern and text substrings. - **Precomputation:** $O(m)$. - **Matching:** $O(n+m)$ average-case, $O(nm)$ worst-case (spurious hits). - **Uses:** Rolling hash function. #### Knuth-Morris-Pratt (KMP) Algorithm - **Idea:** Precomputes a "prefix function" ($\pi$) for the pattern to avoid redundant comparisons when a mismatch occurs. $O(m)$ precomputation. - **Prefix function $\pi[q]$:** Length of the longest proper prefix of $P_q$ that is also a suffix of $P_q$. - **Matching:** $O(n)$. - **Total Time:** $O(n+m)$. ### Computational Geometry #### Convex Hull - **Goal:** Smallest convex polygon enclosing a set of points. - **Algorithms:** - **Graham Scan:** Sorts points by polar angle, then uses a stack to maintain candidate hull vertices. $O(n \log n)$. - **Jarvis March (Gift Wrapping):** Finds extreme points one by one. $O(nh)$ where $h$ is number of hull vertices. - **Quickhull:** Divide and conquer, similar to quicksort. $O(n \log n)$ average, $O(n^2)$ worst-case. #### Closest Pair of Points - **Goal:** Find two points in a set with the minimum distance. - **Algorithm (Divide and Conquer):** 1. Sort points by X-coordinate. 2. Divide into two halves. Recursively find closest pair in each half. Let $\delta$ be min of two results. 3. Consider points in a $2\delta$-wide strip around the dividing line. Sort these points by Y-coordinate. 4. For each point in strip, check only a constant number of next points (at most 7) for closer pair. - **Time:** $O(n \log n)$. ### NP-Completeness - **P (Polynomial Time):** Class of decision problems solvable in polynomial time by a deterministic Turing machine. - **NP (Nondeterministic Polynomial Time):** Class of decision problems solvable in polynomial time by a nondeterministic Turing machine. (Verifiable in polynomial time by a deterministic TM). - **NP-Hard:** A problem $H$ is NP-hard if every problem $L \in NP$ can be polynomial-time reduced to $H$. - **NP-Complete:** A problem $C$ is NP-complete if $C \in NP$ and $C$ is NP-hard. - **Reducibility ($L_1 \le_P L_2$):** Problem $L_1$ can be transformed into problem $L_2$ in polynomial time. If $L_1 \le_P L_2$ and $L_2 \in P$, then $L_1 \in P$. If $L_1 \le_P L_2$ and $L_1$ is NP-hard, then $L_2$ is NP-hard. #### Common NP-Complete Problems - **SAT (Satisfiability):** Given a boolean formula, is there an assignment of variables that makes it true? (First NP-complete problem). - **3-SAT:** SAT where clauses have exactly 3 literals. - **Vertex Cover:** Given a graph $G=(V,E)$ and integer $k$, does there exist a vertex cover of size at most $k$? - **Clique:** Given a graph $G=(V,E)$ and integer $k$, does there exist a clique of size at least $k$? - **Hamiltonian Cycle:** Given a graph $G=(V,E)$, does it contain a Hamiltonian cycle? - **Traveling Salesperson Problem (TSP):** Given a list of cities and distances, find shortest possible route visiting each city exactly once and returning to origin. (Optimization version is NP-hard, decision version is NP-complete). - **Subset Sum:** Given a set of integers $S$ and an integer $T$, is there a subset of $S$ that sums to $T$? - **Knapsack (0/1):** Given items with weights and values, and a max weight $W$, choose items to maximize total value without exceeding $W$. (Decision version is NP-complete).