OCI Data Science 1Z0-1110-25 Cheat Sheet

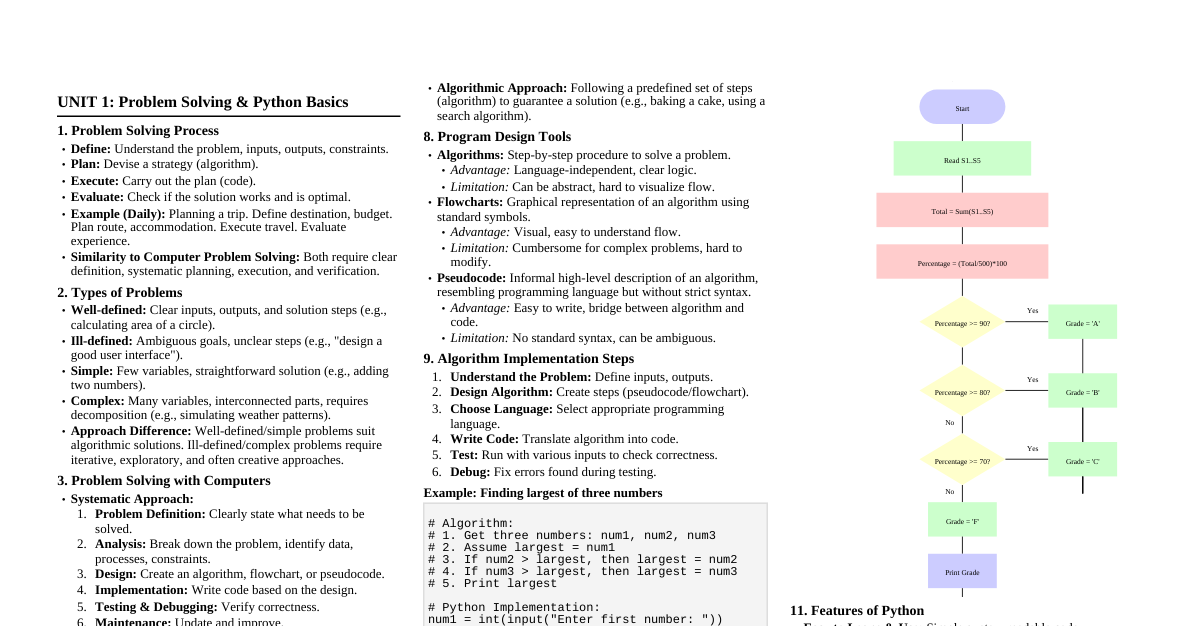

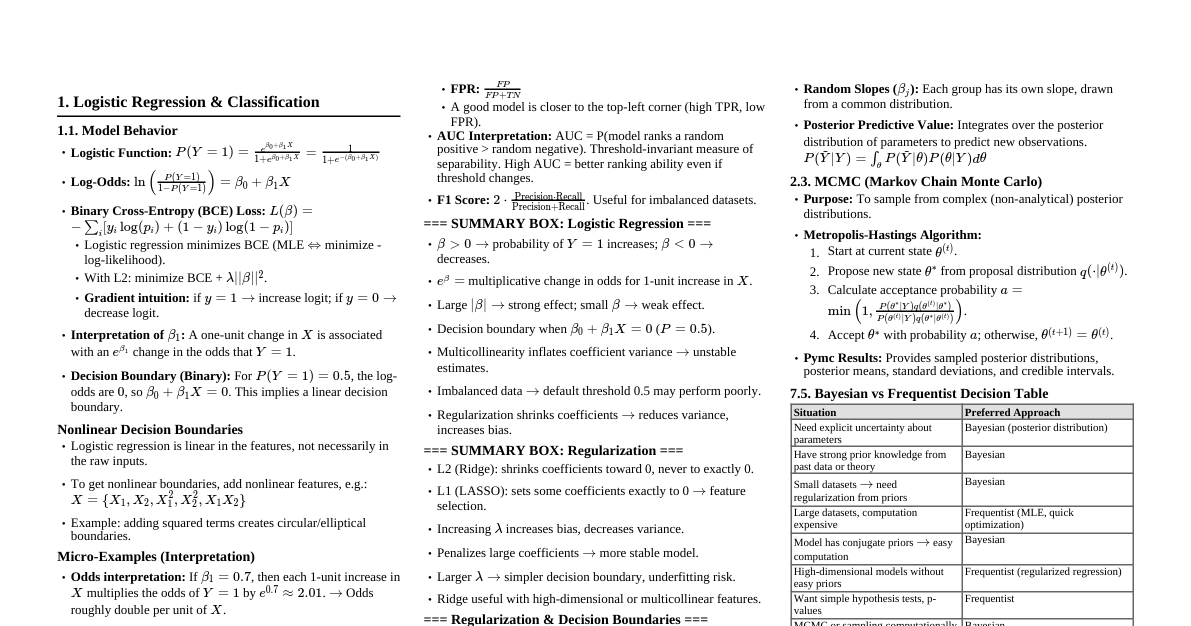

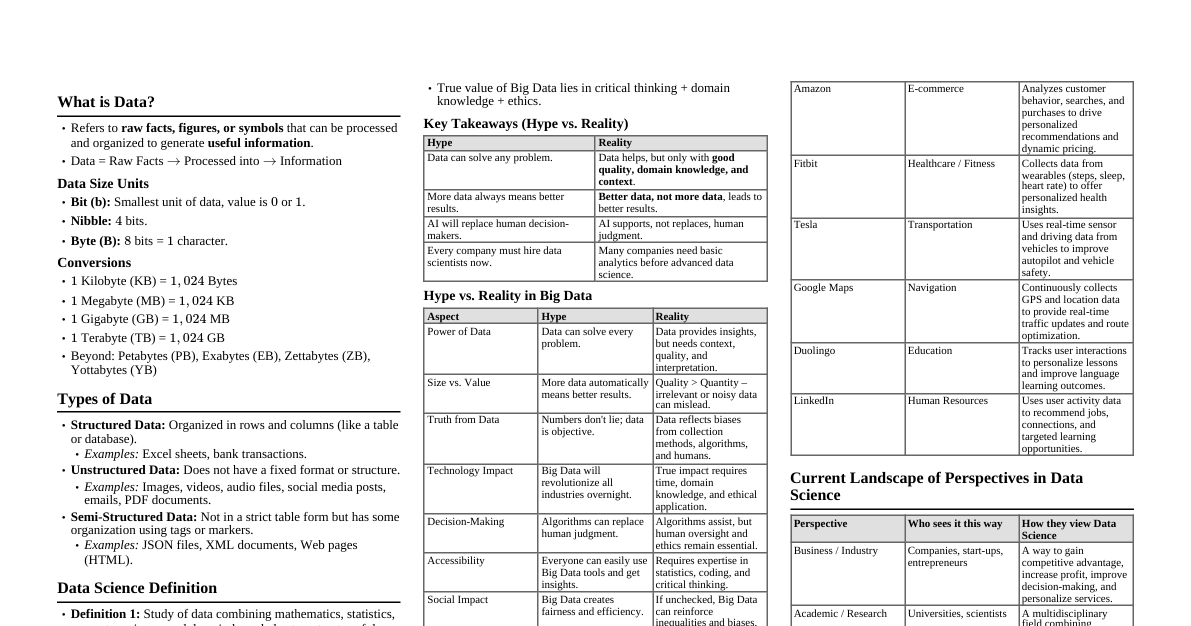

OCI Data Science Service Overview

- Managed service for data scientists to build, train, and deploy ML models.
- Provides notebooks, model catalogs, model deployment, and MLOps capabilities.
- Integrated with other OCI services (Object Storage, Data Catalog, Data Flow, etc.).

IAM Policies: The 'Need to Know' Constants

Common Policy Statements

- `Allow group data-scientists to manage data-science-family in compartment project-compartment`
- `Allow group data-scientists to manage object-family in compartment project-compartment`
- `Allow group data-scientists to use vnics in compartment project-compartment`
- `Allow group data-scientists to use subnets in compartment project-compartment`
- `Allow group data-scientists to read repos in compartment project-compartment` (for Container Registry)
- `Allow group data-scientists to manage dataflow-family in compartment project-compartment`
- `Allow group data-scientists to use data-catalog-family in compartment project-compartment`

Service-Specific Policies

- Data Science Projects: `manage data-science-projects`
- Notebook Sessions: `manage data-science-notebook-sessions`
- Models: `manage data-science-models`
- Model Deployments: `manage data-science-model-deployments`
- Jobs: `manage data-science-jobs`
- Private Endpoints: `manage data-science-private-endpoints`

Default Limits & Supported Shapes

- Notebook Sessions: Default 1-2 per region. Shapes include `VM.Standard2.x` (CPU), `VM.Standard3.Flex` (CPU), `VM.GPU3.x`, `VM.GPU4.x`.
- Model Deployments: Default 1-2 per region. Shapes include `VM.Standard2.x` (CPU), `VM.Standard3.Flex` (CPU), `VM.GPU3.x`.
- Data Science Jobs: Default 5 concurrent jobs. Shapes similar to notebook sessions.
- Object Storage: Used for datasets, model artifacts, and Conda environments. Soft limits on bucket size and object count.
- Always check Service Limits in the OCI Console for current regional limits, and request increases as needed.

Accelerated Data Science (ADS) SDK: The 'Cheat' Codes

Python SDK for interacting with OCI Data Science services.
Simplifies data loading, model training, model catalog registration, and model deployment.

Initialization: `import ads; ads.set_auth('resource_principal')` (best practice when running inside OCI services) or `ads.set_auth('api_key')`.

Key ADS Modules & Classes

- `ads.catalog.notebook`: Notebook session management.
- `ads.dataset`: Data loading and preprocessing utilities.
- `ads.model.framework`: Model serialization/deserialization for various frameworks (e.g., `SklearnModel`, `TensorFlowModel`).
- `ads.model.model_properties`: Define model metadata.
- `ads.model.deployment`: Deploy models as API endpoints.
- `ads.jobs`: Create and manage Data Science Jobs.
- `ads.common.model_artifact`: Manage model artifacts (legacy API).

Common ADS SDK Commands

Loading Data (pandas reads `oci://` URIs using the ADS signer):

    import pandas as pd
    df = pd.read_csv(
        f"oci://{bucket_name}@{namespace}/{object_name}",
        storage_options=ads.common.auth.default_signer())

Saving Model Artifact:

    from ads.model.generic_model import GenericModel
    generic_model = GenericModel(estimator=sklearn_model, artifact_dir=artifact_path)
    generic_model.prepare(inference_conda_env='slug')  # generates score.py and runtime.yaml

Logging Model to Catalog:

    model_id = generic_model.save()  # returns the new model OCID ('ocid1.datasciencemodel...')

Deploying Model:

    deployment = generic_model.deploy(
        display_name='My Model Deployment',
        compartment_id='ocid1.compartment...',
        project_id='ocid1.datascienceproject...',
        deployment_log_group_id='ocid1.loggroup...',
        deployment_access_log_id='ocid1.log...',
        deployment_predict_log_id='ocid1.log...',
        deployment_instance_shape='VM.Standard2.1',
        deployment_instance_count=1,
        wait_for_completion=True)  # block until the deployment is active

Invoking Deployment:

    prediction = deployment.predict(data={'features': [...]})

Creating DS Job:

    from ads.jobs import Job, DataScienceJob, ScriptRuntime
    job = (
        Job(name="My Training Job")
        .with_infrastructure(
            DataScienceJob()
            .with_compartment_id("ocid1.compartment...")
            .with_project_id("ocid1.datascienceproject..."))
        .with_runtime(
            ScriptRuntime()
            .with_source("oci://bucket@namespace/path/to/script.py")
            # slug of the service environment, e.g. PyTorch 1.10 CPU on Python 3.8
            .with_service_conda("slug")))
    job.create()

MLOps & CI/CD

- Model Catalog:
Central repository for storing and managing models, versions, and metadata.
- Data Science Jobs: Run training scripts, preprocessing, or batch inference as reproducible, scheduled, or on-demand tasks.
- Model Deployments: Host models as REST endpoints for real-time inference, with auto-scaling, logging, and metrics.
- OCI DevOps: Integrate with Git repositories, build pipelines, and deployment pipelines for automated ML workflows.
- OCI Notifications/Alarms: Monitor model performance, drift, or deployment health.

Workflow Visualizer: End-to-End ML Lifecycle

1. Data Ingestion: Load data from Object Storage, Autonomous Database, ADW, etc. (ADS SDK, SQL, Spark).
2. Data Preparation & Feature Engineering: Use Notebook Sessions, Data Flow, or Data Science Jobs.
3. Model Training: Develop in Notebook Sessions and run large-scale training with Data Science Jobs. Use pre-built or custom Conda environments.
4. Model Evaluation & Selection: Evaluate models using metrics, potentially comparing versions.
5. Model Artifact Creation: Use the ADS SDK to create the model artifact (`score.py`, trained model, metadata).
6. Model Catalog Registration: Log the model artifact to the OCI Model Catalog.
7. Model Deployment: Deploy the model from the catalog as an HTTP endpoint for real-time inference. Configure logging and scaling.
8. Model Monitoring: Collect logs (OCI Logging) and metrics (OCI Monitoring), and implement drift detection.
9. Model Retraining/Update: Based on monitoring, trigger retraining via Data Science Jobs and update the deployment.

Comparison Tables

Model Deployment Types

| Feature | OCI Data Science Model Deployment | OCI Functions | OCI Container Engine for Kubernetes (OKE) |
| --- | --- | --- | --- |
| Primary Use Case | Real-time ML inference, dedicated ML instances. | Serverless, event-driven, short-lived tasks. | Complex deployments, microservices, high customization. |
| Management | Fully managed, integrated with DS service. | Serverless, managed by OCI. | User-managed Kubernetes cluster. |
| Scaling | Auto-scaling based on CPU/memory. | Automatic, scales to zero. | Kubernetes HPA (Horizontal Pod Autoscaler). |
| Cost Model | Per instance-hour. | Per execution, memory, duration. | Per node-hour, K8s control plane. |
| Complexity | Low (abstracts infrastructure). | Medium (requires Docker image, API Gateway). | High (K8s expertise needed). |
| Best For | Typical ML model serving. | Simple, stateless inference, pre/post-processing. | Advanced MLOps, custom serving logic, large teams. |

Data Flow vs. Data Science Jobs

| Feature | OCI Data Flow | OCI Data Science Jobs |
| --- | --- | --- |
| Underlying Technology | Apache Spark. | Any Python script/Docker image. |
| Primary Use Case | Big data processing, ETL, data cleansing, feature engineering at scale. | Model training, batch inference, complex ML workflows, general Python tasks. |
| Programming Language | Python (PySpark), Java, Scala. | Python (with Conda envs or custom Docker). |
| Scaling | Distributed computing, scales by adding Spark executors. | Single instance (VM shape), but can run many jobs concurrently. |
| Integration | Optimized for data sources (Object Storage, ADW). | Optimized for ML tasks, integrates with Model Catalog. |
| Cost Model | Per OCPU-hour, memory-hour. | Per OCPU-hour, GPU-hour. |
| Best For | Processing massive datasets, Spark-based ML. | Training deep learning models, custom ML algorithms, orchestration. |

Integration with Other OCI Services

- Object Storage: Primary storage for datasets, model artifacts, Conda environments, logs.
- Data Catalog: Discover, organize, and govern data assets. Integrate with DS for metadata.
- Data Flow: Large-scale data processing and feature engineering for DS projects.
- Autonomous Database (ADB): Store structured data for ML, consume via JDBC/Python.
- Logging: Centralized logging for notebook sessions, model deployments, and jobs.
- Monitoring: Track metrics for deployments and jobs (CPU, memory, request latency).
- Vault: Securely store credentials, API keys, and other secrets.
- Networking (VCN, Subnets, Private Endpoints): Secure access to private resources.
- Container Registry (OCIR): Store custom Docker images for Data Science Jobs or Model Deployments.

'Watch Out' Exam Traps

- Conda Environments:
  - Service-managed: Pre-built, immutable.
  - User-managed: Custom environments stored in Object Storage. Must be activated in notebooks/jobs.
  - Published: User-managed environments made available to others in the compartment.
  - Ensure the correct Conda environment slug is specified for jobs/deployments.
- Private Endpoints:
  - Required for Notebook Sessions, Model Deployments, or Jobs to access private resources (e.g., ADB, private Object Storage, on-premises data).
  - Must be created in a private subnet.
  - Enable inbound/outbound rules in Security Lists/NSGs.
- Model Deployment Artifacts:
  - Must include `score.py`, the trained model file(s), and `runtime.yaml`.
  - `score.py` must contain `load_model()` and `predict()` functions.
  - `runtime.yaml` specifies the Conda environment slug, entrypoint, and other dependencies.
- Resource Principals vs. API Keys:
  - Resource Principal: Best practice for services (e.g., Notebook Sessions, Jobs, Deployments) to authenticate to other OCI services. Requires a dynamic group that matches the resource and a policy granting that dynamic group access.
  - API Keys: Used by local clients or users for programmatic access.
- Data Science Job vs. Notebook Session:
  - Notebook Session: Interactive development, prototyping.
  - Data Science Job: Non-interactive, reproducible, scheduled, or batch execution of scripts.
- OCI Logging: Model deployments and jobs generate logs that are sent to OCI Logging. Ensure the correct log group and log OCIDs are configured.
- Networking: Always verify VCN, subnet, and security list/NSG configurations when debugging connectivity issues.
- IAM Policies: Granular policies are key. If something fails, check IAM first; the cause is often a missing permission.
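
The `score.py` requirement listed under Model Deployment Artifacts is easier to remember with a concrete shape in mind. The following is a minimal sketch, not the file ADS generates: it assumes the model was pickled to a file named `model.pkl` next to `score.py` (a hypothetical name), that the model object exposes a scikit-learn-style `predict()` method, and that requests carry their rows under a `features` key as in the invocation example. The `model_dir` parameter and the module-level cache are illustrative additions.

```python
import os
import pickle

# Hypothetical artifact file name; use whatever name your artifact contains.
MODEL_FILE_NAME = "model.pkl"

# Directory holding score.py and the serialized model (falls back to the
# current directory when __file__ is unavailable, e.g. in a REPL).
ARTIFACT_DIR = os.path.dirname(os.path.abspath(globals().get("__file__", ".")))

_cached_model = None  # loaded once, then reused across predict() calls


def load_model(model_file_name=MODEL_FILE_NAME, model_dir=ARTIFACT_DIR):
    """Deserialize and return the model stored in the artifact directory."""
    path = os.path.join(model_dir, model_file_name)
    with open(path, "rb") as handle:
        return pickle.load(handle)


def predict(data, model=None):
    """Run inference on a request payload shaped like {'features': [...]}."""
    global _cached_model
    if model is None:
        if _cached_model is None:
            _cached_model = load_model()
        model = _cached_model
    predictions = model.predict(data["features"])
    # NumPy arrays are not JSON serializable, so convert them to plain lists.
    if hasattr(predictions, "tolist"):
        predictions = predictions.tolist()
    return {"prediction": list(predictions)}
```

Passing `model=` explicitly makes `predict()` easy to exercise locally with a stand-in object before the artifact is registered in the Model Catalog.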