Data Concepts & Analysis (EDA)

Cheatsheet Content

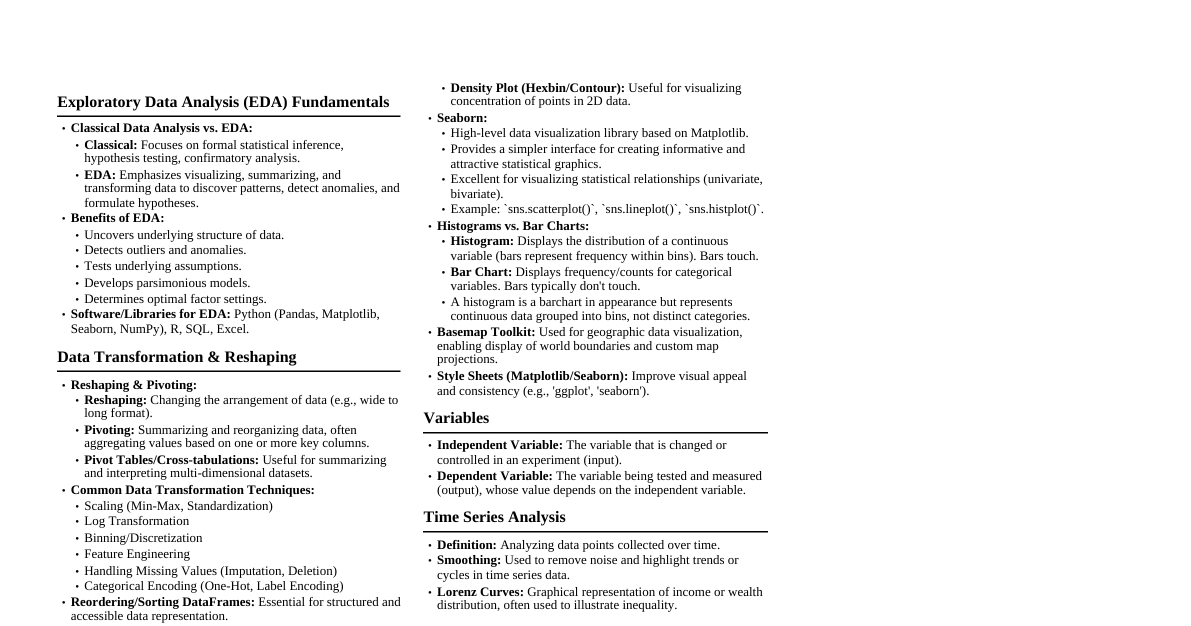

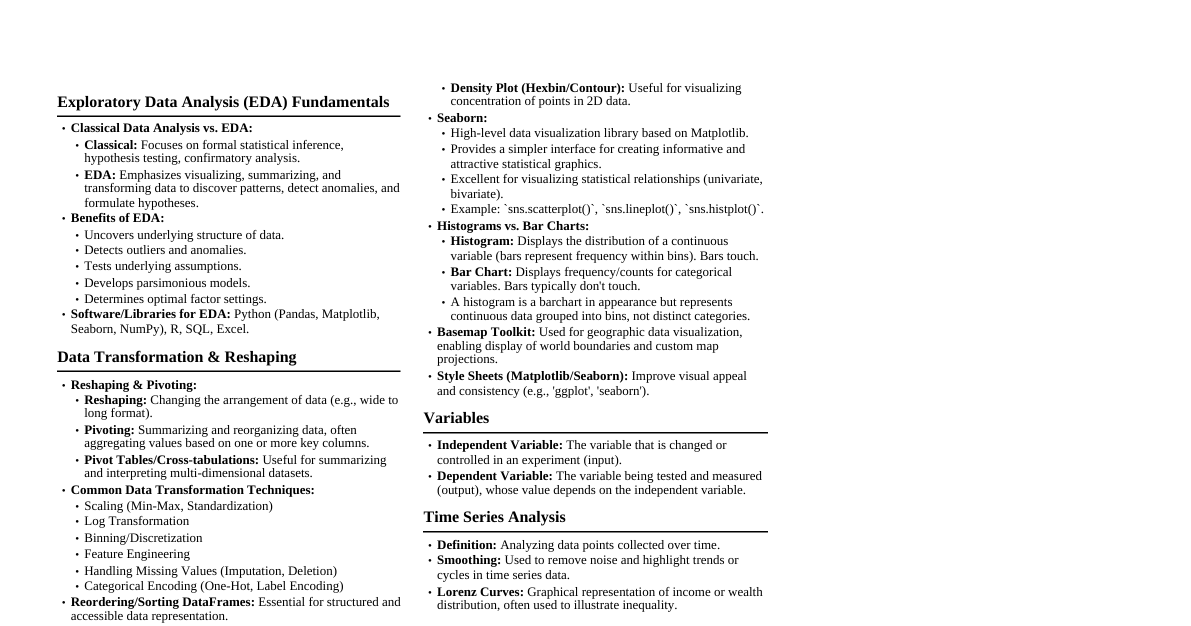

### Introduction to Data and Its Types

#### Concept

Data refers to raw facts, figures, or information collected for analysis and decision-making. It is the foundation of Machine Learning (ML) and business intelligence.

#### Key Points

- ML models learn patterns from data.
- Data helps in identifying trends and relationships.
- Quality data improves model accuracy.
- Data supports evidence-based decision-making.
- Without data, prediction and analysis are not possible.

#### Uses

- Training ML models.
- Business forecasting.
- Risk analysis in finance.
- Patient diagnosis in healthcare.
- Policy making in government.

#### Example

In e-commerce, customer purchase data helps predict which products customers are likely to buy in the future.

#### Conclusion

Data is called the backbone of Machine Learning because models learn patterns from data to make predictions. Without data, no analysis or intelligent decision-making is possible.

### Classification of Data

#### Concept

Classification of data refers to organizing data into categories based on its type and characteristics for proper analysis.

#### Key Points

Data is mainly divided into:

1. **Numerical Data (Quantitative Data)**
   * **Discrete Data:** Countable values (e.g., number of students)
   * **Continuous Data:** Measurable values (e.g., height, age)
2. **Categorical Data (Qualitative Data)**
   * **Nominal Data:** No specific order (e.g., gender, color)
   * **Ordinal Data:** Ordered categories (e.g., education level, ranking)

#### Uses

- Helps choose correct statistical methods.
- Helps select appropriate visualization techniques.
- Important for feature engineering in ML.

#### Example

- Age → Continuous
- Number of children → Discrete
- Gender → Nominal
- Education Level → Ordinal

#### Conclusion

Understanding data classification is essential because different types of data require different analysis techniques. Proper classification ensures accurate analysis and better model performance.
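The four data types above can be told apart with a few simple checks. The sketch below is only an illustration: the function name `classify_column`, the sample columns, and the rule "whole numbers are counts, other numbers are measurements" are assumptions made for this example, not a standard library feature. A real dataset usually needs domain knowledge as well (for instance, age stored in whole years is still conceptually continuous).

```python
from numbers import Integral, Real


def classify_column(values, ordered_levels=None):
    """Return 'discrete', 'continuous', 'ordinal', or 'nominal' for a column."""
    # bool is a subclass of int in Python, so exclude it from the numeric checks.
    numeric = all(isinstance(v, Real) and not isinstance(v, bool) for v in values)
    if numeric:
        # Assumption: whole-number columns are counts, everything else is a measurement.
        if all(isinstance(v, Integral) for v in values):
            return "discrete"
        return "continuous"
    # A non-numeric column is ordinal only when the caller supplies an explicit order.
    if ordered_levels is not None and set(values) <= set(ordered_levels):
        return "ordinal"
    return "nominal"


# Hypothetical columns mirroring the example list above.
columns = {
    "age": [21.5, 34.0, 28.2],
    "children": [0, 2, 1],
    "gender": ["F", "M", "F"],
    "education": ["School", "Graduate", "Postgraduate"],
}
education_order = ["School", "Graduate", "Postgraduate"]

for name, values in columns.items():
    levels = education_order if name == "education" else None
    print(name, "->", classify_column(values, levels))
```

Passing the category order explicitly is a deliberate choice here: nothing in the raw labels tells a program that "Graduate" ranks above "School", so ordinal data has to be declared by the analyst.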
### Classification of Data (Based on Observation)

#### Concept

Classification of data based on observation refers to categorizing data depending on how it is collected over time and across entities.

#### Key Points

- **Cross-Sectional Data**
  - Data collected at one specific point in time.
  - Observations are from multiple individuals/entities.
  - No time dimension involved.
- **Time Series Data**
  - Data collected over different time periods.
  - Observations are for a single entity.
  - Has a time sequence (daily, monthly, yearly).
- **Panel Data (Longitudinal Data)**
  - Combination of cross-sectional and time series data.
  - Data collected for multiple entities over multiple time periods.
  - Contains both entity and time dimensions.

#### Uses

- Cross-sectional → Comparing different groups at one time.
- Time series → Forecasting and trend analysis.
- Panel data → Studying behavior over time with multiple entities.

#### Example

- Cross-sectional → Income of 100 students in 2025.
- Time series → Monthly sales of a company from 2020–2025.
- Panel data → Monthly sales of 10 companies from 2020–2025.

#### Conclusion

Classification based on observation is important because different data structures require different analytical techniques. It helps in selecting appropriate statistical and ML models.

### Classification of Data (Based on Measurement Scale)

#### Concept

Classification based on measurement scale refers to categorizing data according to the level of measurement and the type of information it provides.

#### Key Points

- **Nominal Scale**
  - Categorical data.
  - No numerical meaning.
  - No order or ranking.
  - Only used for labeling.
- **Ordinal Scale**
  - Categorical data.
  - Has meaningful order.
  - Differences between values are not measurable.
- **Interval Scale**
  - Numerical data.
  - Ordered with equal intervals.
  - No true zero point.
  - Differences are meaningful, but ratios are not.
- **Ratio Scale**
  - Numerical data.
  - Ordered with equal intervals.
  - Has a true zero point.
  - Both differences and ratios are meaningful.

#### Uses

- Helps in choosing appropriate statistical methods.
- Important for selecting correct visualization techniques.
- Determines which mathematical operations can be applied.

#### Example

- Nominal → Gender, Blood Group
- Ordinal → Rank, Education Level
- Interval → Temperature in Celsius
- Ratio → Height, Weight, Age, Income

#### Conclusion

Understanding measurement scales is essential because each scale supports different types of analysis. Proper identification ensures accurate statistical testing and better ML model performance.

### Classification of Data (Based on Availability)

#### Concept

Classification based on availability refers to categorizing data depending on how and from where it is collected.

#### Key Points

- **Primary Data**
  - Collected directly by the researcher.
  - First-hand and original data.
  - Collected through surveys, interviews, experiments, questionnaires.
  - More reliable but time-consuming and costly.
- **Secondary Data**
  - Already collected by someone else.
  - Available in books, journals, websites, government reports.
  - Saves time and cost.
  - May not be fully specific to research needs.
- **Tertiary Data**
  - Data compiled from primary and secondary sources.
  - Found in encyclopedias, indexes, summaries, databases.
  - Used for quick reference.
  - Less detailed and less specific.

#### Uses

- Helps in selecting appropriate data source.
- Saves time and cost in research planning.
- Important for business and academic research.

#### Example

- Primary → Conducting your own survey on student satisfaction.
- Secondary → Using census data from government reports.
- Tertiary → Using Wikipedia or an encyclopedia for general information.

#### Conclusion

Classification based on availability is important because it helps researchers choose suitable data sources based on accuracy, cost, and research objectives.
### Classification of Data (Based on Structural Form)

#### Concept

Classification based on structural form refers to categorizing data depending on how it is organized and stored.

#### Key Points

- **Structured Data**
  - Organized in rows and columns.
  - Stored in relational databases.
  - Easy to search and analyze.
  - Follows a fixed schema.
- **Semi-Structured Data**
  - Does not follow strict table format.
  - Contains tags or labels.
  - Flexible structure.
  - Example formats: JSON, XML, emails.
- **Unstructured Data**
  - No predefined format.
  - Difficult to store in traditional databases.
  - Requires advanced processing (NLP, image processing).
  - Includes text, images, audio, video.

#### Uses

- Structured → Traditional databases, SQL queries.
- Semi-structured → Web data, APIs.
- Unstructured → Social media analysis, image recognition, NLP tasks.

#### Example

- Structured → Student marks table in Excel.
- Semi-structured → JSON data from a website API.
- Unstructured → Instagram photos, YouTube videos.

#### Conclusion

Understanding structural classification is important because different data types require different storage systems and analytical techniques. It helps in selecting proper tools for data processing and ML.

### Classification of Data (Based on Inherent Nature)

#### Concept

Classification based on inherent nature refers to categorizing data according to its fundamental characteristics: whether it represents numerical values or descriptive categories.

#### Key Points

Data based on inherent nature is divided into:

- **Quantitative Data**
  - Numerical in nature.
  - Represents measurable or countable quantities.
  - Mathematical operations can be performed.
  - Further divided into:
    - Discrete Data → Countable values (e.g., number of students)
    - Continuous Data → Measurable values (e.g., height, weight)
- **Qualitative Data**
  - Descriptive or categorical in nature.
  - Represents qualities or characteristics.
  - Cannot perform meaningful arithmetic operations.
  - Further divided into:
    - Nominal Data → No order (e.g., gender, color)
    - Ordinal Data → Has order (e.g., ranking, education level)

#### Uses

- Helps in selecting proper statistical methods.
- Determines visualization techniques.
- Important for feature engineering in ML.

#### Example

- Quantitative → Age, Income, Number of products sold
- Qualitative → Blood group, Grade (A, B, C), City name

#### Conclusion

Understanding inherent nature of data is essential because quantitative and qualitative data require different analysis techniques. Proper classification ensures accurate interpretation and better ML performance.

### Concepts of Population and Sample

#### Concept

Population refers to the entire group of individuals or observations under study, while a sample is a subset of the population selected for analysis.

#### Key Points

- **Population**
  - Complete set of observations.
  - Can be large or infinite.
  - Studying full population is called Census.
  - Population characteristics are called Parameters ($\mu, \sigma$).
- **Sample**
  - Subset of population.
  - Used to make inferences about population.
  - Saves time and cost.
  - Sample characteristics are called Statistics ($\bar{x}, s$).

#### Uses

- Used in surveys and research.
- Helps estimate population parameters.
- Important in hypothesis testing.
- Widely used in ML model training.

#### Example

- Population → All students in a university.
- Sample → 200 students selected from the university for a survey.

#### Conclusion

Studying the entire population is often impractical due to time and cost constraints. Therefore, a properly selected sample is used to make reliable conclusions about the population.

### Small Sample and Large Sample

#### Concept

Small and large samples refer to classification of samples based on their size, which affects the type of statistical methods and distributions used for analysis.
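To see why sample size matters, it can help to compare sample statistics ($\bar{x}, s$) against known population parameters ($\mu, \sigma$). The sketch below simulates a population so that the parameters are available for comparison; the population (10,000 values drawn around 50 with spread 10), the seed, and the sample sizes are all assumptions chosen for illustration.

```python
import math
import random
import statistics

random.seed(42)  # fixed seed so the simulated population is reproducible

# Hypothetical population; in practice mu and sigma are usually unknown.
population = [random.gauss(50, 10) for _ in range(10_000)]
mu = statistics.fmean(population)        # population mean (parameter)
sigma = statistics.pstdev(population)    # population standard deviation (parameter)


def sample_summary(n):
    """Draw a simple random sample of size n and return (x_bar, s, standard error)."""
    sample = random.sample(population, n)
    x_bar = statistics.fmean(sample)     # sample mean (statistic)
    s = statistics.stdev(sample)         # sample standard deviation (statistic)
    return x_bar, s, s / math.sqrt(n)


print(f"population: mu={mu:.2f}, sigma={sigma:.2f}")
for n in (10, 30, 1000):
    x_bar, s, se = sample_summary(n)
    print(f"n={n:>4}: x_bar={x_bar:.2f}, s={s:.2f}, standard error={se:.2f}")
```

The standard error $s/\sqrt{n}$ shrinks as $n$ grows, which is one reason larger samples give more stable estimates of the population mean.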
#### Key Points

- **Small Sample**
  - Sample size

### Statistic and Parameter, Types of Statistics and Applications in Business

#### Concept

A Parameter is a numerical measure that describes a characteristic of a population, whereas a Statistic is a numerical measure that describes a characteristic of a sample. Statistics are used to estimate population parameters and support decision-making.

#### Key Points

- **Parameter**
  - Describes entire population.
  - Fixed but usually unknown.
  - Represented by Greek symbols.
    - Population Mean → $\mu$
    - Population Standard Deviation → $\sigma$
  - Obtained through census.
- **Statistic**
  - Describes sample.
  - Used to estimate parameters.
  - Represented by Roman symbols.
    - Sample Mean → $\bar{x}$
    - Sample Standard Deviation → $s$
  - Used when population study is impractical.

#### Types of Statistics

Statistics is broadly divided into:

- **Descriptive Statistics**
  - Summarizes and organizes data.
  - Includes mean, median, mode.
  - Measures of dispersion (range, variance, standard deviation).
  - Uses charts and graphs.
- **Inferential Statistics**
  - Makes predictions or inferences about population using sample.
  - Includes hypothesis testing.
  - Confidence intervals.
  - Regression and correlation analysis.

#### Applications in Different Business Scenarios

- **Marketing**
  - Analyze customer preferences.
  - Predict sales trends.
- **Finance**
  - Risk analysis using variance and standard deviation.
  - Forecast stock prices.
- **Operations**
  - Quality control using sampling.
  - Inventory forecasting.
- **Human Resources**
  - Salary analysis.
  - Employee performance evaluation.

#### Example

- A company measures the average spending of 200 sampled customers (Statistic).
- It uses that figure to estimate the average spending of all its customers (Parameter).

#### Conclusion

Statistics plays a crucial role in business decision-making. Descriptive statistics helps summarize data, while inferential statistics helps predict and generalize results to the population. Proper use of statistics leads to informed, data-driven business strategies.

### Frequency Distribution of Data

#### Concept

Frequency distribution is a method of organizing raw data to show the number of times each value or group of values occurs in a dataset.

#### Key Points

- Helps convert raw data into structured form.
- Shows frequency (number of occurrences) of each value.
- Makes data easy to understand and analyze.
- Can be represented in tabular or graphical form.
- **Types:**
  - Ungrouped Frequency Distribution → Individual values.
  - Grouped Frequency Distribution → Data divided into class intervals.

#### Uses

- Simplifies large datasets.
- Helps identify patterns and trends.
- Used in histogram and bar chart creation.
- Important for statistical analysis in EDA.

#### Example

Raw Data (Marks): 45, 50, 45, 60, 70, 50, 45

| Marks | Frequency |
|-------|-----------|
| 45    | 3         |
| 50    | 2         |
| 60    | 1         |
| 70    | 1         |

#### Conclusion

Frequency distribution helps in organizing raw data into a structured format, making it easier to analyze patterns and perform statistical calculations.

### Difference between EDA, Classical Analysis and Bayesian Analysis

#### Concept

Exploratory Data Analysis (EDA) is an approach to analyzing datasets to summarize their main characteristics using statistical and visual methods before applying formal models. It focuses on discovering patterns, detecting anomalies, and understanding data structure. Classical and Bayesian analysis are two statistical approaches used for inference, differing in how they interpret probability.

#### Key Points

- **Exploratory Data Analysis (EDA)**
  - Focuses on exploring and summarizing data.
  - Uses visualization (histograms, boxplots, scatterplots).
  - Identifies patterns, trends, and outliers.
  - No strict assumptions initially.
  - Data-driven and flexible.
- **Classical (Frequentist) Analysis**
  - Probability = Long-run frequency.
  - Parameters are fixed but unknown.
  - Uses hypothesis testing and p-values.
  - No prior information used.
  - Example: Z-test, t-test.
- **Bayesian Analysis**
  - Probability = Degree of belief.
  - Parameters treated as random variables.
  - Uses prior knowledge (prior distribution).
  - Updates belief using Bayes' Theorem.
  - Produces posterior probability.

#### Major Differences

| Aspect              | EDA                        | Classical Analysis     | Bayesian Analysis    |
|---------------------|----------------------------|------------------------|----------------------|
| Purpose             | Explore data               | Test hypotheses        | Update beliefs       |
| Probability Meaning | Not focused on probability | Long-run frequency     | Degree of belief     |
| Use of Prior Info   | No                         | No                     | Yes                  |
| Approach            | Visual & descriptive       | Mathematical inference | Probability updating |

#### Uses

- EDA → Understanding dataset before modeling.
- Classical → Hypothesis testing in business and research.
- Bayesian → Risk assessment, decision-making with prior knowledge.

#### Example

- EDA → Plotting sales trend before forecasting.
- Classical → Testing if new marketing strategy increases average sales.
- Bayesian → Updating probability of loan default using past data.

#### Conclusion

EDA is used for initial data understanding without strong assumptions, while Classical and Bayesian analyses are formal statistical inference methods. Classical analysis relies on long-run frequency, whereas Bayesian analysis updates beliefs using prior information.

### Comparison of EDA with Classical Data Summary Measures

#### Concept

Exploratory Data Analysis (EDA) is an approach used to explore and understand data using visual and descriptive techniques. Classical data summary measures focus mainly on numerical statistics such as mean and variance to summarize data.

#### Key Points

- **Exploratory Data Analysis (EDA)**
  - Emphasizes graphical representation.
  - Detects patterns, trends, and relationships.
  - Identifies outliers and anomalies.
  - Flexible and data-driven.
  - Uses boxplots, histograms, scatterplots.
- **Classical Data Summary Measures**
  - Focuses on numerical summaries.
  - Includes mean, median, mode.
  - Measures of dispersion (range, variance, standard deviation).
  - Based on mathematical formulas.
  - Less emphasis on visualization.

#### Major Differences

| Aspect            | EDA                  | Classical Summary Measures |
|-------------------|----------------------|----------------------------|
| Approach          | Visual & descriptive | Numerical & mathematical   |
| Focus             | Patterns & structure | Central tendency & spread  |
| Outlier Detection | Easily visible       | May be hidden              |
| Flexibility       | High                 | Limited                    |

#### Uses

- EDA → Initial data understanding before modeling.
- Classical Measures → Quick statistical summary.
- Both used in business analysis and ML preprocessing.

#### Example

- Classical → Calculating mean sales of a company.
- EDA → Plotting sales distribution to check skewness and outliers.

#### Conclusion

EDA provides a deeper and visual understanding of data, while classical summary measures provide numerical descriptions. Both are important, but EDA offers better insight into data structure and irregularities.

### Goals of Exploratory Data Analysis (EDA) and Underlying Assumptions

#### Concept

Exploratory Data Analysis (EDA) is a statistical approach used to analyze and understand datasets before applying formal models. It focuses on discovering patterns, detecting anomalies, testing assumptions, and gaining insights using descriptive and visual methods.
#### Key Points

- **Goals of EDA**
  - Understand the structure of data
  - Identify missing values and errors
  - Detect outliers and anomalies
  - Discover patterns and relationships
  - Check distribution of variables
  - Generate hypotheses for further analysis
  - Prepare data for modeling

#### Uses

- Data cleaning and preprocessing
- Feature selection
- Choosing appropriate statistical tests
- Improving ML model performance

#### Underlying Assumptions in EDA

Unlike classical statistical methods, EDA:

- Does not assume normal distribution initially
- Does not assume linearity at the beginning
- Is flexible and data-driven
- Relies on visualization and summaries
- Encourages questioning assumptions

EDA checks assumptions such as:

- Normality
- Linearity
- Independence
- Homoscedasticity

#### Example

Before applying regression, EDA checks:

- Whether variables are normally distributed
- Whether relationship is linear
- Whether outliers exist

#### Conclusion

EDA aims to understand and explore data without rigid assumptions. It helps uncover hidden patterns and validate assumptions before applying formal statistical or ML models, ensuring accurate and reliable analysis.

### Importance of EDA in Data Exploration Techniques and Techniques to Test Assumptions

#### Concept

Exploratory Data Analysis (EDA) is a crucial step in data analysis that helps understand data structure, detect anomalies, and validate assumptions before applying statistical or Machine Learning models.

#### Importance of EDA in Data Exploration

**Key Points**

1. Helps understand data distribution
2. Detects missing values and inconsistencies
3. Identifies outliers and anomalies
4. Examines relationships between variables
5. Checks data quality
6. Validates assumptions required for statistical models
7. Prevents incorrect model application

#### Different Techniques to Test Assumptions in EDA

EDA uses various techniques to test assumptions such as normality, linearity, independence, and homoscedasticity.

- **Testing Normality**
  - Histogram
  - Q-Q Plot
  - Shapiro-Wilk Test
  - Kolmogorov-Smirnov Test
- **Testing Linearity**
  - Scatter Plot
  - Correlation Analysis
- **Testing Independence**
  - Durbin-Watson Test
  - Residual plots
- **Testing Homoscedasticity (Equal Variance)**
  - Residual vs Fitted Plot
  - Breusch-Pagan Test
- **Detecting Outliers**
  - Box Plot
  - Z-score
  - IQR Method

#### Uses in Business Scenarios

- Marketing → Check customer purchase distribution
- Finance → Validate risk model assumptions
- Healthcare → Detect abnormal patient values
- Operations → Ensure quality control data stability

#### Example

Before applying linear regression:

- Check normality of residuals
- Verify linear relationship
- Detect outliers
- Ensure equal variance

#### Conclusion

EDA is essential for identifying data issues and validating assumptions before modeling. By applying appropriate testing techniques, analysts ensure accurate, reliable, and meaningful results in statistical and ML applications.

### Role of Graphics in Data Exploration (EDA)

#### Concept

Graphics play a crucial role in Exploratory Data Analysis (EDA) by visually representing data to uncover patterns, trends, relationships, and anomalies that may not be evident from numerical summaries alone.
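A small sketch can show how a picture reveals what a single number hides. The two sales lists below are made-up values chosen so that both have the same mean, and `text_histogram` is a hypothetical helper that draws a rough histogram with `#` characters so the example needs no plotting library.

```python
import statistics
from collections import Counter

# Two invented datasets with the same mean (50) but very different shapes.
symmetric = [40, 45, 50, 50, 50, 55, 60]
skewed = [30, 30, 30, 35, 35, 40, 150]


def text_histogram(values, bin_width=20):
    """Return a text histogram: one row per bin, one '#' per observation."""
    # Map each value to the lower edge of its bin, then count per bin.
    counts = Counter((v // bin_width) * bin_width for v in values)
    rows = []
    for start in range(min(counts), max(counts) + bin_width, bin_width):
        label = f"{start:>3}-{start + bin_width - 1:<3}"
        rows.append(f"{label} | " + "#" * counts.get(start, 0))
    return "\n".join(rows)


for name, data in (("symmetric", symmetric), ("skewed", skewed)):
    print(f"{name}: mean={statistics.mean(data)}, median={statistics.median(data)}")
    print(text_histogram(data))
    print()
```

The means match, but the histogram of the second list shows most values bunched at the low end and one isolated bar far to the right, which is the kind of skewness and outlier that a mean alone does not expose.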
#### Key Points

- Makes complex data easy to understand
- Reveals distribution shape (normal, skewed)
- Detects outliers quickly
- Identifies relationships between variables
- Highlights trends over time
- Supports assumption checking
- Improves decision-making clarity

#### Common Graphical Techniques in EDA

- Histogram → Shows data distribution
- Boxplot → Detects outliers and spread
- Scatterplot → Shows relationship between variables
- Bar chart → Compares categories
- Line chart → Displays time trends

#### Uses in Business

- Marketing → Visualize customer spending patterns
- Finance → Detect abnormal stock price movements
- Sales → Analyze monthly trends
- HR → Compare employee performance

#### Example

Instead of only calculating average sales, plotting a histogram can reveal skewness and outliers, giving deeper insight into sales behavior.

#### Conclusion

Graphics are essential in EDA because they provide intuitive and powerful insights into data structure. Visual exploration helps detect hidden patterns, validate assumptions, and prevent misleading conclusions from numerical summaries alone.

### Introduction to Unidimensional, Bidimensional and Multidimensional Graphical Representation of Data

#### Concept

Graphical representation of data refers to visual methods used to display and analyze data. Based on the number of variables involved, graphical representation can be unidimensional, bidimensional, or multidimensional.

#### Key Points

- **Unidimensional Graphical Representation**
  - Involves one variable.
  - Focuses on distribution or frequency.
  - Used for understanding central tendency and spread.
  - **Common Graphs:**
    - Histogram
    - Bar Chart
    - Pie Chart
    - Box Plot
- **Bidimensional Graphical Representation**
  - Involves two variables.
  - Shows relationship between variables.
  - Used for correlation and trend analysis.
  - **Common Graphs:**
    - Scatter Plot
    - Line Graph
    - Two-variable Bar Chart
- **Multidimensional Graphical Representation**
  - Involves more than two variables.
  - Shows complex relationships.
  - Used in advanced data analysis.
  - **Common Graphs:**
    - 3D Scatter Plot
    - Heatmap
    - Bubble Chart
    - Pair Plot

#### Example

- Unidimensional → Distribution of student marks.
- Bidimensional → Relationship between height and weight.
- Multidimensional → Relationship between sales, profit, and advertising cost.

#### Conclusion

Graphical representation simplifies complex data analysis. Unidimensional graphs analyze single variables, bidimensional graphs show relationships between two variables, and multidimensional graphs help explore complex patterns among multiple variables.

### Introduction to Data Exploration Process for Data Preparation

#### Concept

Data preparation is the process of cleaning, transforming, and organizing raw data to make it suitable for analysis and Machine Learning models. Data exploration is the initial step in this process, where the dataset is examined to understand its structure, quality, and characteristics.

#### Key Points

- **Need for Data Preparation**
  - Raw data may contain missing values.
  - Presence of noise and outliers.
  - Inconsistent formats.
  - Duplicate records.
  - Irrelevant features.
- **Data Exploration Process in Data Preparation**
  1. Understanding data structure: number of rows and columns, data types (numerical, categorical)
  2. Checking missing values: identify null or blank entries
  3. Detecting duplicates: find and remove repeated records
  4. Identifying outliers: use boxplots, Z-score, IQR
  5. Analyzing distributions: histogram, density plots
  6. Checking relationships: correlation matrix, scatter plots

#### Uses

- Improves data quality.
- Ensures accurate model training.
- Reduces model bias.
- Enhances prediction performance.

#### Example

Before building a house price prediction model:

- Remove rows with missing values in the price column.
- Detect extreme property prices (outliers).
- Convert categorical variables (location) into numerical format.
- Check correlation between area and price.

#### Conclusion

Data preparation is a critical step in the data analysis process. Through systematic data exploration, issues such as missing values, outliers, and inconsistencies are identified and resolved, ensuring reliable and accurate model performance.

### Data Discovery

#### Concept

Data Discovery is the initial phase of the data exploration process where the dataset is examined and understood without modifying it. It focuses on identifying data structure, content, patterns, and potential issues before cleaning or modeling.

#### Key Points

- Understand dataset structure (rows, columns, variables)
- Identify data types (numerical, categorical)
- Check basic statistics (mean, median, min, max)
- Detect missing values
- Identify duplicate records

#### Uses

- Helps understand the nature of data.
- Identifies potential problems early.
- Guides data cleaning strategy.
- Supports proper feature selection.

#### Example

In a sales dataset:

- Count number of records.
- Identify columns like Date, Sales, Product.
- Check for missing values in Sales column.
- Observe distribution of sales.

#### Conclusion

Data discovery is a foundational step in data preparation. It allows analysts to understand dataset characteristics and identify issues before applying cleaning or transformation techniques, ensuring efficient and accurate analysis.

### Issues Related with Data Access and Characterization of Data

#### Concept

Data access refers to the process of retrieving data from various sources for analysis. Data characterization refers to understanding the nature, structure, and properties of data before performing analysis or modeling.

#### Issues Related with Data Access

**Key Points**

1. Format mismatch (CSV, JSON, Excel differences)
2. Large file size causing slow processing
3. Security and authorization restrictions
4. Incomplete or missing data
5. Inconsistent data sources
6. Data privacy concerns
7. Network or connectivity issues

#### Characterization of Data

Data characterization involves understanding:

1. Type of data (Numerical, Categorical)
2. Structure (Structured, Semi-structured, Unstructured)
3. Scale of measurement (Nominal, Ordinal, Interval, Ratio)
4. Data distribution (Normal, Skewed)
5. Presence of outliers
6. Missing value patterns
7. Data relationships (correlation)

#### Uses

- Helps choose appropriate cleaning methods.
- Determines suitable statistical tests.
- Supports feature engineering.
- Ensures proper model selection.

#### Example

In a customer dataset:

- Access issue → Data stored in multiple formats (Excel & JSON).
- Characterization → Identify that income is continuous and gender is nominal.

#### Conclusion

Efficient data access ensures smooth retrieval and usability of data, while proper data characterization helps understand data structure and properties. Both are essential steps in data preparation for accurate and reliable analysis.

### Consistency and Pollution of Data

#### Concept

Data consistency refers to the accuracy, uniformity, and reliability of data across a dataset. Data pollution refers to the presence of errors, noise, irrelevant, duplicate, or corrupted information that reduces data quality.

#### Data Consistency

**Key Points**

1. Data values follow the same format throughout.
2. No contradictions across records.
3. Uniform units of measurement.
4. No conflicting entries.
5. Maintains integrity across systems.

#### Example

- Date format consistently stored as DD-MM-YYYY.
- Salary recorded in same currency (₹).

#### Data Pollution

**Key Points**

1. Presence of missing values.
2. Duplicate records.
3. Incorrect or inconsistent data.
4. Noise and outliers.
5. Irrelevant or corrupted data.

#### Example

- Same customer recorded twice.
- Age entered as 250 years.
- Random symbols in numeric column.
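The pollution examples above (a repeated customer, an age of 250, symbols in a numeric column) can each be caught with a simple rule. The following is a minimal sketch, not a complete cleaning routine: the records, the field names, the `find_pollution` helper, and the 120-year age limit are all assumptions made for illustration.

```python
# Hypothetical raw records; values are kept as text, as they often arrive from files.
records = [
    {"customer_id": 101, "age": "34", "salary": "52000"},
    {"customer_id": 102, "age": "250", "salary": "61000"},  # implausible age
    {"customer_id": 101, "age": "34", "salary": "52000"},   # exact duplicate of row 0
    {"customer_id": 103, "age": "29", "salary": "4#@0"},    # symbols in a numeric field
]


def find_pollution(rows, max_age=120):
    """Return a list of (row_index, issue) pairs for simple data-pollution checks."""
    issues = []
    seen = set()
    for i, row in enumerate(rows):
        # A row is a duplicate if every field matches a row seen earlier.
        key = tuple(sorted(row.items()))
        if key in seen:
            issues.append((i, "duplicate record"))
        seen.add(key)
        # Numeric fields should contain digits only.
        for field in ("age", "salary"):
            if not row[field].isdigit():
                issues.append((i, f"non-numeric {field}"))
        # max_age is an assumed plausibility limit, not a universal rule.
        if row["age"].isdigit() and int(row["age"]) > max_age:
            issues.append((i, "implausible age"))
    return issues


for index, issue in find_pollution(records):
    print(f"row {index}: {issue}")
```

The function only reports problems and leaves the data untouched, so the analyst can decide case by case whether to correct, drop, or keep a flagged record.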
#### Uses - Ensuring data consistency improves reliability. - Removing data pollution improves model accuracy. - Essential for proper data cleaning and preparation. #### Conclusion Data consistency ensures uniform and reliable information, while data pollution degrades data quality through errors and noise. Identifying and correcting these issues is essential for accurate statistical analysis and Machine Learning performance. ### Duplicate or Redundant Variables #### Concept Duplicate variables refer to columns that are exactly repeated in a dataset. Redundant variables refer to different columns that contain overlapping or highly similar information, leading to repetition of data. #### Key Points 1. **Duplicate Variables** - Same column appears more than once. - Exact same values. - Increases dataset size unnecessarily. 2. **Redundant Variables** - Different columns but carry same or highly correlated information. - Cause multicollinearity in models. - Can reduce model interpretability. #### Problems Caused - Multicollinearity (unstable regression coefficients) - Increased computational cost - Overfitting risk #### Uses (Why We Remove Them) - Simplifies dataset. - Improves model stability. - Improves prediction accuracy. #### Example - Duplicate → Two identical “Salary” columns. - Redundant → “Date of Birth” and “Age” in same dataset. #### Conclusion Duplicate and redundant variables increase complexity without adding value. Removing them improves model efficiency, reduces multicollinearity, and enhances overall data quality. ### Outliers and Leverage Data #### Concept An outlier is a data point that significantly differs from other observations in a dataset. Leverage points are observations that have extreme values in the independent variable (X) and can strongly influence the regression model. #### Key Points 1. **Outliers** - Extreme values in dataset. - Far away from majority of observations. - Can occur due to error or genuine variation. 
- Affect mean and standard deviation. - **Detection Methods:** Box Plot, Z-score, IQR method 2. **Leverage Points** - Extreme values in predictor (independent variable). - May or may not be outliers in Y. - Have strong influence on regression line. - Identified using leverage statistics or Cook's distance. #### Difference Between Outliers and Leverage | Aspect | Outlier | Leverage Point | |---------------------------------|-----------------------------|-----------------------------| | Extreme in Dependent variable (Y)| Independent variable (X) | | | Impact | Affects mean and spread | Influences regression slope | | Detection | IQR, Z-score | Hat matrix, Cook's distance | #### Uses - Detect errors in data. - Improve regression accuracy. - Prevent misleading results. - Improve model stability. #### Example - Outlier → One student scoring 5 marks when others score 60–80. - Leverage → One house with extremely large area compared to others. #### Conclusion Outliers and leverage points can significantly affect statistical analysis and regression models. Identifying and handling them properly ensures accurate and reliable results in data analysis. ### Noisy Data, Missing Values and Imputation Techniques #### Concept Noisy data refers to data containing random errors, irrelevant information, or extreme variations that distort analysis. Missing values occur when no data value is stored for a variable in an observation. Imputation is the process of replacing missing or empty values with estimated values. #### Key Points 1. **Noisy Data** - Contains random errors or unwanted variations. - May occur due to measurement errors or data entry mistakes. - Affects accuracy of statistical analysis. - Can distort model predictions. - **Techniques to Handle Noisy Data:** - Binning - Moving average - Regression smoothing - Outlier detection and removal 2. **Missing Values** - Occur due to data entry errors, system failures, or non-response. - Represented as NULL, NAN, or blank. 
- Can bias results if not handled properly. - **Types of Missing Data:** - MCAR (Missing Completely at Random) - MAR (Missing at Random) - MNAR (Missing Not at Random) 3. **Imputation of Missing Values** Imputation means filling missing values using statistical techniques. - **Basic Techniques:** - Mean Imputation (for numerical data) - Median Imputation - Mode Imputation (for categorical data) - **Advanced Techniques:** - K-Nearest Neighbors (KNN) Imputation - Regression Imputation - Multiple Imputation - Interpolation (for time series data) - **Other Methods:** - Forward Fill / Backward Fill - Deleting rows/columns (if the share of missing data is small) #### Uses - Improves data quality. - Prevents loss of important data. - Reduces bias in analysis. - Improves ML model performance. #### Example If income value is missing: - Replace with mean income (basic method). - Or use regression model to predict missing income (advanced method). #### Conclusion Handling noisy data and missing values is essential in data preparation. Proper imputation techniques improve data reliability and ensure accurate statistical analysis and machine learning performance. ### Missing Pattern and Its Importance #### Concept Missing pattern refers to the structure, distribution, and mechanism of missing values in a dataset. It helps identify how and why data is missing, which is crucial for selecting appropriate handling techniques. #### Key Points: Types of Missing Patterns (Mechanisms) 1. **MCAR (Missing Completely at Random)** - Missing values occur randomly. - Missingness has no relationship with any variable, observed or unobserved. - Least problematic type. 2. **MAR (Missing at Random)** - Missingness depends on other observed variables, not on the missing value itself. - Can be handled using advanced imputation techniques. 3. **MNAR (Missing Not at Random)** - Missingness depends on the unobserved (missing) value itself. - Most problematic type. - Requires special modeling approaches.
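To illustrate why the mechanism matters, here is a minimal simulation sketch using only Python's standard library. The income distribution, the 30% and 80% missingness rates, and the 70th-percentile cutoff are all assumptions chosen for illustration; they do not come from any real dataset.

```python
import random
import statistics

random.seed(0)  # fixed seed so the sketch is repeatable

# Hypothetical incomes (made-up log-normal parameters, for illustration only).
incomes = [random.lognormvariate(10, 0.5) for _ in range(10_000)]
true_mean = statistics.mean(incomes)

# MCAR: every value has the same 30% chance of being missing.
mcar_observed = [x for x in incomes if random.random() > 0.30]

# MNAR: incomes above the 70th percentile go missing 80% of the time,
# so missingness depends on the unobserved income itself.
cutoff = statistics.quantiles(incomes, n=10)[6]  # 7th of 9 cut points = 70th percentile
mnar_observed = [
    x for x in incomes
    if not (x > cutoff and random.random() < 0.80)
]

mcar_mean = statistics.mean(mcar_observed)
mnar_mean = statistics.mean(mnar_observed)

print(f"true mean: {true_mean:,.0f}")
print(f"MCAR mean: {mcar_mean:,.0f}  (close to the true mean)")
print(f"MNAR mean: {mnar_mean:,.0f}  (biased downward)")
```

Under MCAR the observed mean stays close to the true mean, so simple methods lose precision but not accuracy. Under MNAR the observed mean is systematically too low, and mean imputation would only copy that bias into the filled values.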
#### Patterns of Missing Data Structure - Monotone pattern → Once a variable is missing for a record, all later variables are missing too (e.g., a participant dropping out of a longitudinal study). - Arbitrary pattern → Missing values are scattered across records and variables with no such ordering. #### Importance of Missing Pattern 1. Helps choose correct imputation method. 2. Prevents biased results. 3. Improves model accuracy. 4. Identifies systematic data collection problems. 5. Supports reliable statistical inference. #### Example - If high-income individuals avoid reporting income: → Missing depends on income itself → This is MNAR - If survey respondents skip salary question randomly: → This is MCAR #### Conclusion Understanding missing patterns is essential before applying imputation techniques. Correct identification of missing mechanisms ensures unbiased analysis and improves model reliability in EDA and Machine Learning. ### Handling Non-Numerical Data in Missing Places #### Concept Handling non-numerical data in missing places refers to techniques used to fill or manage missing values in categorical or textual variables to maintain data quality and ensure accurate analysis. #### Key Points Non-numerical (categorical) data includes labels such as gender, city, product type, etc. Missing values in such data cannot be handled using numerical averages, so special methods are required. #### Techniques to Handle Missing Non-Numerical Data 1. **Mode Imputation** - Replace missing value with most frequent category. - Simple and commonly used method. 2. **Constant Value Imputation** - Replace missing values with a fixed category like "Unknown" or "Not Available". - Useful when missing itself carries information. 3. **Forward Fill / Backward Fill** - Used mainly in time series data. - Fill missing values with previous or next valid observation. 4. **KNN Imputation** - Uses similarity between records to estimate missing category. - Often more accurate but computationally expensive. 5. **Predictive Modeling** - Use classification models to predict missing category. - More advanced method. 6.
**Deletion Method** - Remove rows if missing values are minimal. - Used when missing percentage is very small. #### Uses - Maintains dataset completeness. - Prevents bias in categorical analysis. - Ensures smooth ML model training. - Preserves data integrity. #### Example If Gender column has missing values: - Replace with mode (e.g., “Male”). - Or replace with "Unknown". - Or predict using other features. #### Conclusion Handling missing non-numerical data requires appropriate categorical imputation techniques. Choosing the correct method ensures reliable analysis and prevents bias in Machine Learning models. ### Univariate Data Analysis #### Concept Univariate Data Analysis is the process of analyzing and summarizing a single variable in a dataset to understand its distribution, central tendency, and variability. #### Key Points - Involves only one variable at a time. - Focuses on describing the characteristics of that variable. - Helps understand data distribution and patterns. - Uses statistical measures such as mean, median, mode. - Uses measures of dispersion such as range, variance, and standard deviation. - Often represented using graphical methods. #### Common Techniques - Histogram → Shows distribution of numerical data - Bar Chart → Shows frequency of categorical data - Pie Chart → Shows proportion of categories - Box Plot → Shows spread and outliers #### Uses - Understand basic structure of data. - Detect outliers and skewness. - Summarize dataset before advanced analysis. - Support decision making in business analytics. #### Example Analyzing the age of customers in a dataset using a histogram to understand how ages are distributed. #### Conclusion Univariate analysis helps understand the characteristics of a single variable in a dataset. It is the first step in exploratory data analysis and provides a foundation for deeper statistical analysis. 
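As a rough sketch of the univariate workflow described above, the following standard-library snippet summarizes a single variable and prints a text histogram. The `ages` list is hypothetical sample data, and the 10-year bin width is an arbitrary choice for illustration.

```python
import statistics
from collections import Counter

# Hypothetical customer ages (illustrative data only).
ages = [22, 25, 25, 28, 31, 34, 34, 34, 40, 45, 52, 67]

# Central tendency and dispersion for the single variable.
summary = {
    "mean": statistics.mean(ages),
    "median": statistics.median(ages),
    "mode": statistics.mode(ages),
    "range": max(ages) - min(ages),
    "std_dev": statistics.pstdev(ages),  # population standard deviation
}

for name, value in summary.items():
    print(f"{name:>8}: {value:.2f}")

# Text histogram: count ages per 10-year bin (20-29, 30-39, ...).
bins = Counter((age // 10) * 10 for age in ages)
for start in sorted(bins):
    print(f"{start}-{start + 9}: {'#' * bins[start]}")
```

In this sample the mean lies above the median, which hints at a right-skewed distribution caused by the few older customers. A histogram makes that kind of pattern visible at a glance.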
### Measure of Central Tendency – Mean (Arithmetic, Geometric and Harmonic Mean) #### Concept Measures of central tendency describe the central or typical value of a dataset. The mean is one of the most commonly used measures and represents the average value of the observations. #### Key Points 1. **Arithmetic Mean (AM)** - Most common type of mean. - Calculated by sum of all observations divided by number of observations. - Suitable for normally distributed numerical data. - Sensitive to outliers. 2. **Geometric Mean (GM)** - Used when data involves multiplicative growth. - Useful for percentages, ratios, and growth rates. - Less affected by extreme values than arithmetic mean. 3. **Harmonic Mean (HM)** - Used for rates and ratios (speed, price per unit, etc.). - Gives more weight to smaller values. #### Uses - Summarizes dataset with a single representative value. - Helps compare different datasets. - Widely used in economics, finance, and business analytics. #### Example - Arithmetic Mean → Average marks of students. - Geometric Mean → Average growth rate of investment. - Harmonic Mean → Average speed when distance is constant. #### Conclusion Mean is an important measure of central tendency used to summarize data. Arithmetic, geometric, and harmonic means are applied in different situations depending on the nature of the data. ### Raw Data and Grouped Data; Mean, Median, Mode #### Concept Data can be represented either as raw data (original unorganized observations) or as grouped data (data arranged into class intervals with frequencies). Measures of central tendency such as mean, median, and mode summarize the dataset with a representative value. #### Key Points 1. **Raw Data** - Original data collected from observations. - Not organized into classes. - Each value appears individually. - Suitable for small datasets. - **Example:** Marks of students: 45, 50, 60, 70, 55, 65 2. **Grouped Data** - Data arranged into class intervals. - Each class has a frequency. 
- Used when dataset is large. - Makes analysis easier. - **Example:**

| Marks | Frequency |
|-------|-----------|
| 40-50 | 3 |
| 50-60 | 5 |
| 60-70 | 4 |

#### Measures of Central Tendency 1. **Mean** - Arithmetic Mean: Average value of dataset. 2. **Median** - Middle value of ordered dataset. - **For Raw Data:** - Arrange data in ascending order. - Middle observation is the median. 3. **Mode** - Value that appears most frequently. - **For Raw Data:** - Most frequent value. #### Uses - Summarizes dataset with single value. - Helps compare datasets. - Used in business analytics, economics, and ML. #### Example If marks are: 40, 50, 60, 60, 70 - Mean = (40 + 50 + 60 + 60 + 70) / 5 = 56 - Median = middle value of the ordered list = 60 - Mode = most frequent value = 60 #### Conclusion Raw data shows individual observations, while grouped data organizes large datasets into class intervals. Mean, median, and mode provide central values that help understand the overall distribution of data. ### Quartile and Percentile, Interpretation of Quartile and Percentile Values #### Concept Quartiles and percentiles are statistical measures used to divide a dataset into equal parts to understand the distribution of data. Quartiles divide the dataset into four equal parts, while percentiles divide it into 100 equal parts. #### Key Points 1. **Quartiles** - Divide data into 4 equal parts. - Three quartile values exist: - Q1 (First Quartile) → 25% of data lies below it. - Q2 (Second Quartile) → Median (50% of data below it). - Q3 (Third Quartile) → 75% of data lies below it. 2. **Percentiles** - Divide data into 100 equal parts. - Each percentile indicates the percentage of data below that value. - **Example:** - P25 = 25th percentile - P50 = Median - P90 = 90% of observations are below this value. #### Uses - Understand distribution of data. - Identify relative standing of observations. - Detect outliers using quartile ranges. - Used in exams, income distribution, and performance analysis.
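The quartile and percentile ideas above can be sketched with Python's `statistics.quantiles`. The `marks` list is hypothetical, `percentile_rank` is a helper defined here for illustration, and the `"inclusive"` interpolation method is one of several conventions, so other tools may report slightly different quartile values for the same data.

```python
import statistics

# Hypothetical exam marks (illustrative data only).
marks = [35, 40, 42, 48, 50, 55, 58, 60, 62, 65, 70, 72, 75, 80, 85, 90]

# Quartiles: the three cut points that split the data into four parts.
q1, q2, q3 = statistics.quantiles(marks, n=4, method="inclusive")
iqr = q3 - q1

# Percentiles: 99 cut points; index 89 is the 90th percentile.
percentiles = statistics.quantiles(marks, n=100, method="inclusive")
p90 = percentiles[89]

# Common rule of thumb: flag values beyond 1.5 * IQR from the quartiles.
lower_fence = q1 - 1.5 * iqr
upper_fence = q3 + 1.5 * iqr
outliers = [m for m in marks if m < lower_fence or m > upper_fence]


def percentile_rank(value, data):
    """Percentage of observations strictly below `value`."""
    return 100 * sum(x < value for x in data) / len(data)


print(f"Q1={q1}, Q2={q2}, Q3={q3}, IQR={iqr}")
print(f"P90={p90}")
print(f"Outliers by the 1.5*IQR rule: {outliers}")
print(f"Percentile rank of 80 marks: {percentile_rank(80, marks)}")
```

Here Q2 coincides with the median, and the IQR fences give a simple way to apply the "detect outliers using quartile ranges" use listed above.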
#### Example - If a student scores at the 90th percentile, it means the student scored higher than 90% of students in the dataset. - If Q1 = 40 marks, it means 25% of students scored below 40. #### Interpretation - Q1 → Lower quarter of data. - Q2 (Median) → Middle value of dataset. - Q3 → Upper quarter of data. - Percentiles show the relative ranking of a value in a dataset. #### Conclusion Quartiles and percentiles help describe the distribution and ranking of data points. They are important tools in statistical analysis and EDA for understanding data spread and identifying relative performance. ### Measure of Dispersion #### Concept Measures of dispersion describe the spread or variability of data values around the central value. They indicate how much the observations differ from each other in a dataset. #### Key Points - Shows the degree of variation in data. - Helps understand the reliability of the average. - If dispersion is small → values are close to the mean. - If dispersion is large → values are widely spread. - Important for comparing datasets. #### Common Measures of Dispersion 1. **Range** - Difference between the maximum and minimum value. - Range = Maximum - Minimum 2. **Interquartile Range (IQR)** - Measures spread of the middle 50% of data. - IQR = Q3 - Q1 3. **Variance** - Average of squared deviations from the mean. - $\sigma^2 = \frac{\sum (x - \bar{x})^2}{n}$ 4. **Standard Deviation** - Square root of variance. - Shows the typical deviation from the mean, in the same units as the data. - $\sigma = \sqrt{\sigma^2}$ #### Uses - Understand variability of dataset. - Compare stability between datasets. - Identify outliers and data spread. - Important in risk analysis and ML models. #### Example If two classes have the same mean marks but different standard deviations: - Class A → SD = 5 (scores close to mean) - Class B → SD = 20 (scores widely spread) Class A is more consistent. #### Conclusion Measures of dispersion help understand the spread and variability of data.
They complement measures of central tendency and provide deeper insight into the dataset. ### Symmetry of Data, Skewness, Kurtosis, Robustness of Parameters and Measures of Concentration #### Concept These statistical concepts describe the shape, distribution, and reliability of data. They help analysts understand whether data is balanced, skewed, concentrated, or affected by extreme values. 1. **Symmetry of Data** #### Concept A dataset is symmetric when the left and right sides of the distribution are mirror images of each other. #### Key Points - Mean = Median = Mode in perfectly symmetric data. - No skewness present. - Data spread is equal on both sides. - Example: Normal distribution curve. 2. **Skewness** #### Concept Skewness measures the degree of asymmetry in a data distribution. #### Types of Skewness - **Positive Skewness (Right Skew)** - Long tail on the right side. - Mean > Median > Mode. - **Negative Skewness (Left Skew)** - Long tail on the left side. - Mean < Median < Mode. ### Bivariate Data Analysis #### Concept Bivariate Data Analysis is the statistical analysis of two variables simultaneously to understand the relationship, association, or dependency between them. #### Key Points - Involves two variables (X and Y). - Used to study relationships or correlations between variables. - Helps identify patterns, trends, and associations. - Variables can be numerical or categorical. - Often used before predictive modeling. #### Common Techniques - Scatter Plot → Shows relationship between two numerical variables - Correlation Analysis → Measures strength and direction of relationship - Simple Linear Regression → Predicts one variable using another - Cross-tabulation → Used for categorical variables #### Uses - Identify relationships between variables. - Support decision making in business. - Detect trends and dependencies. - Help in predictive modeling.
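Two of the techniques listed above, correlation analysis and simple linear regression, can be sketched in plain Python. The advertising and sales figures are hypothetical, and `pearson_r` and `least_squares_line` are helper names introduced here for illustration, not functions from any library.

```python
import math

# Hypothetical paired observations (illustrative data only).
ad_spend = [10, 15, 20, 25, 30, 35]      # X, e.g. in thousands
sales = [110, 135, 155, 190, 205, 240]   # Y, e.g. in thousands


def _sums_of_squares(x, y):
    """Return mean of x, mean of y, Sxy, Sxx and Syy for paired data."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return mean_x, mean_y, sxy, sxx, syy


def pearson_r(x, y):
    """Pearson correlation coefficient: strength and direction of a linear relationship."""
    _, _, sxy, sxx, syy = _sums_of_squares(x, y)
    return sxy / math.sqrt(sxx * syy)


def least_squares_line(x, y):
    """Slope and intercept of the simple linear regression of y on x."""
    mean_x, mean_y, sxy, sxx, _ = _sums_of_squares(x, y)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


r = pearson_r(ad_spend, sales)
slope, intercept = least_squares_line(ad_spend, sales)

print(f"correlation r = {r:.3f}")
print(f"sales = {intercept:.1f} + {slope:.2f} * ad_spend")
```

A value of r close to +1 indicates a strong positive linear relationship in this sample. The slope estimates how much Y changes per unit of X, but neither number by itself establishes that X causes Y.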
#### Example - Advertising expenditure and sales revenue - Height and weight of individuals A scatter plot can show whether the variables move together. #### Conclusion Bivariate data analysis helps understand how two variables are related. It is an important step in exploratory data analysis and provides insight into dependencies between variables. ### Introduction to Bivariate Distributions #### Concept A bivariate distribution describes the joint behavior of two variables together. It shows how two variables vary simultaneously and helps analyze the relationship between them. #### Key Points - Involves two random variables (usually X and Y). - Shows the joint distribution of both variables. - Helps understand association, dependence, or correlation. - Can be represented using tables, graphs, or probability distributions. - Used in statistical modeling and predictive analysis. #### Types of Bivariate Distributions 1. **Discrete Bivariate Distribution** - Both variables take discrete values. - Represented using a joint probability table. - **Example:** Number of products purchased and number of visits by customers. 2. **Continuous Bivariate Distribution** - Variables take continuous values. - Represented using joint probability density functions. - **Example:** Height and weight of individuals. #### Graphical Representation Common graphical tools include: - Scatter Plot - Contour Plot - 3D Surface Plot - Heatmap These help visualize the relationship between variables. #### Uses - Studying relationships between variables. - Measuring correlation between variables. - Supporting regression analysis. - Used in economics, finance, and machine learning. #### Example Analyzing advertising expenditure (X) and sales revenue (Y) to see how advertising influences sales. #### Conclusion Bivariate distributions help analyze how two variables behave together. Understanding these relationships is essential for correlation analysis, regression modeling, and predictive analytics. 
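For the discrete case, a joint probability table can be estimated from paired observations. This is a minimal sketch with made-up `(visits, products purchased)` pairs; the variable names are illustrative only.

```python
from collections import Counter, defaultdict

# Hypothetical (number of visits, products purchased) pairs, one per customer.
observations = [
    (1, 0), (1, 1), (2, 1), (2, 1), (2, 2),
    (3, 2), (3, 2), (3, 3), (1, 0), (2, 1),
]
n = len(observations)

# Joint distribution: relative frequency of each (x, y) pair.
joint = {pair: count / n for pair, count in Counter(observations).items()}

# Marginal distributions: sum the joint probabilities over the other variable.
marginal_visits = defaultdict(float)
marginal_products = defaultdict(float)
for (visits, products), prob in joint.items():
    marginal_visits[visits] += prob
    marginal_products[products] += prob

for pair in sorted(joint):
    print(f"P(visits={pair[0]}, products={pair[1]}) = {joint[pair]:.2f}")
print("P(visits):  ", {k: round(v, 2) for k, v in sorted(marginal_visits.items())})
print("P(products):", {k: round(v, 2) for k, v in sorted(marginal_products.items())})
```

The joint probabilities sum to 1, and each marginal distribution is recovered by summing the joint table along one variable. That is the same information a joint probability table shows in its row and column totals.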
### Association Between Two Nominal Variables #### Concept Association between two nominal variables refers to the relationship between two categorical variables that do not have any inherent order. Statistical methods are used to determine whether the variables are independent or related. #### Key Points - Both variables are categorical (nominal). - Categories have no natural order. - Relationship is analyzed using contingency tables. - Association measures help determine strength of relationship. - Commonly used in social science and business analysis. #### Methods to Measure Association 1. **Contingency Table (Cross-tabulation)** - Displays frequency distribution of two categorical variables. - Shows how categories interact. 2. **Chi-Square Test of Independence** - Tests whether two nominal variables are independent. 3. **Phi Coefficient** - Measures association for 2x2 contingency tables. 4. **Cramer's V** - Measures strength of association for larger tables. #### Uses - Analyze relationships between categorical variables. - Market research and customer segmentation. - Social science and demographic studies. - Business decision making. #### Example Studying relationship between: - Gender (Male/Female) - Product Preference (Product A/Product B) A contingency table can show whether gender influences product preference. #### Conclusion Association between two nominal variables helps determine whether categorical variables are related. Statistical tests like Chi-square and measures like Cramer's V help quantify the strength of this relationship. ### Contingency Tables, Chi-Square Calculations and Phi Coefficient #### Concept Contingency tables, Chi-Square test, and Phi coefficient are statistical methods used to analyze the association between two categorical (nominal) variables. They help determine whether the variables are independent or related. #### 1. 
Contingency Tables **Concept** A contingency table (or cross-tabulation table) shows the frequency distribution of two categorical variables simultaneously. **Key Points** - Rows represent categories of one variable. - Columns represent categories of another variable. - Each cell shows the frequency of occurrence. - Used to analyze association between variables. **Example**

| Gender | Product A | Product B | Total |
|--------|-----------|-----------|-------|
| Male | 30 | 20 | 50 |
| Female | 25 | 25 | 50 |
| Total | 55 | 45 | 100 |

#### 2. Chi-Square Test of Independence **Concept** The Chi-Square test determines whether two categorical variables are independent or associated. **Formula** $$ \chi^2 = \sum \frac{(O - E)^2}{E} $$ Where: $O$ = Observed frequency $E$ = Expected frequency **Expected Frequency** $$ E = \frac{(\text{Row Total} \times \text{Column Total})}{\text{Grand Total}} $$ **Interpretation** - Large Chi-square value (above the critical value for the table's degrees of freedom) → Evidence of association - Small Chi-square value → Variables likely independent - The statistic grows with sample size, so it indicates evidence of association rather than its strength. #### 3. Phi Coefficient **Concept** Phi coefficient measures the strength of association between two nominal variables in a 2x2 contingency table. **Formula** $$ \Phi = \sqrt{\frac{\chi^2}{n}} $$ Where: $\chi^2$ = Chi-square statistic $n$ = Total observations **Range** - 0 to 1 when computed from this formula (the signed form of phi for a 2x2 table ranges from -1 to +1) - 0 → No association - Values near 1 → Strong association #### Uses - Test independence between categorical variables. - Analyze survey data. - Market research and customer behavior analysis. - Social science studies. #### Example Studying association between: - Gender - Product preference Using a contingency table and Chi-square test to determine if gender influences product choice. #### Conclusion Contingency tables organize categorical data, the Chi-square test checks independence between variables, and the Phi coefficient measures the strength of their association. Together they help analyze relationships between nominal variables.
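As a worked sketch, the formulas above can be applied to the Gender × Product table from this section. The code uses only the standard library. The critical value 3.841 (5% significance level, 1 degree of freedom) is a standard table value and not something derived in this document.

```python
import math

# Observed counts from the example table.
# Rows: Male, Female; columns: Product A, Product B.
observed = [
    [30, 20],
    [25, 25],
]

row_totals = [sum(row) for row in observed]
col_totals = [sum(col) for col in zip(*observed)]
n = sum(row_totals)

# Expected frequency for each cell: (row total * column total) / grand total.
expected = [[r * c / n for c in col_totals] for r in row_totals]

# Chi-square statistic: sum of (O - E)^2 / E over all cells.
chi_square = sum(
    (o - e) ** 2 / e
    for obs_row, exp_row in zip(observed, expected)
    for o, e in zip(obs_row, exp_row)
)

# Phi coefficient as defined above (valid for 2x2 tables).
phi = math.sqrt(chi_square / n)

# Degrees of freedom: (rows - 1) * (columns - 1) = 1 for a 2x2 table.
CRITICAL_VALUE_5PCT_DF1 = 3.841

print(f"expected = {expected}")
print(f"chi-square = {chi_square:.4f}")
print(f"phi = {phi:.4f}")
print("reject independence at 5%:", chi_square > CRITICAL_VALUE_5PCT_DF1)
```

For this table every expected count is 27.5 or 22.5, giving a chi-square of about 1.01 and a phi of about 0.10. Since 1.01 is below 3.841, this sample gives no significant evidence that gender and product preference are associated.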
### Scatter Plot, Causal Interpretation, Correlation Coefficient and Regression Coefficient #### Concept These techniques are used in bivariate data analysis to study the relationship between two variables. Scatter plots provide a visual representation, while correlation and regression coefficients measure the strength and direction of the relationship. #### 1. Scatter Plot **Concept** A scatter plot is a graphical representation where two variables are plotted on X and Y axes, showing how one variable changes with another. **Key Points** - Each point represents one observation. - Helps visualize patterns, trends, and relationships. - Shows positive, negative, or no relationship. - Useful for identifying outliers. **Types of Relationships** - Positive Relationship → As X increases, Y increases. - Negative Relationship → As X increases, Y decreases. - No Relationship → Points scattered randomly. #### 2. Causal Interpretation **Concept** Causal interpretation means understanding whether changes in one variable actually cause changes in another variable. **Important Note** - Scatter plots show association, not necessarily causation. - Correlation does not imply causation. **Example** - Ice cream sales and drowning incidents may increase together, but one does not cause the other; both rise in hot weather, which acts as a common underlying factor.