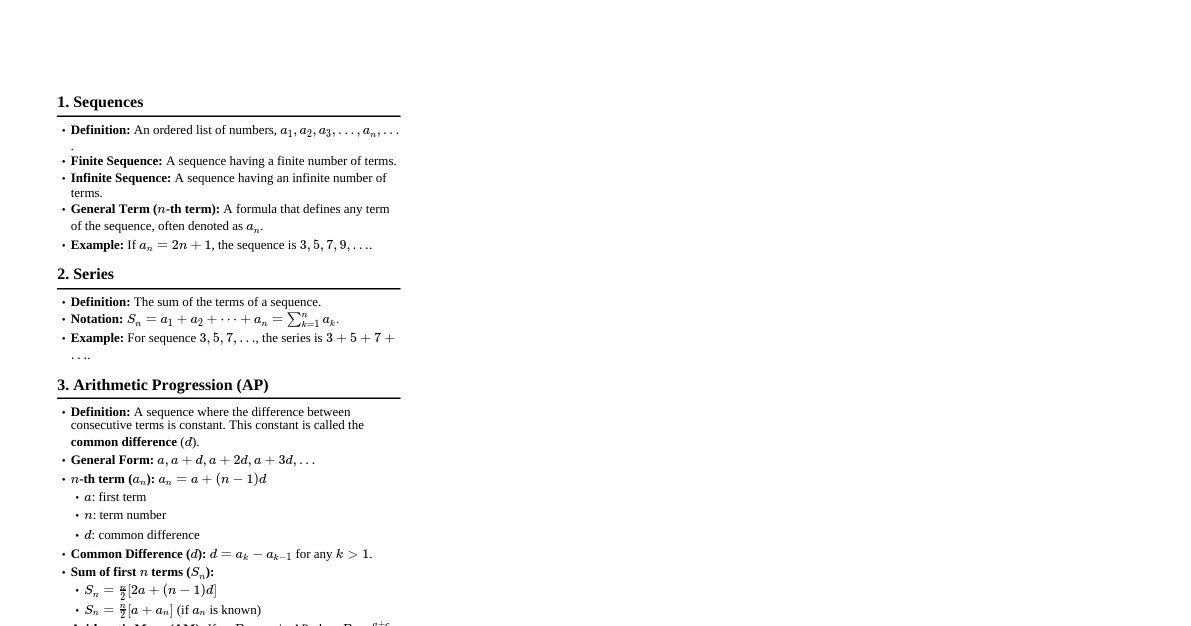

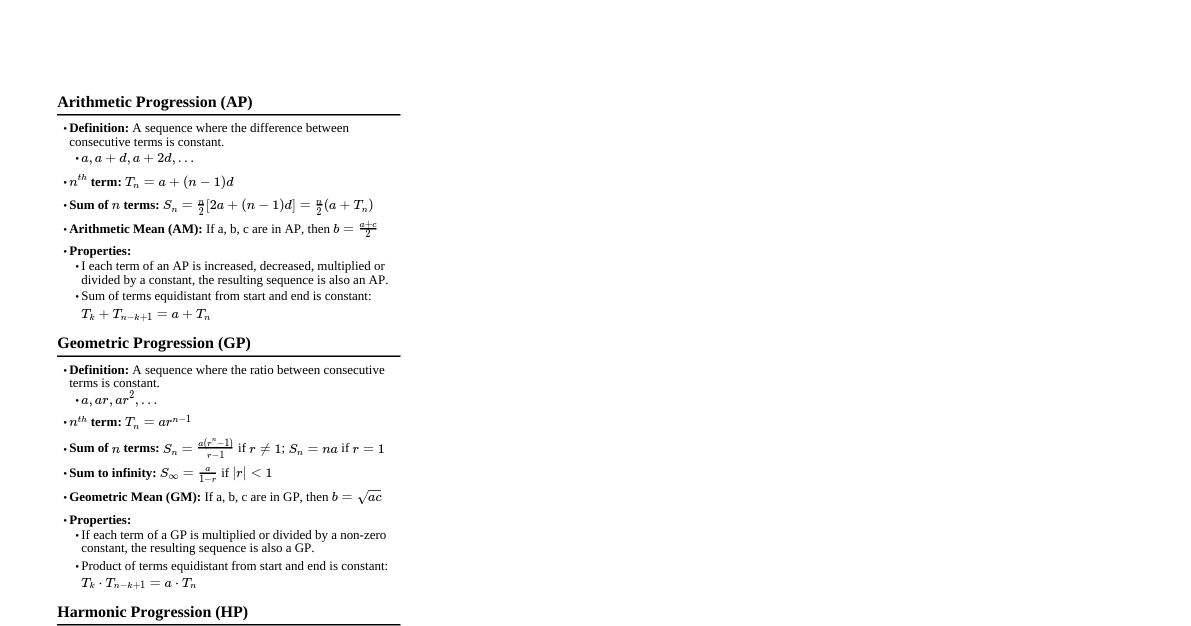

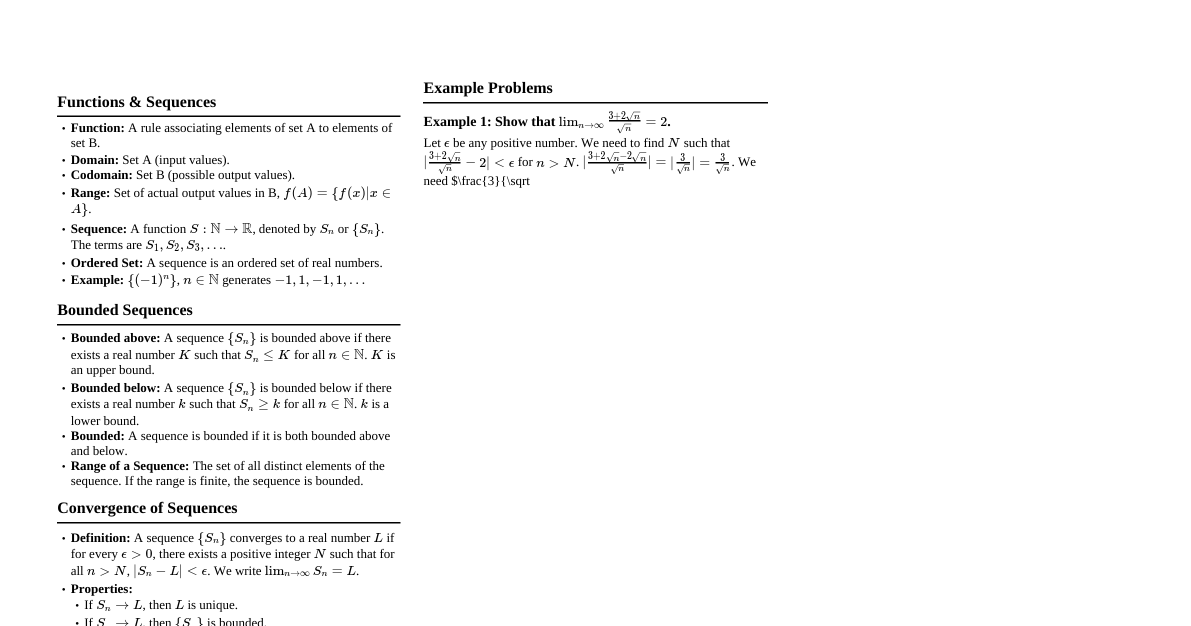

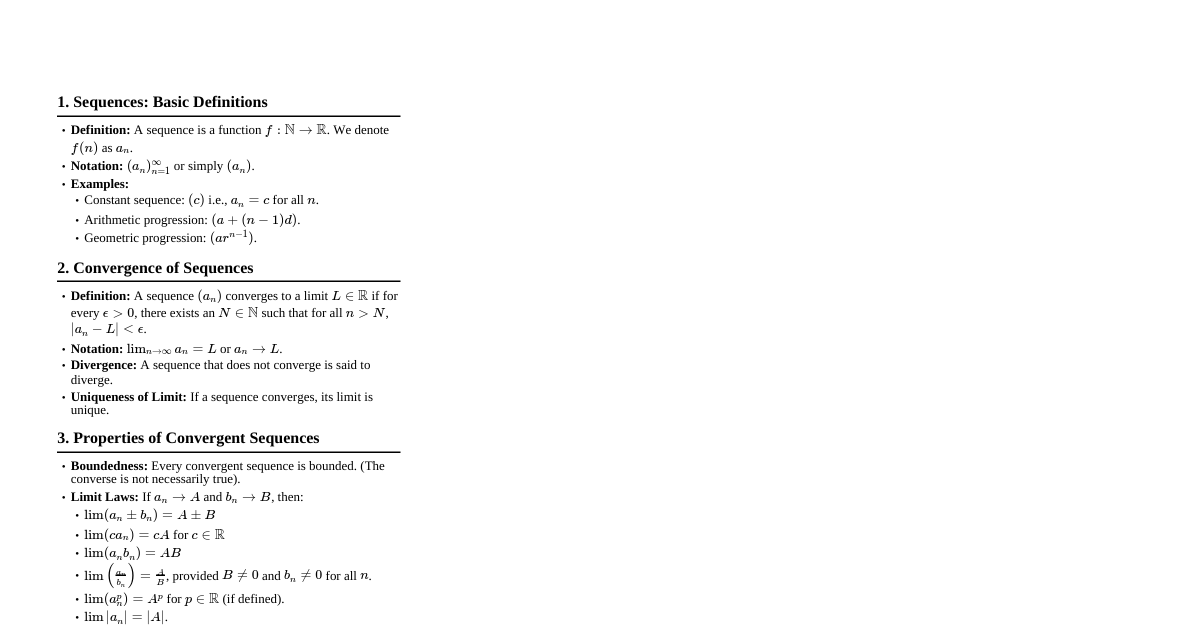

### Sequences and Series

In this chapter, we find out what is meant by the sum of an infinite collection of numbers. We explore conditions under which an infinite sum
$$a_1+a_2+a_3 + \cdots + a_n + \cdots,$$
known as an **infinite series**, is meaningful. We discuss methods for computing the sum of an infinite series by applying algebra and calculus to the series. Infinite series are important in science and mathematics because many functions either arise most naturally in the form of an infinite series or have infinite series representations that are useful for numerical computation.

Throughout the discussion, $N = \{1, 2, 3, 4, \dots\}$.

### Sequences

#### Definition 2.1.1.

A **sequence function** is a function from $N$ to $R$. An (**infinite**) **sequence** is the ordered list $f(1), f(2), f(3), \dots$ of values of a sequence function $f$. The elements of an infinite sequence are called its **terms**.

#### Notation.

If $f: N \to R$ is a sequence function and $a_n = f(n)$, then the resulting sequence is denoted by $\{a_n\}_{n=1}^{+\infty} = \{a_1, a_2, \dots, a_n, \dots\}$ or simply (if there is no ambiguity) $\{a_n\}$.

#### Variation.

For any $k \in N \cup \{0\}$, $\{a_n\}_{n=k}^{+\infty} = \{a_k, a_{k+1}, a_{k+2}, \dots, a_n, \dots\}$. This means that a sequence may also start at $n=0$ or at any $n > 1$.

#### Remark 2.1.2.

Below are some special sequences.

1. **Arithmetic sequence:** $\{t_n\}$ where $t_n = a + dn$ for constants $a$ and $d$, with $d \neq 0$.
2. **Geometric sequence:** $\{t_n\}$ where $t_n = ar^n$ for nonzero constants $a$ and $r$.
3. **Fibonacci sequence:** $\{t_n\}_{n=1}^{+\infty}$ where $t_1 = 1$, $t_2 = 1$, and $t_n = t_{n-1} + t_{n-2}$ for all $n \geq 3$.

Our main concern now is to study the behavior of a given sequence $\{a_n\}$ as $n$ increases without bound.

#### Definition 2.1.4.

Let $\{a_n\}$ be a sequence and let $L \in R$. The **limit** of $\{a_n\}$ is $L$ if and only if for any $\varepsilon > 0$, there exists an $N > 0$ such that $|a_n - L| < \varepsilon$ whenever $n > N$.
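As an illustration of Definition 2.1.4, take $a_n = 1/n$ and $L = 0$. Given $\varepsilon > 0$, any $N \geq 1/\varepsilon$ works, because $n > N$ implies $|1/n - 0| < 1/N \leq \varepsilon$.

The Python sketch below computes such an $N$ and spot-checks the inequality on a finite stretch of the tail. The helper names `witness_N` and `check_tail` are hypothetical and introduced only for this illustration. A finite check can reject a proposed $N$, but it cannot prove convergence; the proof is the one-line inequality above.

```python
import math
from typing import Callable


def witness_N(eps: float) -> int:
    """Return an N that works in Definition 2.1.4 for a_n = 1/n and L = 0.

    Any N >= 1/eps works: if n > N, then 1/n < 1/N <= eps.
    """
    return math.ceil(1 / eps)


def check_tail(a: Callable[[int], float], L: float, eps: float, N: int,
               how_many: int = 10_000) -> bool:
    """Check |a(n) - L| < eps for n = N+1, ..., N+how_many.

    Only finitely many terms are sampled, so a False result shows that this N
    fails, while a True result is evidence, not a proof, that the limit is L.
    """
    return all(abs(a(n) - L) < eps for n in range(N + 1, N + how_many + 1))


def a(n: int) -> float:
    """The sequence a_n = 1/n."""
    return 1 / n


for eps in (0.1, 0.01, 0.001):
    N = witness_N(eps)
    print(f"eps={eps}: N={N}, tail check passed: {check_tail(a, 0.0, eps, N)}")
```

A wrong candidate limit, such as $L = 1/2$, fails the tail check for $\varepsilon = 0.1$. This is consistent with the sequence converging to $0$.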
If the limit of $\{a_n\}$ is $L$, we say that the sequence $\{a_n\}$ **converges to** $L$ or is **convergent**, and write $\lim_{n \to +\infty} a_n = L$. If no number $L$ satisfies the above, then we say that $\{a_n\}$ **diverges** or is **divergent**.

#### Theorem 2.1.7.

Let $f$ be a function defined on $[k, +\infty)$ for some $k \in N$ and let $a_n = f(n)$ for all $n \in N$ with $n \geq k$.

1. If $\lim_{x \to +\infty} f(x)$ exists, then $\lim_{n \to +\infty} a_n = \lim_{x \to +\infty} f(x)$.
2. If $\lim_{x \to +\infty} f(x) = +\infty$ (respectively, $-\infty$), then $\lim_{n \to +\infty} a_n = +\infty$ (respectively, $-\infty$).

#### Remark 2.1.6.

Let $m, k \in N$. The sequence $\{a_n\}_{n=k}^{+\infty}$ converges to $L$ if and only if the sequence $\{a_n\}_{n=m}^{+\infty}$ converges to $L$.

#### Theorem 2.1.11 (Squeeze Theorem for Sequences).

Let $\{a_n\}$, $\{b_n\}$, and $\{c_n\}$ be sequences. If $a_n \leq b_n \leq c_n$ for all $n \geq k$ for some $k \in N$, and $\lim_{n \to +\infty} a_n = \lim_{n \to +\infty} c_n = L$ for some $L \in R$, then $\lim_{n \to +\infty} b_n = L$.

#### Definition 2.1.14.

A sequence $\{a_n\}$ is said to be

1. **constant** if $a_n = a_{n+1}$ for all $n$;
2. **increasing** if $a_n \leq a_{n+1}$ for all $n$;
3. **decreasing** if $a_n \geq a_{n+1}$ for all $n$;
4. **monotone** if it is either increasing or decreasing.

An increasing (respectively, decreasing) sequence in which any two consecutive terms are distinct is said to be **strictly increasing** (respectively, **strictly decreasing**). A sequence $\{a_n\}_{n=k}^{+\infty}$ is **ultimately increasing** (respectively, **ultimately decreasing**) if $\{a_n\}_{n=m}^{+\infty}$ is increasing (respectively, decreasing) for some $m \geq k$.

#### Remark 2.1.15.

Any of the following conditions implies that a sequence $\{a_n\}_{n=k}^{+\infty}$ is increasing:

1. $a_{n+1} - a_n \geq 0$ for all $n \geq k$;
2. all terms are positive and $\frac{a_{n+1}}{a_n} \geq 1$ for all $n \geq k$;
3. $a_n = f(n)$ for all $n \geq k$, where $f$ is differentiable on $[k, +\infty)$ and $f'(x) \geq 0$ for all $x \geq k$.

Analogous conditions can be obtained which imply that a sequence is strictly increasing, decreasing, or strictly decreasing.

#### Definition 2.1.19.

A sequence $\{a_n\}$ is said to be

1. **bounded below** if there exists $m \in R$ such that $a_n \geq m$ for all $n$, and $m$ is called a **lower bound** for the sequence;
2. **bounded above** if there exists $M \in R$ such that $a_n \leq M$ for all $n$, and $M$ is called an **upper bound** for the sequence;
3. **bounded** if it is both bounded below and bounded above.

#### Remark 2.1.21.

An increasing sequence is bounded below by its first term, while a decreasing sequence is bounded above by its first term.

The following result gives a sufficient condition for the convergence of a sequence that does not require computing its limit.

#### Theorem 2.1.22 (Monotone Convergence Theorem).

If a sequence is bounded and monotone, then it is convergent. Since every convergent sequence is bounded, it follows that a monotone sequence is convergent if and only if it is bounded.

### Series of Constant Terms

#### Definition 2.2.1.

An **infinite series** is an expression of the form
$$\sum_{n=1}^{+\infty} a_n = a_1+a_2+\cdots+a_n+\cdots$$
The numbers $a_1, a_2, a_3, \dots$ are called the **terms** of the series.

#### Notation.

If there is no ambiguity, we may write $\sum_{n=1}^{+\infty} a_n$ simply as $\sum a_n$.

A **partial sum** of an infinite series $\sum_{n=1}^{+\infty} a_n$ is a finite sum $S_k$ given by
$$S_k = \sum_{n=1}^{k} a_n = a_1 + a_2 + \cdots + a_k.$$

#### Definition 2.2.2.

Let $S_k$ denote the $k$th partial sum of a series $\sum_{n=1}^{+\infty} a_n$. The series is said to be

1. **convergent** with sum $S$ if and only if the sequence $\{S_k\}_{k=1}^{+\infty}$ of partial sums converges to $S$; in this case, we write $\sum_{n=1}^{+\infty} a_n = S$;
2. **divergent** if and only if the sequence of partial sums diverges.

#### Theorem 2.2.8.

1. Let $c \in R \setminus \{0\}$. If $\sum_{n=1}^{+\infty} a_n$ converges, then $\sum_{n=1}^{+\infty} c a_n$ converges with sum $c \sum_{n=1}^{+\infty} a_n$; if $\sum_{n=1}^{+\infty} a_n$ diverges, then $\sum_{n=1}^{+\infty} c a_n$ diverges.
2. If $\sum_{n=1}^{+\infty} a_n$ and $\sum_{n=1}^{+\infty} b_n$ both converge, then $\sum_{n=1}^{+\infty} (a_n + b_n)$ converges with sum $\sum_{n=1}^{+\infty} a_n + \sum_{n=1}^{+\infty} b_n$.
3. If $\sum_{n=1}^{+\infty} a_n$ converges and $\sum_{n=1}^{+\infty} b_n$ diverges, then $\sum_{n=1}^{+\infty} (a_n + b_n)$ diverges.
4. Suppose that $a_n = b_n$ for all but a finite number of values of $n$. If $\sum_{n=1}^{+\infty} a_n$ converges, then $\sum_{n=1}^{+\infty} b_n$ converges; if $\sum_{n=1}^{+\infty} a_n$ diverges, then $\sum_{n=1}^{+\infty} b_n$ diverges.

#### Theorem 2.2.11 (Divergence Test).

If the series $\sum_{n=1}^{+\infty} a_n$ is convergent, then $\lim_{n \to +\infty} a_n = 0$. Equivalently, if $\lim_{n \to +\infty} a_n \neq 0$ (including the case where the limit does not exist), then $\sum_{n=1}^{+\infty} a_n$ is divergent.

### Convergence Tests for Series of Nonnegative Terms

Throughout this section we consider only series $\sum a_n$ where $a_n \geq 0$ for all $n$.

#### Theorem 2.3.1 (Bounded-Sum Test).

Let $\sum_{n=1}^{+\infty} a_n$ be a series of positive terms. Then $\sum_{n=1}^{+\infty} a_n$ converges if and only if its sequence of partial sums is bounded above.

#### Proof.

Let $S_n = a_1 + \dots + a_n$. Then for all $n$ we have
$$0 < S_n < S_n + a_{n+1} = S_{n+1}.$$
Therefore $\{S_n\}_{n=1}^{+\infty}$ is increasing, and hence monotone. Also $S_n > 0$ for all $n$, so $\{S_n\}_{n=1}^{+\infty}$ is bounded below. Thus $\{S_n\}_{n=1}^{+\infty}$ is bounded if and only if it is bounded above. By the Monotone Convergence Theorem, $\{S_n\}_{n=1}^{+\infty}$ is convergent if and only if it is bounded. Putting everything together, $\sum_{n=1}^{+\infty} a_n$ converges if and only if its sequence of partial sums is bounded above.

#### Theorem 2.3.3 (Integral Test).
Let $f$ be a function that is continuous, positive-valued, and decreasing on $[1, +\infty)$, and suppose that $f(n) = a_n$ for all $n \in N$.

1. If $\int_1^{+\infty} f(x)\,dx$ converges, then the series $\sum_{n=1}^{+\infty} a_n$ converges.
2. If $\int_1^{+\infty} f(x)\,dx = +\infty$, then the series $\sum_{n=1}^{+\infty} a_n$ diverges.

#### Theorem 2.3.8 (Comparison Test).

Let $\sum a_n$ and $\sum b_n$ be series of nonnegative terms.

1. If $a_n \leq b_n$ for all $n \geq k$ for some $k \in N$, and $\sum b_n$ is convergent, then $\sum a_n$ is convergent.
2. If $a_n \geq b_n$ for all $n \geq k$ for some $k \in N$, and $\sum b_n$ is divergent, then $\sum a_n$ is divergent.

#### Proof.

Assume first that $a_n \leq b_n$ for all $n \geq k$ and that $\sum b_n$ is convergent. For $n \geq k$ let $S_n = a_k + \dots + a_n$ and $T_n = b_k + \dots + b_n$. Then $S_n \leq T_n$ for all $n \geq k$. Since $\sum b_n$ converges, so does $\sum_{n=k}^{+\infty} b_n$ by item 4 of Theorem 2.2.8. Hence, by the Bounded-Sum Test, $\{T_n\}_{n=k}^{+\infty}$ is bounded above. Let $M$ be an upper bound of $\{T_n\}_{n=k}^{+\infty}$. Then $T_n \leq M$ for all $n \geq k$, so also $S_n \leq M$ for all $n \geq k$; that is, $\{S_n\}_{n=k}^{+\infty}$ is bounded above. By the Bounded-Sum Test the series $\sum_{n=k}^{+\infty} a_n$ converges, and by item 4 of Theorem 2.2.8, so does $\sum a_n$.

Now suppose that $a_n \geq b_n$ for all $n \geq k$ and that $\sum b_n$ is divergent. If $\sum a_n$ were convergent, then by part 1 (with the roles of $a_n$ and $b_n$ interchanged) the series $\sum b_n$ would be convergent, which is false. Therefore $\sum a_n$ is divergent.

#### Theorem 2.3.11 (Limit Comparison Test).

Let $\sum a_n$ and $\sum b_n$ be series of positive terms.

1. If $\lim_{n \to +\infty} \frac{a_n}{b_n} = L \in R^+$, then either both series converge or both series diverge.
2. If $\lim_{n \to +\infty} \frac{a_n}{b_n} = 0$ and $\sum b_n$ converges, then $\sum a_n$ converges.
3. If $\lim_{n \to +\infty} \frac{a_n}{b_n} = +\infty$ and $\sum b_n$ diverges, then $\sum a_n$ diverges.

### Alternating Series Test

#### Definition 2.4.1.
An **alternating series** is a series of the form $\sum (-1)^n b_n$ or $\sum (-1)^{n-1} b_n$, where $b_n > 0$ for each $n$. That is, an alternating series is a series of nonzero terms which alternate in sign.

#### Theorem 2.4.2 (Alternating Series Test).

Let $\sum (-1)^n b_n$ be an alternating series with $b_n > 0$ for all $n$. If (i) the sequence $\{b_n\}$ is ultimately decreasing, and (ii) $\lim_{n \to +\infty} b_n = 0$, then the series is convergent.

#### Proof.

Consider the series $\sum_{n=1}^{+\infty} (-1)^{n-1} b_n$, where $b_n > 0$ for all $n$, and assume that this series satisfies conditions (i) and (ii) above. The series $\sum (-1)^n b_n$ is $-1$ times this one, so by item 1 of Theorem 2.2.8 it suffices to treat this case. Since changing finitely many terms does not affect convergence (item 4 of Theorem 2.2.8), we may also assume that $\{b_n\}$ is decreasing. Let $S_k = b_1 - b_2 + \dots + (-1)^{k-1} b_k$.

If $k$ is even, say $k = 2n$ for some $n$, then it follows from condition (i) that
$$S_k = S_{2n} = b_1 - (b_2 - b_3) - \dots - (b_{2n-2} - b_{2n-1}) - b_{2n} \leq b_1,$$
since each parenthesized difference is nonnegative and $b_{2n} > 0$. Also $S_{2n+2} = S_{2n} + (b_{2n+1} - b_{2n+2}) \geq S_{2n}$. So the sequence $\{S_{2n}\}_{n=1}^{+\infty}$ of partial sums $S_k$ with $k$ even is increasing and bounded above, and hence is monotone and bounded. Therefore, by the Monotone Convergence Theorem, $\{S_{2n}\}_{n=1}^{+\infty}$ is convergent.

If $k$ is odd, say $k = 2n-1$, then
$$S_k = S_{2n-1} = (b_1 - b_2) + (b_3 - b_4) + \dots + (b_{2n-3} - b_{2n-2}) + b_{2n-1} \geq 0.$$
Also $S_{2n+1} = S_{2n-1} - (b_{2n} - b_{2n+1}) \leq S_{2n-1}$. So the sequence $\{S_{2n-1}\}_{n=1}^{+\infty}$ of partial sums $S_k$ with $k$ odd is decreasing and bounded below, and hence is monotone and bounded. Therefore, by the Monotone Convergence Theorem, $\{S_{2n-1}\}_{n=1}^{+\infty}$ is convergent.

Finally, we show that $\lim_{n \to +\infty} S_{2n} = \lim_{n \to +\infty} S_{2n-1}$. Indeed, $S_{2n} - S_{2n-1} = (-1)^{2n-1} b_{2n} = -b_{2n}$, so condition (ii) gives
$$\lim_{n \to +\infty} S_{2n} - \lim_{n \to +\infty} S_{2n-1} = \lim_{n \to +\infty} (S_{2n} - S_{2n-1}) = \lim_{n \to +\infty} (-b_{2n}) = 0,$$
which implies that $\lim_{n \to +\infty} S_{2n} = \lim_{n \to +\infty} S_{2n-1}$. Since the even- and odd-indexed partial sums converge to the same limit, the sequence $\{S_k\}$ converges, and hence $\sum_{n=1}^{+\infty} (-1)^{n-1} b_n$ converges.

#### Warning.
If either $\lim_{n \to +\infty} b_n \neq 0$ or $\{b_n\}$ is not ultimately decreasing, the Alternating Series Test is inconclusive. Other tests must be used to determine convergence or divergence. For instance, if $\lim_{n \to +\infty} b_n \neq 0$, the Divergence Test already shows that the series diverges.

### Tests for Absolute Convergence

Consider the series
$$\sum_{n=1}^{+\infty} \frac{\sin n}{n^2} = \frac{\sin 1}{1} + \frac{\sin 2}{4} + \frac{\sin 3}{9} + \frac{\sin 4}{16} + \cdots$$
Observe that some terms are positive and some are negative. In fact, $\sin 1 > 0$, $\sin 2 > 0$, $\sin 3 > 0$, and $\sin 4 < 0$.

…

#### Theorem (Ratio Test).

Let $\sum_{n=1}^{+\infty} a_n$ be a series.

1. If $\lim_{n \to +\infty} \left|\frac{a_{n+1}}{a_n}\right| = L < 1$, then the series is absolutely convergent.
2. If $\lim_{n \to +\infty} \left|\frac{a_{n+1}}{a_n}\right| = L > 1$ for some $L \in R$ or $\lim_{n \to +\infty} \left|\frac{a_{n+1}}{a_n}\right| = +\infty$, then the series is divergent.

#### Warning.

If $\lim_{n \to +\infty} \left|\frac{a_{n+1}}{a_n}\right| = 1$, then the Ratio Test is inconclusive.

#### Theorem 2.5.12 (Root Test).

Let $\sum_{n=1}^{+\infty} a_n$ be a series.

1. If $\lim_{n \to +\infty} \sqrt[n]{|a_n|} = L < 1$, then the series is absolutely convergent.
2. If $\lim_{n \to +\infty} \sqrt[n]{|a_n|} = L > 1$ for some $L \in R$ or $\lim_{n \to +\infty} \sqrt[n]{|a_n|} = +\infty$, then the series is divergent.

#### Warning.

If $\lim_{n \to +\infty} \sqrt[n]{|a_n|} = 1$, then the Root Test is inconclusive.

### Power Series

#### Definition 2.6.1.

Let $a \in R$. A **power series** about $a$ (or centered at $a$) is an expression of the form
$$\sum_{n=0}^{+\infty} c_n (x-a)^n = c_0 + c_1(x-a) + c_2(x-a)^2 + c_3(x-a)^3 + \dots + c_n(x-a)^n + \dots$$
where $x$ is a variable and $c_n$ is a constant for all $n$.

#### Remark 2.6.2.

1. Convention: $(x-a)^0 := 1$ for all $x \in R$ (including $x = a$).
2. If there is a largest number $d$ such that $c_d \neq 0$, then the power series is a polynomial of degree $d$. Not all power series are polynomials.

#### Definition 2.6.4.

The **interval of convergence** of a power series in the variable $x$ is the set of all values of $x$ for which the resulting series converges.

#### Remark 2.6.5.

Every power series $\sum_{n=0}^{+\infty} c_n (x-a)^n$ is convergent when $x=a$. Indeed,
$$\sum_{n=0}^{+\infty} c_n (a-a)^n = c_0 + \sum_{n=1}^{+\infty} c_n (0)^n = c_0 + \sum_{n=1}^{+\infty} 0 = c_0.$$

#### Theorem 2.6.6.
For a given power series $\sum_{n=0}^{+\infty} c_n (x-a)^n$, exactly one of the following is true:

1. The series converges only at $x=a$.
2. The series converges absolutely for all $x \in R$.
3. There is a positive number $R$ such that the series converges absolutely if $|x-a| < R$ and diverges if $|x-a| > R$.

#### Remark 2.6.7.

1. By Theorem 2.6.6, the interval of convergence of a power series centered at $a$ has one of the following forms:
   * $\{a\}$;
   * $(-\infty, +\infty)$;
   * an interval with endpoints $a-R$ and $a+R$ for some real number $R > 0$.
2. We say that $\sum_{n=0}^{+\infty} c_n (x-a)^n$ has **radius of convergence**
   * $0$ if its interval of convergence is $\{a\}$;
   * $+\infty$ if its interval of convergence is $(-\infty, +\infty)$;
   * $R$ if its interval of convergence is an interval with endpoints $a-R$ and $a+R$ for some real number $R > 0$.
3. In many cases, the radius and interval of convergence can be obtained using the conditions of the Ratio Test. The endpoints $x = a \pm R$ must then be checked separately, since the Ratio Test is inconclusive there.

### Functions as Power Series

The sum of a power series $\sum_{n=0}^{+\infty} c_n (x-a)^n$ is a function $f$ given by
$$f(x) = \sum_{n=0}^{+\infty} c_n (x-a)^n$$
for all $x$ in the interval of convergence of the series. In this section and in the next two, we are concerned with the following problems:

1. Given a power series, find a non-series expression for its sum $f(x)$, together with the set of all numbers $x$ for which this is valid.
2. Given a non-series expression $g(x)$, find a power series whose sum is $g(x)$, together with the set of all numbers $x$ for which this is valid.

#### Theorem 2.7.3.

Assume that the power series $\sum_{n=0}^{+\infty} c_n (x-a)^n$ has radius of convergence $R$, where $R \in R^+$ or $R = +\infty$. Then the function $f$ defined by
$$f(x) = \sum_{n=0}^{+\infty} c_n (x-a)^n$$
is differentiable (and therefore continuous) on the interval $I$, where $I = (a-R, a+R)$ if $R \in R^+$ and $I = (-\infty, +\infty)$ if $R = +\infty$.
Furthermore,
$$f'(x) = \sum_{n=1}^{+\infty} n c_n (x-a)^{n-1}$$
and
$$\int f(x)\, dx = C + \sum_{n=0}^{+\infty} \frac{c_n}{n+1} (x-a)^{n+1},$$
where $C$ is an arbitrary constant. The series representations for $f'(x)$ and $\int f(x)\,dx$ both have radius of convergence $R$.

#### Warning.

It is not always true that a function is equal to its Taylor series (Definition 2.8.2) at all points in the interval of convergence of the series. For example, the function $f$ defined by $f(x) = e^{-1/x^2}$ for $x \neq 0$ and $f(0) = 0$ has $f^{(n)}(0) = 0$ for all $n$. Its Taylor series about $0$ is therefore identically $0$; this series converges for every $x$ but equals $f(x)$ only at $x = 0$.

### Taylor and Maclaurin Series

We continue our study of one of the problems posed in the previous section, namely, that of finding a power series representation for a given expression $g(x)$. Under certain conditions on $g(x)$, we can define a power series, called a **Taylor series**, associated with $g(x)$. Theorem 2.8.1 shows that if $g(x)$ has a power series representation, then this series is the Taylor series of $g(x)$. Theorems 2.9.3 and 2.9.4 give a method for determining whether $g(x)$ is equal to its Taylor series, and for which values of $x$ this representation is valid.

#### Theorem 2.8.1.

Assume that the function $g$ has a power series representation about the number $a \in \text{dom}(g)$. Then the derivatives of $g$ of all orders exist at $a$, and
$$g(x) = \sum_{n=0}^{+\infty} \frac{g^{(n)}(a)}{n!} (x-a)^n$$
for all $x$ in the interval of convergence of the series, where $g^{(0)} := g$.

#### Proof.

Let $a \in R$ and assume that $g(x) = \sum_{n=0}^{+\infty} c_n (x-a)^n$, where $c_i \in R$ for all $i$. By Theorem 2.7.3,
$$g'(x) = \sum_{n=1}^{+\infty} n c_n (x-a)^{n-1}.$$
Applying Theorem 2.7.3 repeatedly (by induction on $k$), we find that for any $k \in Z^+$,
$$g^{(k)}(x) = \sum_{n=k}^{+\infty} n(n-1)\cdots(n-k+1)\, c_n (x-a)^{n-k}.$$
At $x = a$, every term with $n > k$ vanishes, and the term with $n = k$ equals $k!\,c_k$. Thus, with $g^{(0)}(x) := g(x)$, for any nonnegative integer $k$ we have
$$g^{(k)}(a) = k!\, c_k.$$
Therefore $g^{(k)}(a)$ exists for all $k$, and
$$c_k = \frac{g^{(k)}(a)}{k!}.$$
The result follows.

#### Definition 2.8.2.

The series in Theorem 2.8.1 is called the **Taylor series** for $g$ about the number $a$.
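Theorem 2.8.1 identifies the coefficients of a power series representation as $c_n = g^{(n)}(a)/n!$. As a numerical sanity check, the Python sketch below evaluates partial sums of the Taylor series of $g(x) = \sin x$ about a center $a$. It relies on the fact that the derivatives of $\sin$ cycle through $\sin, \cos, -\sin, -\cos$.

The helper names `taylor_partial_sum` and `sin_derivatives` are hypothetical, chosen only for this illustration. The choice of $\sin$, $a = 0.5$, and $x = 1$ is likewise just an example.

```python
import math


def taylor_partial_sum(derivs_at_a: list[float], a: float, x: float) -> float:
    """Return sum_{n=0}^{k} g^(n)(a) / n! * (x - a)^n,
    where derivs_at_a = [g(a), g'(a), ..., g^(k)(a)]."""
    return sum(d / math.factorial(n) * (x - a) ** n
               for n, d in enumerate(derivs_at_a))


def sin_derivatives(a: float, k: int) -> list[float]:
    """Return the values sin^(n)(a) for n = 0, ..., k.

    The derivatives of sin repeat with period 4: sin, cos, -sin, -cos.
    """
    cycle = [math.sin(a), math.cos(a), -math.sin(a), -math.cos(a)]
    return [cycle[n % 4] for n in range(k + 1)]


a, x = 0.5, 1.0
for k in (1, 3, 5, 9):
    approx = taylor_partial_sum(sin_derivatives(a, k), a, x)
    print(f"k={k}: partial sum = {approx:.10f}, sin(x) = {math.sin(x):.10f}")
```

The partial sums approach $\sin 1$ as $k$ grows. Whether a given function equals its Taylor series in general is the subject of the next section.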
The Taylor series for $g$ about $0$ is called the **Maclaurin series** for $g$.

### Approximations Using Taylor Polynomials

In this section we consider further the problem of whether a given function is equal to its Taylor series. For functions which are indeed equal to their Taylor series, we estimate function values using partial sums of the Taylor series and determine the accuracy of these approximations.

#### Definition 2.9.1.

Let $f$ be a function whose first $k$ derivatives exist at $a$. The $k$th-degree **Taylor polynomial** of $f$ about $a$ is the polynomial $P_k$ defined by
$$P_k(x) = \sum_{n=0}^{k} \frac{f^{(n)}(a)}{n!} (x-a)^n.$$
The **remainder** is the function $R_k$ given by
$$R_k(x) = f(x) - P_k(x).$$

#### Remark 2.9.2.

A Taylor polynomial of $f$ is a partial sum of the Taylor series of $f$. Hence, for each $x$ at which the Taylor series converges,
$$\sum_{n=0}^{+\infty} \frac{f^{(n)}(a)}{n!} (x-a)^n = \lim_{k \to +\infty} P_k(x). \tag{2.1}$$

#### Theorem 2.9.3.

Let $f$ be a function whose derivatives of all orders exist at $a$, and let $P_k(x)$ and $R_k(x)$ denote the $k$th-degree Taylor polynomial of $f$ about $a$ and the corresponding remainder. Then $f(x)$ is equal to its Taylor series about $a$ if and only if $\lim_{k \to +\infty} R_k(x) = 0$.

#### Proof.

By (2.1) and the definition of the remainder function,
$$\sum_{n=0}^{+\infty} \frac{f^{(n)}(a)}{n!} (x-a)^n = \lim_{k \to +\infty} P_k(x) = \lim_{k \to +\infty} (f(x) - R_k(x)) = f(x) - \lim_{k \to +\infty} R_k(x),$$
where the series converges exactly when $\lim_{k \to +\infty} R_k(x)$ exists. It follows immediately that $f(x) = \sum_{n=0}^{+\infty} \frac{f^{(n)}(a)}{n!} (x-a)^n$ exactly when $\lim_{k \to +\infty} R_k(x) = 0$.

It is often inconvenient to compute $\lim_{k \to +\infty} R_k(x)$ directly from its definition. Theorem 2.9.4 and its immediate consequence, Corollary 2.9.7, are among several results that provide indirect methods of determining whether this limit is equal to zero.

#### Theorem 2.9.4 (Lagrange form of $R_k$).

Let $f$ be a function whose first $k+1$ derivatives exist in the open interval $I$ between $x$ and $a$, with $f^{(k)}$ continuous on the closed interval between $x$ and $a$.
Then for some $z \in I$ (which may depend on $k$ and $x$),
$$R_k(x) = \frac{f^{(k+1)}(z)}{(k+1)!} (x-a)^{k+1}. \tag{2.2}$$

#### Remark 2.9.5.

Theorem 2.9.4 is a generalization of the Mean-Value Theorem for functions, which was learned in Math 21. Recall that if $f$ is a function that is continuous on a closed interval $[a, b]$ and differentiable on the open interval $(a, b)$, then there exists a number $z \in (a, b)$ satisfying
$$f'(z) = \frac{f(b)-f(a)}{b-a}.$$
This can be written as
$$f(b) - f(a) = \frac{f'(z)}{1!} (b-a).$$
Observe that the left side is equal to $f(b) - P_0(b)$, since $P_0(b) = f(a)$. Thus the right side is $R_0(b)$, which is the special case $k = 0$ of (2.2).

The following is a frequently useful fact. Since $\sum_{k=0}^{+\infty} \frac{(x-a)^k}{k!}$ is absolutely convergent for all $a, x \in R$ (by the Ratio Test), the Divergence Test gives
$$\lim_{k \to +\infty} \frac{(x-a)^k}{k!} = 0 \text{ for any } a, x \in R.$$

#### Corollary 2.9.7.

Assume the hypotheses of Theorem 2.9.4. If there is a constant $M$ satisfying $|f^{(k+1)}(z)| \leq M$ for all $z \in I$, then
$$0 \leq |R_k(x)| \leq \frac{M}{(k+1)!} |x-a|^{k+1}.$$

#### Theorem 2.9.12.

Given a convergent alternating series $\sum_{n=1}^{+\infty} a_n$, where $a_n = (-1)^{n+1} b_n$ or $a_n = (-1)^n b_n$, with $b_n > 0$ and $b_{n+1}