Q-Learning Cheatsheet

Cheatsheet Content

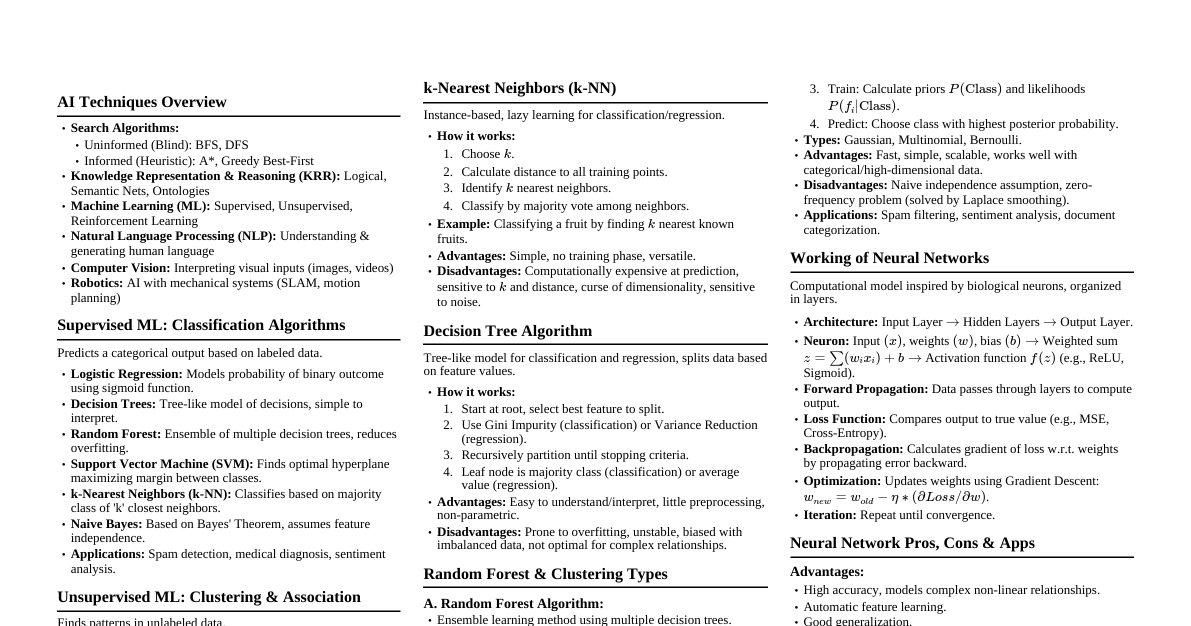

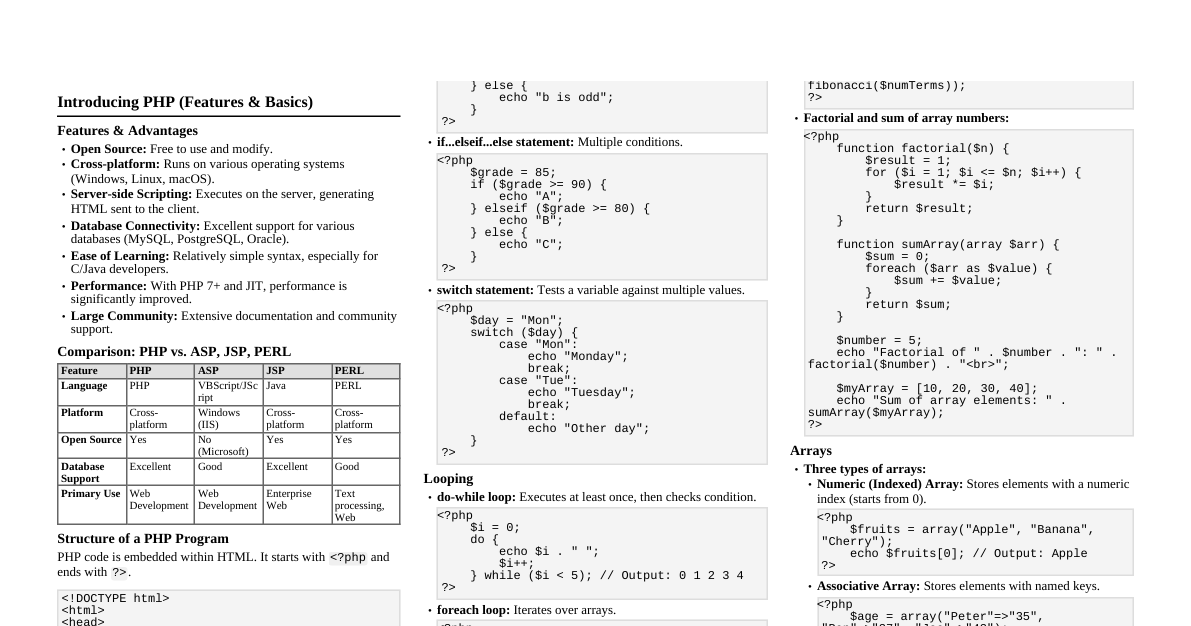

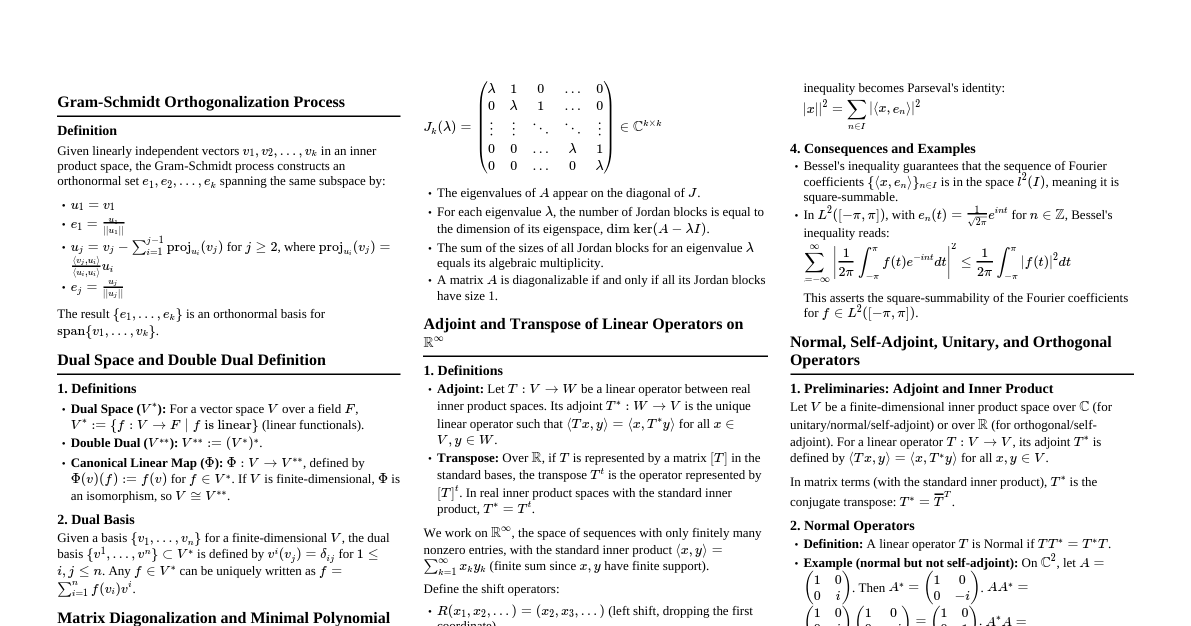

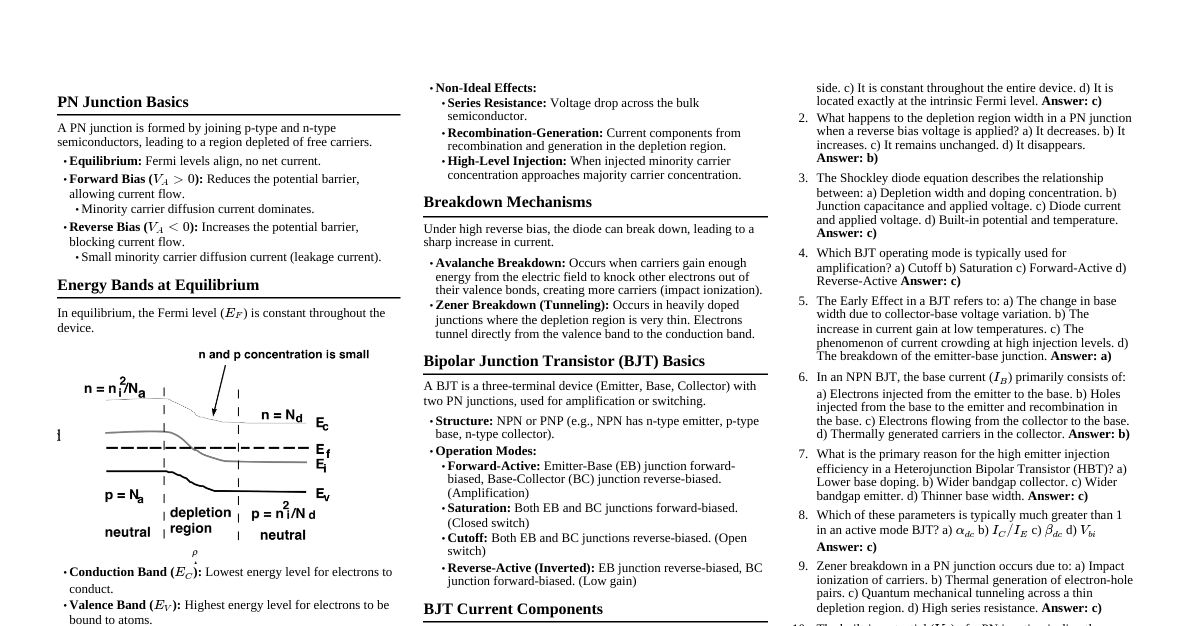

### Introduction to Q-Learning - **Reinforcement Learning (RL):** An agent learns to make decisions by performing actions in an environment to maximize a cumulative reward. - **Model-Free RL:** The agent does not know the environment's transition probabilities or reward function. - **Off-Policy Learning:** Learns the optimal policy regardless of the agent's exploration policy. - **Goal:** Find an optimal policy $\pi^*$ that tells the agent which action to take in each state to maximize long-term rewards. ### Key Components - **Agent:** The learner or decision-maker. - **Environment:** The world the agent interacts with. - **State ($S$):** A complete description of the environment at a specific time. - **Action ($A$):** A move made by the agent in a given state. - **Reward ($R$):** A scalar feedback signal from the environment indicating the desirability of an action. - **Policy ($\pi$):** A mapping from states to actions, $\pi: S \to A$. - **Value Function ($V(s)$):** Expected cumulative reward starting from state $s$ and following policy $\pi$. - **Action-Value Function ($Q(s, a)$):** Expected cumulative reward starting from state $s$, taking action $a$, and then following policy $\pi$. Q-Learning directly estimates $Q(s, a)$. - **Discount Factor ($\gamma$):** A value between 0 and 1 that discounts future rewards. Higher $\gamma$ values mean future rewards are considered more important. - $\gamma \in [0, 1]$ ### Q-Learning Algorithm 1. **Initialize** the Q-table (Q-values for all state-action pairs) arbitrarily, often to zeros. 2. **For each episode:** a. **Initialize** the starting state $S$. b. **Repeat** for each step of the episode (until $S$ is terminal): i. **Choose an action $A$** from state $S$ using an $\epsilon$-greedy policy (or similar exploration strategy). ii. **Take action $A$**, observe the new state $S'$ and reward $R$. iii. **Update the Q-value** for the state-action pair $(S, A)$ using the Bellman equation: $$Q(S, A) \leftarrow Q(S, A) + \alpha [R + \gamma \max_{a'} Q(S', a') - Q(S, A)]$$ Where: - $Q(S, A)$: Current Q-value for state $S$ and action $A$. - $\alpha$: Learning rate ($0 ### $\epsilon$-Greedy Policy - A common strategy for balancing **exploration** (trying new actions) and **exploitation** (choosing known best actions). - **With probability $\epsilon$**: Choose a random action $A$. - **With probability $1 - \epsilon$**: Choose the action $A$ with the highest Q-value for the current state $S$ (greedy action). - **$\epsilon$ annealing:** It's common to start with a high $\epsilon$ (e.g., 1.0) to encourage exploration and gradually decrease it over time (e.g., to 0.01) to favor exploitation as the agent learns. ### Q-Table - A table where rows represent states and columns represent actions. - Each cell $Q(s, a)$ stores the estimated maximum discounted future reward for taking action $a$ in state $s$. - **Example (Grid World):** | State \ Action | Up | Down | Left | Right | |--------------|----|------|------|-------| | S1 | 0 | 0 | 0 | 0 | | S2 | 0 | 0 | 0 | 0 | | ... | ...| ... | ... | ... | - **Limitations:** Becomes impractical for environments with very large or continuous state/action spaces (curse of dimensionality). In such cases, Function Approximation (e.g., Deep Q-Networks) is used. ### Hyperparameters - **Learning Rate ($\alpha$):** - High $\alpha$: Learns quickly but might oscillate or fail to converge. - Low $\alpha$: Learns slowly but might be more stable. - Typical values: $0.01$ to $0.1$. - **Discount Factor ($\gamma$):** - $\gamma \approx 0$: Agent focuses only on immediate rewards. - $\gamma \approx 1$: Agent considers future rewards heavily, tending towards long-term planning. - Typical values: $0.8$ to $0.99$. - **Exploration Rate ($\epsilon$):** - High $\epsilon$: More exploration, slower convergence to optimal policy but better for complex environments. - Low $\epsilon$: More exploitation, faster convergence if initial estimates are good, but might get stuck in local optima. - Typically starts high (e.g., $1.0$) and decays to a small value (e.g., $0.01$). ### Advantages & Disadvantages #### Advantages - **Model-Free:** Does not require knowledge of the environment's dynamics. - **Off-Policy:** Can learn the optimal policy while following a sub-optimal exploration policy. This allows for flexible exploration strategies. - **Simplicity:** Relatively easy to understand and implement for discrete state/action spaces. - **Guaranteed Convergence:** Under certain conditions (e.g., finite states/actions, sufficient exploration, decaying learning rate), Q-Learning is guaranteed to converge to the optimal policy. #### Disadvantages - **State-Action Space Explosion:** Infeasible for large or continuous state and/or action spaces due to the need for a Q-table. - **Exploration vs. Exploitation:** Finding the right balance with $\epsilon$-greedy can be challenging. - **Sample Efficiency:** Can require many episodes/interactions to converge, especially in complex environments. - **Local Optima:** Without sufficient exploration, it can get stuck in sub-optimal policies. ### Deep Q-Networks (DQN) - **Solution for large state spaces:** Replaces the Q-table with a neural network (Deep Neural Network). - The neural network takes the state $S$ as input and outputs the Q-values for all possible actions in that state. - **Experience Replay:** Stores past experiences $(S, A, R, S')$ in a replay buffer and samples mini-batches from it to train the network. This breaks correlations in the data and improves stability. - **Target Network:** Uses a separate, delayed-update target network to calculate the target Q-values ($R + \gamma \max_{a'} Q_{target}(S', a')$). This also helps stabilize training by providing a more consistent target. - **Loss Function:** Mean Squared Error (MSE) between the current Q-value prediction and the target Q-value: $$L = (Q(S, A; \theta) - (R + \gamma \max_{a'} Q_{target}(S', a'; \theta^-)))^2$$ Where $\theta$ are the parameters of the online network and $\theta^-$ are the parameters of the target network. ### Pseudocode Example ```python Initialize Q-table with zeros Set hyperparameters: alpha, gamma, epsilon, num_episodes For episode from 1 to num_episodes: Initialize state S While S is not terminal: # Choose action A using epsilon-greedy policy If random()