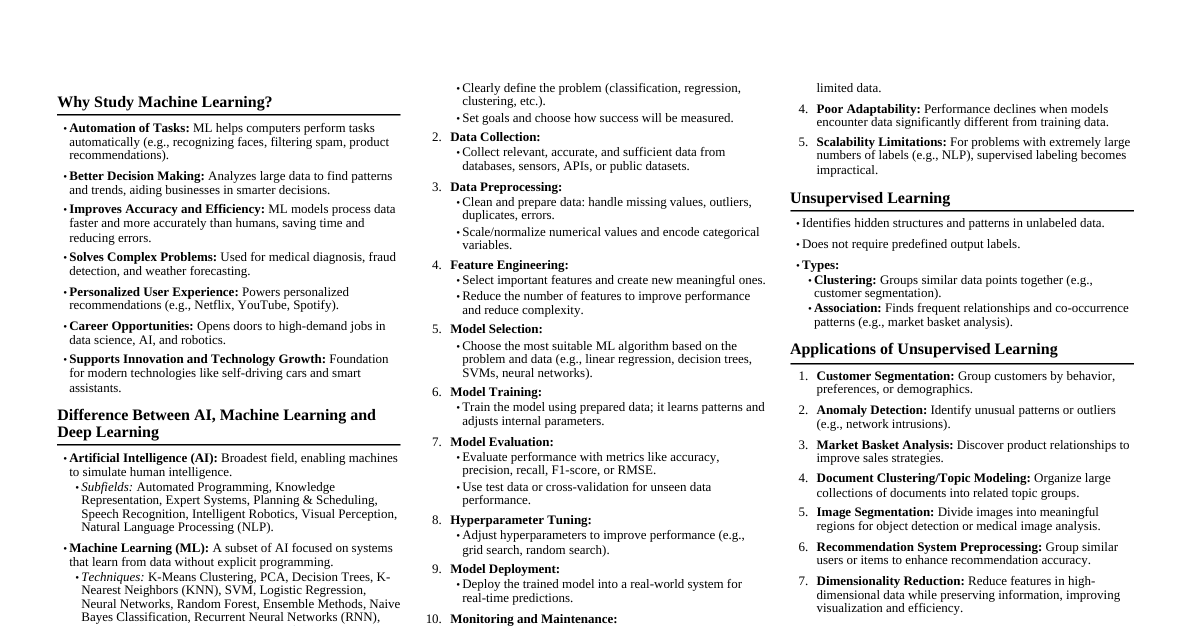

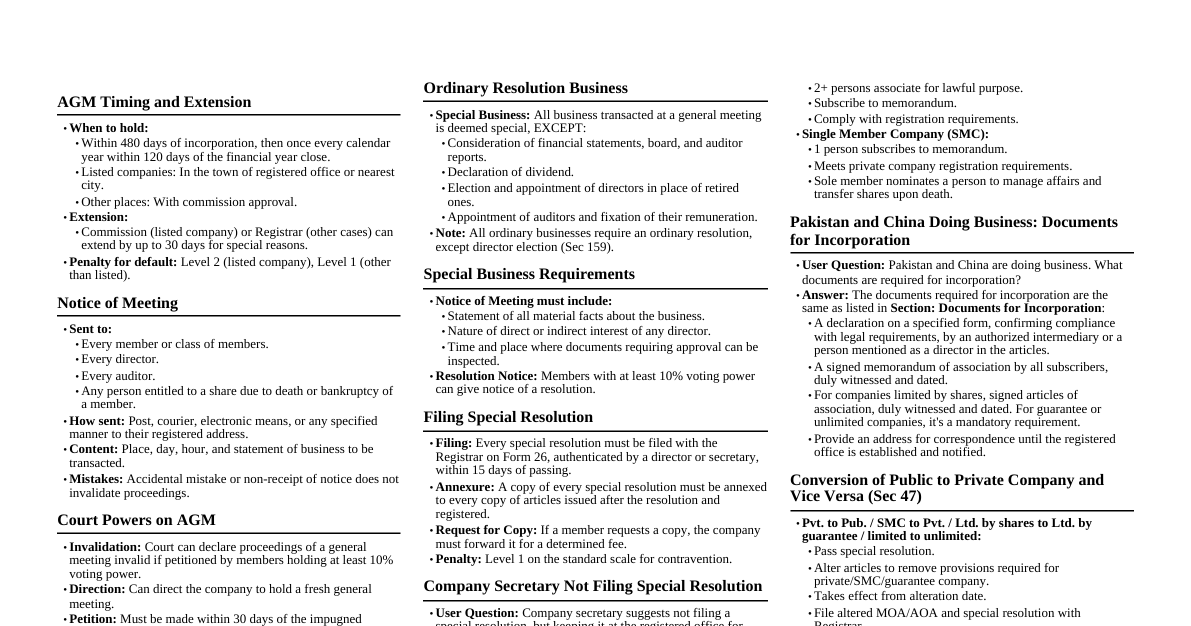

Machine Learning Q&A Bank

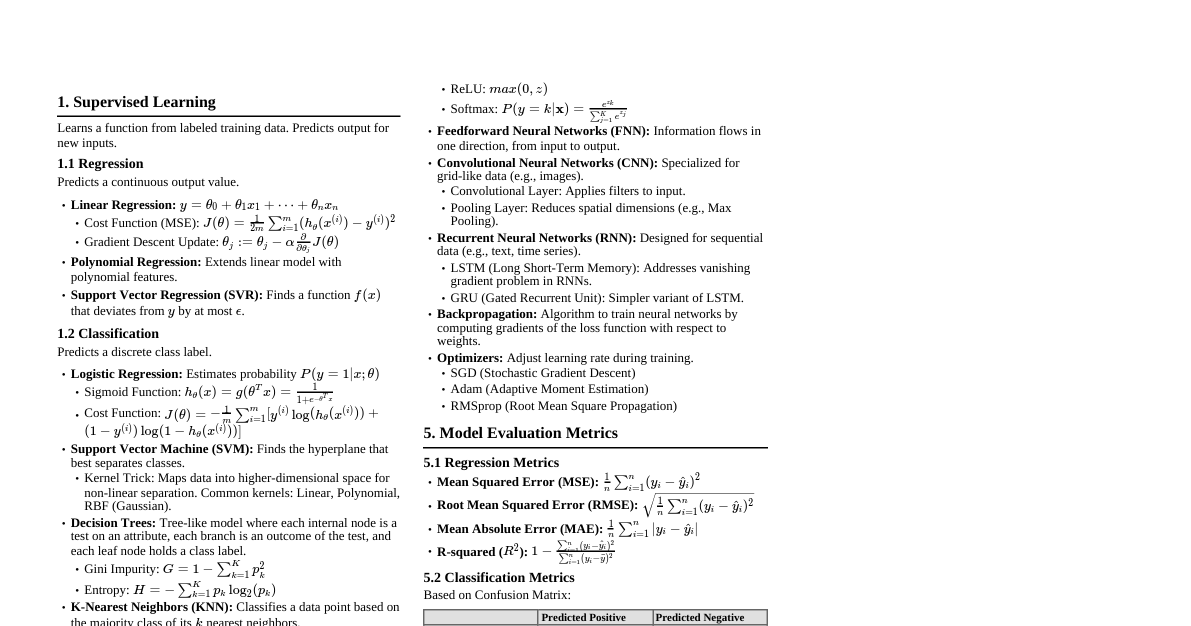

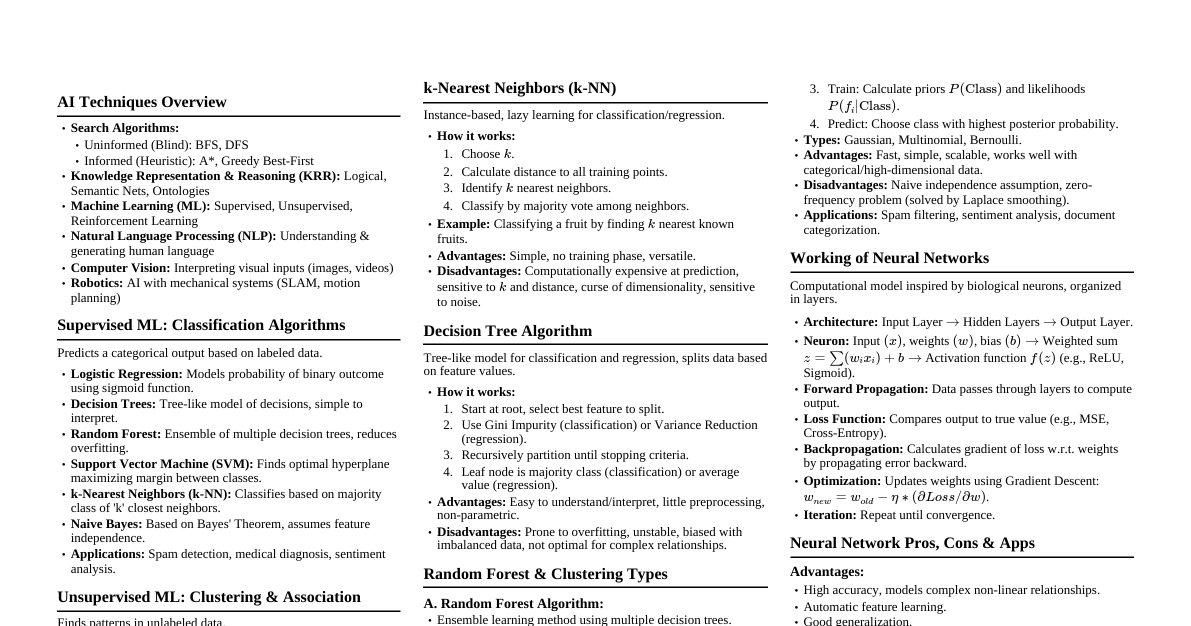

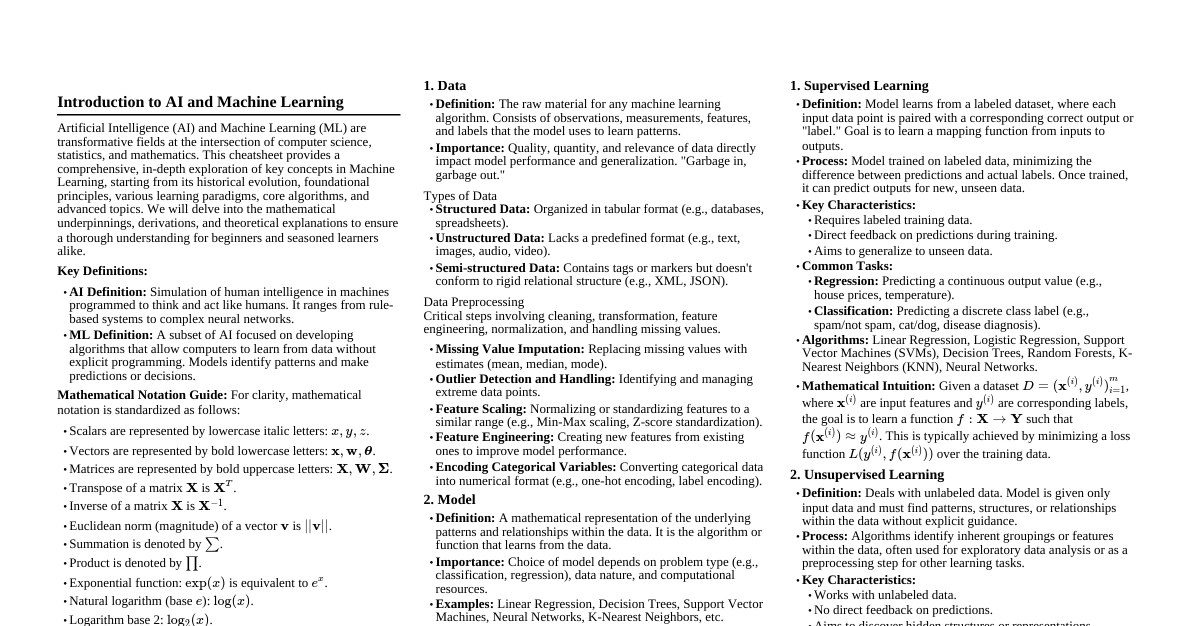

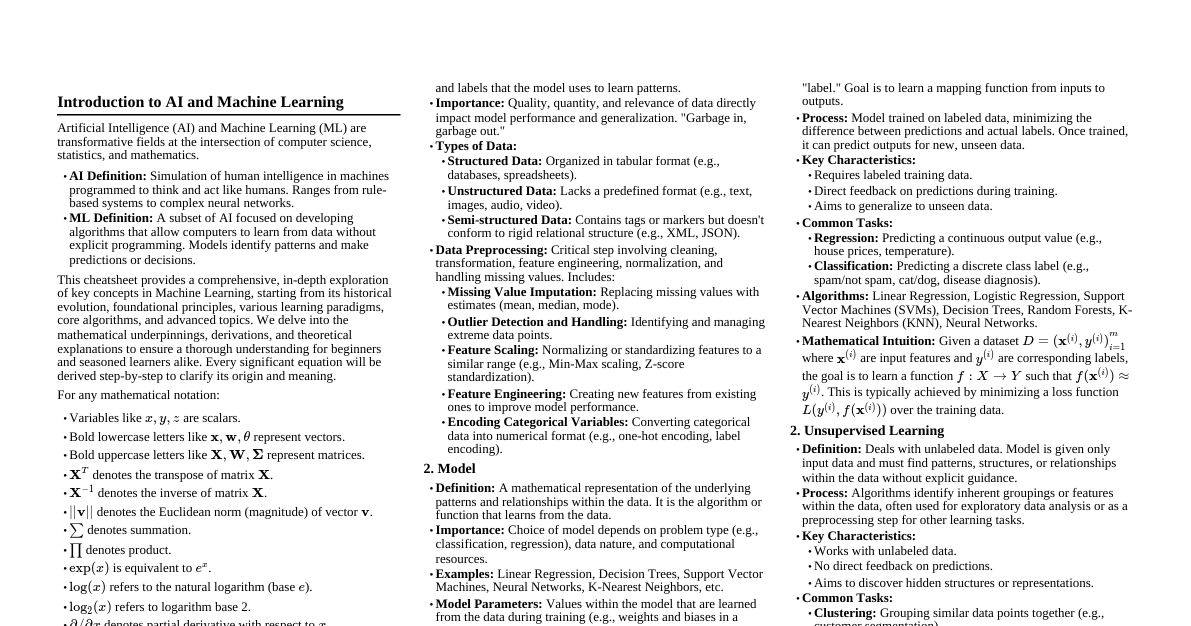

Cheatsheet Content