# Machine Learning Fundamentals

Cheatsheet Content

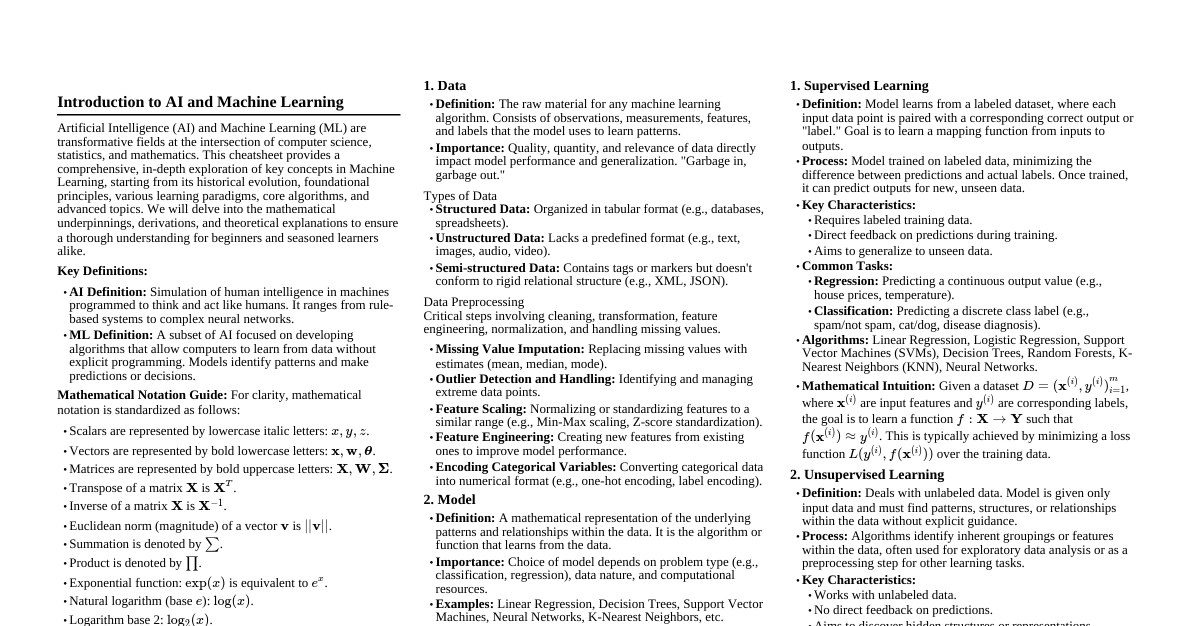

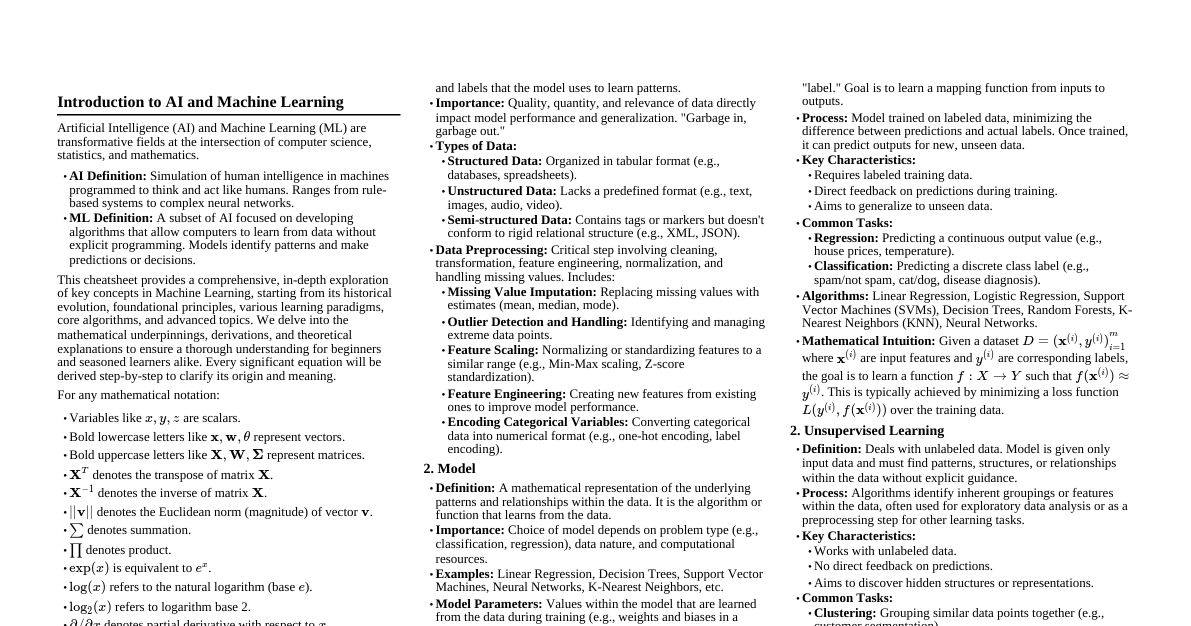

### Why Study Machine Learning?

- **Automation of Tasks:** ML lets computers perform tasks automatically (e.g., recognizing faces, filtering spam, recommending products).
- **Better Decision Making:** Analyzes large datasets to find patterns and trends, helping businesses make better-informed decisions.
- **Improves Accuracy and Efficiency:** On well-defined tasks, ML models can process large volumes of data faster than humans and often with fewer errors, saving time.
- **Solves Complex Problems:** Used for medical diagnosis, fraud detection, and weather forecasting.
- **Personalized User Experience:** Powers personalized recommendations (e.g., Netflix, YouTube, Spotify).
- **Career Opportunities:** Opens doors to high-demand jobs in data science, AI, and robotics.
- **Supports Innovation and Technology Growth:** Foundation for modern technologies like self-driving cars and smart assistants.

### Difference Between AI, Machine Learning and Deep Learning

- **Artificial Intelligence (AI):** The broadest field, concerned with enabling machines to simulate human intelligence.
  - *Subfields:* Automated Programming, Knowledge Representation, Expert Systems, Planning & Scheduling, Speech Recognition, Intelligent Robotics, Visual Perception, Natural Language Processing (NLP).
- **Machine Learning (ML):** A subset of AI focused on systems that learn from data rather than being explicitly programmed for each task.
  - *Techniques:* K-Means Clustering, PCA, Decision Trees, K-Nearest Neighbors (KNN), SVM, Logistic Regression, Neural Networks, Random Forest, Ensemble Methods, Naive Bayes Classification, Recurrent Neural Networks (RNN), Autoencoders, Anomaly Detection, Hopfield Networks, Reinforcement Learning, Modular Neural Networks, Adaptive Resonance Theory (ART).
- **Deep Learning (DL):** A subset of ML that uses neural networks with many layers (deep neural networks) to learn complex patterns.
  - *Techniques:* Multilayer Perceptrons (MLP), Boltzmann Machines, Convolutional Neural Networks (CNN), Long Short-Term Memory (LSTM), Recurrent Neural Networks (RNN), Deep Reinforcement Learning, Transformer Models (BERT, GPT), Generative Adversarial Networks (GAN), Deep Autoencoders, Deep Belief Networks.

### What is Machine Learning?

- **Definition:** "A computer program is said to learn from experience **E** with respect to some class of tasks **T** and performance measure **P**, if its performance at tasks in **T**, as measured by **P**, improves with experience **E**." (T. M. Mitchell, 1997)
- **Example (Email Spam Classification):**
  - **Task (T):** Classifying emails as spam or not spam.
  - **Experience (E):** Previously labeled emails.
  - **Performance (P):** Classification accuracy.
  - *As the model sees more labeled emails, its accuracy improves.*

### History of Machine Learning

- **Origin of the Term (1959):** Arthur Samuel introduced and popularized the term "Machine Learning."
  - He described it as a field that allows computers to learn and improve their performance without being explicitly programmed.
- **Early Success Example:** Samuel's checkers-playing program, one of the first self-learning systems.
- **Developments in the 1980s:**
  - Renewed interest in neural network methods (backpropagation was popularized in 1986).
  - Limited performance due to weak hardware and insufficient data.
  - Slow adoption due to computational constraints.
  - Research funding and interest declined during the **AI winters**, commonly dated to the mid-1970s and to the late 1980s through the early 1990s.

### Machine Learning Today

- **Progress in the 2000s:**
  - Growth of algorithms like Support Vector Machines (SVMs) and decision trees.
  - Better handling of large datasets.
  - Improved computational efficiency.
  - Expansion of big data technologies.
  - Revival and advancement of deep learning techniques.
- **Modern Applications:**
  - Image and speech recognition.
  - Natural language processing (NLP).
  - Autonomous vehicles.
  - Healthcare and personalized treatment systems.
- **Popular AI Systems:**
  - DeepFace for facial recognition.
  - AlphaGo (defeated world champions in Go).
  - ChatGPT and other conversational AI tools.
- **Key Contributors:** Yann LeCun, Geoffrey Hinton, and Yoshua Bengio (often called the "Godfathers of AI") played major roles in advancing deep learning.

### Standard Machine Learning Pipeline

1. **Problem Definition:**
   * Clearly define the problem (classification, regression, clustering, etc.).
   * Set goals and choose how success will be measured.
2. **Data Collection:**
   * Collect relevant, accurate, and sufficient data from databases, sensors, APIs, or public datasets.
3. **Data Preprocessing:**
   * Clean and prepare the data: handle missing values, outliers, duplicates, and errors.
   * Scale or normalize numerical values and encode categorical variables.
4. **Feature Engineering:**
   * Select important features and create new meaningful ones.
   * Reduce the number of features to improve performance and reduce complexity.
5. **Model Selection:**
   * Choose the most suitable ML algorithm based on the problem and data (e.g., linear regression, decision trees, SVMs, neural networks).
6. **Model Training:**
   * Train the model on the prepared data; it learns patterns by adjusting its internal parameters.
7. **Model Evaluation:**
   * Evaluate performance with metrics like accuracy, precision, recall, F1-score, or RMSE.
   * Use held-out test data or cross-validation to estimate performance on unseen data.
8. **Hyperparameter Tuning:**
   * Adjust hyperparameters to improve performance (e.g., grid search, random search).
9. **Model Deployment:**
   * Deploy the trained model into a real-world system for real-time predictions.
10. **Monitoring and Maintenance:**
    * Continuously monitor performance. Update or retrain when data patterns change.

### Some Applications of Machine Learning

- **Sentiment Analysis:** Detect emotions or opinions in text data.
- **Fraud Detection:** Analyze transaction patterns to identify unusual behavior.
- **Speech Recognition:** Convert spoken language to text.
- **Medical Diagnosis:** Assist healthcare by analyzing medical data for disease detection.
- **Recommendation Systems:** Provide personalized suggestions based on user interests.

### Challenges in Machine Learning

- **Limited or Skewed Data:** Small or biased datasets lead to unreliable or unfair predictions.
- **Overfitting Issues:** Complex models can memorize training data, reducing generalization.
- **Feature Design Difficulty:** Identifying and creating meaningful features requires significant effort and domain knowledge.
- **Model and Parameter Selection:** Finding the best algorithm and tuning its parameters involves repeated testing.
- **Lack of Transparency:** Many advanced models are "black boxes," making their decisions hard to explain.

### Machine Learning Paradigms

1. Supervised Learning
2. Unsupervised Learning
3. Semi-Supervised Learning
4. Reinforcement Learning

### Supervised Learning

- Learns a mapping between input data and known output labels.
- Uses labeled training data.
- **Types:**
  - **Classification:** Predicts discrete or categorical classes (e.g., spam vs. non-spam emails).
  - **Regression:** Predicts continuous numerical values (e.g., house price prediction).

### Some Applications of Supervised Learning

- **Spam Email Detection:** Classifying emails as spam or not spam.
- **Image Recognition:** Identifying objects, faces, or handwriting.
- **Medical Diagnosis:** Predicting diseases based on patient data.
- **Credit Scoring:** Assessing how likely a loan applicant is to default.
- **Speech Recognition:** Converting spoken words into text.
- **Price Prediction:** Predicting house prices, stock values, or product demand.

### Challenges in Supervised Learning

1. **Dependence on Labeled Data:** Creating large, accurate labeled datasets is time-consuming and costly.
2. **Data Bias Issues:** Biased or imbalanced training data can lead to biased models.
3. **Risk of Overfitting:** Models may memorize examples rather than learning generalizable patterns, especially with limited data.
4. **Poor Adaptability:** Performance declines when models encounter data that differs significantly from the training data.
5. **Scalability Limitations:** For problems with an extremely large number of possible labels (e.g., in NLP), manual labeling becomes impractical.

### Unsupervised Learning

- Identifies hidden structures and patterns in unlabeled data.
- Does not require predefined output labels.
- **Types:**
  - **Clustering:** Groups similar data points together (e.g., customer segmentation).
  - **Association:** Finds frequent relationships and co-occurrence patterns (e.g., market basket analysis).

### Applications of Unsupervised Learning

1. **Customer Segmentation:** Group customers by behavior, preferences, or demographics.
2. **Anomaly Detection:** Identify unusual patterns or outliers (e.g., network intrusions).
3. **Market Basket Analysis:** Discover product relationships to improve sales strategies.
4. **Document Clustering/Topic Modeling:** Organize large collections of documents into related topic groups.
5. **Image Segmentation:** Divide images into meaningful regions for object detection or medical image analysis.
6. **Recommendation System Preprocessing:** Group similar users or items to enhance recommendation accuracy.
7. **Dimensionality Reduction:** Reduce the number of features in high-dimensional data while preserving as much information as possible, improving visualization and efficiency.

### Challenges of Unsupervised Learning

1. **No Labeled Data for Guidance:** It is difficult to verify whether results are correct or meaningful.
2. **Difficulty in Evaluating Results:** There is no clear accuracy measure; evaluation often depends on visual inspection or domain knowledge.
3. **Choosing the Right Number of Clusters:** Selecting the optimal number of groups is challenging, and a wrong choice leads to poor grouping.
4. **Sensitivity to Data Quality:** Noise, missing values, or outliers significantly affect the output and can produce unreliable patterns.
5. **Interpretation of Patterns:** Understanding the real-world meaning of discovered patterns can be difficult and often requires expert interpretation.
6. **High Computational Cost:** Some algorithms require significant processing power and memory, especially with large datasets.
7. **Scalability Issues:** Processing slows down as data size increases, making real-time application harder.

### Semi-Supervised Learning

- Learns patterns using a combination of labeled and unlabeled data.
- Uses a small amount of labeled data to guide learning on a larger unlabeled dataset.
- **Methods:**
  1. **Self-Training:**
     - A model is trained on the labeled data.
     - It predicts labels for the unlabeled data.
     - High-confidence predictions are added to the training data.
     - The process is repeated to gradually improve the model.
  2. **Co-Training:**
     - Two models are trained using different sets of features from the same data.
     - Each model labels part of the unlabeled data for the other model.
     - The models learn from each other to improve accuracy.
  3. **Graph-Based Methods:**
     - Data points are nodes in a graph; connections represent similarity.
     - Labels spread from labeled points to nearby unlabeled points along these connections.

### When to Use Semi-Supervised Learning

- **When labeled data is limited or expensive:** Useful in domains like medical imaging where expert annotation is costly.
- **When large amounts of unlabeled data are available:** Leverages vast data from platforms like social media.
- **For unstructured data formats:** Works well with text, images, and audio, where manual labeling is difficult.
- **When some categories have very few labeled examples:** Helps improve learning for rare or underrepresented classes.
- **When traditional approaches fall short:** Beneficial when purely supervised or unsupervised methods are insufficient.
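
The Self-Training steps listed under Semi-Supervised Learning can be sketched in a few lines of plain Python. This is a minimal illustration, not a reference implementation: the nearest-centroid "model", the confidence formula, the `threshold` value, and the toy two-blob data are all assumptions made for the example.

```python
import math
import random

def fit_centroids(points, labels):
    """Train a toy model: the mean point of each class."""
    sums, counts = {}, {}
    for (x, y), lab in zip(points, labels):
        sx, sy = sums.get(lab, (0.0, 0.0))
        sums[lab] = (sx + x, sy + y)
        counts[lab] = counts.get(lab, 0) + 1
    return {lab: (sx / counts[lab], sy / counts[lab])
            for lab, (sx, sy) in sums.items()}

def predict_with_confidence(model, point):
    """Return (label, confidence); assumes at least two classes.

    Confidence is an illustrative margin score in [0.5, 1]: it grows
    as the point gets closer to one centroid than to the runner-up.
    """
    dists = sorted((math.dist(point, c), lab) for lab, c in model.items())
    (d1, lab1), (d2, _) = dists[0], dists[1]
    conf = d2 / (d1 + d2) if d1 + d2 > 0 else 1.0
    return lab1, conf

def self_train(points, labels, unlabeled, threshold=0.8, max_rounds=10):
    """Repeatedly pseudo-label confident points and retrain."""
    pts, labs, pool = list(points), list(labels), list(unlabeled)
    for _ in range(max_rounds):
        model = fit_centroids(pts, labs)           # 1. train on labeled data
        still_unlabeled, added = [], 0
        for p in pool:
            lab, conf = predict_with_confidence(model, p)  # 2. predict
            if conf >= threshold:                  # 3. keep confident ones
                pts.append(p)
                labs.append(lab)
                added += 1
            else:
                still_unlabeled.append(p)
        pool = still_unlabeled
        if added == 0:                             # 4. stop when nothing new
            break
    return fit_centroids(pts, labs), pool

# Toy data (assumed): four labeled points and 80 unlabeled points
# drawn around (0, 0) and (5, 5).
rng = random.Random(0)
labeled_points = [(0.5, -0.2), (-0.3, 0.4), (5.2, 4.7), (4.6, 5.3)]
labeled_labels = ["a", "a", "b", "b"]
unlabeled = [(rng.gauss(cx, 1.0), rng.gauss(cy, 1.0))
             for cx, cy in [(0.0, 0.0), (5.0, 5.0)] for _ in range(40)]

model, remaining = self_train(labeled_points, labeled_labels, unlabeled)
print(predict_with_confidence(model, (0.2, 0.1)))
print(f"{len(unlabeled) - len(remaining)} of {len(unlabeled)} points pseudo-labeled")
```

Raising `threshold` makes the loop more conservative: fewer pseudo-labels are added per round, which limits the damage from wrong predictions but also uses less of the unlabeled data.
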
### Applications of Semi-Supervised Learning

- **Face Recognition:** Improves accuracy by learning from small labeled sets and large unlabeled image sets.
- **Handwritten Character Recognition:** Adapts to different handwriting styles using probabilistic and generative models.
- **Speech Recognition:** Enhances transcription quality by training models with large volumes of unlabeled speech.
- **Cybersecurity:** Detects abnormal network activity and malicious behavior patterns.
- **Financial Services:** Applied in fraud prevention and risk assessment by analyzing transaction patterns.

### Advantages of Semi-Supervised Learning

- **Improved Generalization:** Models learn a more complete picture of the data's structure from combined labeled and unlabeled data.
- **Adaptability and Stability:** Can be effective across different data sources and can remain stable when data patterns change.
- **Lower Labeling Cost:** Reduces reliance on manual data annotation, saving time and resources.
- **Better Cluster Formation:** Enhances group separation using additional unlabeled information.
- **Support for Rare Categories:** Improves learning outcomes for classes with very few labeled examples.

### Disadvantages of Semi-Supervised Learning

- **Performance Evaluation Difficulty:** Measuring effectiveness is harder due to limited labeled data and inconsistent unlabeled data quality.
- **Higher Model Complexity:** Requires careful selection of algorithms and tuning of parameters.
- **Sensitivity to Noisy Data:** Poor-quality or irrelevant unlabeled data can reduce model accuracy.
- **Dependence on Data Assumptions:** Performance relies on assumptions such as similar points sharing a label and the data forming natural groups, which may not always hold.

### Reinforcement Learning

- **Definition:** A type of machine learning where an **agent** learns to make decisions by performing actions and learning from **experience**.
- The agent interacts with its **environment** and improves its behavior through trial and error.
- After each action, the agent receives feedback (**rewards** or **penalties**) and aims to maximize its long-term cumulative reward.
- **Key Components:**
  - **Agent:** The learner or decision-maker that chooses actions.
  - **Environment:** The external system or setting in which the agent operates.
  - **State:** The current condition or situation observed by the agent.
  - **Action:** A choice or move the agent can make; the set of all available actions is the action space.
  - **Reward:** The feedback given by the environment that indicates how good or bad an action was.
- **How Learning Happens (Example: Robot Learning to Walk):**
  1. The robot tries different movements (trial and error).
  2. Correct movements receive a positive reward.
  3. Incorrect movements or falls receive a penalty.
  4. Over time, the robot learns which movements yield more reward.
  5. Eventually, the robot learns to walk properly.

### Applications of Reinforcement Learning

- **Robotics:** Training robots for tasks in controlled environments, learning efficient movement patterns.
- **Gaming Systems:** Creating intelligent game-playing agents that develop advanced strategies (e.g., chess, Go).
- **Adaptive Learning Platforms:** Personalizing educational content by analyzing user behavior and learning progress.
- **Industrial Process Optimization:** Real-time monitoring and control of industrial systems to adjust operations for better performance.

### Advantages of Reinforcement Learning

- **Works Well in Dynamic Environments:** Adapts to changing conditions by continuously learning from real-time interactions.
- **No Need for Pre-Labeled Data:** Learns directly from experience, unlike supervised learning.
- **Effective for Sequential Decision Tasks:** Well suited to problems involving a series of dependent decisions (e.g., navigation, game playing).
- **Capable of Discovering New Strategies:** Can develop innovative solutions that are not obvious to humans.
- **Handles Uncertainty Efficiently:** Can perform well in environments with randomness and unpredictable outcomes.

### Disadvantages of Reinforcement Learning

- **High Computational Requirements:** Training RL models demands significant computing resources and large amounts of interaction data.
- **Sensitive to Reward Design:** Poorly designed reward functions can lead to incorrect or unwanted behavior.
- **Not Ideal for Simple Tasks:** Traditional ML or rule-based approaches may be faster and more efficient for basic problems.
- **Difficult to Interpret Decisions:** It is often unclear why an agent chooses a particular action.
- **Balancing Exploration and Exploitation Is Challenging:** Agents must balance trying new actions against using strategies already known to work.
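
The agent, state, action, and reward loop described under Reinforcement Learning, together with the exploration and exploitation balance, can be sketched with tabular Q-learning. Q-learning is one common RL algorithm chosen here for illustration; the five-cell corridor environment, the reward values, and the settings `alpha`, `gamma`, and `epsilon` are all assumptions made for this example.

```python
import random

N_STATES = 5            # corridor cells 0..4 (hypothetical environment)
GOAL = N_STATES - 1     # reaching the last cell ends the episode
ACTIONS = (-1, +1)      # move left or move right

def step(state, action):
    """Environment: apply an action, return (next_state, reward, done)."""
    nxt = min(max(state + action, 0), GOAL)
    if nxt == GOAL:
        return nxt, 1.0, True      # positive reward for reaching the goal
    return nxt, -0.01, False       # small penalty for every other move

def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    """Learn a table q[state][action_index] by trial and error."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            if rng.random() < epsilon:
                a = rng.randrange(len(ACTIONS))        # explore
            else:
                best = max(q[state])                   # exploit, ties at random
                a = rng.choice([i for i, v in enumerate(q[state]) if v == best])
            nxt, reward, done = step(state, ACTIONS[a])
            # Q-learning update: move the estimate toward
            # reward + discounted value of the best next action.
            target = reward + (0.0 if done else gamma * max(q[nxt]))
            q[state][a] += alpha * (target - q[state][a])
            state = nxt
    return q

q_table = train()
# Greedy policy: the highest-valued action in each non-goal state.
policy = [ACTIONS[max(range(len(ACTIONS)), key=lambda a: q_table[s][a])]
          for s in range(GOAL)]
print(policy)  # the agent should have learned to move right (+1) everywhere
```

With `epsilon=0.1` the agent takes a random action about one time in ten and otherwise follows its current best estimate; setting `epsilon` to 0 removes exploration entirely, and setting it to 1 makes the agent act at random.
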