Linear Algebra Cheatsheet

Cheatsheet Content

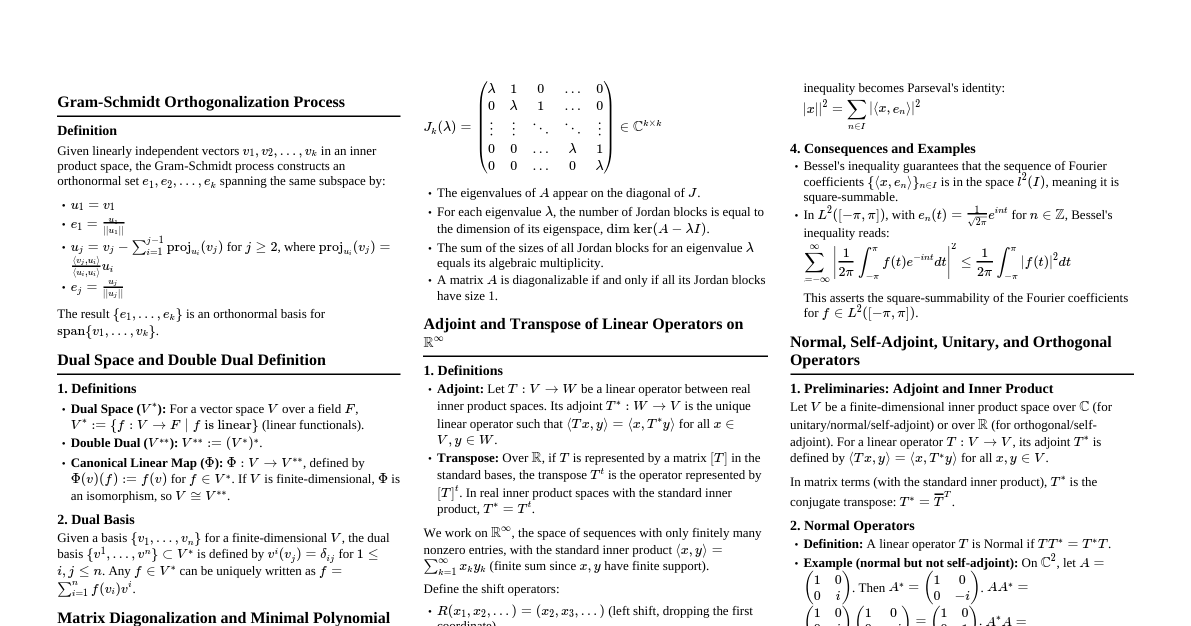

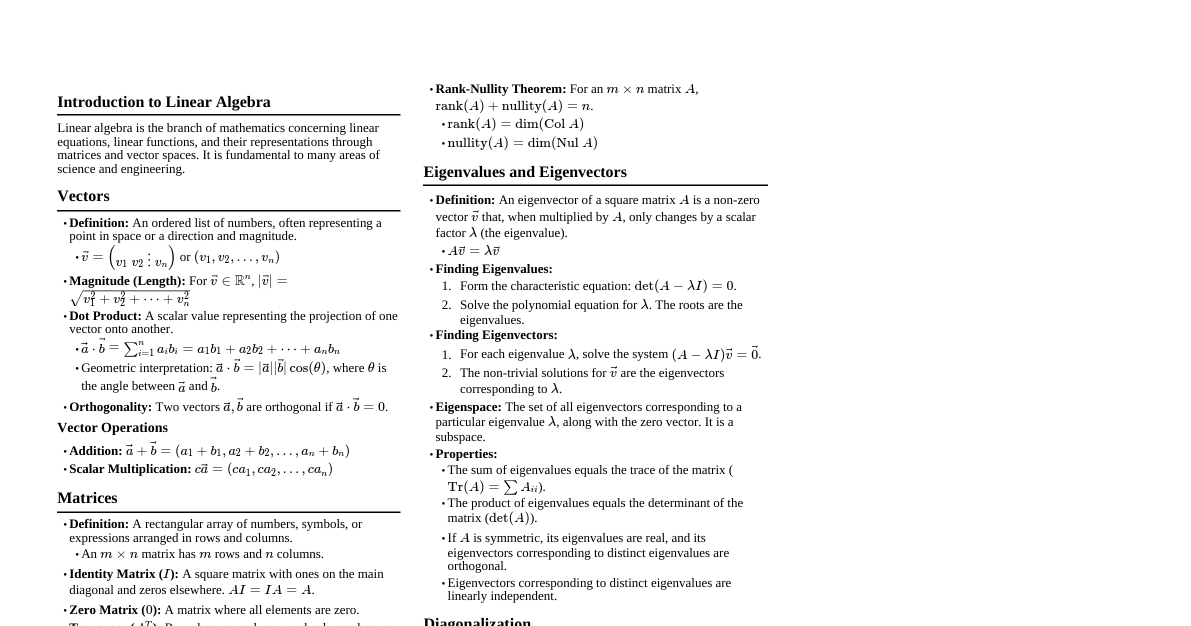

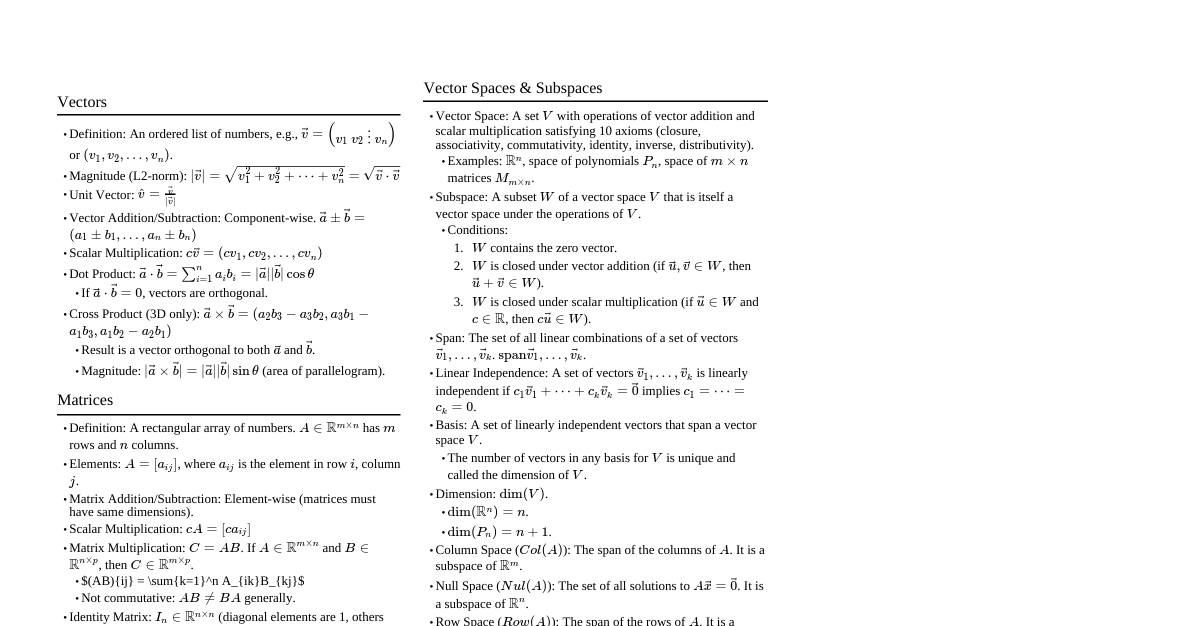

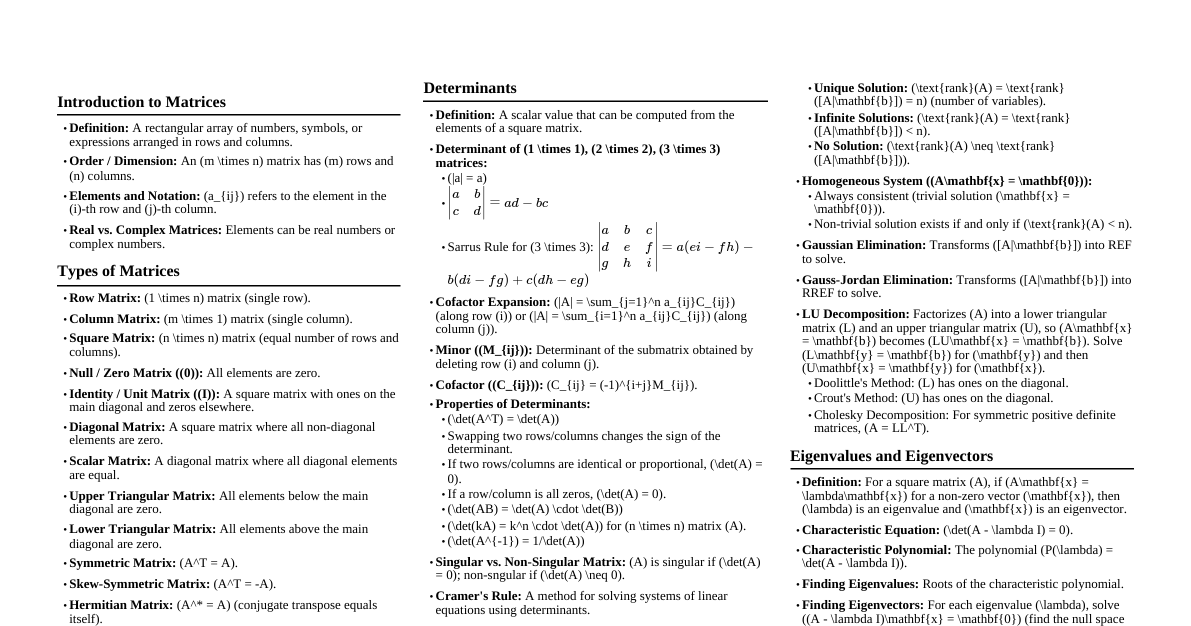

1. Ultra-compressed Overview SVD: $A=U\Sigma V^*$ for any matrix. Essential for low-rank approx, norms, rank, kernel. Eigenvalue Decomp: $A=X\Lambda X^{-1}$ for square matrices. Focus on real symmetric ($A=Q\Lambda Q^*$). Iterative Methods: Power, Inverse, Rayleigh Quotient, QR/Francis for eigenvalues. QR Decomp: $A=QR$ for any matrix. Used in LS, SVD, QR iteration. Conditioning: $\kappa(A)$ measures sensitivity. Key for stability, error bounds. 2. Key Definitions & Concepts 2.1. Singular Value Decomposition (SVD) Definition: For any $A \in \mathbb{R}^{m \times n}$ (or $\mathbb{C}^{m \times n}$), $A = U\Sigma V^*$. $U \in \mathbb{R}^{m \times m}$ (or $\mathbb{C}^{m \times m}$) is unitary (left singular vectors). $V \in \mathbb{R}^{n \times n}$ (or $\mathbb{C}^{n \times n}$) is unitary (right singular vectors). $\Sigma \in \mathbb{R}^{m \times n}$ is diagonal with non-negative singular values $\sigma_1 \ge \sigma_2 \ge \dots \ge \sigma_r > 0 = \sigma_{r+1} = \dots = \sigma_{\min(m,n)}$. $r = \text{rank}(A)$. Reduced SVD: $A = \tilde{U}\tilde{\Sigma}V^*$, where $\tilde{U} \in \mathbb{R}^{m \times n}$ has orthonormal columns, $\tilde{\Sigma} \in \mathbb{R}^{n \times n}$ is diagonal. Fundamental Subspaces: $\text{Range}(A^*) = \text{span}\{v_1, \dots, v_r\}$ (orthonormal basis). $\text{Range}(A) = \text{span}\{u_1, \dots, u_r\}$ (orthonormal basis). $\text{Null}(A) = \text{span}\{v_{r+1}, \dots, v_n\}$ (orthonormal basis). $\text{Null}(A^*) = \text{span}\{u_{r+1}, \dots, u_m\}$ (orthonormal basis). 2.2. Eigenvalue Decomposition Eigenvalue Problem: Find $(\lambda, x)$ s.t. $Ax = \lambda x$. $\lambda$: eigenvalue; $x$: eigenvector ($x \neq 0$). Eigenvalue Decomposition: $A = X\Lambda X^{-1}$, where $X$ columns are eigenvectors, $\Lambda$ is diagonal (eigenvalues). Eigenspace $E_\lambda$: $\text{Ker}(A - \lambda I)$ (eigenvectors for $\lambda$ plus zero vector). Invariant Subspace: $A E_\lambda \subseteq E_\lambda$. Algebraic Multiplicity ($m_A(\lambda)$): Multiplicity of $\lambda$ as a root of characteristic polynomial $p_A(z) = \det(zI - A)$. Geometric Multiplicity ($m_G(\lambda)$): $\dim(\text{Ker}(A - \lambda I))$. Defective Eigenvalue: $m_A(\lambda) > m_G(\lambda)$. A matrix is defective if it has any defective eigenvalues. Diagonalizable: A matrix is diagonalizable iff it is non-defective. Normal Matrix: $A^*A = AA^*$. Unitary/Orthogonal diagonalizable: $A=Q\Lambda Q^*$. Symmetric Matrix: $A=A^*$. Real symmetric matrices have real eigenvalues and orthonormal eigenvectors. $A=Q\Lambda Q^*$ with $\Lambda \in \mathbb{R}^{n \times n}$. 2.3. Matrix Norms Induced $2$-norm (Spectral Norm): $\Vert A \Vert_2 = \sup_{v \neq 0} \frac{\Vert Av \Vert_2}{\Vert v \Vert_2} = \sigma_1$. Frobenius Norm: $\Vert A \Vert_F = \sqrt{\sum_{i,j} |a_{ij}|^2} = \sqrt{\text{trace}(A^*A)} = \sqrt{\sum_j \sigma_j^2}$. Nuclear Norm ($S_1$-norm): $\Vert A \Vert_* = \sum_j \sigma_j$. 2.4. Conditioning Condition Number: $\kappa_2(A) = \frac{\sigma_1}{\sigma_r}$ (for $\text{rank}(A)=r$). If $A$ is square nonsingular, $\kappa_2(A) = \Vert A \Vert_2 \Vert A^{-1} \Vert_2 = \frac{\sigma_1}{\sigma_n}$. Backward Error $\eta(y)$: Smallest $\Delta x$ s.t. $y=f(x+\Delta x)$, $\Vert \Delta x \Vert \le \epsilon \Vert x \Vert$. Rule of thumb: $\text{forward error} \le \text{condition number} \times \text{backward error}$. 3. Essential Formulas & Rules SVD: $A = U\Sigma V^*$. $A^*A = V\Sigma^2 V^*$, $AA^* = U\Sigma^2 U^*$. Low-Rank Approximation (Eckart-Young): $A_k = \sum_{j=1}^k \sigma_j u_j v_j^*$. $\min_{\text{rank}(D)=k} \Vert A - D \Vert_2 = \Vert A - A_k \Vert_2 = \sigma_{k+1}$. $\Vert A - A_k \Vert_F = \sqrt{\sum_{j=k+1}^r \sigma_j^2}$. Rayleigh Quotient: $r(x) = \frac{x^*Ax}{x^*x}$. If $x$ is eigenvector, $r(x)=\lambda$. $\nabla r(x) = 0$ for eigenvectors. QR Iteration: $A_0=A$. For $k=1, \dots$: $A_{k-1} = Q_k R_k$, $A_k = R_k Q_k$. With shift $v_k$: $A_{k-1} - v_k I = Q_k R_k$, $A_k = R_k Q_k + v_k I$. Characteristic Polynomial: $p_A(z) = \det(zI - A) = (z-\lambda_1)\dots(z-\lambda_n)$. Matrix Norm Properties: $\Vert AB \Vert \le \Vert A \Vert \Vert B \Vert$ (submultiplicativity). Inequalities for vector norms: For $x \in \mathbb{C}^n$: $\frac{1}{\sqrt{n}}\Vert x \Vert_2 \le \Vert x \Vert_\infty \le \Vert x \Vert_2$. $\frac{1}{\sqrt{n}}\Vert x \Vert_1 \le \Vert x \Vert_2 \le \Vert x \Vert_1$. $\frac{1}{n}\Vert x \Vert_1 \le \Vert x \Vert_\infty \le \Vert x \Vert_1$. 4. Step-by-step Methods & Algorithms 4.1. Power Method (for dominant eigenvalue $\lambda_{\max}$) Initialize $v^{(0)}$ with $\Vert v^{(0)} \Vert = 1$. For $k=1, \dots$ until convergence: Compute $w = Av^{(k-1)}$. Normalize $v^{(k)} = \frac{w}{\Vert w \Vert}$. Estimate eigenvalue $\lambda^{(k)} = (v^{(k)})^*Av^{(k)}$ (Rayleigh quotient). Convergence: $\Vert v^{(k)} - (\pm q_1) \Vert = O \left( \left| \frac{\lambda_2}{\lambda_1} \right|^k \right)$, $\Vert \lambda^{(k)} - \lambda_1 \Vert = O \left( \left| \frac{\lambda_2}{\lambda_1} \right|^{2k} \right)$. Requires $\alpha_1 \neq 0$ in $v^{(0)} = \sum \alpha_i q_i$. 4.2. Inverse Iteration (for $\lambda_{\min}$ or $\lambda_J$ closest to $v$) Choose a shift $v$ not equal to an eigenvalue. Initialize $v^{(0)}$ with $\Vert v^{(0)} \Vert = 1$. For $k=1, \dots$ until convergence: Solve $(A - vI)w = v^{(k-1)}$ for $w$. Normalize $v^{(k)} = \frac{w}{\Vert w \Vert}$. Estimate eigenvalue $\lambda^{(k)} = (v^{(k)})^*Av^{(k)}$ (Rayleigh quotient). Convergence: $\Vert v^{(k)} - (\pm q_J) \Vert = O \left( \left| \frac{v-\lambda_J}{v-\lambda_S} \right|^k \right)$, $\Vert \lambda^{(k)} - \lambda_J \Vert = O \left( \left| \frac{v-\lambda_J}{v-\lambda_S} \right|^{2k} \right)$. Cost: $O(n^3)$ for solving linear system (LU outside loop). 4.3. Rayleigh Quotient Iteration (accelerated convergence) Initialize $v^{(0)}$ with $\Vert v^{(0)} \Vert = 1$. Estimate $\lambda^{(0)} = (v^{(0)})^*Av^{(0)}$. For $k=1, \dots$ until convergence: Solve $(A - \lambda^{(k-1)}I)w = v^{(k-1)}$. Normalize $v^{(k)} = \frac{w}{\Vert w \Vert}$. Estimate $\lambda^{(k)} = (v^{(k)})^*Av^{(k)}$. Convergence: Cubic (for $\lambda^{(k)}$). Cost $O(n^3)$ per iteration (matrix changes). 4.4. QR Iteration (Francis Algorithm) Phase 1 (Reduction): Reduce $A$ to Hessenberg form ($H$) (or tridiagonal if $A$ is symmetric) using Householder transformations. Cost: $O(n^3)$ for Hessenberg, $O(n)$ for symmetric tridiagonal. Phase 2 (Subspace iteration): Apply plain QR iteration or QR iteration with shifts to $H$. $H_0 = H$. For $k=1, \dots$ until convergence: $H_{k-1} - v_k I = Q_k R_k$, $H_k = R_k Q_k + v_k I$. $v_k$: shift (e.g., Rayleigh shift $h_{n,n}^{(k-1)}$ or Wilkinson shift). Converges to upper triangular (eigenvalues on diagonal) or block upper triangular (eigenvalues of blocks). Cost: $O(n^2)$ per iteration for Hessenberg, $O(n)$ for tridiagonal. 4.5. Solving Least Squares Problems (LLS): $\min_x \Vert Ax - b \Vert_2$ Using SVD: $A = U\Sigma V^*$. Solution $x = V\Sigma^{-1}U^*b = \sum_{i=1}^r \frac{u_i^*b}{\sigma_i} v_i$. Unique solution if $A$ has full column rank. Using QR decomposition: $A=QR$. Solution $x = R^{-1}Q^*b$. 5. Minimal Worked Patterns / Templates SVD computation: $A \in \mathbb{R}^{m \times n}$. Compute $A^*A$ (or $AA^*$). Find eigenvalues $\lambda_i(A^*A) = \sigma_i^2$ and eigenvectors $v_i$. $\Sigma = \text{diag}(\sigma_i)$. $V = [v_1 \dots v_n]$. Compute $u_i = \frac{1}{\sigma_i} A v_i$ for $i=1, \dots, r$. Complete $U$ using orthonormal basis of $\text{Null}(A^*)$ if $m>r$. Image compression (SVD): Convert image to grayscale matrix $P$. Compute SVD of $P$: $[U,S,V] = \text{svd}(P)$. Choose rank $k$, set $S(k+1:end, k+1:end) = 0$. Reconstruct compressed image: $P_k = U S V'$. 6. Critical Distinctions, Conditions & Pitfalls SVD vs. Eigenvalue Decomp: SVD uses two orthonormal bases ($U, V$); Eigenvalue uses one basis ($X$) which is generally not orthonormal. SVD is for any matrix; Eigenvalue for square matrices. SVD singular values are always non-negative; Eigenvalues can be complex or negative. For SPD matrices, SVD and Eigenvalue Decomposition are equivalent. Power Method Limitations: Only finds dominant eigenvalue. Linear convergence, slow if $|\lambda_2/\lambda_1|$ is close to 1. Requires initial vector $v^{(0)}$ to have a component in the dominant eigenvector direction ($\alpha_1 \neq 0$). Inverse Iteration Limitations: Requires solving a linear system $(A-vI)w = v^{(k-1)}$ at each step. Costly if matrix $(A-vI)$ changes or is not easily factorizable. QR Iteration: Reduction to Hessenberg/tridiagonal is crucial for efficiency. Shifts (Rayleigh, Wilkinson) accelerate convergence significantly. Without shifts, $QR$ iteration can be slow. Condition Number: High condition numbers indicate ill-conditioned problems, sensitive to perturbations. $\kappa_2(A^*A) = (\kappa_2(A))^2$. Numerical Rank: Not always explicit. Can be estimated by inspecting singular values (e.g., $\sigma_k$ above a tolerance). 7. Exam-trigger Phrases "Approximation by Low-Rank Matrices" $\rightarrow$ SVD (Eckart-Young). "Finding Structure with Randomness" $\rightarrow$ Randomized Algorithms (Proto-algorithm, Randomized SVD). "Compute an approximate basis for the range of A" $\rightarrow$ Randomized Range Finder (Algorithm 4.1). "Low-rank matrix approximations (e.g., SVD, QR)" $\rightarrow$ SVD, QR, randomized methods. "Dealing with truly massive data sets" $\rightarrow$ Randomized algorithms, pass-efficient algorithms. "Dominant components of SVD" $\rightarrow$ Power method, randomized SVD. "Sparse input matrix" $\rightarrow$ Krylov subspace methods, randomized methods. "Matrix too large to fit in fast memory" $\rightarrow$ Pass-efficient algorithms (randomized). "Dimension reduction" $\rightarrow$ Randomized methods, PCA, SVD. "Eigenvalue decomposition" $\rightarrow$ QR iteration, Power method, Inverse iteration. "Least squares problem" $\rightarrow$ SVD, QR decomposition. "Fixed-precision approximation problem" $\rightarrow$ Adaptive randomized algorithms (Algorithm 4.2). "Singular values decay slowly" $\rightarrow$ Power scheme (Algorithm 4.3/4.4). "General dense matrix stored in slow memory or streamed" $\rightarrow$ Single-pass algorithms. "Computational costs" $\rightarrow$ Compare $O(mnk)$ vs $O(mn \log k)$ vs $O(kT_{\text{mult}})$. "Performance of randomized schemes" $\rightarrow$ Error bounds, probability of failure. "Orthogonal projector" $\rightarrow$ $P^2=P, P^*=P$. "Positive semidefinite matrices" $\rightarrow$ $M \ge 0$, $u^*Mu \ge 0$.