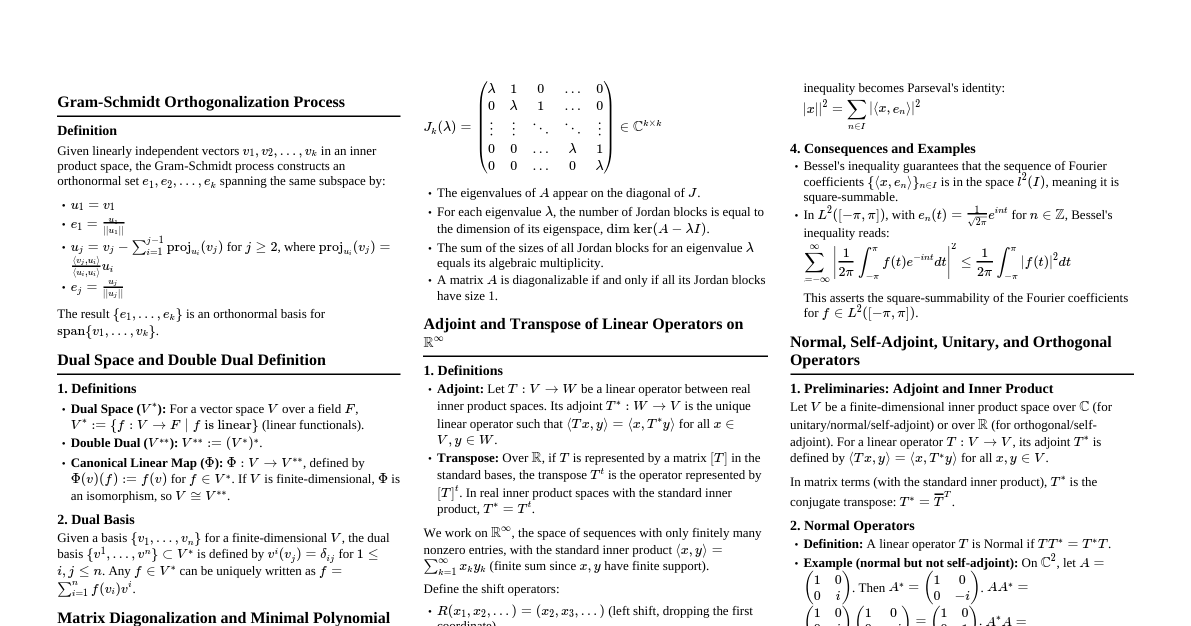

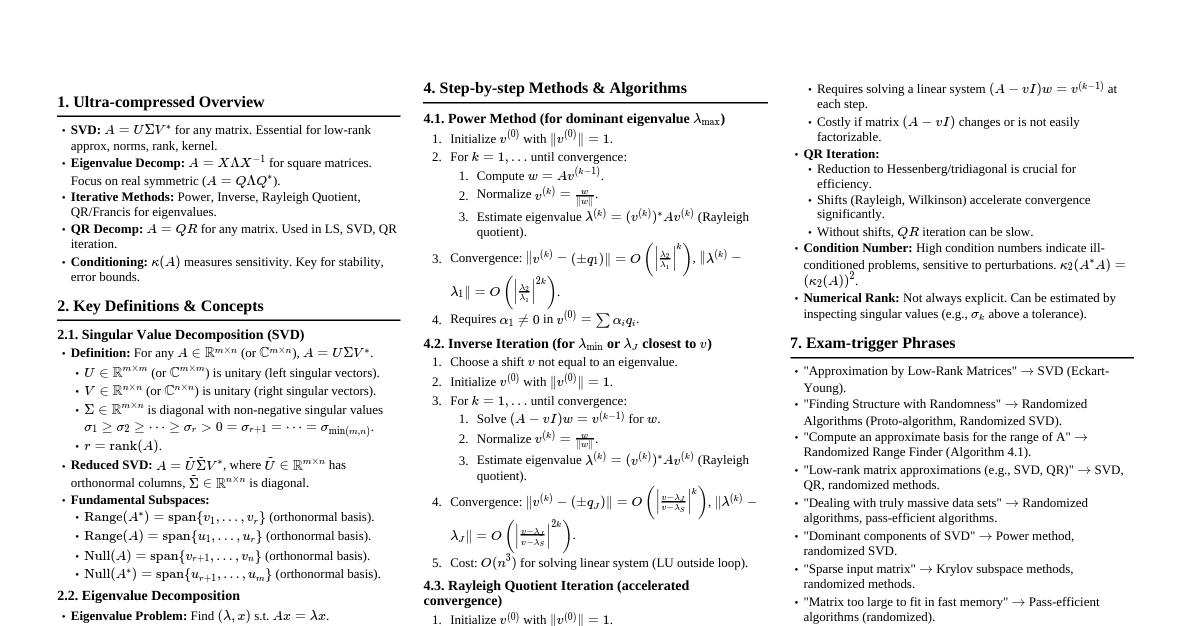

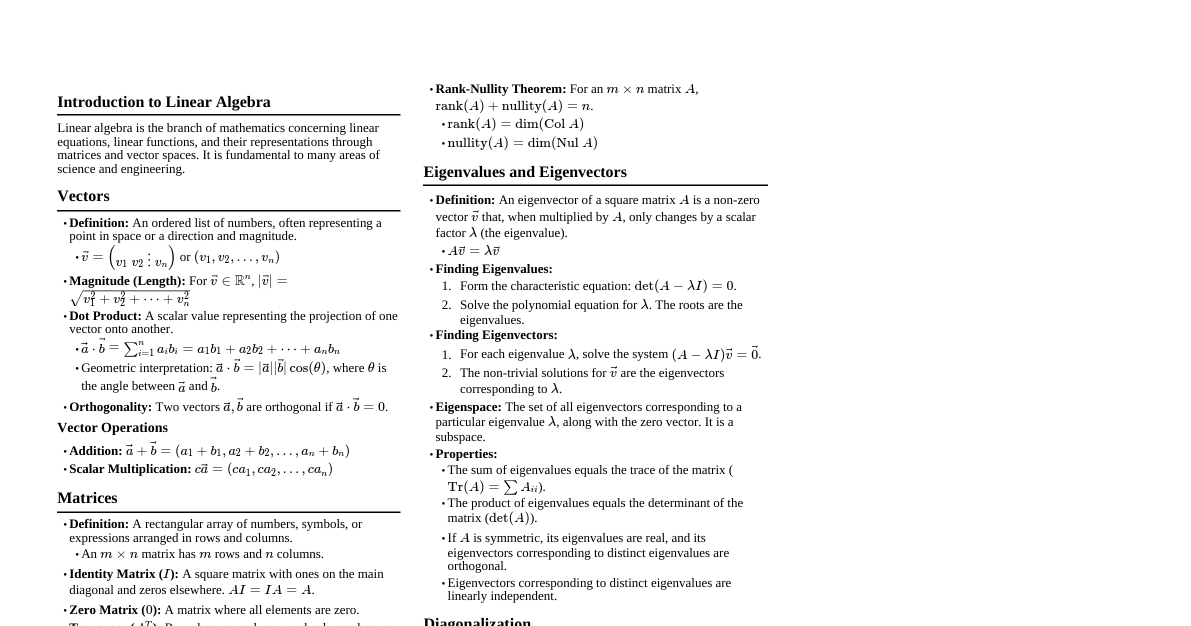

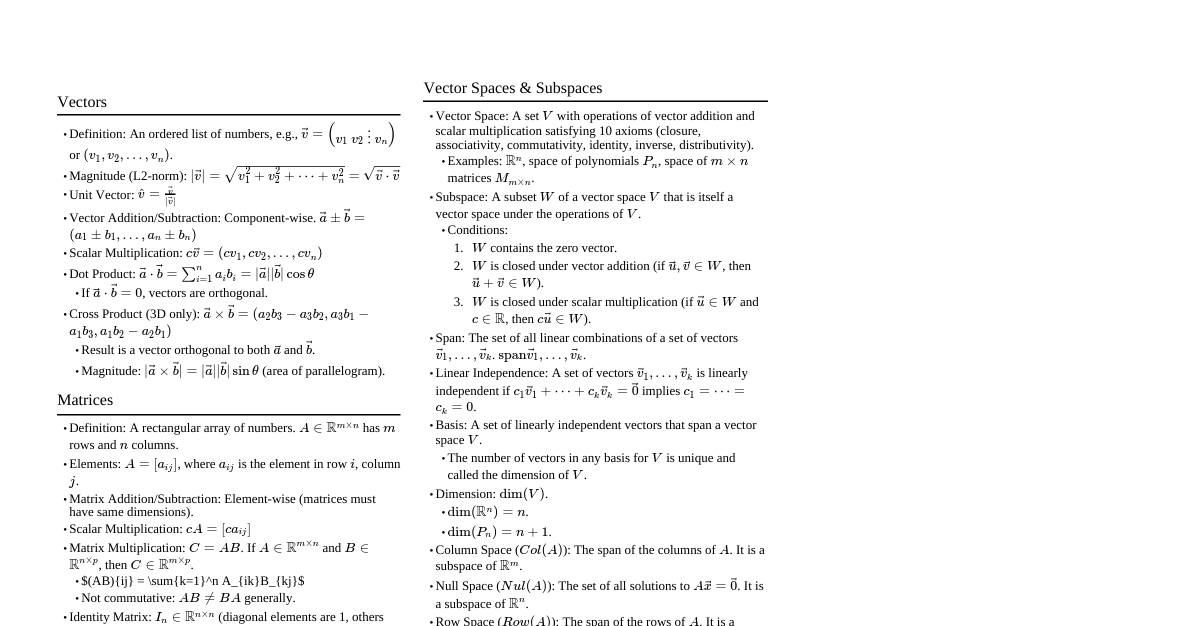

### Introduction to Matrices

- **Definition:** A rectangular array of numbers, symbols, or expressions arranged in rows and columns.
- **Order / Dimension:** An \(m \times n\) matrix has \(m\) rows and \(n\) columns.
- **Elements and Notation:** \(a_{ij}\) refers to the element in the \(i\)-th row and \(j\)-th column.
- **Real vs. Complex Matrices:** Elements can be real numbers or complex numbers.

### Types of Matrices

- **Row Matrix:** \(1 \times n\) matrix (single row).
- **Column Matrix:** \(m \times 1\) matrix (single column).
- **Square Matrix:** \(n \times n\) matrix (equal number of rows and columns).
- **Null / Zero Matrix (\(0\)):** All elements are zero.
- **Identity / Unit Matrix (\(I\)):** A square matrix with ones on the main diagonal and zeros elsewhere.
- **Diagonal Matrix:** A square matrix where all non-diagonal elements are zero.
- **Scalar Matrix:** A diagonal matrix where all diagonal elements are equal.
- **Upper Triangular Matrix:** All elements below the main diagonal are zero.
- **Lower Triangular Matrix:** All elements above the main diagonal are zero.
- **Symmetric Matrix:** \(A^T = A\).
- **Skew-Symmetric Matrix:** \(A^T = -A\).
- **Hermitian Matrix:** \(A^* = A\) (conjugate transpose equals itself).
- **Skew-Hermitian Matrix:** \(A^* = -A\).
- **Orthogonal Matrix:** \(A^T A = I\).
- **Unitary Matrix:** \(A^* A = I\).
- **Idempotent Matrix:** \(A^2 = A\).
- **Nilpotent Matrix:** \(A^k = 0\) for some positive integer \(k\).
- **Involutory Matrix:** \(A^2 = I\).
- **Periodic Matrix:** \(A^{k+1} = A\) for some positive integer \(k\).

### Operations on Matrices

#### Matrix Addition & Subtraction

- **Conditions:** Matrices must have the same order.
- **Properties:** Commutative (\(A+B = B+A\)), Associative (\((A+B)+C = A+(B+C)\)).

#### Scalar Multiplication

- Multiply each element of the matrix by the scalar.

#### Matrix Multiplication

- **Condition:** Number of columns in the first matrix must equal the number of rows in the second matrix.
- **Properties:** Associative (\((AB)C = A(BC)\)), Distributive (\(A(B+C) = AB+AC\)), NOT Commutative in general (\(AB \neq BA\)).
- **Power of a Matrix:** For a square matrix \(A\), \(A^k = A \cdot A \cdots A\) (\(k\) times).

#### Equality of Matrices

- Two matrices are equal if they have the same order and their corresponding elements are equal.

### Transpose of a Matrix

- **Definition:** \(A^T\) is obtained by interchanging rows and columns of \(A\).
- **Properties:**
  - \((A^T)^T = A\)
  - \((A + B)^T = A^T + B^T\)
  - \((kA)^T = kA^T\)
  - \((AB)^T = B^T A^T\)
- **Symmetric and Skew-Symmetric:** A square matrix \(A\) can be written as \(A = \frac{1}{2}(A+A^T) + \frac{1}{2}(A-A^T)\), where the first term is symmetric and the second is skew-symmetric.

### Conjugate and Conjugate Transpose

- **Complex Conjugate of a Matrix (\(\bar{A}\)):** Replace each element \(a_{ij}\) with its complex conjugate \(\bar{a}_{ij}\).
- **Conjugate Transpose / Hermitian Transpose (\(A^*\)):** \(A^* = (\bar{A})^T\).
- **Properties of \(A^*\):**
  - \((A^*)^* = A\)
  - \((A+B)^* = A^* + B^*\)
  - \((kA)^* = \bar{k}A^*\)
  - \((AB)^* = B^* A^*\)

### Trace of a Matrix

- **Definition:** For a square matrix \(A\), the trace (\(\text{tr}(A)\)) is the sum of its diagonal elements.
- **Properties:**
  - \(\text{tr}(A + B) = \text{tr}(A) + \text{tr}(B)\)
  - \(\text{tr}(kA) = k \cdot \text{tr}(A)\)
  - \(\text{tr}(AB) = \text{tr}(BA)\)
  - \(\text{tr}(A^T) = \text{tr}(A)\)

### Determinants

- **Definition:** A scalar value that can be computed from the elements of a square matrix.
- **Determinant of \(1 \times 1\), \(2 \times 2\), \(3 \times 3\) matrices:**
  - \(|a| = a\)
  - $$\begin{vmatrix} a & b \\ c & d \end{vmatrix} = ad - bc$$
  - For \(3 \times 3\), expanding along the first row (the Sarrus rule yields the same six products): $$\begin{vmatrix} a & b & c \\ d & e & f \\ g & h & i \end{vmatrix} = a(ei-fh) - b(di-fg) + c(dh-eg)$$
- **Cofactor Expansion:** \(|A| = \sum_{j=1}^n a_{ij}C_{ij}\) (along row \(i\)) or \(|A| = \sum_{i=1}^n a_{ij}C_{ij}\) (along column \(j\)).
- **Minor (\(M_{ij}\)):** Determinant of the submatrix obtained by deleting row \(i\) and column \(j\).
- **Cofactor (\(C_{ij}\)):** \(C_{ij} = (-1)^{i+j}M_{ij}\).
- **Properties of Determinants:**
  - \(\det(A^T) = \det(A)\)
  - Swapping two rows/columns changes the sign of the determinant.
  - If two rows/columns are identical or proportional, \(\det(A) = 0\).
  - If a row/column is all zeros, \(\det(A) = 0\).
  - \(\det(AB) = \det(A) \cdot \det(B)\)
  - \(\det(kA) = k^n \cdot \det(A)\) for \(n \times n\) matrix \(A\).
  - \(\det(A^{-1}) = 1/\det(A)\)
- **Singular vs. Non-Singular Matrix:** \(A\) is singular if \(\det(A) = 0\); non-singular if \(\det(A) \neq 0\).
- **Cramer's Rule:** Solves \(A\mathbf{x} = \mathbf{b}\) with determinants when \(\det(A) \neq 0\): \(x_i = \det(A_i)/\det(A)\), where \(A_i\) is \(A\) with column \(i\) replaced by \(\mathbf{b}\).

### Adjoint (Adjugate) of a Matrix

- **Definition:** The transpose of the cofactor matrix, \(\text{adj}(A) = (C_{ij})^T\).
- **Property:** \(A \cdot \text{adj}(A) = \text{adj}(A) \cdot A = \det(A) \cdot I\).
- **Property:** \(\text{adj}(AB) = \text{adj}(B) \cdot \text{adj}(A)\).

### Inverse of a Matrix

- **Existence Condition:** An \(n \times n\) matrix \(A\) has an inverse \(A^{-1}\) if and only if \(\det(A) \neq 0\) (i.e., \(A\) is non-singular).
- **Formula:** \(A^{-1} = \frac{1}{\det(A)} \cdot \text{adj}(A)\).
- **Properties:**
  - \((A^{-1})^{-1} = A\)
  - \((AB)^{-1} = B^{-1}A^{-1}\)
  - \((A^T)^{-1} = (A^{-1})^T\)
- **Finding Inverse via Row Operations (Gauss-Jordan Elimination):** Form the augmented matrix \([A|I]\) and perform elementary row operations to transform it into \([I|A^{-1}]\).

### Rank of a Matrix

- **Definition:** The maximum number of linearly independent rows (or columns) in a matrix. Equivalently, the dimension of the column space (or row space).
- **Row Echelon Form (REF):**
  1. All non-zero rows are above any rows of all zeros.
  2. The leading entry (pivot) of each non-zero row is in a column to the right of the leading entry of the row above it.
  3. All entries in a column below a leading entry are zeros.
- **Reduced Row Echelon Form (RREF):** REF plus:
  1. The leading entry in each non-zero row is 1.
  2. Each leading 1 is the only non-zero entry in its column.
- **Elementary Row Operations:**
  1. Swap two rows (\(R_i \leftrightarrow R_j\)).
  2. Multiply a row by a non-zero scalar (\(kR_i \rightarrow R_i\)).
  3. Add a multiple of one row to another row (\(R_i + kR_j \rightarrow R_i\)).
- **Minor Method of Finding Rank:** The rank is the order of the largest non-zero minor.
- **Rank via Normal / Canonical Form:** Using elementary row and column operations, a matrix can be reduced to the form \(\begin{pmatrix} I_r & 0 \\ 0 & 0 \end{pmatrix}\), where \(r\) is the rank.
- **Properties:**
  - \(\text{rank}(A) = \text{rank}(A^T)\).
  - \(\text{rank}(AB) \le \min(\text{rank}(A), \text{rank}(B))\).
  - \(\text{rank}(A + B) \le \text{rank}(A) + \text{rank}(B)\).
- **Null Space (\(N(A)\)) and Nullity:** The null space is the set of all vectors \(\mathbf{x}\) such that \(A\mathbf{x} = \mathbf{0}\). Nullity is the dimension of the null space.
- **Rank-Nullity Theorem:** For an \(m \times n\) matrix \(A\), \(\text{rank}(A) + \text{nullity}(A) = n\).

### System of Linear Equations

- **Representation:** \(A\mathbf{x} = \mathbf{b}\), where \(A\) is the coefficient matrix, \(\mathbf{x}\) is the variable vector, and \(\mathbf{b}\) is the constant vector.
- **Augmented Matrix:** \([A|\mathbf{b}]\).
- **Consistency Conditions (Rouché–Capelli Theorem):**
  - **Unique Solution:** \(\text{rank}(A) = \text{rank}([A|\mathbf{b}]) = n\) (number of variables).
  - **Infinite Solutions:** \(\text{rank}(A) = \text{rank}([A|\mathbf{b}]) < n\).
  - **No Solution:** \(\text{rank}(A) \neq \text{rank}([A|\mathbf{b}])\).

### Eigenvalues and Eigenvectors

- **Definition:** For a square matrix \(A\), if \(A\mathbf{x} = \lambda\mathbf{x}\) for a non-zero vector \(\mathbf{x}\), then \(\lambda\) is an eigenvalue and \(\mathbf{x}\) is an eigenvector.
- **Characteristic Equation:** \(\det(A - \lambda I) = 0\).
- **Characteristic Polynomial:** The polynomial \(P(\lambda) = \det(A - \lambda I)\).
- **Finding Eigenvalues:** Roots of the characteristic polynomial.
- **Finding Eigenvectors:** For each eigenvalue \(\lambda\), solve \((A - \lambda I)\mathbf{x} = \mathbf{0}\) (find the null space of \((A - \lambda I)\)).
- **Properties of Eigenvalues:**
  - Sum of eigenvalues = \(\text{tr}(A)\).
  - Product of eigenvalues = \(\det(A)\).
  - Eigenvalues of \(A^k\) are \(\lambda^k\).
  - Eigenvalues of \(A^{-1}\) are \(1/\lambda\) (when \(A\) is invertible).
  - Eigenvalues of \(kA\) are \(k\lambda\).
  - Eigenvalues of \(A^T\) are the same as those of \(A\).
- **Eigenvalues of Special Matrices:**
  - Triangular Matrix: The diagonal elements.
  - Real Symmetric Matrix: Always real.
  - Orthogonal Matrix: Have modulus 1 (i.e., \(|\lambda| = 1\)).
- **Algebraic Multiplicity (AM):** The multiplicity of \(\lambda\) as a root of the characteristic polynomial.
- **Geometric Multiplicity (GM):** The dimension of the eigenspace corresponding to \(\lambda\) (i.e., \(\text{nullity}(A - \lambda I)\)). Always \(1 \le \text{GM} \le \text{AM}\).

### Diagonalization

- **Condition for Diagonalizability:** An \(n \times n\) matrix \(A\) is diagonalizable if and only if it has \(n\) linearly independent eigenvectors (i.e., for every eigenvalue, AM = GM).
- **Modal Matrix (\(P\)):** A matrix whose columns are the linearly independent eigenvectors of \(A\).
- **Diagonal Form:** If \(A\) is diagonalizable, then \(D = P^{-1}AP\), where \(D\) is a diagonal matrix with the eigenvalues on its diagonal.
- **Powers of Matrix using Diagonalization:** \(A^k = PD^kP^{-1}\).
- **Orthogonal Diagonalization:** If \(A\) is a real symmetric matrix, it can be orthogonally diagonalized (i.e., \(P\) is an orthogonal matrix, so \(P^{-1} = P^T\)).

### Cayley-Hamilton Theorem

- **Statement:** Every square matrix satisfies its own characteristic equation. If \(P(\lambda) = \det(A - \lambda I) = c_n\lambda^n + \dots + c_1\lambda + c_0\), then \(P(A) = c_n A^n + \dots + c_1 A + c_0 I = 0\).
- **Finding Inverse using Cayley-Hamilton:** \(A^{-1} = -\frac{1}{c_0}(c_n A^{n-1} + \dots + c_2 A + c_1 I)\), provided \(c_0 = \det(A) \neq 0\).
- **Finding Higher Powers of a Matrix:** Express \(A^k\) as a linear combination of \(I, A, \dots, A^{n-1}\).

### Minimal Polynomial

- **Definition:** The monic polynomial of lowest degree \(m(\lambda)\) such that \(m(A) = 0\).
- **Relationship with Characteristic Polynomial:** The minimal polynomial divides the characteristic polynomial.

### Jordan Canonical Form

- **Jordan Blocks:** A Jordan block \(J_k(\lambda)\) is a \(k \times k\) matrix with \(\lambda\) on the main diagonal, ones on the superdiagonal, and zeros elsewhere. The Jordan canonical form is a block diagonal matrix in which each diagonal block is a Jordan block.
- **When Jordan Form = Diagonal Form:** When the matrix is diagonalizable (i.e., all Jordan blocks are \(1 \times 1\)).
- **Application:** Used for matrices that are not diagonalizable (defective matrices). Every square matrix over \(\mathbb{C}\) is similar to a matrix in Jordan canonical form.

### Quadratic Forms

- **Definition:** A quadratic form is a scalar function of the form \(Q(\mathbf{x}) = \mathbf{x}^T A \mathbf{x}\), where \(A\) is a symmetric matrix.
- **Matrix of Quadratic Form:** The symmetric matrix \(A\).
- **Classification:**
  - **Positive Definite:** \(Q(\mathbf{x}) > 0\) for all \(\mathbf{x} \neq \mathbf{0}\) (all eigenvalues \(> 0\)).
  - **Positive Semi-Definite:** \(Q(\mathbf{x}) \ge 0\) for all \(\mathbf{x} \neq \mathbf{0}\) (all eigenvalues \(\ge 0\)).
  - **Negative Definite:** \(Q(\mathbf{x}) < 0\) for all \(\mathbf{x} \neq \mathbf{0}\) (all eigenvalues \(< 0\)).
  - **Negative Semi-Definite:** \(Q(\mathbf{x}) \le 0\) for all \(\mathbf{x} \neq \mathbf{0}\) (all eigenvalues \(\le 0\)).
  - **Indefinite:** \(Q(\mathbf{x})\) takes both positive and negative values (eigenvalues of both signs).

### Bilinear Forms

- **Definition:** A function \(B(\mathbf{x}, \mathbf{y}) = \mathbf{x}^T A \mathbf{y}\) that is linear in each argument separately.
- **Symmetric Bilinear Form:** When \(A\) is a symmetric matrix, \(B(\mathbf{x}, \mathbf{y}) = B(\mathbf{y}, \mathbf{x})\).

### Vector Spaces

- **Definition and Axioms:** A set \(V\) with two operations (vector addition and scalar multiplication) satisfying 10 axioms (closure, associativity, commutativity, identity elements, inverse elements, distributive properties).
- **Subspaces:** A subset \(W\) of a vector space \(V\) that is itself a vector space under the same operations.
- **Linear Combination:** A sum of scalar multiples of vectors, e.g., \(c_1\mathbf{v}_1 + c_2\mathbf{v}_2 + \dots + c_k\mathbf{v}_k\).
- **Linear Independence / Dependence:** A set of vectors is linearly independent if the only linear combination that equals the zero vector is when all scalars are zero. Otherwise, it's linearly dependent.
- **Span of a Set of Vectors:** The set of all possible linear combinations of the vectors.
- **Basis and Dimension:** A basis for a vector space is a linearly independent set of vectors that spans the entire space. The dimension is the number of vectors in a basis.
- **Column Space (\(Col(A)\) / Range):** The span of the column vectors of \(A\).
- **Row Space (\(Row(A)\)):** The span of the row vectors of \(A\).
- **Null Space (\(Null(A)\)):** The set of all solutions to \(A\mathbf{x} = \mathbf{0}\).

### Linear Transformations

- **Definition:** A function \(T: V \rightarrow W\) such that \(T(\mathbf{u} + \mathbf{v}) = T(\mathbf{u}) + T(\mathbf{v})\) and \(T(c\mathbf{u}) = cT(\mathbf{u})\).
- **Representation as Matrix:** Any linear transformation from \(\mathbb{R}^n\) to \(\mathbb{R}^m\) can be represented by an \(m \times n\) matrix \(A\) such that \(T(\mathbf{x}) = A\mathbf{x}\).
- **Kernel (\(Ker(T)\) / Null Space):** The set of all vectors \(\mathbf{v} \in V\) such that \(T(\mathbf{v}) = \mathbf{0}_W\).
- **Image (\(Im(T)\) / Range):** The set of all vectors \(\mathbf{w} \in W\) such that \(\mathbf{w} = T(\mathbf{v})\) for some \(\mathbf{v} \in V\).
- **Rank-Nullity Theorem:** \(\dim(Ker(T)) + \dim(Im(T)) = \dim(V)\).
- **Composition of Transformations:** Corresponds to matrix multiplication. If \(T_1(\mathbf{x}) = A_1\mathbf{x}\) and \(T_2(\mathbf{y}) = A_2\mathbf{y}\), then \(T_2(T_1(\mathbf{x})) = (A_2A_1)\mathbf{x}\).
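
The composition rule \(T_2(T_1(\mathbf{x})) = (A_2A_1)\mathbf{x}\) can be checked numerically. The following is a minimal sketch in plain Python; the helper names `matvec` and `matmul` and the matrices `A1`, `A2` are hypothetical and chosen only for illustration.

```python
def matvec(A, x):
    """Multiply matrix A (a list of rows) by vector x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]


def matmul(A, B):
    """Multiply an m x p matrix A by a p x n matrix B."""
    cols_B = list(zip(*B))  # columns of B
    return [[sum(a * b for a, b in zip(row, col)) for col in cols_B]
            for row in A]


# Hypothetical example: T1 maps R^2 -> R^3, T2 maps R^3 -> R^2.
A1 = [[1, 2],
      [0, 1],
      [3, 0]]
A2 = [[1, 0, 2],
      [0, 1, 1]]
x = [1, -1]

# Apply T1 and then T2, one transformation at a time.
step_by_step = matvec(A2, matvec(A1, x))

# Apply the single matrix A2 * A1 of the composed transformation.
composed = matvec(matmul(A2, A1), x)

print(step_by_step, composed)  # both are [5, 2]
```

Note the order: the matrix of the transformation applied first (\(A_1\)) sits on the right of the product.
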
### Inner Product Spaces

- **Inner Product Definition:** A function \(\langle \mathbf{u}, \mathbf{v} \rangle\) that takes two vectors and returns a scalar, satisfying properties of linearity, symmetry (or conjugate symmetry for complex), and positive-definiteness.
- **Norm and Distance:**
  - **Norm:** \(\|\mathbf{v}\| = \sqrt{\langle \mathbf{v}, \mathbf{v} \rangle}\).
  - **Distance:** \(d(\mathbf{u}, \mathbf{v}) = \|\mathbf{u} - \mathbf{v}\|\).
- **Orthogonality:** Two vectors \(\mathbf{u}, \mathbf{v}\) are orthogonal if \(\langle \mathbf{u}, \mathbf{v} \rangle = 0\).
- **Orthonormal Basis:** A basis where all vectors are mutually orthogonal and have unit norm.
- **Gram-Schmidt Orthonormalization Process:** A procedure for constructing an orthonormal basis from any basis of an inner product space.

### Numerical Methods for Matrices

#### Iterative Methods for Linear Systems (\(A\mathbf{x} = \mathbf{b}\))

- **Jacobi Method:** Iterative method that solves for each unknown in terms of the others from the previous iteration.
- **Gauss-Seidel Method:** Similar to Jacobi, but uses updated values as soon as they are computed within the current iteration.
- **Convergence Condition:** Both methods converge if the matrix \(A\) is strictly diagonally dominant (a sufficient, not a necessary, condition).

#### LU Decomposition Methods

- Factor \(A = LU\) with \(L\) lower triangular and \(U\) upper triangular. Used for solving linear systems efficiently when multiple \(\mathbf{b}\) vectors are involved, since the factorization is computed once and each system then needs only forward and back substitution.
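
Reusing one factorization for several right-hand sides can be sketched as follows. This is a minimal illustration, assuming the Doolittle variant (unit diagonal in \(L\)) without pivoting, so it only works when no zero pivot appears. The function names `lu_decompose` and `lu_solve` and the example system are hypothetical.

```python
def lu_decompose(A):
    """Doolittle LU factorization without pivoting (assumes non-zero pivots)."""
    n = len(A)
    L = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    U = [[0.0] * n for _ in range(n)]
    for i in range(n):
        # Row i of U.
        for j in range(i, n):
            U[i][j] = A[i][j] - sum(L[i][k] * U[k][j] for k in range(i))
        # Column i of L, below the diagonal.
        for j in range(i + 1, n):
            L[j][i] = (A[j][i] - sum(L[j][k] * U[k][i] for k in range(i))) / U[i][i]
    return L, U


def lu_solve(L, U, b):
    """Solve L U x = b by forward substitution, then back substitution."""
    n = len(b)
    y = [0.0] * n
    for i in range(n):
        y[i] = b[i] - sum(L[i][k] * y[k] for k in range(i))
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(U[i][k] * x[k] for k in range(i + 1, n))) / U[i][i]
    return x


# Hypothetical strictly diagonally dominant system, so no pivoting is needed.
A = [[4.0, 1.0, 1.0],
     [1.0, 5.0, 2.0],
     [1.0, 2.0, 6.0]]
L, U = lu_decompose(A)           # factor once

x1 = lu_solve(L, U, [9.0, 17.0, 23.0])   # approximately [1, 2, 3]
x2 = lu_solve(L, U, [3.0, -1.0, -5.0])   # approximately [1, 0, -1]
```

For general matrices, a production routine would add row pivoting for numerical stability.
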