Linear Algebra Cheatsheet

Cheatsheet Content

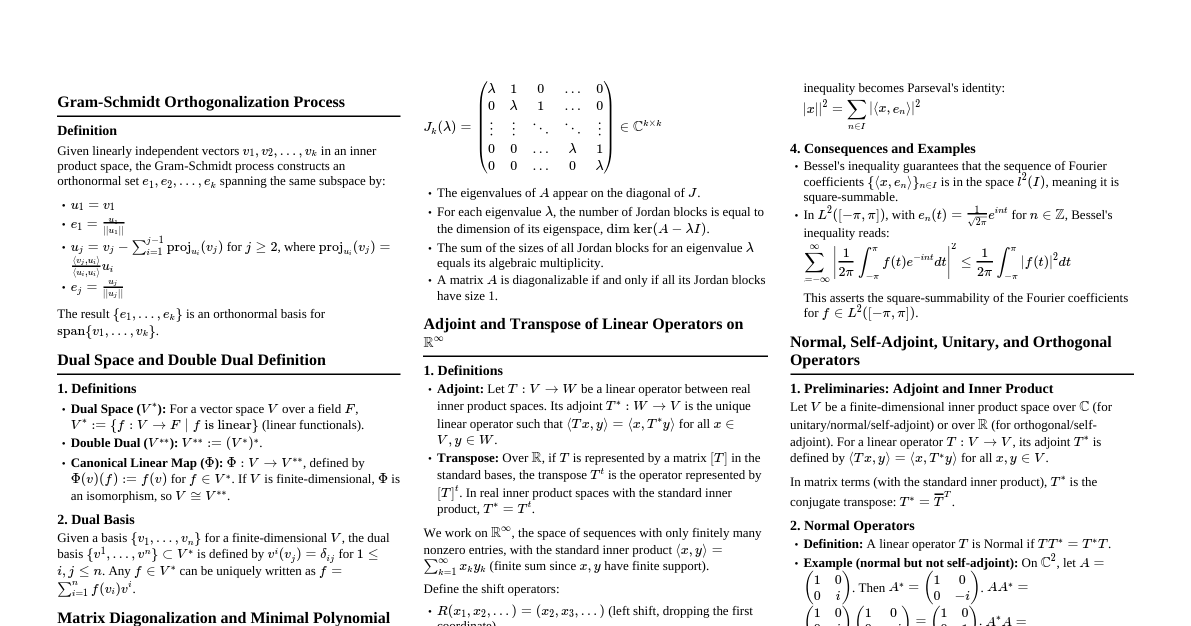

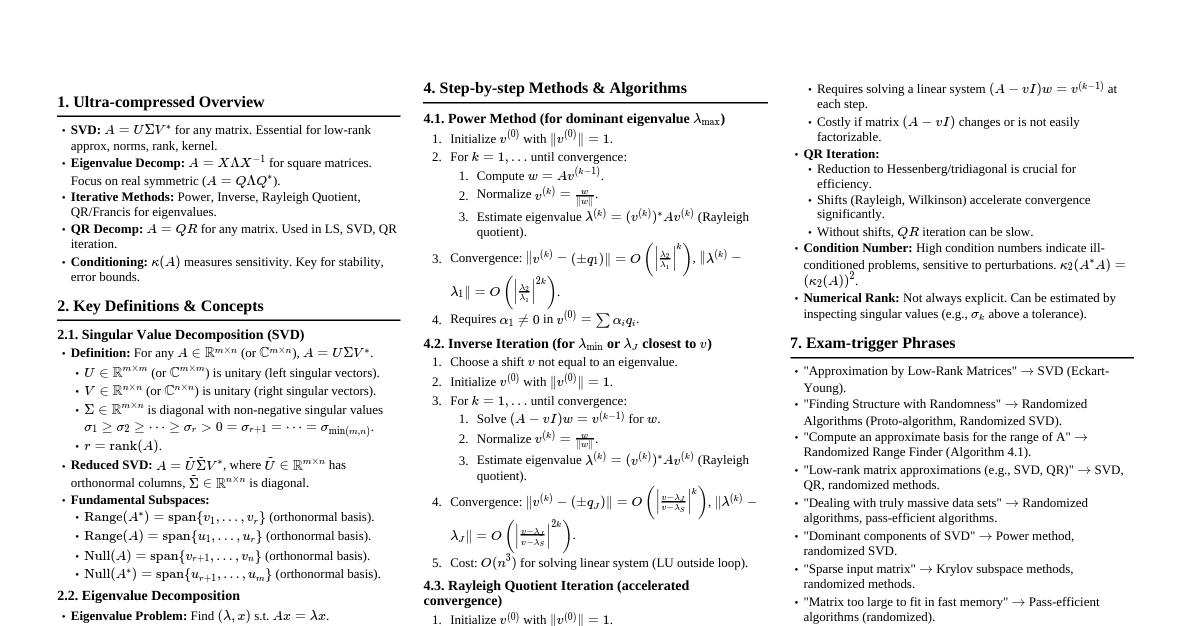

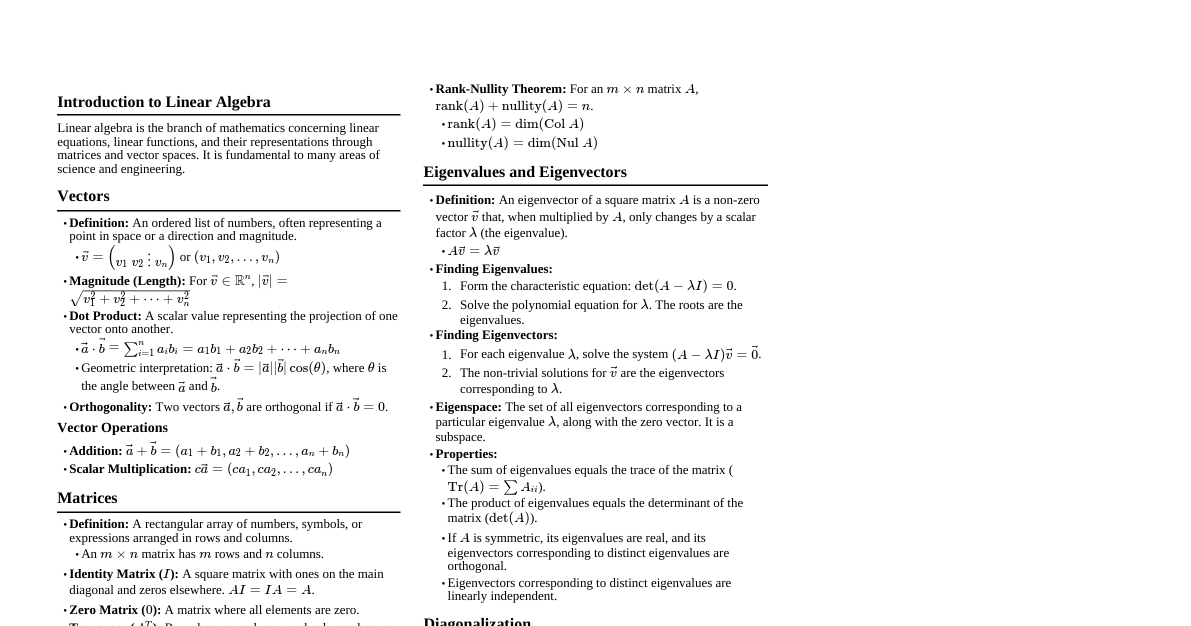

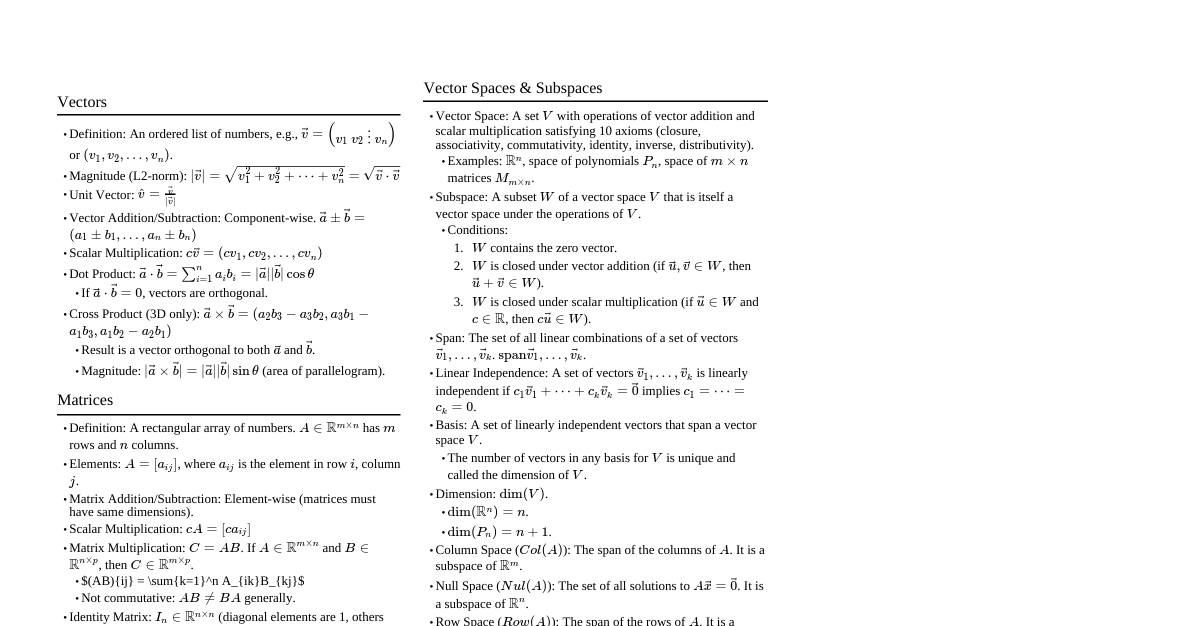

### Vector Spaces - **Definition:** A vector space $V$ over a field $F$ is a set with two operations, vector addition ($+$) and scalar multiplication ($\cdot$), satisfying the following axioms for all $\vec{u}, \vec{v}, \vec{w} \in V$ and $a, b \in F$: 1. Closure under addition: $\vec{u} + \vec{v} \in V$ 2. Commutativity of addition: $\vec{u} + \vec{v} = \vec{v} + \vec{u}$ 3. Associativity of addition: $(\vec{u} + \vec{v}) + \vec{w} = \vec{u} + (\vec{v} + \vec{w})$ 4. Additive identity: There exists a zero vector $\vec{0} \in V$ such that $\vec{u} + \vec{0} = \vec{u}$ 5. Additive inverse: For each $\vec{u} \in V$, there exists $-\vec{u} \in V$ such that $\vec{u} + (-\vec{u}) = \vec{0}$ 6. Closure under scalar multiplication: $a \cdot \vec{u} \in V$ 7. Distributivity over vector addition: $a \cdot (\vec{u} + \vec{v}) = a \cdot \vec{u} + a \cdot \vec{v}$ 8. Distributivity over scalar addition: $(a+b) \cdot \vec{u} = a \cdot \vec{u} + b \cdot \vec{u}$ 9. Associativity of scalar multiplication: $(ab) \cdot \vec{u} = a \cdot (b \cdot \vec{u})$ 10. Multiplicative identity: $1 \cdot \vec{u} = \vec{u}$ - **Examples:** $\mathbb{R}^n$, $P_n(F)$ (polynomials of degree $\le n$), $C[a,b]$ (continuous functions on $[a,b]$), $M_{m \times n}(F)$ (matrices). ### Subspaces - **Definition:** A subset $W$ of a vector space $V$ is a subspace if $W$ is itself a vector space under the operations of $V$. - **Subspace Test:** A non-empty subset $W \subseteq V$ is a subspace if and only if for all $\vec{u}, \vec{v} \in W$ and $a \in F$: 1. $\vec{u} + \vec{v} \in W$ (Closure under addition) 2. $a\vec{u} \in W$ (Closure under scalar multiplication) - **Proof:** - ($\Rightarrow$) If $W$ is a subspace, it must satisfy all vector space axioms, including closure under addition and scalar multiplication. - ($\Leftarrow$) Assume $W$ is non-empty and satisfies the two closure properties. - Additive identity: Since $W$ is non-empty, let $\vec{u} \in W$. By property 2, $0\vec{u} = \vec{0} \in W$. - Additive inverse: For any $\vec{u} \in W$, by property 2, $(-1)\vec{u} = -\vec{u} \in W$. - All other axioms (commutativity, associativity, distributivity, multiplicative identity) are inherited from $V$ since elements of $W$ are also elements of $V$. ### Span and Linear Independence - **Linear Combination:** A vector $\vec{v}$ is a linear combination of vectors $\vec{v}_1, \dots, \vec{v}_k$ if $\vec{v} = c_1\vec{v}_1 + \dots + c_k\vec{v}_k$ for scalars $c_i \in F$. - **Span:** The span of a set of vectors $S = \{\vec{v}_1, \dots, \vec{v}_k\}$, denoted $\text{span}(S)$, is the set of all possible linear combinations of the vectors in $S$. - **Theorem:** $\text{span}(S)$ is a subspace of $V$. - **Proof:** Let $\vec{x}, \vec{y} \in \text{span}(S)$ and $a \in F$. - Then $\vec{x} = c_1\vec{v}_1 + \dots + c_k\vec{v}_k$ and $\vec{y} = d_1\vec{v}_1 + \dots + d_k\vec{v}_k$. - $\vec{x} + \vec{y} = (c_1+d_1)\vec{v}_1 + \dots + (c_k+d_k)\vec{v}_k \in \text{span}(S)$. - $a\vec{x} = (ac_1)\vec{v}_1 + \dots + (ac_k)\vec{v}_k \in \text{span}(S)$. - Since $\text{span}(S)$ contains $\vec{0}$ (by taking all $c_i=0$), it is non-empty. By the subspace test, $\text{span}(S)$ is a subspace. - **Linear Independence:** A set of vectors $S = \{\vec{v}_1, \dots, \vec{v}_k\}$ is linearly independent if the only solution to $c_1\vec{v}_1 + \dots + c_k\vec{v}_k = \vec{0}$ is $c_1 = \dots = c_k = 0$. - **Linear Dependence:** A set $S$ is linearly dependent if it is not linearly independent; i.e., there exist scalars $c_1, \dots, c_k$, not all zero, such that $c_1\vec{v}_1 + \dots + c_k\vec{v}_k = \vec{0}$. - **Theorem:** A set $S$ is linearly dependent if and only if at least one vector in $S$ can be written as a linear combination of the others. - **Proof:** - ($\Rightarrow$) Assume $S$ is linearly dependent. Then $c_1\vec{v}_1 + \dots + c_k\vec{v}_k = \vec{0}$ for some $c_i$ not all zero. Suppose $c_j \ne 0$. Then $\vec{v}_j = -\frac{c_1}{c_j}\vec{v}_1 - \dots - \frac{c_{j-1}}{c_j}\vec{v}_{j-1} - \frac{c_{j+1}}{c_j}\vec{v}_{j+1} - \dots - \frac{c_k}{c_j}\vec{v}_k$. - ($\Leftarrow$) Assume $\vec{v}_j = d_1\vec{v}_1 + \dots + d_{j-1}\vec{v}_{j-1} + d_{j+1}\vec{v}_{j+1} + \dots + d_k\vec{v}_k$. Then $d_1\vec{v}_1 + \dots + d_{j-1}\vec{v}_{j-1} - \vec{v}_j + d_{j+1}\vec{v}_{j+1} + \dots + d_k\vec{v}_k = \vec{0}$. Since the coefficient of $\vec{v}_j$ is $-1 \ne 0$, the set is linearly dependent. ### Basis and Dimension - **Basis:** A set of vectors $B = \{\vec{v}_1, \dots, \vec{v}_n\}$ is a basis for a vector space $V$ if: 1. $B$ is linearly independent. 2. $\text{span}(B) = V$. - **Theorem:** Every vector in $V$ can be uniquely expressed as a linear combination of basis vectors. - **Proof:** - Existence: Since $\text{span}(B) = V$, any $\vec{v} \in V$ can be written as $\vec{v} = c_1\vec{v}_1 + \dots + c_n\vec{v}_n$. - Uniqueness: Suppose $\vec{v} = c_1\vec{v}_1 + \dots + c_n\vec{v}_n$ and $\vec{v} = d_1\vec{v}_1 + \dots + d_n\vec{v}_n$. - Subtracting these gives $\vec{0} = (c_1-d_1)\vec{v}_1 + \dots + (c_n-d_n)\vec{v}_n$. - Since $B$ is linearly independent, $c_i-d_i = 0$ for all $i$, so $c_i = d_i$. Thus, the representation is unique. - **Theorem (Replacement Theorem/Steinitz Exchange Lemma):** If $V$ is a vector space and $S = \{\vec{v}_1, \dots, \vec{v}_n\}$ is a generating set for $V$, and $L = \{\vec{w}_1, \dots, \vec{w}_m\}$ is a linearly independent set in $V$, then $m \le n$. - **Proof:** (By induction on $m$) - Base case $m=0$: $0 \le n$ is trivial. - Assume the theorem holds for $m-1$. - Since $S$ spans $V$, $\vec{w}_1 = \sum_{i=1}^n a_i \vec{v}_i$. Since $\vec{w}_1 \ne \vec{0}$ (as $L$ is LI), at least one $a_j \ne 0$. Without loss of generality, let $a_1 \ne 0$. - Then $\vec{v}_1 = \frac{1}{a_1}\vec{w}_1 - \sum_{i=2}^n \frac{a_i}{a_1}\vec{v}_i$. - This means $\vec{v}_1 \in \text{span}(\vec{w}_1, \vec{v}_2, \dots, \vec{v}_n)$. - Thus, $S_1 = \{\vec{w}_1, \vec{v}_2, \dots, \vec{v}_n\}$ spans $V$. (We've "exchanged" $\vec{v}_1$ for $\vec{w}_1$). - Now consider $L' = \{\vec{w}_2, \dots, \vec{w}_m\}$. This set is linearly independent and contained in $\text{span}(S_1)$. - By the inductive hypothesis, $m-1 \le n-1$, which implies $m \le n$. - **Corollary:** Any two bases of a finite-dimensional vector space have the same number of vectors. - **Proof:** Let $B_1$ and $B_2$ be two bases for $V$. - Since $B_1$ is linearly independent and $B_2$ spans $V$, by the Replacement Theorem, $|B_1| \le |B_2|$. - Since $B_2$ is linearly independent and $B_1$ spans $V$, by the Replacement Theorem, $|B_2| \le |B_1|$. - Therefore, $|B_1| = |B_2|$. - **Dimension:** The dimension of a finite-dimensional vector space $V$, denoted $\dim(V)$, is the number of vectors in any basis for $V$. - **Theorem:** If $\dim(V) = n$: 1. Any linearly independent set of $n$ vectors in $V$ is a basis for $V$. 2. Any spanning set of $n$ vectors for $V$ is a basis for $V$. 3. Any linearly independent set can be extended to a basis. 4. Any spanning set can be reduced to a basis. ### Linear Transformations - **Definition:** Let $V$ and $W$ be vector spaces over the same field $F$. A function $T: V \to W$ is a linear transformation if for all $\vec{u}, \vec{v} \in V$ and $c \in F$: 1. $T(\vec{u} + \vec{v}) = T(\vec{u}) + T(\vec{v})$ (Additivity) 2. $T(c\vec{u}) = cT(\vec{u})$ (Homogeneity) - **Properties:** - $T(\vec{0}_V) = \vec{0}_W$ - $T(-\vec{v}) = -T(\vec{v})$ - $T(c_1\vec{v}_1 + \dots + c_k\vec{v}_k) = c_1T(\vec{v}_1) + \dots + c_kT(\vec{v}_k)$ - **Null Space (Kernel):** $\text{null}(T) = \{\vec{v} \in V \mid T(\vec{v}) = \vec{0}_W\}$. - **Theorem:** $\text{null}(T)$ is a subspace of $V$. - **Proof:** - $\vec{0}_V \in \text{null}(T)$ since $T(\vec{0}_V) = \vec{0}_W$, so $\text{null}(T)$ is non-empty. - Let $\vec{u}, \vec{v} \in \text{null}(T)$ and $a \in F$. - $T(\vec{u} + \vec{v}) = T(\vec{u}) + T(\vec{v}) = \vec{0}_W + \vec{0}_W = \vec{0}_W$. So $\vec{u} + \vec{v} \in \text{null}(T)$. - $T(a\vec{u}) = aT(\vec{u}) = a\vec{0}_W = \vec{0}_W$. So $a\vec{u} \in \text{null}(T)$. - By the subspace test, $\text{null}(T)$ is a subspace. - **Range (Image):** $\text{range}(T) = \{T(\vec{v}) \mid \vec{v} \in V\}$. - **Theorem:** $\text{range}(T)$ is a subspace of $W$. - **Proof:** - $\vec{0}_W \in \text{range}(T)$ since $T(\vec{0}_V) = \vec{0}_W$, so $\text{range}(T)$ is non-empty. - Let $\vec{x}, \vec{y} \in \text{range}(T)$ and $a \in F$. - Then there exist $\vec{u}, \vec{v} \in V$ such that $T(\vec{u}) = \vec{x}$ and $T(\vec{v}) = \vec{y}$. - $\vec{x} + \vec{y} = T(\vec{u}) + T(\vec{v}) = T(\vec{u} + \vec{v})$. Since $\vec{u} + \vec{v} \in V$, $\vec{x} + \vec{y} \in \text{range}(T)$. - $a\vec{x} = aT(\vec{u}) = T(a\vec{u})$. Since $a\vec{u} \in V$, $a\vec{x} \in \text{range}(T)$. - By the subspace test, $\text{range}(T)$ is a subspace. - **Rank-Nullity Theorem:** If $T: V \to W$ is a linear transformation and $V$ is finite-dimensional, then $\dim(V) = \dim(\text{null}(T)) + \dim(\text{range}(T))$. - **Proof:** Let $\dim(\text{null}(T)) = k$. Let $\{\vec{u}_1, \dots, \vec{u}_k\}$ be a basis for $\text{null}(T)$. - Extend this basis to a basis for $V$: $B = \{\vec{u}_1, \dots, \vec{u}_k, \vec{v}_1, \dots, \vec{v}_m\}$. So $\dim(V) = k+m$. - We need to show that $\dim(\text{range}(T)) = m$. - Consider the set $S = \{T(\vec{v}_1), \dots, T(\vec{v}_m)\}$. - **Claim 1: $S$ spans $\text{range}(T)$.** - Let $\vec{w} \in \text{range}(T)$. Then $\vec{w} = T(\vec{v})$ for some $\vec{v} \in V$. - Since $B$ is a basis for $V$, $\vec{v} = c_1\vec{u}_1 + \dots + c_k\vec{u}_k + d_1\vec{v}_1 + \dots + d_m\vec{v}_m$. - $T(\vec{v}) = T(c_1\vec{u}_1 + \dots + c_k\vec{u}_k + d_1\vec{v}_1 + \dots + d_m\vec{v}_m)$ - $= c_1T(\vec{u}_1) + \dots + c_kT(\vec{u}_k) + d_1T(\vec{v}_1) + \dots + d_mT(\vec{v}_m)$ - Since $\vec{u}_i \in \text{null}(T)$, $T(\vec{u}_i) = \vec{0}_W$. - So $T(\vec{v}) = d_1T(\vec{v}_1) + \dots + d_mT(\vec{v}_m)$. Thus, $S$ spans $\text{range}(T)$. - **Claim 2: $S$ is linearly independent.** - Suppose $e_1T(\vec{v}_1) + \dots + e_mT(\vec{v}_m) = \vec{0}_W$. - By linearity, $T(e_1\vec{v}_1 + \dots + e_m\vec{v}_m) = \vec{0}_W$. - This means $e_1\vec{v}_1 + \dots + e_m\vec{v}_m \in \text{null}(T)$. - Since $\{\vec{u}_1, \dots, \vec{u}_k\}$ is a basis for $\text{null}(T)$, $e_1\vec{v}_1 + \dots + e_m\vec{v}_m = f_1\vec{u}_1 + \dots + f_k\vec{u}_k$ for some scalars $f_j$. - Rearranging, $f_1\vec{u}_1 + \dots + f_k\vec{u}_k - e_1\vec{v}_1 - \dots - e_m\vec{v}_m = \vec{0}_V$. - Since $B = \{\vec{u}_1, \dots, \vec{u}_k, \vec{v}_1, \dots, \vec{v}_m\}$ is a basis for $V$, it is linearly independent. - Therefore, all coefficients must be zero: $f_1=\dots=f_k=0$ and $e_1=\dots=e_m=0$. - This shows $S$ is linearly independent. - Since $S$ is a linearly independent spanning set for $\text{range}(T)$, it is a basis for $\text{range}(T)$. - Thus, $\dim(\text{range}(T)) = m$. - Therefore, $\dim(V) = k+m = \dim(\text{null}(T)) + \dim(\text{range}(T))$. - **Isomorphism:** An invertible linear transformation $T: V \to W$. If an isomorphism exists, $V$ and $W$ are isomorphic, denoted $V \cong W$. - **Theorem:** $V \cong W$ if and only if $\dim(V) = \dim(W)$. ### Matrices and Linear Transformations - **Matrix Representation of a Linear Transformation:** Let $T: V \to W$ be a linear transformation, $B = \{\vec{v}_1, \dots, \vec{v}_n\}$ a basis for $V$, and $C = \{\vec{w}_1, \dots, \vec{w}_m\}$ a basis for $W$. - For each $\vec{v}_j \in B$, $T(\vec{v}_j)$ is a vector in $W$, so it can be written as a linear combination of vectors in $C$: $T(\vec{v}_j) = a_{1j}\vec{w}_1 + a_{2j}\vec{w}_2 + \dots + a_{mj}\vec{w}_m$. - The matrix $[T]_B^C = (a_{ij})$ is an $m \times n$ matrix whose $j$-th column consists of the coordinates of $T(\vec{v}_j)$ with respect to the basis $C$. - For any $\vec{v} \in V$, if $[\vec{v}]_B$ is its coordinate vector with respect to $B$, then $[T(\vec{v})]_C = [T]_B^C [\vec{v}]_B$. - **Change of Basis Matrix:** Let $B = \{\vec{v}_1, \dots, \vec{v}_n\}$ and $B' = \{\vec{v}'_1, \dots, \vec{v}'_n\}$ be two bases for $V$. The change of basis matrix from $B'$ to $B$, denoted $P_{B' \to B}$ or $[I]_{B'}^B$, has columns $[\vec{v}'_j]_B$. - $[\vec{v}]_B = P_{B' \to B} [\vec{v}]_{B'}$. - $P_{B \to B'} = (P_{B' \to B})^{-1}$. - **Similarity:** If $T: V \to V$ is a linear operator, and $A = [T]_B^B$ and $A' = [T]_{B'}^{B'}$ are its matrix representations with respect to bases $B$ and $B'$, then $A' = P^{-1}AP$ for some invertible matrix $P$ (the change of basis matrix from $B'$ to $B$). Matrices $A$ and $A'$ are said to be similar. ### Determinants - **Definition (Leibniz Formula):** For an $n \times n$ matrix $A = (a_{ij})$, $\det(A) = \sum_{\sigma \in S_n} \text{sgn}(\sigma) \prod_{i=1}^n a_{i, \sigma(i)}$, where $S_n$ is the set of all permutations of $\{1, \dots, n\}$ and $\text{sgn}(\sigma)$ is the sign of the permutation. - **Properties:** 1. $\det(I) = 1$. 2. If a row is multiplied by $c$, the determinant is multiplied by $c$. 3. If two rows are swapped, the determinant changes sign. 4. If a multiple of one row is added to another row, the determinant is unchanged. 5. $\det(A) = 0$ if $A$ has a row of zeros, or two identical rows, or linearly dependent rows. 6. $\det(AB) = \det(A)\det(B)$. - **Proof:** This is a fundamental property. One common proof involves showing that $\det(A)$ can be characterized by its properties as a multilinear, alternating function of its column vectors that maps $(I)$ to $1$. Then, show that $f(A) = \det(AB)/\det(B)$ (for $\det(B) \ne 0$) satisfies these same properties for $A$, so $f(A) = \det(A)$, implying $\det(AB) = \det(A)\det(B)$. If $\det(B)=0$, then $B$ is singular, so $AB$ is also singular, thus $\det(AB)=0=\det(A)\det(B)$. 7. $\det(A^T) = \det(A)$. 8. $A$ is invertible if and only if $\det(A) \ne 0$. - **Cofactor Expansion:** $\det(A) = \sum_{j=1}^n (-1)^{i+j} a_{ij} M_{ij}$ (expansion along row $i$), where $M_{ij}$ is the determinant of the submatrix obtained by deleting row $i$ and column $j$. ### Eigenvalues and Eigenvectors - **Definition:** Let $A$ be an $n \times n$ matrix. A scalar $\lambda$ is an eigenvalue of $A$ if there exists a non-zero vector $\vec{v} \in \mathbb{C}^n$ such that $A\vec{v} = \lambda\vec{v}$. The vector $\vec{v}$ is called an eigenvector corresponding to $\lambda$. - **Characteristic Equation:** $A\vec{v} = \lambda\vec{v} \Rightarrow (A - \lambda I)\vec{v} = \vec{0}$. - For a non-zero $\vec{v}$ to exist, the matrix $(A - \lambda I)$ must be singular, meaning $\det(A - \lambda I) = 0$. This is the characteristic equation. - The polynomial $p(\lambda) = \det(A - \lambda I)$ is the characteristic polynomial. Its roots are the eigenvalues. - **Eigenspace:** For an eigenvalue $\lambda$, the set $E_\lambda = \{\vec{v} \in V \mid A\vec{v} = \lambda\vec{v}\}$ is called the eigenspace corresponding to $\lambda$. - $E_\lambda = \text{null}(A - \lambda I)$. It is a subspace. - **Algebraic Multiplicity:** The multiplicity of $\lambda$ as a root of the characteristic polynomial. - **Geometric Multiplicity:** $\dim(E_\lambda)$, the dimension of the eigenspace. - **Theorem:** For any eigenvalue $\lambda$, its geometric multiplicity is less than or equal to its algebraic multiplicity. - **Theorem:** Eigenvectors corresponding to distinct eigenvalues are linearly independent. - **Proof:** Suppose $\vec{v}_1, \dots, \vec{v}_k$ are eigenvectors corresponding to distinct eigenvalues $\lambda_1, \dots, \lambda_k$. Assume for contradiction that they are linearly dependent. - Let $m$ be the smallest integer such that $\vec{v}_1, \dots, \vec{v}_m$ are linearly dependent. Then $\vec{v}_m = c_1\vec{v}_1 + \dots + c_{m-1}\vec{v}_{m-1}$ for some scalars $c_i$, not all zero. (Since $m$ is minimal, $\vec{v}_1, \dots, \vec{v}_{m-1}$ are LI). - Apply $A$ to both sides: $A\vec{v}_m = A(c_1\vec{v}_1 + \dots + c_{m-1}\vec{v}_{m-1})$. - $\lambda_m\vec{v}_m = c_1\lambda_1\vec{v}_1 + \dots + c_{m-1}\lambda_{m-1}\vec{v}_{m-1}$. (Eq 1) - Multiply the original dependency equation by $\lambda_m$: $\lambda_m\vec{v}_m = c_1\lambda_m\vec{v}_1 + \dots + c_{m-1}\lambda_m\vec{v}_{m-1}$. (Eq 2) - Subtract (Eq 1) from (Eq 2): $\vec{0} = c_1(\lambda_m - \lambda_1)\vec{v}_1 + \dots + c_{m-1}(\lambda_m - \lambda_{m-1})\vec{v}_{m-1}$. - Since $\lambda_i$ are distinct, $\lambda_m - \lambda_i \ne 0$ for $i ### Inner Product Spaces - **Definition:** An inner product on a vector space $V$ over $\mathbb{R}$ (or $\mathbb{C}$) is a function $\langle \cdot, \cdot \rangle: V \times V \to F$ such that for all $\vec{u}, \vec{v}, \vec{w} \in V$ and $c \in F$: 1. $\langle \vec{u} + \vec{v}, \vec{w} \rangle = \langle \vec{u}, \vec{w} \rangle + \langle \vec{v}, \vec{w} \rangle$ (Additivity in first argument) 2. $\langle c\vec{u}, \vec{v} \rangle = c\langle \vec{u}, \vec{v} \rangle$ (Homogeneity in first argument) 3. $\langle \vec{u}, \vec{v} \rangle = \overline{\langle \vec{v}, \vec{u} \rangle}$ (Conjugate symmetry; for $\mathbb{R}$, $\langle \vec{u}, \vec{v} \rangle = \langle \vec{v}, \vec{u} \rangle$) 4. $\langle \vec{u}, \vec{u} \rangle \ge 0$, and $\langle \vec{u}, \vec{u} \rangle = 0$ if and only if $\vec{u} = \vec{0}$ (Positive definiteness) - **Examples:** - Euclidean dot product in $\mathbb{R}^n$: $\langle \vec{x}, \vec{y} \rangle = \vec{x} \cdot \vec{y} = \sum x_i y_i$. - Standard inner product in $\mathbb{C}^n$: $\langle \vec{x}, \vec{y} \rangle = \vec{x}^H \vec{y} = \sum x_i \overline{y_i}$. - For continuous functions $f, g \in C[a,b]$: $\langle f, g \rangle = \int_a^b f(x)\overline{g(x)} dx$. - **Norm:** Induced by inner product: $||\vec{v}|| = \sqrt{\langle \vec{v}, \vec{v} \rangle}$. - **Orthogonality:** $\vec{u}$ and $\vec{v}$ are orthogonal if $\langle \vec{u}, \vec{v} \rangle = 0$. - **Pythagorean Theorem:** If $\vec{u} \perp \vec{v}$, then $||\vec{u} + \vec{v}||^2 = ||\vec{u}||^2 + ||\vec{v}||^2$. - **Proof:** $||\vec{u} + \vec{v}||^2 = \langle \vec{u} + \vec{v}, \vec{u} + \vec{v} \rangle = \langle \vec{u}, \vec{u} \rangle + \langle \vec{u}, \vec{v} \rangle + \langle \vec{v}, \vec{u} \rangle + \langle \vec{v}, \vec{v} \rangle$. - Since $\vec{u} \perp \vec{v}$, $\langle \vec{u}, \vec{v} \rangle = 0$. By conjugate symmetry, $\langle \vec{v}, \vec{u} \rangle = \overline{0} = 0$. - So $||\vec{u} + \vec{v}||^2 = ||\vec{u}||^2 + ||\vec{v}||^2$. - **Cauchy-Schwarz Inequality:** $|\langle \vec{u}, \vec{v} \rangle| \le ||\vec{u}|| \cdot ||\vec{v}||$. - **Proof:** - If $\vec{v} = \vec{0}$, the inequality holds trivially ($0 \le 0$). - Assume $\vec{v} \ne \vec{0}$. Let $t \in F$. Consider the vector $\vec{u} - t\vec{v}$. - By positive definiteness, $\langle \vec{u} - t\vec{v}, \vec{u} - t\vec{v} \rangle \ge 0$. - Expanding: $\langle \vec{u}, \vec{u} \rangle - t\langle \vec{v}, \vec{u} \rangle - \overline{t}\langle \vec{u}, \vec{v} \rangle + |t|^2\langle \vec{v}, \vec{v} \rangle \ge 0$. - Let $t = \frac{\langle \vec{u}, \vec{v} \rangle}{\langle \vec{v}, \vec{v} \rangle}$. - Substitute $t$: $||\vec{u}||^2 - \frac{\langle \vec{u}, \vec{v} \rangle}{\langle \vec{v}, \vec{v} \rangle}\langle \vec{v}, \vec{u} \rangle - \frac{\overline{\langle \vec{u}, \vec{v} \rangle}}{\langle \vec{v}, \vec{v} \rangle}\langle \vec{u}, \vec{v} \rangle + \frac{|\langle \vec{u}, \vec{v} \rangle|^2}{|\langle \vec{v}, \vec{v} \rangle|^2}\langle \vec{v}, \vec{v} \rangle \ge 0$. - Since $\langle \vec{v}, \vec{u} \rangle = \overline{\langle \vec{u}, \vec{v} \rangle}$, we have: $||\vec{u}||^2 - \frac{|\langle \vec{u}, \vec{v} \rangle|^2}{||\vec{v}||^2} - \frac{|\langle \vec{u}, \vec{v} \rangle|^2}{||\vec{v}||^2} + \frac{|\langle \vec{u}, \vec{v} \rangle|^2}{||\vec{v}||^2} \ge 0$. - $||\vec{u}||^2 - \frac{|\langle \vec{u}, \vec{v} \rangle|^2}{||\vec{v}||^2} \ge 0$. - $||\vec{u}||^2 ||\vec{v}||^2 \ge |\langle \vec{u}, \vec{v} \rangle|^2$. - Taking the square root of both sides gives $|\langle \vec{u}, \vec{v} \rangle| \le ||\vec{u}|| \cdot ||\vec{v}||$. - **Triangle Inequality:** $||\vec{u} + \vec{v}|| \le ||\vec{u}|| + ||\vec{v}||$. - **Proof:** - $||\vec{u} + \vec{v}||^2 = \langle \vec{u} + \vec{v}, \vec{u} + \vec{v} \rangle = ||\vec{u}||^2 + \langle \vec{u}, \vec{v} \rangle + \langle \vec{v}, \vec{u} \rangle + ||\vec{v}||^2$. - Since $\langle \vec{v}, \vec{u} \rangle = \overline{\langle \vec{u}, \vec{v} \rangle}$, $\langle \vec{u}, \vec{v} \rangle + \langle \vec{v}, \vec{u} \rangle = \langle \vec{u}, \vec{v} \rangle + \overline{\langle \vec{u}, \vec{v} \rangle} = 2\text{Re}(\langle \vec{u}, \vec{v} \rangle)$. - So $||\vec{u} + \vec{v}||^2 = ||\vec{u}||^2 + 2\text{Re}(\langle \vec{u}, \vec{v} \rangle) + ||\vec{v}||^2$. - From Cauchy-Schwarz, $\text{Re}(\langle \vec{u}, \vec{v} \rangle) \le |\langle \vec{u}, \vec{v} \rangle| \le ||\vec{u}||||\vec{v}||$. - Therefore, $||\vec{u} + \vec{v}||^2 \le ||\vec{u}||^2 + 2||\vec{u}||||\vec{v}|| + ||\vec{v}||^2 = (||\vec{u}|| + ||\vec{v}||)^2$. - Taking the square root of both sides gives $||\vec{u} + \vec{v}|| \le ||\vec{u}|| + ||\vec{v}||$. - **Orthogonal Basis:** A basis $B = \{\vec{v}_1, \dots, \vec{v}_n\}$ such that $\langle \vec{v}_i, \vec{v}_j \rangle = 0$ for $i \ne j$. - **Orthonormal Basis:** An orthogonal basis where $||\vec{v}_i|| = 1$ for all $i$. - If $B = \{\vec{e}_1, \dots, \vec{e}_n\}$ is an orthonormal basis, then any $\vec{v} = \sum_{i=1}^n \langle \vec{v}, \vec{e}_i \rangle \vec{e}_i$. - **Gram-Schmidt Process:** Converts any basis into an orthonormal basis. - Given basis $\{\vec{v}_1, \dots, \vec{v}_n\}$, construct orthonormal basis $\{\vec{u}_1, \dots, \vec{u}_n\}$: 1. $\vec{u}_1 = \frac{\vec{v}_1}{||\vec{v}_1||}$ 2. $\vec{w}_2 = \vec{v}_2 - \langle \vec{v}_2, \vec{u}_1 \rangle \vec{u}_1$, then $\vec{u}_2 = \frac{\vec{w}_2}{||\vec{w}_2||}$ 3. $\vec{w}_k = \vec{v}_k - \sum_{j=1}^{k-1} \langle \vec{v}_k, \vec{u}_j \rangle \vec{u}_j$, then $\vec{u}_k = \frac{\vec{w}_k}{||\vec{w}_k||}$ - **QR Factorization:** Any $m \times n$ matrix $A$ with linearly independent columns can be factored as $A = QR$, where $Q$ is an $m \times n$ matrix with orthonormal columns and $R$ is an $n \times n$ upper triangular matrix with positive diagonal entries. The columns of $Q$ are the orthonormal vectors from Gram-Schmidt applied to the columns of $A$. ### Adjoints and Spectral Theorem - **Adjoint Operator:** For a linear operator $T: V \to V$ on an inner product space $V$, its adjoint $T^*: V \to V$ is defined by $\langle T\vec{v}, \vec{w} \rangle = \langle \vec{v}, T^*\vec{w} \rangle$ for all $\vec{v}, \vec{w} \in V$. - For matrices: If $A$ is the matrix of $T$ with respect to an orthonormal basis, then $A^*$ (conjugate transpose) is the matrix of $T^*$. - **Self-Adjoint (Hermitian) Operator:** $T = T^*$ (or $A = A^H$ for matrices). - **Normal Operator:** $TT^* = T^*T$ (or $AA^H = A^H A$ for matrices). - **Unitary/Orthogonal Operator:** $T^*T = TT^* = I$ (or $A^H A = I$ for matrices). These preserve inner products and norms. - **Theorem:** Eigenvalues of a self-adjoint operator (or Hermitian matrix) are real. - **Proof:** Let $\lambda$ be an eigenvalue of $T$ and $\vec{v}$ be a corresponding non-zero eigenvector. - $\lambda\langle \vec{v}, \vec{v} \rangle = \langle \lambda\vec{v}, \vec{v} \rangle = \langle T\vec{v}, \vec{v} \rangle$. - Since $T$ is self-adjoint, $\langle T\vec{v}, \vec{v} \rangle = \langle \vec{v}, T^*\vec{v} \rangle = \langle \vec{v}, T\vec{v} \rangle = \langle \vec{v}, \lambda\vec{v} \rangle = \overline{\lambda}\langle \vec{v}, \vec{v} \rangle$. - So $\lambda\langle \vec{v}, \vec{v} \rangle = \overline{\lambda}\langle \vec{v}, \vec{v} \rangle$. - Since $\vec{v} \ne \vec{0}$, $\langle \vec{v}, \vec{v} \rangle \ne 0$. Thus, $\lambda = \overline{\lambda}$, which means $\lambda$ is real. - **Theorem:** Eigenvectors of a self-adjoint operator corresponding to distinct eigenvalues are orthogonal. - **Proof:** Let $\lambda_1, \lambda_2$ be distinct eigenvalues with corresponding eigenvectors $\vec{v}_1, \vec{v}_2$. So $T\vec{v}_1 = \lambda_1\vec{v}_1$ and $T\vec{v}_2 = \lambda_2\vec{v}_2$. - Consider $\langle T\vec{v}_1, \vec{v}_2 \rangle$. - On one hand, $\langle T\vec{v}_1, \vec{v}_2 \rangle = \langle \lambda_1\vec{v}_1, \vec{v}_2 \rangle = \lambda_1\langle \vec{v}_1, \vec{v}_2 \rangle$. - On the other hand, since $T$ is self-adjoint, $\langle T\vec{v}_1, \vec{v}_2 \rangle = \langle \vec{v}_1, T^*\vec{v}_2 \rangle = \langle \vec{v}_1, T\vec{v}_2 \rangle = \langle \vec{v}_1, \lambda_2\vec{v}_2 \rangle = \overline{\lambda_2}\langle \vec{v}_1, \vec{v}_2 \rangle$. - Since eigenvalues of self-adjoint operators are real, $\overline{\lambda_2} = \lambda_2$. - So $\lambda_1\langle \vec{v}_1, \vec{v}_2 \rangle = \lambda_2\langle \vec{v}_1, \vec{v}_2 \rangle$. - $(\lambda_1 - \lambda_2)\langle \vec{v}_1, \vec{v}_2 \rangle = 0$. - Since $\lambda_1 \ne \lambda_2$, we must have $\langle \vec{v}_1, \vec{v}_2 \rangle = 0$. Thus, $\vec{v}_1$ and $\vec{v}_2$ are orthogonal. - **Spectral Theorem (for Hermitian/Symmetric Matrices):** An $n \times n$ Hermitian matrix $A$ (real symmetric matrix) has $n$ real eigenvalues and $n$ linearly independent eigenvectors that form an orthonormal basis for $\mathbb{C}^n$ (or $\mathbb{R}^n$). - This means $A$ is unitarily diagonalizable (or orthogonally diagonalizable for real symmetric matrices): $A = UDU^H$ where $U$ is unitary (columns are orthonormal eigenvectors) and $D$ is a diagonal matrix of eigenvalues. - **Proof Sketch:** 1. Show that $A$ has at least one eigenvalue and eigenvector. (Fundamental Theorem of Algebra ensures roots for char. polynomial). 2. Show eigenvalues are real. (Proven above). 3. Use induction on dimension $n$. For $n=1$, trivial. Assume true for $n-1$. 4. Let $\vec{u}_1$ be a normalized eigenvector for $\lambda_1$. 5. Let $W = \{\vec{x} \in \mathbb{C}^n \mid \langle \vec{x}, \vec{u}_1 \rangle = 0\}$ be the orthogonal complement of $\text{span}(\vec{u}_1)$. $\dim(W) = n-1$. 6. Show that $W$ is invariant under $A$ (i.e., if $\vec{w} \in W$, then $A\vec{w} \in W$). For $\vec{w} \in W$, $\langle A\vec{w}, \vec{u}_1 \rangle = \langle \vec{w}, A^H\vec{u}_1 \rangle = \langle \vec{w}, A\vec{u}_1 \rangle = \langle \vec{w}, \lambda_1\vec{u}_1 \rangle = \lambda_1\langle \vec{w}, \vec{u}_1 \rangle = \lambda_1 \cdot 0 = 0$. So $A\vec{w} \in W$. 7. The restriction of $A$ to $W$ is a self-adjoint operator on $W$. By induction, this restricted operator has an orthonormal basis of eigenvectors for $W$. 8. Combining $\vec{u}_1$ with this basis gives an orthonormal basis of eigenvectors for $A$. - **Singular Value Decomposition (SVD):** Any $m \times n$ matrix $A$ can be factored as $A = U\Sigma V^H$, where $U$ is an $m \times m$ unitary matrix, $\Sigma$ is an $m \times n$ diagonal matrix with non-negative real entries (singular values), and $V$ is an $n \times n$ unitary matrix. - The columns of $U$ are the left singular vectors (eigenvectors of $AA^H$). - The columns of $V$ are the right singular vectors (eigenvectors of $A^H A$). - The non-zero singular values are the square roots of the non-zero eigenvalues of $A^H A$ (or $AA^H$). ### Jordan Canonical Form - **Generalized Eigenvectors:** A vector $\vec{v}$ is a generalized eigenvector of rank $k$ corresponding to eigenvalue $\lambda$ if $(A - \lambda I)^k \vec{v} = \vec{0}$ but $(A - \lambda I)^{k-1} \vec{v} \ne \vec{0}$. - **Jordan Block:** A matrix of the form: $$ J_k(\lambda) = \begin{pmatrix} \lambda & 1 & & \\ & \lambda & 1 & \\ & & \ddots & \ddots \\ & & & \lambda & 1 \\ & & & & \lambda \end{pmatrix} $$ - **Jordan Canonical Form Theorem:** Every square matrix $A$ is similar to a block diagonal matrix $J$, called the Jordan Canonical Form (JCF), where each block is a Jordan block. $A = PJP^{-1}$. - The diagonal entries of $J$ are the eigenvalues of $A$. - The number of Jordan blocks for an eigenvalue $\lambda$ is its geometric multiplicity. - The sum of the sizes of the Jordan blocks for an eigenvalue $\lambda$ is its algebraic multiplicity. - **Proof:** This is a deep theorem. The proof typically involves constructing a basis of generalized eigenvectors, often by working with the generalized eigenspaces $G_\lambda = \text{null}((A-\lambda I)^k)$ for sufficiently large $k$, and then finding a chain of vectors for each block. This is usually covered in a second course on linear algebra. ### Minimal Polynomial - **Annihilating Polynomial:** A polynomial $p(x)$ is an annihilating polynomial for a matrix $A$ (or operator $T$) if $p(A) = 0$ (or $p(T) = 0$). - **Cayley-Hamilton Theorem:** Every square matrix satisfies its own characteristic polynomial. That is, if $p(\lambda) = \det(A - \lambda I)$ is the characteristic polynomial of $A$, then $p(A) = 0$. - **Proof Sketch:** This proof is non-trivial. A common approach involves the adjugate matrix $adj(A-\lambda I)$, which is a polynomial in $\lambda$ whose entries are cofactors. $(A-\lambda I)adj(A-\lambda I) = \det(A-\lambda I)I$. Expanding the adjugate as a polynomial in $\lambda$ with matrix coefficients, and equating coefficients on both sides leads to $p(A)=0$. - **Minimal Polynomial:** The unique monic polynomial $m(x)$ of lowest degree such that $m(A) = 0$. - **Properties:** 1. The minimal polynomial divides every annihilating polynomial (including the characteristic polynomial). 2. The minimal polynomial and the characteristic polynomial have the same roots (eigenvalues), but possibly with different multiplicities. 3. A matrix $A$ is diagonalizable if and only if its minimal polynomial is a product of distinct linear factors.