Linear Algebra Topics

Cheatsheet Content

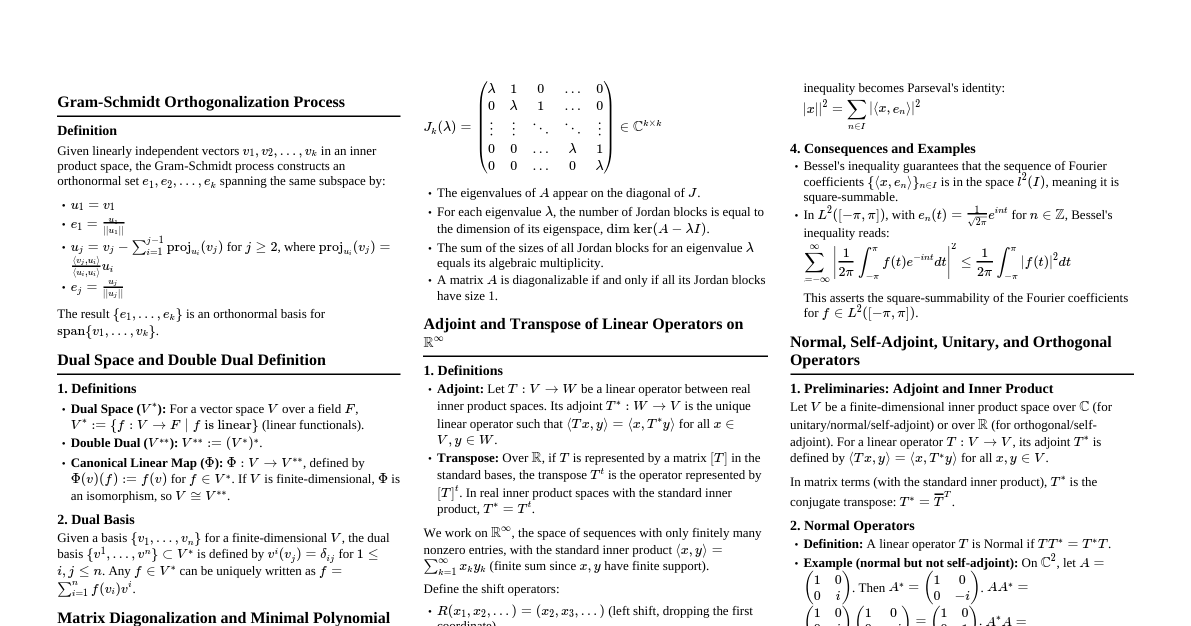

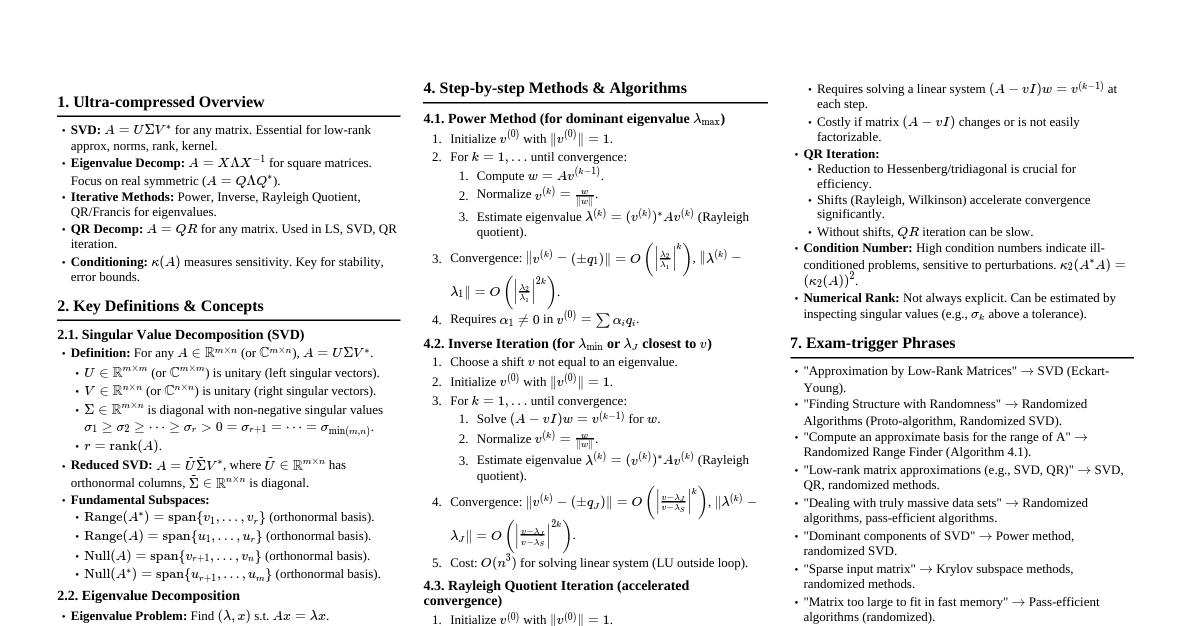

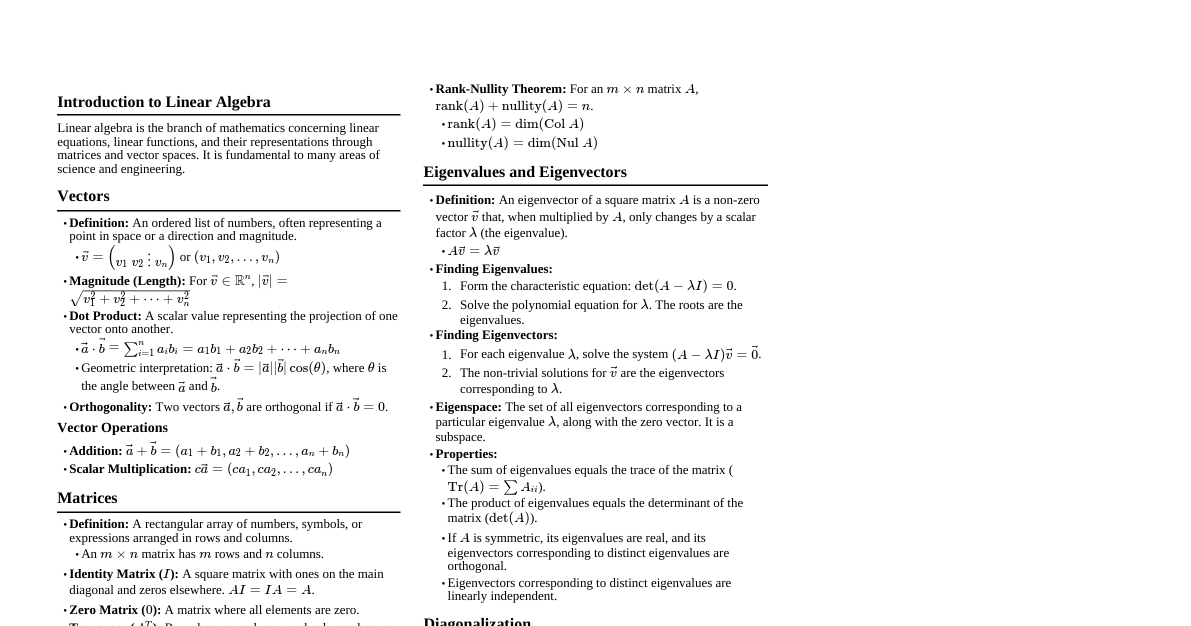

### Vectors

- **Definition:** A vector is an ordered list of numbers (components); geometrically, it represents a magnitude and a direction.
  - $\vec{v} = \begin{pmatrix} v_1 \\ v_2 \\ \vdots \\ v_n \end{pmatrix}$ or $\vec{v} = (v_1, v_2, \dots, v_n)$
- **Magnitude (Euclidean Norm):** The length of a vector.
  - $|\vec{v}| = ||\vec{v}||_2 = \sqrt{v_1^2 + v_2^2 + \dots + v_n^2}$
- **Vector Addition:** Component-wise addition.
  - $\vec{u} + \vec{v} = (u_1+v_1, u_2+v_2, \dots, u_n+v_n)$
- **Scalar Multiplication:** Multiply each component by the scalar.
  - $c\vec{v} = (cv_1, cv_2, \dots, cv_n)$
- **Dot Product (Scalar Product):** A scalar that measures how closely two vectors align; $\frac{\vec{u} \cdot \vec{v}}{||\vec{v}||}$ is the scalar projection of $\vec{u}$ onto $\vec{v}$.
  - $\vec{u} \cdot \vec{v} = \sum_{i=1}^n u_i v_i = u_1v_1 + u_2v_2 + \dots + u_nv_n$
  - Geometric interpretation: $\vec{u} \cdot \vec{v} = ||\vec{u}|| \, ||\vec{v}|| \cos(\theta)$, where $\theta$ is the angle between the vectors.
  - If $\vec{u} \cdot \vec{v} = 0$, the vectors are orthogonal (perpendicular).
- **Cross Product (Vector Product, 3D only):** A vector perpendicular to both input vectors.
  - $\vec{u} \times \vec{v} = (u_2v_3 - u_3v_2, u_3v_1 - u_1v_3, u_1v_2 - u_2v_1)$
  - Magnitude: $||\vec{u} \times \vec{v}|| = ||\vec{u}|| \, ||\vec{v}|| \sin(\theta)$
  - Direction: determined by the right-hand rule.

### Matrices

- **Definition:** A rectangular array of numbers, symbols, or expressions arranged in rows and columns.
  - An $m \times n$ matrix has $m$ rows and $n$ columns.

$$ A = \begin{pmatrix} a_{11} & a_{12} & \dots & a_{1n} \\ a_{21} & a_{22} & \dots & a_{2n} \\ \vdots & \vdots & \ddots & \vdots \\ a_{m1} & a_{m2} & \dots & a_{mn} \end{pmatrix} $$

- **Matrix Addition:** Add corresponding elements; both matrices must have the same dimensions.
  - $(A+B)_{ij} = a_{ij} + b_{ij}$
- **Scalar Multiplication:** Multiply each element by the scalar.
  - $(cA)_{ij} = c \cdot a_{ij}$
- **Matrix Multiplication:** The product $AB$ is defined only if the number of columns of $A$ equals the number of rows of $B$. If $A$ is $m \times p$ and $B$ is $p \times n$, then $AB$ is $m \times n$.
  - $(AB)_{ij} = \sum_{k=1}^p a_{ik}b_{kj}$
  - Matrix multiplication is not commutative: in general, $AB \neq BA$.
- **Transpose:** Rows become columns and columns become rows.
  - $(A^T)_{ij} = a_{ji}$
- **Identity Matrix ($I$):** A square matrix with ones on the main diagonal and zeros elsewhere; $AI = IA = A$.

$$ I_n = \begin{pmatrix} 1 & 0 & \dots & 0 \\ 0 & 1 & \dots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \dots & 1 \end{pmatrix} $$

- **Inverse Matrix ($A^{-1}$):** For a square matrix $A$, if $A^{-1}$ exists, then $AA^{-1} = A^{-1}A = I$.
  - A square matrix is invertible if and only if its determinant is non-zero.
  - For a $2 \times 2$ matrix $A = \begin{pmatrix} a & b \\ c & d \end{pmatrix}$ with $\det(A) = ad - bc \neq 0$, $A^{-1} = \frac{1}{\det(A)} \begin{pmatrix} d & -b \\ -c & a \end{pmatrix}$.

### Determinants

- **Definition:** A scalar computed from the entries of a square matrix. Among other things, it indicates whether the matrix is invertible ($\det(A) \neq 0$).
- **$2 \times 2$ Matrix:**
  - If $A = \begin{pmatrix} a & b \\ c & d \end{pmatrix}$, then $\det(A) = ad - bc$.
- **$3 \times 3$ Matrix (cofactor expansion along the first row):**
  - If $A = \begin{pmatrix} a & b & c \\ d & e & f \\ g & h & i \end{pmatrix}$, then $\det(A) = a(ei - fh) - b(di - fg) + c(dh - eg)$.
  - Sarrus' rule gives the same value: $\det(A) = aei + bfg + cdh - ceg - bdi - afh$.
- **General $n \times n$ Matrix (Cofactor Expansion):**
  - $\det(A) = \sum_{j=1}^n (-1)^{i+j} a_{ij} M_{ij}$ (expansion along row $i$)
  - $\det(A) = \sum_{i=1}^n (-1)^{i+j} a_{ij} M_{ij}$ (expansion along column $j$)
  - Here the minor $M_{ij}$ is the determinant of the submatrix formed by deleting row $i$ and column $j$.
- **Properties:**
  - $\det(A^T) = \det(A)$
  - $\det(AB) = \det(A)\det(B)$
  - $\det(A^{-1}) = \frac{1}{\det(A)}$ (when $A$ is invertible)
  - $\det(cA) = c^n \det(A)$ for an $n \times n$ matrix.
  - If a row or column is all zeros, $\det(A) = 0$.
  - If two rows or two columns are identical, $\det(A) = 0$.
  - If the rows (or columns) are linearly dependent, $\det(A) = 0$.
  - Swapping two rows (or two columns) changes the sign of the determinant.
  - Adding a multiple of one row (or column) to another does not change the determinant.

### Systems of Linear Equations

- **Definition:** A set of linear equations involving the same variables.

$$ a_{11}x_1 + a_{12}x_2 + \dots + a_{1n}x_n = b_1 $$
$$ a_{21}x_1 + a_{22}x_2 + \dots + a_{2n}x_n = b_2 $$
$$ \vdots $$
$$ a_{m1}x_1 + a_{m2}x_2 + \dots + a_{mn}x_n = b_m $$

- **Matrix Form:** $A\vec{x} = \vec{b}$, where $A$ is the coefficient matrix, $\vec{x}$ is the vector of unknowns, and $\vec{b}$ is the constant vector.
- **Augmented Matrix:** $[A \mid \vec{b}]$
- **Methods for Solving:**
  - **Gaussian Elimination:** Use elementary row operations to transform the augmented matrix into row echelon form, then solve by back substitution.
    - Elementary row operations:
      1. Swap two rows.
      2. Multiply a row by a non-zero scalar.
      3. Add a multiple of one row to another row.
  - **Gauss-Jordan Elimination:** Continues the elimination to reduced row echelon form, from which the solution can be read off directly.
  - **Matrix Inverse (if $A$ is square and invertible):** $\vec{x} = A^{-1}\vec{b}$
  - **Cramer's Rule:** For square systems with a unique solution ($\det(A) \neq 0$).
    - $x_i = \frac{\det(A_i)}{\det(A)}$, where $A_i$ is the matrix formed by replacing the $i$-th column of $A$ with $\vec{b}$.
- **Types of Solutions** ($n$ = number of variables):
  - **Unique Solution:** The lines/planes intersect at a single point. $\text{rank}(A) = \text{rank}([A|\vec{b}]) = n$.
  - **No Solution (Inconsistent):** The equations contradict each other (e.g., parallel, distinct lines in 2D). $\text{rank}(A) < \text{rank}([A|\vec{b}])$.
  - **Infinitely Many Solutions:** $\text{rank}(A) = \text{rank}([A|\vec{b}]) < n$; the solution set has $n - \text{rank}(A)$ free variables.

### Vector Spaces

- **Definition:** A set $V$ of vectors, together with two operations (vector addition and scalar multiplication), that satisfies ten axioms.
- **Axioms:** Closure under addition and scalar multiplication, associativity, commutativity of addition, existence of a zero vector, existence of additive inverses, the distributive properties, and the identity $1\vec{v} = \vec{v}$ for scalar multiplication.
- **Subspace:** A subset $W$ of a vector space $V$ that is itself a vector space under the same operations.
  - To be a subspace, $W$ must:
    1. Contain the zero vector.
    2. Be closed under vector addition.
    3. Be closed under scalar multiplication.
- **Span:** The set of all linear combinations of a set of vectors $\{\vec{v}_1, \dots, \vec{v}_k\}$.
  - $\text{span}\{\vec{v}_1, \dots, \vec{v}_k\} = \{c_1\vec{v}_1 + \dots + c_k\vec{v}_k \mid c_i \in \mathbb{R}\}$
- **Linear Independence:** A set of vectors $\{\vec{v}_1, \dots, \vec{v}_k\}$ is linearly independent if the only solution to $c_1\vec{v}_1 + \dots + c_k\vec{v}_k = \vec{0}$ is $c_1 = \dots = c_k = 0$.
- **Basis:** A set of vectors in a vector space $V$ that is both linearly independent and spans $V$.
  - Every vector in $V$ can be expressed uniquely as a linear combination of the basis vectors.
- **Dimension:** The number of vectors in any basis of the vector space (all bases have the same size).
- **Column Space ($\text{Col}(A)$):** The span of the columns of $A$; a subspace of $\mathbb{R}^m$ when $A$ is $m \times n$.
- **Null Space ($\text{Null}(A)$):** The set of all solutions to $A\vec{x} = \vec{0}$; a subspace of $\mathbb{R}^n$ when $A$ is $m \times n$.
  - The dimension of the null space is called the nullity.
- **Row Space ($\text{Row}(A)$):** The span of the rows of $A$; a subspace of $\mathbb{R}^n$.
- **Rank-Nullity Theorem:** For an $m \times n$ matrix $A$, $\text{rank}(A) + \text{nullity}(A) = n$.
  - $\text{rank}(A)$ is the dimension of the column space (equivalently, of the row space). It equals the number of pivot positions in a row echelon form of $A$.

### Eigenvalues & Eigenvectors

- **Definition:** An eigenvector of a square matrix $A$ is a non-zero vector $\vec{v}$ such that $A\vec{v}$ is a scalar multiple of $\vec{v}$; that scalar is the corresponding eigenvalue $\lambda$.
  - $A\vec{v} = \lambda\vec{v}$
- **Finding Eigenvalues:**
  1. Form the characteristic equation: $\det(A - \lambda I) = 0$.
  2. Solve this polynomial equation for $\lambda$; its roots are the eigenvalues.
- **Finding Eigenvectors:**
  1. For each eigenvalue $\lambda$, solve the homogeneous system $(A - \lambda I)\vec{v} = \vec{0}$.
  2. The solution set is the eigenspace for $\lambda$; any non-zero vector in it is an eigenvector.
- **Eigenspace:** The set of all eigenvectors for a given eigenvalue $\lambda$, together with the zero vector, i.e. $\text{Null}(A - \lambda I)$. It is a subspace.
- **Diagonalization:** A square matrix $A$ is diagonalizable if there exist an invertible matrix $P$ and a diagonal matrix $D$ such that $A = PDP^{-1}$.
  - The columns of $P$ are $n$ linearly independent eigenvectors of $A$.
  - The diagonal entries of $D$ are the corresponding eigenvalues, in the same order as the columns of $P$.
  - An $n \times n$ matrix is diagonalizable if and only if it has $n$ linearly independent eigenvectors.
- **Applications:** Differential equations, principal component analysis (PCA), quantum mechanics.
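
For a $2 \times 2$ matrix, the characteristic equation $\det(A - \lambda I) = 0$ reduces to $\lambda^2 - \operatorname{tr}(A)\lambda + \det(A) = 0$. That makes the two-step eigenvalue/eigenvector recipe easy to carry out by hand. The minimal Python sketch below assumes real, distinct eigenvalues. `eigen_2x2` is a hypothetical helper name, not a library function.

```python
import math


def eigen_2x2(a, b, c, d):
    """Eigenpairs of A = [[a, b], [c, d]] (sketch: real, distinct eigenvalues only).

    Step 1: solve lambda^2 - tr(A) * lambda + det(A) = 0.
    Step 2: for each root, pick a non-zero solution of (A - lambda I) v = 0.
    """
    trace, det = a + d, a * d - b * c
    disc = trace * trace - 4 * det  # discriminant of the characteristic polynomial
    if disc <= 0:
        raise ValueError("this sketch handles only real, distinct eigenvalues")
    root = math.sqrt(disc)
    pairs = []
    for lam in ((trace + root) / 2, (trace - root) / 2):
        if b != 0:
            v = (b, lam - a)  # satisfies row 1: (a - lam) x + b y = 0
        elif c != 0:
            v = (lam - d, c)  # satisfies row 2: c x + (d - lam) y = 0
        else:
            # A is diagonal, so the standard basis vectors are eigenvectors.
            v = (1.0, 0.0) if math.isclose(lam, a) else (0.0, 1.0)
        pairs.append((lam, v))
    return pairs


# Check A v = lambda v for A = [[2, 1], [1, 2]] (eigenvalues 3 and 1).
a, b, c, d = 2, 1, 1, 2
for lam, (x, y) in eigen_2x2(a, b, c, d):
    Av = (a * x + b * y, c * x + d * y)
    print(lam, (x, y), Av)
```

Because $\lambda$ is a root, $A - \lambda I$ is singular. Any non-zero row of it therefore determines the eigenvector direction, which is why the sketch only needs one row.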
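
The formulas in the Vectors section translate directly into code. The sketch below uses plain Python tuples and no external libraries. `dot`, `norm`, `angle`, and `cross` are hypothetical helper names. It checks two things: $\vec{u} \times \vec{v}$ is orthogonal to both inputs, and its length matches $||\vec{u}|| \, ||\vec{v}|| \sin(\theta)$.

```python
import math


def dot(u, v):
    """Dot product: sum of component-wise products."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same number of components")
    return sum(ui * vi for ui, vi in zip(u, v))


def norm(v):
    """Euclidean norm ||v||_2 = sqrt(v . v)."""
    return math.sqrt(dot(v, v))


def angle(u, v):
    """Angle theta (radians) from u . v = ||u|| ||v|| cos(theta); u, v non-zero."""
    cos_theta = dot(u, v) / (norm(u) * norm(v))
    return math.acos(max(-1.0, min(1.0, cos_theta)))  # clamp rounding error


def cross(u, v):
    """Cross product of two 3-D vectors."""
    u1, u2, u3 = u
    v1, v2, v3 = v
    return (u2 * v3 - u3 * v2, u3 * v1 - u1 * v3, u1 * v2 - u2 * v1)


u, v = (1, 2, 3), (4, 5, 6)
w = cross(u, v)
print(w, dot(w, u), dot(w, v))  # (-3, 6, -3) 0 0: w is orthogonal to u and v
print(norm(w), norm(u) * norm(v) * math.sin(angle(u, v)))  # equal up to rounding
```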
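
The Matrices section gives the multiplication rule $(AB)_{ij} = \sum_k a_{ik}b_{kj}$ and the $2 \times 2$ inverse formula. Both can be checked with a short sketch. It stores matrices as lists of lists and uses `fractions.Fraction` so the inverse is exact. `matmul` and `inverse_2x2` are hypothetical names for illustration.

```python
from fractions import Fraction


def matmul(A, B):
    """(AB)_ij = sum_k a_ik * b_kj; needs (columns of A) == (rows of B)."""
    if len(A[0]) != len(B):
        raise ValueError("number of columns of A must equal number of rows of B")
    p, n = len(B), len(B[0])
    return [[sum(row[k] * B[k][j] for k in range(p)) for j in range(n)] for row in A]


def inverse_2x2(A):
    """Inverse of [[a, b], [c, d]] as (1 / det) * [[d, -b], [-c, a]]."""
    (a, b), (c, d) = A
    det = a * d - b * c
    if det == 0:
        raise ValueError("matrix is singular (det = 0), so it has no inverse")
    s = Fraction(1) / det  # exact for integer or Fraction entries
    return [[s * d, -s * b], [-s * c, s * a]]


A = [[1, 2], [3, 4]]
B = [[0, 1], [1, 0]]
print(matmul(A, B), matmul(B, A))  # [[2, 1], [4, 3]] vs [[3, 4], [1, 2]]: AB != BA
print(matmul(A, inverse_2x2(A)))   # identity matrix, with Fraction entries
```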
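
The cofactor expansion in the Determinants section is naturally recursive. The sketch below expands along the first row and compares the result with Sarrus' rule on a $3 \times 3$ example. `det` and `det_sarrus` are hypothetical names. Cofactor expansion takes $O(n!)$ operations, so it is only reasonable for small matrices; numerical libraries typically use elimination-based methods instead.

```python
def det(A):
    """Determinant by cofactor expansion along the first row (O(n!), small n only)."""
    n = len(A)
    if n == 1:
        return A[0][0]
    total = 0
    for j in range(n):
        # Minor M_1j: delete the first row and column j.
        minor = [row[:j] + row[j + 1:] for row in A[1:]]
        # The 1-based sign (-1)^(1 + (j + 1)) simplifies to (-1)^j.
        total += (-1) ** j * A[0][j] * det(minor)
    return total


def det_sarrus(A):
    """Sarrus' rule, valid for 3 x 3 matrices only."""
    (a, b, c), (d, e, f), (g, h, i) = A
    return a * e * i + b * f * g + c * d * h - c * e * g - b * d * i - a * f * h


A = [[2, -3, 1], [2, 0, -1], [1, 4, 5]]
A_T = [list(col) for col in zip(*A)]    # transpose
print(det(A), det_sarrus(A), det(A_T))  # 49 49 49
```

The last value illustrates the property $\det(A^T) = \det(A)$.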
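
Gauss-Jordan elimination can be sketched using only the three elementary row operations listed under Systems of Linear Equations. This version assumes a square coefficient matrix and uses `fractions.Fraction` for exact arithmetic. `gauss_jordan` is a hypothetical name. The sketch takes the first non-zero pivot it finds; floating-point implementations usually choose the largest available pivot (partial pivoting) for numerical stability.

```python
from fractions import Fraction


def gauss_jordan(A, b):
    """Solve A x = b (A square) by reducing [A | b] to reduced row echelon form.

    Raises ValueError if A is singular, i.e. there is no unique solution.
    """
    n = len(A)
    # Build the augmented matrix [A | b] with exact entries.
    M = [[Fraction(x) for x in row] + [Fraction(bi)] for row, bi in zip(A, b)]
    for col in range(n):
        # Row op 1: bring a row with a non-zero entry in this column into place.
        pivot = next((r for r in range(col, n) if M[r][col] != 0), None)
        if pivot is None:
            raise ValueError("matrix is singular: no unique solution")
        M[col], M[pivot] = M[pivot], M[col]
        # Row op 2: scale the pivot row so the pivot becomes 1.
        p = M[col][col]
        M[col] = [x / p for x in M[col]]
        # Row op 3: subtract multiples of the pivot row to clear the column.
        for r in range(n):
            if r != col and M[r][col] != 0:
                factor = M[r][col]
                M[r] = [x - factor * y for x, y in zip(M[r], M[col])]
    # In RREF, [A | b] has become [I | x], so the last column is the solution.
    return [row[-1] for row in M]


# 2x + y - z = 8,  -3x - y + 2z = -11,  -2x + y + 2z = -3
print(gauss_jordan([[2, 1, -1], [-3, -1, 2], [-2, 1, 2]], [8, -11, -3]))  # x = 2, y = 3, z = -1
```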
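
Rank shows up both in the solution types for linear systems and in the Rank-Nullity Theorem, and it can be computed by counting pivots. The sketch below reduces a matrix to row echelon form with exact `Fraction` arithmetic and returns the number of pivot positions. `classify` then compares $\text{rank}(A)$ with $\text{rank}([A|\vec{b}])$. Both names are hypothetical helpers. The nullity printed here comes from the theorem ($n - \text{rank}$) rather than from an independent null-space computation.

```python
from fractions import Fraction


def rank(M):
    """Rank = number of pivot positions found while reducing M to row echelon form."""
    R = [[Fraction(x) for x in row] for row in M]
    rows = len(R)
    cols = len(R[0]) if rows else 0
    r = 0  # index of the row that will receive the next pivot
    for c in range(cols):
        pivot = next((i for i in range(r, rows) if R[i][c] != 0), None)
        if pivot is None:
            continue  # no pivot in this column (a free-variable column)
        R[r], R[pivot] = R[pivot], R[r]
        for i in range(r + 1, rows):
            factor = R[i][c] / R[r][c]
            R[i] = [x - factor * y for x, y in zip(R[i], R[r])]
        r += 1
        if r == rows:
            break
    return r


def classify(A, b):
    """Classify A x = b by comparing rank(A) with rank([A | b])."""
    n = len(A[0])  # number of variables
    rank_A = rank(A)
    rank_Ab = rank([row + [bi] for row, bi in zip(A, b)])
    if rank_A < rank_Ab:
        return "no solution"
    return "unique solution" if rank_A == n else "infinitely many solutions"


A = [[1, 2, 3], [2, 4, 6], [1, 0, 1]]
r = rank(A)
print(r, len(A[0]) - r)        # 2 1: rank + nullity = n = 3
print(classify(A, [1, 2, 0]))  # infinitely many solutions
print(classify(A, [1, 3, 0]))  # no solution
```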