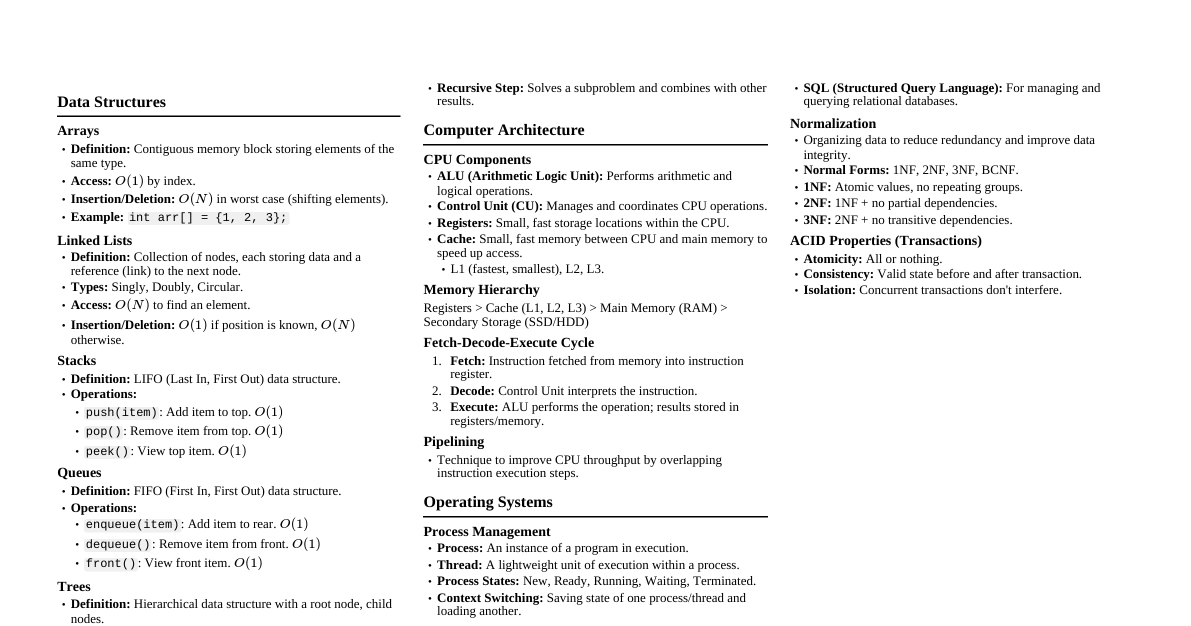

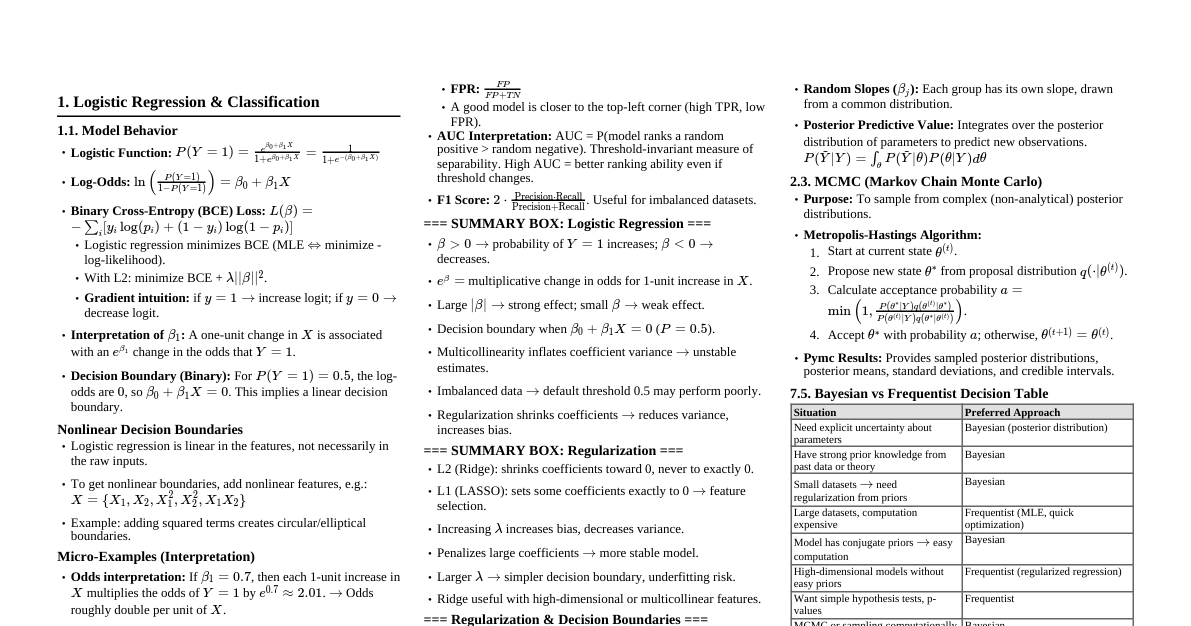

### 1. Introduction to Data Science - **Definition:** An interdisciplinary field that uses scientific methods, processes, algorithms, and systems to extract knowledge and insights from structured and unstructured data. - **Key Pillars:** - **Mathematics & Statistics:** Probability, linear algebra, calculus, statistical inference. - **Computer Science:** Programming, algorithms, data structures, databases, machine learning. - **Domain Expertise:** Understanding the context and problem being solved. - **Data Science Workflow (CRISP-DM):** 1. **Business Understanding:** Define objectives, assess situation. 2. **Data Understanding:** Collect, describe, explore, verify data quality. 3. **Data Preparation:** Select, clean, construct, integrate, format data. 4. **Modeling:** Select modeling technique, build model, assess model. 5. **Evaluation:** Evaluate results, review process, determine next steps. 6. **Deployment:** Plan deployment, plan monitoring & maintenance, produce final report. ### 2. Data Types and Structures #### 2.1 Data Types - **Numerical:** - **Discrete:** Countable, finite values (e.g., number of students). - **Continuous:** Measurable, infinite values within a range (e.g., temperature, height). - **Categorical:** - **Nominal:** No inherent order (e.g., colors, gender). - **Ordinal:** Has a meaningful order (e.g., satisfaction ratings: low, medium, high). - **Temporal:** Time-series data (e.g., stock prices over time). - **Textual:** Unstructured text data (e.g., reviews, articles). #### 2.2 Data Structures (Python Pandas) - **Series:** 1-dimensional labeled array (like a column in a spreadsheet). ```python import pandas as pd s = pd.Series([1, 3, 5, 7, 9]) ``` - **DataFrame:** 2-dimensional labeled data structure with columns of potentially different types (like a spreadsheet or SQL table). ```python data = {'col1': [1, 2], 'col2': [3, 4]} df = pd.DataFrame(data) ``` ### 3. Data Collection and Preparation #### 3.1 Data Sources - **Databases:** SQL (PostgreSQL, MySQL), NoSQL (MongoDB, Cassandra). - **APIs:** Web services (e.g., Twitter API, Google Maps API). - **Web Scraping:** Extracting data from websites (e.g., Beautiful Soup, Scrapy). - **Files:** CSV, Excel, JSON, XML, Parquet. #### 3.2 Data Cleaning - **Handling Missing Values:** - **Imputation:** Filling missing values (mean, median, mode, regression imputation). - **Deletion:** Removing rows/columns with missing values (use with caution). - **Handling Outliers:** - **Detection:** Z-score, IQR method, visual inspection (box plots). - **Treatment:** Capping, transformation, removal (if justified). - **Handling Duplicates:** Identifying and removing redundant entries. - **Data Type Conversion:** Ensuring data is in the correct format (e.g., string to numeric). #### 3.3 Feature Engineering - **Definition:** The process of creating new features from existing ones to improve model performance. - **Techniques:** - **One-Hot Encoding:** Converting categorical variables into numerical format (binary columns). - **Label Encoding:** Assigning a unique integer to each category. - **Scaling/Normalization:** - **Min-Max Scaling:** Rescales data to a fixed range (e.g., 0 to 1). $$X' = \frac{X - X_{min}}{X_{max} - X_{min}}$$ - **Standardization (Z-score):** Rescales data to have a mean of 0 and standard deviation of 1. $$X' = \frac{X - \mu}{\sigma}$$ - **Binning/Discretization:** Grouping continuous values into bins. - **Polynomial Features:** Creating higher-order terms (e.g., $x^2, x^3$). - **Interaction Features:** Combining two or more features (e.g., $x_1 * x_2$). ### 4. Exploratory Data Analysis (EDA) - **Goal:** Understand the data, identify patterns, detect anomalies, test hypotheses. - **Key Techniques:** - **Descriptive Statistics:** Mean, median, mode, standard deviation, variance, quartiles. - **Visualization:** - **Histograms:** Distribution of a single numerical variable. - **Box Plots:** Distribution, outliers, skewness for numerical variables. - **Scatter Plots:** Relationship between two numerical variables. - **Bar Charts:** Frequencies of categorical variables. - **Heatmaps:** Correlation matrices. - **Pair Plots:** Scatter plots for all pairs of variables. - **Correlation Analysis:** Measuring the strength and direction of a linear relationship between two variables. - **Pearson Correlation Coefficient ($r$):** For linear relationships between continuous variables. Range: -1 to 1. - **Spearman's Rank Correlation:** For monotonic relationships, handles non-normal data and ordinal variables. ### 5. Probability and Statistics #### 5.1 Basic Probability - **Definitions:** - **Experiment:** A procedure with well-defined outcomes. - **Sample Space ($S$):** Set of all possible outcomes. - **Event ($E$):** A subset of the sample space. - **Probability of an Event ($P(E)$):** Number of favorable outcomes / Total number of outcomes. - **Key Rules:** - **Addition Rule:** $P(A \cup B) = P(A) + P(B) - P(A \cap B)$ - **Multiplication Rule (Independent Events):** $P(A \cap B) = P(A) \times P(B)$ - **Conditional Probability:** $P(A|B) = \frac{P(A \cap B)}{P(B)}$ - **Bayes' Theorem:** $P(A|B) = \frac{P(B|A) P(A)}{P(B)}$ #### 5.2 Key Statistical Concepts - **Measures of Central Tendency:** - **Mean:** Average. - **Median:** Middle value when sorted. - **Mode:** Most frequent value. - **Measures of Dispersion:** - **Range:** Max - Min. - **Variance ($\sigma^2$):** Average of the squared differences from the mean. - **Standard Deviation ($\sigma$):** Square root of variance. - **Interquartile Range (IQR):** $Q_3 - Q_1$. - **Distributions:** - **Normal (Gaussian) Distribution:** Bell-shaped, symmetric. Defined by mean ($\mu$) and standard deviation ($\sigma$). - **Binomial Distribution:** Probability of $k$ successes in $n$ independent Bernoulli trials. - **Poisson Distribution:** Probability of a given number of events occurring in a fixed interval of time/space. - **Hypothesis Testing:** - **Null Hypothesis ($H_0$):** Statement of no effect or no difference. - **Alternative Hypothesis ($H_1$):** Statement there is an effect or difference. - **P-value:** Probability of observing data as extreme as, or more extreme than, the sample data under $H_0$. - **Significance Level ($\alpha$):** Threshold for rejecting $H_0$ (commonly 0.05). If $P ### 6. Machine Learning Fundamentals #### 6.1 Types of Machine Learning - **Supervised Learning:** Learns from labeled data (input-output pairs) to make predictions. - **Regression:** Predicts a continuous output (e.g., house price). - **Classification:** Predicts a categorical output (e.g., spam or not spam). - **Unsupervised Learning:** Finds patterns in unlabeled data. - **Clustering:** Groups similar data points together (e.g., customer segmentation). - **Dimensionality Reduction:** Reduces the number of features while retaining important information (e.g., PCA). - **Reinforcement Learning:** Learns through trial and error by interacting with an environment. #### 6.2 Model Evaluation Metrics - **Regression Metrics:** - **Mean Absolute Error (MAE):** Average absolute difference between predicted and actual values. - **Mean Squared Error (MSE):** Average of the squared differences. Penalizes larger errors more. - **Root Mean Squared Error (RMSE):** Square root of MSE. Interpretable in the same units as the target variable. - **R-squared ($R^2$):** Proportion of variance in the dependent variable predictable from the independent variable(s). Range: 0 to 1. - **Classification Metrics:** - **Accuracy:** Proportion of correctly classified instances. - **Precision:** $TP / (TP + FP)$ - proportion of positive identifications that were actually correct. - **Recall (Sensitivity):** $TP / (TP + FN)$ - proportion of actual positives that were correctly identified. - **F1-Score:** Harmonic mean of precision and recall. - **Confusion Matrix:** Table summarizing classification performance (True Positives, True Negatives, False Positives, False Negatives). - **ROC Curve & AUC:** Receiver Operating Characteristic curve and Area Under the Curve. Evaluates classifier performance across all possible thresholds. #### 6.3 Overfitting and Underfitting - **Overfitting:** Model performs well on training data but poorly on unseen data (too complex, captured noise). - **Underfitting:** Model performs poorly on both training and unseen data (too simple, failed to capture underlying patterns). - **Mitigation:** - **Cross-validation:** K-fold, Leave-One-Out. - **Regularization:** L1 (Lasso) and L2 (Ridge) regularization. - **Feature Selection/Engineering.** - **More data (for overfitting).** - **Simpler model (for overfitting).** - **More complex model (for underfitting).** ### 7. Supervised Learning Algorithms #### 7.1 Linear Regression - **Goal:** Model the linear relationship between a dependent variable ($y$) and one or more independent variables ($X$). - **Equation:** $y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + ... + \beta_n x_n + \epsilon$ - **Assumptions:** Linearity, independence of errors, homoscedasticity, normality of residuals. #### 7.2 Logistic Regression - **Goal:** Binary classification. Predicts the probability of an event occurring. - **Output:** Probability between 0 and 1 using the sigmoid function: $$P(y=1|X) = \frac{1}{1 + e^{-(\beta_0 + \beta_1 x_1 + ...)}}$$ - **Decision Boundary:** A threshold (e.g., 0.5) to classify outcomes. #### 7.3 Decision Trees - **How it works:** Splits data into branches based on feature values, forming a tree-like structure. - **Splitting Criteria:** Gini impurity, entropy (for classification); MSE (for regression). - **Advantages:** Easy to interpret, handles non-linear relationships. - **Disadvantages:** Prone to overfitting, sensitive to small changes in data. #### 7.4 Support Vector Machines (SVM) - **Goal:** Find the optimal hyperplane that best separates data points into different classes with the largest margin. - **Kernel Trick:** Allows SVMs to handle non-linearly separable data by mapping it into a higher-dimensional space. - Radial Basis Function (RBF), Polynomial, Sigmoid. #### 7.5 Ensemble Methods - **Bagging (e.g., Random Forest):** - Builds multiple decision trees on different bootstrap samples of the training data. - Aggregates predictions (majority vote for classification, average for regression) to reduce variance and overfitting. - **Boosting (e.g., Gradient Boosting, XGBoost, LightGBM):** - Sequentially builds models, where each new model corrects the errors of the previous ones. - Focuses on misclassified instances. ### 8. Unsupervised Learning Algorithms #### 8.1 K-Means Clustering - **Goal:** Partition data into $K$ clusters, where each data point belongs to the cluster with the nearest mean (centroid). - **Algorithm:** 1. Initialize $K$ centroids randomly. 2. Assign each data point to the nearest centroid. 3. Recalculate centroids as the mean of all points in that cluster. 4. Repeat steps 2-3 until convergence (centroids no longer move significantly). - **Elbow Method:** Used to find optimal $K$ by plotting Inertia (sum of squared distances of samples to their closest cluster center) vs. $K$. #### 8.2 Hierarchical Clustering - **Agglomerative (Bottom-up):** Starts with each data point as a single cluster and successively merges clusters until stopping criterion is met. - **Divisive (Top-down):** Starts with all data points in one cluster and recursively splits them. - **Dendrogram:** Tree-like diagram showing the hierarchical relationships between clusters. - **Linkage Criteria:** How the distance between clusters is measured (e.g., single, complete, average, ward). #### 8.3 Principal Component Analysis (PCA) - **Goal:** Dimensionality reduction technique that transforms data into a new set of orthogonal (uncorrelated) variables called Principal Components (PCs). - **How it works:** Identifies directions (PCs) in the data that capture the most variance. - **Applications:** Data compression, noise reduction, visualization. ### 9. Deep Learning Basics #### 9.1 Neural Networks - **Structure:** Input layer, hidden layers, output layer. - **Neurons:** Basic units that receive inputs, apply weights, sum them, and pass through an activation function. - **Activation Functions:** Introduce non-linearity. - **Sigmoid:** $\sigma(z) = \frac{1}{1 + e^{-z}}$ (outputs 0 to 1, good for output layer in binary classification). - **ReLU (Rectified Linear Unit):** $f(z) = \max(0, z)$ (common in hidden layers). - **Softmax:** Used in output layer for multi-class classification, converts raw scores to probabilities. - **Backpropagation:** Algorithm used to train neural networks by adjusting weights based on the error of the output. #### 9.2 Types of Neural Networks - **Feedforward Neural Networks (FNNs):** Simplest type, data flows in one direction. - **Convolutional Neural Networks (CNNs):** - **Applications:** Image recognition, computer vision. - **Key Components:** - **Convolutional Layers:** Apply filters to detect features (edges, textures). - **Pooling Layers:** Reduce dimensionality, make features robust to small shifts. - **Fully Connected Layers:** Standard neural network layers for classification. - **Recurrent Neural Networks (RNNs):** - **Applications:** Sequential data (text, time series). - **Key Feature:** Have "memory" (feedback loops) allowing information to persist across time steps. - **Variants:** LSTM (Long Short-Term Memory), GRU (Gated Recurrent Unit) address vanishing/exploding gradient problems. ### 10. Big Data and Deployment #### 10.1 Big Data Concepts - **The 3 Vs (or more):** - **Volume:** Sheer amount of data. - **Velocity:** Speed at which data is generated and processed. - **Variety:** Different forms of data (structured, unstructured, semi-structured). - **Veracity:** Quality and trustworthiness of data. - **Value:** Potential for insights. - **Technologies:** - **Hadoop:** Distributed storage (HDFS) and processing (MapReduce) framework. - **Spark:** Fast and general-purpose cluster computing system (in-memory processing). - **NoSQL Databases:** MongoDB, Cassandra, Redis (for flexible schemas and scalability). #### 10.2 Model Deployment - **API (Application Programming Interface):** Exposing your model as a service that other applications can call. - **Frameworks:** Flask, FastAPI, Django. - **Containerization (Docker):** Packaging an application and its dependencies into a single unit for consistent deployment across environments. - **Orchestration (Kubernetes):** Managing and automating the deployment, scaling, and operation of containerized applications. - **Cloud Platforms:** AWS (SageMaker, EC2), Google Cloud (AI Platform, Compute Engine), Azure (Machine Learning). - **Monitoring:** Tracking model performance, data drift, and concept drift in production.