Matrices Cheatsheet

Cheatsheet Content

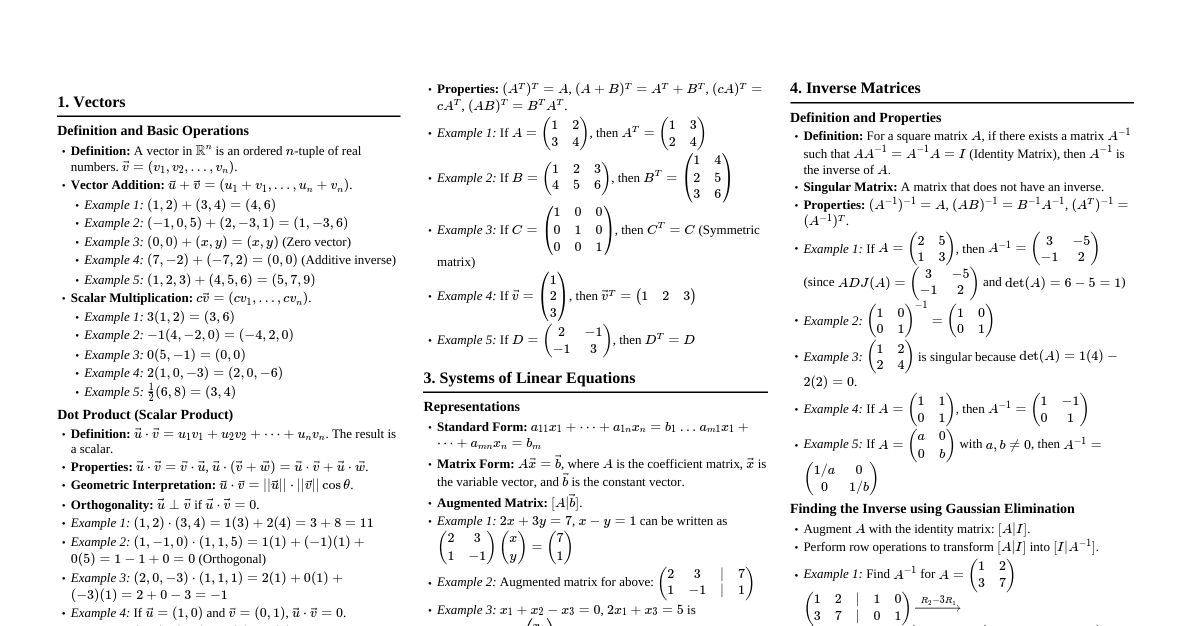

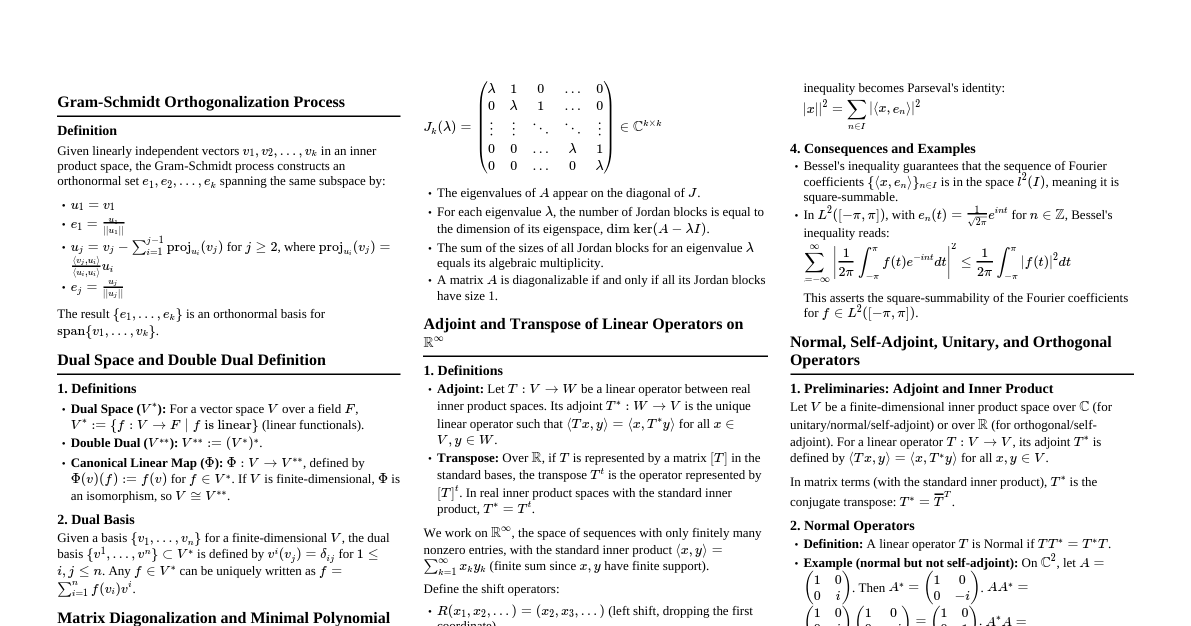

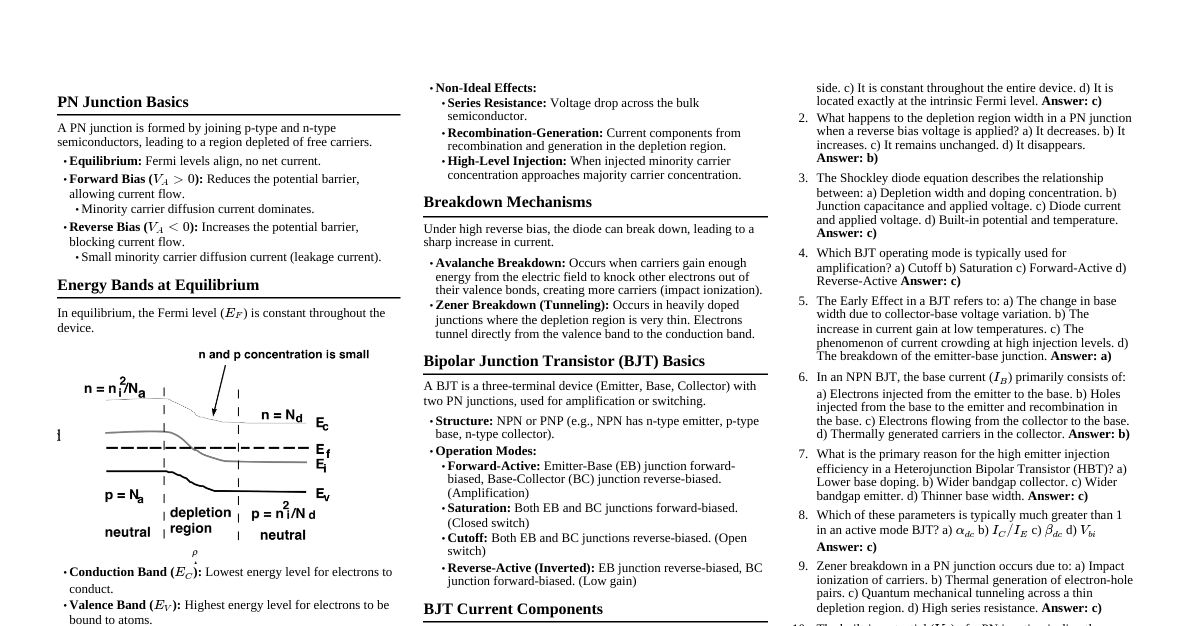

1. Definition and Basic Properties

A matrix is a rectangular array of numbers, symbols, or expressions arranged in rows and columns.

- Notation: An $m \times n$ matrix $A$ has $m$ rows and $n$ columns. $A = [a_{ij}]$, where $a_{ij}$ is the element in the $i$-th row and $j$-th column.
- Square Matrix: If $m = n$.
- Zero Matrix ($0$): All elements are zero.
- Identity Matrix ($I$): A square matrix with ones on the main diagonal and zeros elsewhere.

$$I = \begin{pmatrix} 1 & 0 & \dots & 0 \\ 0 & 1 & \dots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \dots & 1 \end{pmatrix}$$

- Diagonal Matrix: A square matrix where all non-diagonal elements are zero.
- Scalar Matrix: A diagonal matrix where all diagonal elements are equal.
- Upper Triangular Matrix: All elements below the main diagonal are zero.
- Lower Triangular Matrix: All elements above the main diagonal are zero.
- Symmetric Matrix: $A = A^T$ (i.e., $a_{ij} = a_{ji}$).
- Skew-Symmetric Matrix: $A = -A^T$ (i.e., $a_{ij} = -a_{ji}$). Diagonal elements must be zero.

2. Matrix Operations

2.1. Addition and Subtraction

- Matrices must have the same dimensions.
- $A + B = [a_{ij} + b_{ij}]$
- $A - B = [a_{ij} - b_{ij}]$

2.2. Scalar Multiplication

- $kA = [ka_{ij}]$ for a scalar $k$.

2.3. Matrix Multiplication

- For $AB$, the number of columns in $A$ must equal the number of rows in $B$.
- If $A$ is $m \times n$ and $B$ is $n \times p$, then $C = AB$ is $m \times p$.
- $c_{ij} = \sum_{k=1}^n a_{ik}b_{kj}$
- Properties:
  - Associative: $(AB)C = A(BC)$
  - Distributive: $A(B+C) = AB + AC$
  - Not generally commutative: $AB \neq BA$
  - $AI = IA = A$
  - $A0 = 0A = 0$

2.4. Transpose of a Matrix ($A^T$)

- Rows become columns and columns become rows.
- If $A$ is $m \times n$, $A^T$ is $n \times m$.
- $(A^T)^T = A$
- $(A+B)^T = A^T + B^T$
- $(kA)^T = kA^T$
- $(AB)^T = B^T A^T$

3. Determinant of a Matrix ($\det(A)$ or $|A|$)

A scalar value that can be computed from the elements of a square matrix.
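Before the determinant formulas, the multiplication and transpose rules from sections 2.3 and 2.4 can be spot-checked numerically. The following is a minimal sketch, assuming matrices are stored as plain Python lists of rows; the helper names `matmul` and `transpose` are illustrative, not taken from any library.

```python
# Assumption: a matrix is a list of rows, e.g. [[1, 2], [3, 4]].

def matmul(A, B):
    """Product C = AB, with c_ij = sum over k of a_ik * b_kj."""
    n = len(B)  # number of rows of B
    if any(len(row) != n for row in A):
        raise ValueError("columns of A must equal rows of B")
    p = len(B[0])  # number of columns of B
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(p)]
            for i in range(len(A))]

def transpose(A):
    """Rows become columns and columns become rows."""
    return [list(col) for col in zip(*A)]

A = [[1, 2], [3, 4]]
B = [[0, 1], [1, 0]]
I2 = [[1, 0], [0, 1]]

AB = matmul(A, B)  # [[2, 1], [4, 3]]
BA = matmul(B, A)  # [[3, 4], [1, 2]]

print(AB != BA)                                             # True: not commutative here
print(transpose(AB) == matmul(transpose(B), transpose(A)))  # True: (AB)^T = B^T A^T
print(matmul(A, I2) == A and matmul(I2, A) == A)            # True: AI = IA = A
```

A single example only illustrates the identities; it does not prove them.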

- $2 \times 2$ Matrix:

$$A = \begin{pmatrix} a & b \\ c & d \end{pmatrix} \Rightarrow |A| = ad - bc$$

- $3 \times 3$ Matrix (cofactor expansion along the first row):

$$A = \begin{pmatrix} a & b & c \\ d & e & f \\ g & h & i \end{pmatrix}$$

$$|A| = a(ei - fh) - b(di - fg) + c(dh - eg)$$

- Multiplying out the $3 \times 3$ formula gives Sarrus' Rule: $|A| = aei + bfg + cdh - ceg - bdi - afh$.
- Cofactor Expansion: For an $n \times n$ matrix, $|A| = \sum_{j=1}^n a_{ij}C_{ij}$ (along any fixed row $i$) or $|A| = \sum_{i=1}^n a_{ij}C_{ij}$ (along any fixed column $j$).
- $C_{ij} = (-1)^{i+j}M_{ij}$, where $M_{ij}$ is the determinant of the submatrix obtained by deleting row $i$ and column $j$.
- Properties:
  - $|A^T| = |A|$
  - $|AB| = |A||B|$
  - $|kA| = k^n|A|$ for an $n \times n$ matrix $A$.
  - If a row/column is all zeros, $|A|=0$.
  - If two rows/columns are identical, $|A|=0$.
  - If one row/column is a scalar multiple of another, $|A|=0$.
  - Swapping two rows/columns changes the sign of the determinant.
  - Adding a multiple of one row/column to another does not change the determinant.
  - A matrix $A$ is invertible if and only if $|A| \neq 0$.

4. Inverse of a Matrix ($A^{-1}$)

For a square matrix $A$, if $A^{-1}$ exists, then $AA^{-1} = A^{-1}A = I$.

- Formula for $2 \times 2$ Matrix (provided $|A| \neq 0$):

$$A = \begin{pmatrix} a & b \\ c & d \end{pmatrix} \Rightarrow A^{-1} = \frac{1}{|A|} \begin{pmatrix} d & -b \\ -c & a \end{pmatrix}$$

- General Formula: $A^{-1} = \frac{1}{|A|} \text{adj}(A)$, where $\text{adj}(A)$ is the adjugate (or classical adjoint) matrix.
- $\text{adj}(A) = (C_{ij})^T$, where $C_{ij}$ are the cofactors.
- Properties (for invertible $A$, $B$ of the same size and a scalar $k \neq 0$):
  - $(A^{-1})^{-1} = A$
  - $(AB)^{-1} = B^{-1}A^{-1}$
  - $(A^T)^{-1} = (A^{-1})^T$
  - $(kA)^{-1} = \frac{1}{k}A^{-1}$

5. Rank of a Matrix

- The maximum number of linearly independent row vectors or column vectors (the two counts are always equal).
- Equivalently, the dimension of the column space (image) or row space of the matrix.
- Can be found by reducing the matrix to row echelon form and counting the number of non-zero rows.
- $\text{rank}(A) \le \min(m, n)$ for an $m \times n$ matrix.
- A square matrix $A$ of size $n$ is invertible if and only if $\text{rank}(A) = n$.

6. Eigenvalues and Eigenvectors

For a square matrix $A$, an eigenvector $\vec{v}$ is a non-zero vector that, when multiplied by $A$, only changes by a scalar factor $\lambda$ (the eigenvalue).

- Equation: $A\vec{v} = \lambda\vec{v}$
- Characteristic Equation: To find eigenvalues $\lambda$, solve $\det(A - \lambda I) = 0$.
- For each eigenvalue $\lambda_i$, solve $(A - \lambda_i I)\vec{v} = \vec{0}$ to find the corresponding eigenvectors.
- Properties (eigenvalues counted with algebraic multiplicity, over $\mathbb{C}$):
  - Sum of eigenvalues = Trace of $A$ ($\text{Tr}(A) = \sum a_{ii}$).
  - Product of eigenvalues = Determinant of $A$ ($|A|$).
  - If $\vec{v}$ is an eigenvector of $A$ with eigenvalue $\lambda$, then $\vec{v}$ is an eigenvector of $A^k$ with eigenvalue $\lambda^k$ for any positive integer $k$.

7. Systems of Linear Equations

A system of $m$ linear equations in $n$ variables can be written as $A\vec{x} = \vec{b}$, where $A$ is the $m \times n$ coefficient matrix, $\vec{x}$ is the variable vector, and $\vec{b}$ is the constant vector. The determinant criteria below apply only to square systems ($m = n$).

- Homogeneous System: $A\vec{x} = \vec{0}$.
  - Always has the trivial solution $\vec{x} = \vec{0}$.
  - For square $A$, non-trivial solutions exist if and only if $|A|=0$.
- Non-homogeneous System: $A\vec{x} = \vec{b}$ with $\vec{b} \neq \vec{0}$.
  - Unique solution: If $A$ is square and $|A| \neq 0$, then $\vec{x} = A^{-1}\vec{b}$.
  - No solution or infinitely many solutions: If $|A| = 0$. Use Gaussian elimination to determine which; the system has a solution exactly when $\text{rank}(A) = \text{rank}([A|\vec{b}])$.
- Augmented Matrix: $[A|\vec{b}]$. Used in Gaussian elimination.
- Gaussian Elimination: Use elementary row operations to transform $[A|\vec{b}]$ into row echelon form or reduced row echelon form to solve for $\vec{x}$.

8. Special Matrices

- Orthogonal Matrix: A real square matrix $Q$ such that $Q^T Q = Q Q^T = I$.
  - Its columns (and rows) form an orthonormal basis.
  - $Q^{-1} = Q^T$
  - $|Q| = \pm 1$
- Unitary Matrix: A complex square matrix $U$ such that $U^*U = UU^* = I$, where $U^*$ is the conjugate transpose.
- Hermitian Matrix: A complex square matrix $A$ such that $A = A^*$ (self-adjoint).
- Normal Matrix: A complex square matrix $A$ such that $A A^* = A^* A$.
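To tie sections 3, 4, 6 and 7 together, here is a minimal pure-Python sketch that computes a determinant by cofactor expansion, builds the inverse from the adjugate, solves $A\vec{x} = \vec{b}$ as $\vec{x} = A^{-1}\vec{b}$, and checks the trace and determinant identities on a small matrix whose eigenpairs are known. The helper names (`minor`, `det`, `inverse`, `matvec`) and the example matrices are assumptions chosen for illustration. Cofactor expansion costs on the order of $n!$ operations, so this is suitable only for small matrices; Gaussian elimination is the practical choice otherwise.

```python
from fractions import Fraction  # exact arithmetic, no rounding error

def minor(A, i, j):
    """Submatrix of A with row i and column j deleted."""
    return [row[:j] + row[j + 1:] for r, row in enumerate(A) if r != i]

def det(A):
    """Determinant by cofactor expansion along the first row."""
    if not A:  # the empty (0 x 0) matrix has determinant 1
        return 1
    return sum((-1) ** j * A[0][j] * det(minor(A, 0, j)) for j in range(len(A)))

def inverse(A):
    """A^{-1} = adj(A) / |A|, where adj(A) is the transposed cofactor matrix."""
    n, d = len(A), det(A)
    if d == 0:
        raise ValueError("matrix is singular: |A| = 0")
    cof = [[(-1) ** (i + j) * det(minor(A, i, j)) for j in range(n)]
           for i in range(n)]
    # Entry (i, j) of the inverse is cofactor C_ji divided by |A|.
    return [[Fraction(cof[j][i], d) for j in range(n)] for i in range(n)]

def matvec(A, v):
    """Matrix-vector product A v."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

# Solve a square system with |A| != 0.
A = [[2, 1, 1],
     [1, 3, 2],
     [1, 0, 0]]
b = [4, 5, 6]
x = matvec(inverse(A), b)
print(det(A))             # -1
print([int(t) for t in x])  # [6, 15, -23]

# Eigenpairs of S: (3, [1, 1]) and (1, [1, -1]).
S = [[2, 1],
     [1, 2]]
print(matvec(S, [1, 1]))   # [3, 3]  = 3 * [1, 1]
print(matvec(S, [1, -1]))  # [1, -1] = 1 * [1, -1]
print(S[0][0] + S[1][1] == 3 + 1, det(S) == 3 * 1)  # True True
```

Using `Fraction` keeps the inverse exact, so the check `matvec(A, x) == b` holds without a floating-point tolerance.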