Cloud Computing Fundamentals

Cheatsheet Content

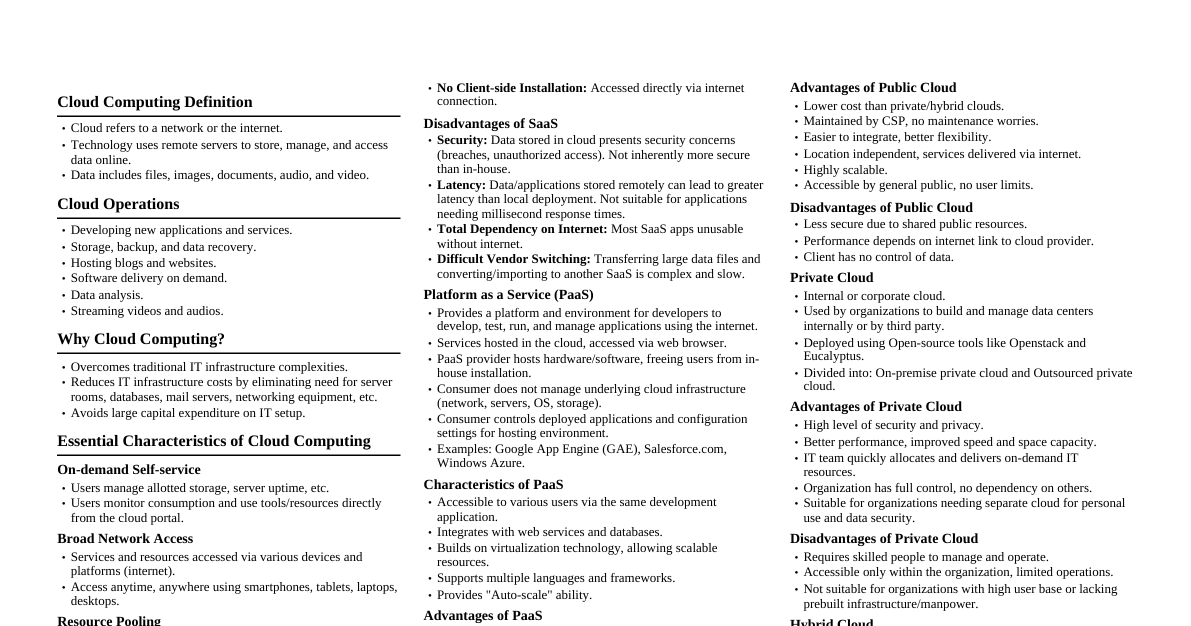

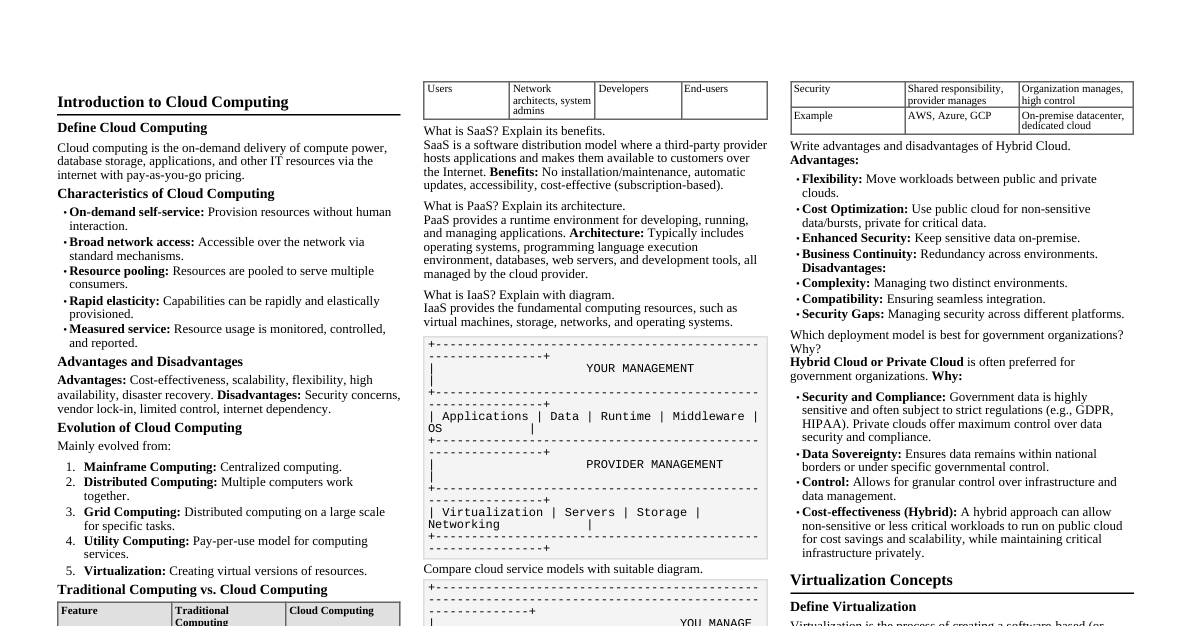

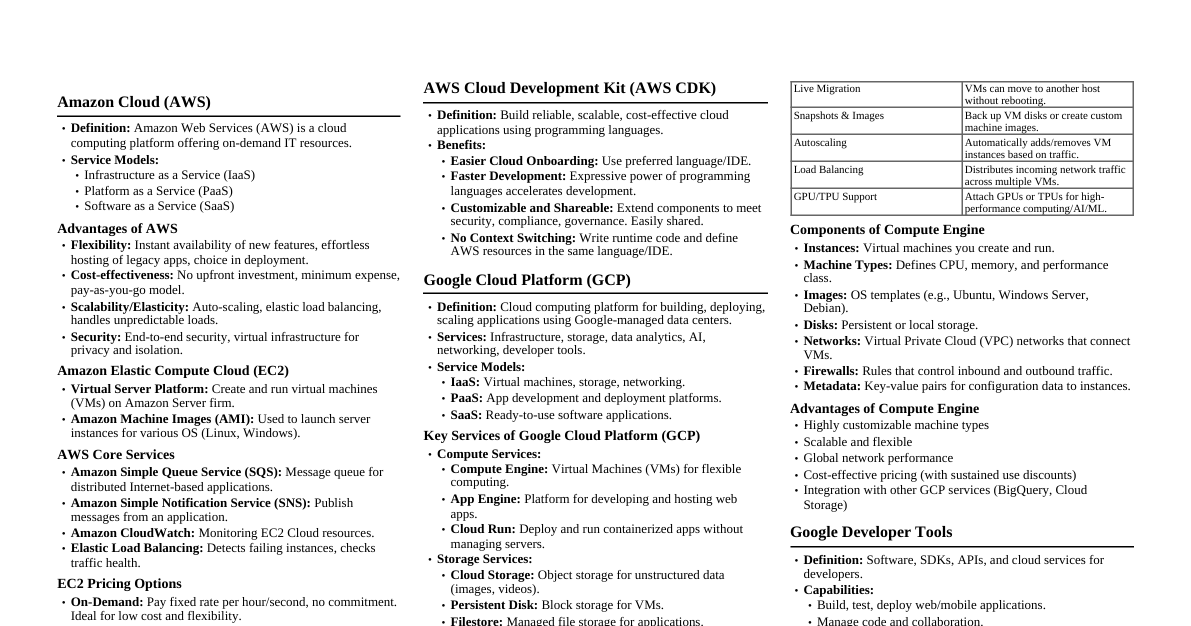

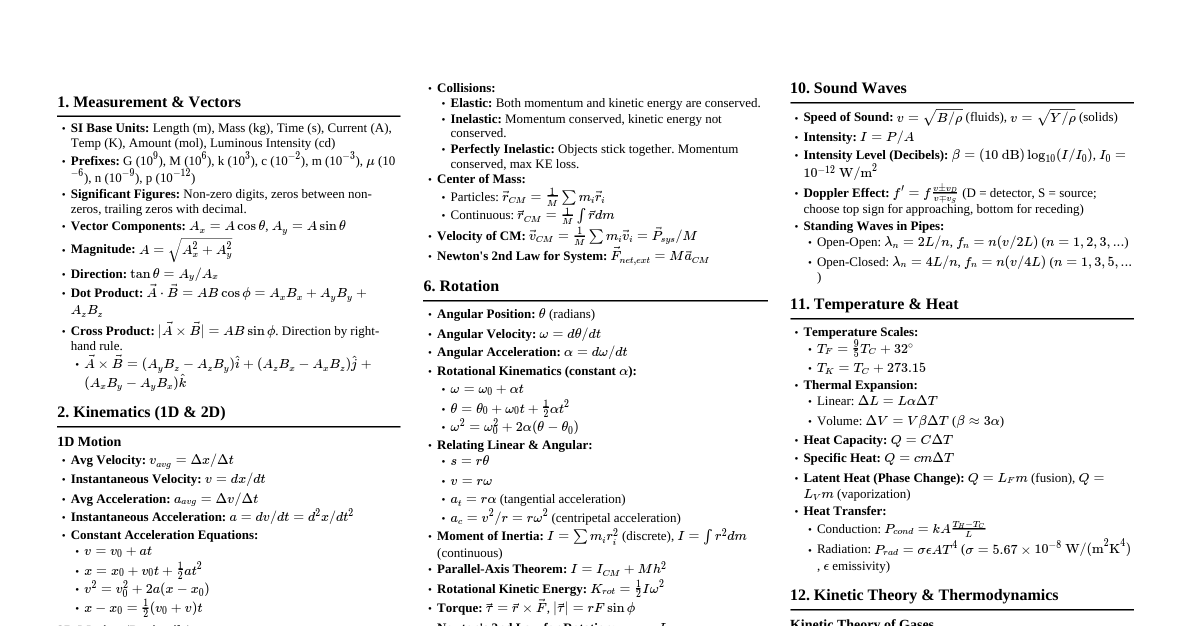

### Parallel & Distributed Computing

Parallel and distributed computing are fundamental to cloud architecture, enabling simultaneous task execution.

#### Parallel Computing

Uses multiple processing elements simultaneously to solve a single problem.

- **Hardware Architecture:**
  - **Multicore Processors:** A single physical processor with multiple execution cores (e.g., Intel Core i7).
  - **Vector/SIMD Processors:** Hardware that applies the same operation to multiple data points simultaneously (Single Instruction, Multiple Data); used in graphics and scientific computing.
  - **GPUs (Graphics Processing Units):** Specialized accelerators for data-parallel tasks.
- **Parallel Programming Models:**
  - **Shared Memory:** All processors access a global memory and communicate via shared variables.
  - **Message Passing:** Each processor has private memory; processors communicate by sending explicit messages (e.g., MPI).
- **Levels of Parallelism:**
  - **Data-Level Parallelism:** Data is partitioned and the same task runs on each chunk (e.g., an array of pixels).
  - **Task-Level Parallelism:** Different tasks/functions run simultaneously on the same or different data.
- **Example:** A weather simulation on a supercomputer, where thousands of cores compute temperature changes across grid points.

#### Distributed Computing

Autonomous computers connected by a network that work together as a single system.

- **Components:**
  - **Nodes:** Independent computers, each with its own local memory and OS.
  - **Interconnection Network:** The fabric (Ethernet, InfiniBand) used for data transfer.
  - **Middleware:** A software layer (e.g., CORBA, RMI) that hides machine heterogeneity and manages coordination.
- **Architectural Styles:**
  - **Client-Server:** Clients request services; servers provide them.
  - **Peer-to-Peer (P2P):** All nodes are equal, acting as both clients and servers (e.g., BitTorrent).
  - **Cluster Computing:** Tightly coupled commodity computers acting as a single supercomputer.
- **Example:** The World Wide Web (WWW) or a Hadoop cluster, where data is stored across thousands of machines and processed locally under network-wide coordination.

### Content Centric Network (CCN)

CCN (also known as Named Data Networking, NDN) shifts the network's focus from *where* data is located (an IP address) to *what* the content is.

- **Concept:** Packets address named content (e.g., `/videos/lecture1.mp4`) instead of IP addresses, and the network routes requests based on these names.
- **Mechanism:**
  - **Interest Packet:** The consumer broadcasts a packet carrying the name of the desired data.
  - **Data Packet:** Any node holding matching data responds; the Data packet follows the reverse path of the Interest packet.
- **Benefits:** Supports caching inside the network, reduces redundant traffic, and speeds up delivery of popular content.

### Software Defined Network (SDN)

SDN decouples the control plane (decisions about where traffic goes) from the data plane (the devices that forward the traffic).

- **Architecture Layers:**
  - **Data Plane (Infrastructure Layer):** Network devices (switches, routers) that forward packets according to installed rules. They are "dumb" devices.
  - **Control Plane (Controller Layer):** A logically centralized software controller (e.g., OpenDaylight) that computes routing rules and logic and has a global view of the network.
  - **Application Layer:** Business applications (firewalls, load balancers) that communicate their network requirements to the controller.
- **Interfaces:**
  - **Southbound API (e.g., OpenFlow):** Connects the controller to the switches (data plane) to push forwarding rules.
  - **Northbound API:** Connects the application layer to the controller, allowing applications to program network behavior.

### Cloud Scheduling Algorithms

Scheduling in the cloud maps user tasks (or VMs) to physical resources (servers/cores) to optimize metrics such as execution time, cost, or energy.

#### Taxonomy of Scheduling Methods

1. **Static vs. Dynamic Scheduling:**
   - **Static:** The schedule is computed before execution and requires prior knowledge of task durations and resource availability. Simple but inflexible.
   - **Dynamic:** Decisions are made at runtime based on the current system state. Essential for fluctuating cloud workloads.
2. **Preemptive vs. Non-Preemptive:**
   - **Non-Preemptive:** Once a task starts, it runs to completion.
   - **Preemptive:** High-priority tasks can interrupt running low-priority tasks.

#### Common Scheduling Algorithms

1. **First-Come-First-Serve (FCFS):** Tasks are executed in arrival order. Simple, but short tasks can be blocked behind a long one (the "convoy effect").
2. **Round Robin:** Allocates fixed time slices to tasks cyclically. Ensures fairness but may increase completion time for long jobs.
3. **Min-Min:** For each unscheduled task, compute its minimum completion time over all resources. Pick the task with the smallest such time and assign it to the resource that achieves it. Favors short tasks and can starve longer ones.
4. **Max-Min:** Same first step as Min-Min, but pick the task whose minimum completion time is the *largest* and assign it to the resource that achieves it. Clears big bottlenecks early, then fills gaps with smaller tasks.
5. **Start-Time Fair Queuing (SFQ):** Allocates CPU bandwidth proportionally among VMs, preventing starvation.

#### Cloud-Specific Implementations

- **Two-Level Scheduling:** A central scheduler (e.g., Mesos or YARN) allocates coarse-grained resources to frameworks (Hadoop, Spark), which then perform fine-grained scheduling.
- **Data-Aware Scheduling:** Runs computation on the node where the data resides (data locality) to minimize network traffic (critical in MapReduce/Hadoop).

### Service Level Agreement (SLA)

A formal, negotiated agreement (contract) between a cloud service provider (CSP) and a customer (cloud user). It quantifies expected service levels and guarantees specific Quality of Service (QoS) parameters.

#### Key Components

- **Service Availability:** Percentage of time the service is accessible (e.g., 99.9%).
- **Performance Metrics:** Guarantees for response time, throughput, or latency.
- **Penalties:** Financial credits or refunds if the CSP fails to meet the agreed levels.
- **Responsibility:** Defines the boundaries of liability for data loss or service interruption.
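
An availability percentage is easier to reason about as a downtime budget. The sketch below converts a target into the maximum minutes of outage it permits. It assumes a fixed 30-day measurement window; real SLAs define their own window. The helper `allowed_downtime_minutes` is hypothetical and does not belong to any provider's API.

```python
def allowed_downtime_minutes(availability_pct: float, period_days: float = 30.0) -> float:
    """Hypothetical helper: maximum downtime (minutes) an availability target permits.

    Assumes a fixed measurement window of `period_days` days (30 by default);
    actual SLAs specify their own measurement period and exclusions.
    """
    if not 0.0 <= availability_pct <= 100.0:
        raise ValueError("availability_pct must be between 0 and 100")
    period_minutes = period_days * 24 * 60              # total minutes in the window
    unavailable_fraction = 1.0 - availability_pct / 100.0
    return period_minutes * unavailable_fraction


if __name__ == "__main__":
    # Compare common "number of nines" targets over a 30-day month.
    for target in (99.0, 99.9, 99.99):
        minutes = allowed_downtime_minutes(target)
        print(f"{target}% availability -> {minutes:.1f} min of downtime per 30 days")
```

At 99.9% the budget is about 43 minutes per 30-day month. Each additional "nine" shrinks it by a factor of ten, which is why higher targets are much harder to guarantee.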
#### Example SLA for cloud storage (like Amazon S3)

- **Guarantee:** 99.99% monthly uptime.
- **Terms:** If uptime drops below 99.99% but stays at or above 99.0%, the customer gets a 10% service credit; below 99.0%, a 25% credit.
- **Exclusions:** The SLA typically excludes downtime caused by user software errors or force majeure events.

### Full vs. Para-Virtualization

| Feature | Full Virtualization | Para-Virtualization |
| :--- | :--- | :--- |
| **Guest OS Modification** | None. Guest OS runs unmodified, unaware of virtualization. | Required. Guest OS source code is modified to communicate directly with the hypervisor. |
| **Performance** | Lower, due to binary translation or hardware emulation overhead. | Higher, as the guest OS cooperates with the hypervisor via "hypercalls." |
| **Hardware Dependency** | Often relies on hardware-assisted virtualization (e.g., Intel VT-x, AMD-V). | Can run efficiently on legacy hardware without virtualization extensions. |
| **Portability** | High; can run any OS (Windows, Linux) easily. | Lower; only runs OSs customized (ported) for that hypervisor. |
| **Example Systems** | VMware ESXi, KVM (uses hardware assist). | Xen (in its original paravirtualized mode). |

### Cloud Computing Challenges

Major challenges hindering the adoption and operation of cloud computing:

1. **Security, Trust, and Privacy:**
   - **Data Confidentiality:** Storing sensitive data on third-party servers raises concerns about unauthorized access and breaches.
   - **Multi-tenancy Risks:** Malicious users on the same physical server may exploit vulnerabilities to access other users' data.
2. **Availability and Reliability:** Users depend entirely on the provider; outages can cripple businesses.
3. **Vendor Lock-In:** Lack of standardization makes migration difficult, locking users into proprietary APIs and formats.
4. **Scalability and Fault Tolerance:** Managing dynamic scalability while maintaining performance is complex. The system must detect failures and restart tasks automatically.
5. **Legal and Regulatory Issues:** Data residency laws (e.g., GDPR) may restrict where data can be stored, complicating global deployments.

### Petri Nets Applications

Petri nets are a mathematical modeling language for distributed and concurrent systems. In cloud computing they are used for:

1. **Modeling Concurrency:** Captures how independent processes execute simultaneously and interact with shared resources.
2. **Workflow Management:** Models complex scientific or business workflows, ensuring tasks execute in the correct order based on data dependencies.
3. **Deadlock and Conflict Detection:** Analyzing the Petri net graph can mathematically prove whether a cloud system might enter a "deadlock" state or have resource conflicts.
4. **State Analysis:** Tracks the global state of a distributed system and how it transitions in response to events.

### Borrowed Virtual Time (BVT)

A scheduling algorithm that supports low-latency (soft real-time) applications in shared cloud environments; it has been used in hypervisors such as Xen.

- **Virtual Time:** Each task tracks its own "virtual time," which increases while it runs on the CPU.
- **Time Warp (W):** Latency-sensitive tasks are assigned a "warp" value. When they wake up, they "borrow" virtual time by subtracting the warp from their actual virtual time.
- **Effective Virtual Time (EVT):** Scheduling decisions are based on EVT. For a normal task, EVT = Actual Virtual Time (AVT); for a warped task, EVT = AVT - W.
- **Dispatch Rule:** The scheduler always selects the task with the smallest EVT.
- **Example:** Thread A (batch, AVT 90) and Thread C (real-time, AVT 90, Warp 60). Thread C's EVT = 90 - 60 = 30, while Thread A's EVT = 90. Since 30 is less than 90, the scheduler dispatches Thread C first.

### Multi-BSP Model

A theoretical bridging model for designing algorithms for multicore architectures. It extends the classic BSP (Bulk Synchronous Parallel) model to account for the complex memory hierarchies (L1, L2, L3 caches) of modern cloud servers.
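
For reference, classic BSP charges each superstep for three things: local computation, communication and the closing barrier. Multi-BSP generalizes this model. The notation below is the usual one for BSP:

- $w$ is the maximum local work on any processor.
- $h$ is the maximum number of words any processor sends or receives.
- $g$ is the cost per word communicated.
- $L$ is the barrier synchronization latency.

$$
T_{\text{superstep}} = w + g \cdot h + L, \qquad T_{\text{total}} = \sum_{s=1}^{S} \left( w_s + g \cdot h_s + L \right)
$$

Here $S$ is the number of supersteps. Multi-BSP keeps this style of accounting but gives each level $i$ of the hierarchy its own $g_i$ and $L_i$. Communication and synchronization are therefore charged per level rather than once for a flat machine.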
#### Structure and Parameters

Multi-BSP views the computer as a tree of depth $d$:

- **Leaves:** Processors/cores.
- **Internal Nodes:** Levels of memory (caches, main memory).
- **Level $i$ Parameters:** Each level is defined by $p_i$ (number of sub-components), $g_i$ (communication bandwidth cost), $L_i$ (synchronization latency), and $m_i$ (memory size/capacity).

#### Use in Cloud Computing

1. **Algorithm Design:** Helps developers write "parameter-aware" algorithms that scale efficiently on multicore cloud servers by optimizing for cache and communication costs.
2. **Performance Predictability:** Provides a mathematical framework for predicting application performance on specific cloud instance types.
3. **Handling Memory Hierarchy:** Explicitly models the fact that L1 cache is faster to access than main memory, which is critical for high-performance cloud applications.

### Content Delivery Network (CDN)

CDNs are crucial in cloud computing for delivering massive amounts of data globally with high performance and reliability.

- **Latency Reduction:** Edge servers physically closer to end users reduce communication latency and start-up time.
- **Scalability & Flash Crowd Management:** Provide "capacity on demand" to absorb traffic spikes, preventing origin server crashes.
- **Reliability:** Replicate content across multiple servers, ensuring high availability even if one server fails.
- **Bandwidth Optimization:** Caching content at the edge reduces redundant traffic over the core network.

### Fair Queuing & Class-Based Queuing

#### Fair Queuing (FQ)

Ensures that no single "greedy" flow consumes more than its fair share of link capacity, addressing the unfairness of FCFS.

- **Concept:** Prevents aggressive flows from starving others.
- **Mechanism:** Classifies incoming packets into distinct flows and gives each flow its own queue. The scheduler services the queues in round-robin order.
- **Result:** High-data-rate flows cannot monopolize bandwidth. Variations such as Stochastic Fairness Queuing (SFQ) use hashing to map flows to a fixed number of queues. This SFQ is not the Start-Time Fair Queuing listed under scheduling algorithms.

#### Class-Based Queuing (CBQ)

A link-sharing mechanism that supports applications with specific bandwidth guarantees (VoIP, video streaming) while allowing other traffic to share the remaining bandwidth.

- **Concept:** Organizes traffic into a hierarchy of classes, each with an assigned priority and throughput allocation (e.g., Video: 60%, Web: 20%).
- **Mechanism:** Uses a Classifier (assigns packets to a class), an Estimator (measures bandwidth usage), and a Scheduler (selects the next packet based on priority/usage).
- **Link Sharing:** Unused allocated bandwidth can be "borrowed" by other classes for efficient link utilization.

### Delay Scheduling, Apache Capacity Scheduler & Two-Level Resource Allocation

#### Delay Scheduling

A policy in data-intensive frameworks (Hadoop) to improve data locality.

- When a task is scheduled and the node holding its input data is busy, the scheduler waits for a short period instead of immediately launching the task on a remote node.
- This wait gives the local node a chance to become free, avoiding expensive data transfers and improving throughput.

#### Apache Capacity Scheduler

A pluggable scheduler for Hadoop, designed for multi-tenant clusters.

- **Queues and Guarantees:** Supports multiple queues, each guaranteed a fraction of cluster capacity.
- **Elasticity:** Unused capacity of one queue can be temporarily allocated to other queues and is reclaimed when the original queue needs it.
- **Priorities:** Within a queue, higher-priority jobs get access to resources first.
- **No Preemption (Standard):** Historically, the scheduler waited for running tasks to finish before reclaiming capacity; newer versions may support preemption.

#### Two-Level Resource Allocation

A strategy for large clusters with diverse workloads (Mesos, YARN) that improves scalability by splitting scheduling responsibility.

1. **Level 1 (Resource Allocation):** A central coordinator (e.g., the Mesos master) manages global physical resources and offers "coarse-grained" bundles to specific framework schedulers.
2. **Level 2 (Resource Assignment):** Framework schedulers (Hadoop, Spark) receive offers and decide whether to accept the resources and which tasks to run on them ("fine-grained" scheduling).

- **Benefits:** Decouples global allocation from application-specific needs.

### Cloud Deployment Models

Four primary models, according to NIST and standard cloud theory:

1. **Public Cloud:** Infrastructure owned by a CSP, with services sold to the general public. Resources are shared (multi-tenancy).
2. **Private Cloud:** Infrastructure operated solely for one organization. It can be managed by the organization or a third party and hosted on or off premises. Offers greater control and security.
3. **Community Cloud:** Infrastructure shared by several organizations with common concerns (mission, security, compliance), supporting a specific community.
4. **Hybrid Cloud:** A composition of two or more clouds (private, community, or public) that remain unique entities but are bound together by technology enabling data/application portability (e.g., "cloud bursting").

### Load Balancing (Balls and Bins Model)

A theoretical framework for analyzing randomized load-balancing algorithms in distributed systems.

- **Analogy:** Tasks are "balls" ($m$) and processors/servers are "bins" ($n$). The goal is to distribute the balls so as to minimize the maximum load of any bin.
- **Randomized Strategy:** If each task picks a processor uniformly at random, the distribution is not perfectly even. For $m = n$, the maximum load is, with high probability, $\approx \frac{\ln n}{\ln \ln n}$.
- **The Power of Two Choices:** Pick two bins at random, compare their loads, and place the task in the less loaded one. This improves the balance exponentially, reducing the maximum load (for $m = n$) to $\approx \frac{\ln \ln n}{\ln 2} + O(1)$.

### Cloud Computing Characteristics

According to NIST, cloud computing has five essential characteristics:

1. **On-Demand Self-Service:** A consumer can unilaterally provision computing capabilities automatically, without human interaction with the provider.
2. **Broad Network Access:** Capabilities are available over the network (Internet) and accessed through standard mechanisms by heterogeneous client platforms.
3. **Resource Pooling:** The provider's computing resources are pooled to serve multiple consumers using a multi-tenant model, with resources dynamically assigned.
4. **Rapid Elasticity:** Capabilities can be elastically provisioned and released, scaling rapidly outward and inward; to the consumer they appear unlimited.
5. **Measured Service:** Cloud systems monitor, control, and optimize resource use via a metering capability, providing transparency.

### Distributed Computing Technologies

- **Remote Procedure Call (RPC):** A protocol that allows a program to execute a subroutine on a remote machine as if it were local.
- **Distributed Object Frameworks:** Extensions of object-oriented programming to distributed environments. Examples: CORBA, Java RMI.
- **Service-Oriented Computing (Web Services):** Allows disparate software applications to exchange data using standard protocols and architectural styles such as SOAP and REST. This is the basis of modern distributed cloud applications.
- **Message Passing Interface (MPI):** A standardized, portable message-passing interface for parallel computing architectures.

### Vehicular Cloud (VC)

A group of vehicles whose computing, sensing, communication, and physical resources can be coordinated and dynamically allocated to authorized users.

- **Underutilized Resources:** Modern vehicles have powerful on-board computers, storage, and sensors that are often underutilized; VCs tap into these.
- **Dynamic Formation:** VCs form dynamically from moving or parked vehicles, creating a volatile environment in which nodes frequently join and leave.
- **Applications:** Traffic management and safety (e.g., pooling resources for complex traffic simulations, disseminating evacuation routes).
- **Distinction from Conventional Clouds:** The key difference is resource volatility; management strategies must account for the unpredictable "residency time" of vehicles.
### Petri Nets for Dynamic System Behavior

Petri nets (PNs) model the dynamic behavior of distributed systems via:

1. **State Representation (Marking):**
   - **Places (p):** Represent conditions, input/output buffers, or resource availability.
   - **Tokens:** The distribution of tokens across places (the marking) represents the current system state.
   - **Dynamic Change:** Moving tokens changes the marking, simulating a state transition.
2. **Event Modeling (Transitions):**
   - **Transitions (t):** Model active events (computational tasks, packet transmission).
   - **Firing Rule:** A transition is "enabled" if its input places hold enough tokens. Firing removes tokens from the input places and adds tokens to the output places.
3. **Concurrency and Conflict:**
   - **Concurrency:** Two transitions are concurrent if they are causally independent (they do not compete for the same input tokens), modeling parallel activities.
   - **Conflict:** If two transitions share an input place that holds only one token, they are in conflict (only one can fire), modeling resource contention.

### Virtualization: Main Enabling Cloud Technology

Virtualization decouples software (OS, applications) from physical hardware, enabling the core cloud characteristics:

1. **Resource Consolidation & Efficiency:** Multiple VMs run on one physical server, drastically increasing hardware utilization (from 10-15% to 70-80%) and reducing energy use and costs.
2. **Isolation & Multi-tenancy:** Provides performance and security isolation; one user's faulty application won't affect others on the same physical machine.
3. **Elasticity & Agility:** A VM is a software file, so resources can be:
   - **Rapidly Provisioned:** A new server in seconds.
   - **Migrated:** Running applications moved between physical servers without downtime.
   - **Scaled:** CPU/RAM resized dynamically.
4. **Hardware Independence:** Legacy and different OS environments (Linux, Windows) run simultaneously on the same hardware, simplifying management.

### Cloud Computing Benefits & Limitations

#### Benefits

- **Cost Efficiency:** Eliminates upfront capital expenditure (CapEx) in favor of pay-as-you-go operating expenditure (OpEx); reduces IT, power, and cooling costs.
- **Scalability & Agility:** The illusion of infinite resources; infrastructure scales up and down quickly.
- **Economy of Scale:** Large data centers operate more efficiently due to specialization.
- **Accessibility:** Data and applications are accessible from anywhere via the Internet.

#### Limitations (Challenges)

- **Availability:** Users depend on the service provider; outages (e.g., the 2012 storm that disrupted AWS) can cripple businesses.
- **Security & Privacy:** Storing data on third-party servers raises concerns (confidentiality, unauthorized access, auditability, multi-tenancy risks).
- **Vendor Lock-in:** It is difficult to move applications and data between providers due to a lack of standardization.
- **Performance Unpredictability:** Shared resources mean "noisy neighbors" can cause unexpected performance fluctuations.
- **Data Transfer Bottlenecks:** Transferring massive data sets over the Internet is slow and expensive.

### Hosted vs. Bare-Metal Architecture

| Feature | Hosted Architecture (Type 2 Hypervisor) | Bare-Metal Architecture (Type 1 Hypervisor) |
| :--- | :--- | :--- |
| **Architecture** | Hypervisor runs *on top of* an existing host OS (Windows, Linux) as an application. | Hypervisor runs *directly on* the physical hardware, without an intermediate OS. |
| **Efficiency** | Lower performance, since I/O requests pass through the host OS. | Higher performance; direct hardware access. |
| **Dependency** | Relies on the host OS for device drivers and basic services. | Does not require a host OS; the hypervisor manages hardware directly. |
| **Use Case** | Desktop virtualization, development, and testing on personal computers. | Enterprise data centers, cloud infrastructure (AWS, Azure). |
| **Example** | VMware Workstation, Oracle VirtualBox, QEMU. | Xen, VMware ESXi, Microsoft Hyper-V. |

### Network-Centric Computing

A computing model in which applications and data are distributed over a network rather than residing on a single local computer.

- **Key Aspects:**
  - **Utility Computing:** Resources (storage, processing) are available over the network on a pay-per-use basis.
  - **Web Services & Connectivity:** Relies on Internet technologies and standard protocols to connect diverse devices to centralized services.
  - **Distributed Systems:** Builds on distributed-systems principles for component communication and coordination.
  - **Grid & Cloud Computing:** The foundation for modern paradigms such as Grid Computing (scientific collaboration) and Cloud Computing (dynamic provisioning).
- **Evolution:** From "computer utilities" (1960s) to massive data centers.

### Network-Centric Content

Refers to media and data (static, dynamic, live, stored) held in the cloud and accessed via the network, not confined to local storage.

- **Content vs. Connection:** The focus shifts from "connecting to a specific host" to "retrieving specific content." Content is addressed by name/identifier, not by physical location.
- **Accessibility:** Content is device-independent and location-independent.
- **Content Delivery:** Relies on CDNs to cache data closer to users, reducing latency.
- **Evolution:** Drives the "Future Internet" / Named Data Networking (NDN), where routing is based on *what* the data is.

### Cloud Service Requirements

For effective cloud services, systems must meet specific requirements:

1. **On-Demand Self-Service:** Users can provision computing capabilities automatically.
2. **Broad Network Access:** Services are accessible over the network via standard mechanisms by heterogeneous platforms.
3. **Resource Pooling:** The provider's computing resources are pooled to serve multiple consumers and assigned dynamically.
4. **Rapid Elasticity:** Capabilities scale rapidly inward and outward, automatically.
5. **Measured Service (Pay-per-Use):** Resource usage is monitored, controlled, and reported.
6. **Service Level Agreements (SLAs):** SLAs specifying the service level, availability, and penalties must be negotiated and enforced.

### Applications of Cloud Computing

Cloud computing is used across many domains:

1. **Scientific & Engineering Applications:**
   - **High Performance Computing (HPC):** Protein folding, financial modeling, earthquake simulation.
   - **Biology Research:** Azure BLAST for gene sequence comparisons.
   - **Geoscience:** Processing satellite images and environmental data.
2. **Business & Enterprise Applications:**
   - **CRM & ERP:** Salesforce.com and Microsoft Dynamics for managing customer relationships and resources.
   - **Data Analytics:** Data mining and analytics for optimizing operations.
3. **Consumer Applications:**
   - **Social Networking:** Facebook, Twitter, and LinkedIn handle millions of users and their content.
   - **Media & Content Delivery:** Netflix and YouTube stream video globally with low latency.
   - **Online Gaming:** Multiplayer games leverage the cloud for shared virtual worlds.
4. **Data-Intensive Applications (Big Data):** MapReduce and Hadoop process petabytes of unstructured data.

### Data-Aware Scheduling

A resource management strategy in which the scheduler considers data location when assigning tasks to nodes.

- **Explanation:** In large-scale systems, moving data is slow. Data-aware scheduling moves computation to the data, assigning tasks to nodes that already store the required input.
- **Example (MapReduce):** The JobTracker assigns Map tasks to nodes holding the input data blocks (e.g., 128 MB HDFS blocks), avoiding network transfer.

### SLA Compliance Standards

Users rely on third-party audits and compliance standards to verify that a CSP adheres to best practices in security and privacy. Common standards:

- **PCI DSS:** For services handling credit card transactions.
- **HIPAA:** For services handling sensitive healthcare data in the US.
- **ISO/IEC 27001/27002:** International standards for information security management systems.
- **SAS 70 / SSAE 16 / SOC 1, SOC 2, SOC 3:** Auditing standards and reports that verify internal controls over financial reporting and security (SSAE 16 superseded SAS 70; SOC 1 and SOC 2 reports come in Type I and Type II variants).

### Virtualization Types & Hypervisors

#### Types of Virtualization

1. **Full Virtualization:** The hypervisor simulates the complete underlying hardware. The guest OS runs unmodified, relying on binary translation or hardware assistance.
2. **Para-Virtualization:** The guest OS is modified to be aware of the hypervisor and uses special API calls ("hypercalls") for privileged operations, offering higher performance.
3. **OS-Level Virtualization (Containerization):** The OS kernel allows multiple isolated user-space instances (containers). All containers share the host OS kernel but have their own libraries and application code (e.g., Docker).

#### Significance in Enabling Cloud Infrastructure

- **Resource Pooling:** Running multiple VMs on one physical server pools resources for multi-tenancy.
- **Isolation:** Strong isolation between users; one user's VM crash doesn't affect others.
- **Agility & Scalability:** VMs and containers can be provisioned, started, stopped, and migrated faster than physical hardware, enabling rapid elasticity.

#### Importance of Hypervisors

- **Hardware Abstraction:** The hypervisor (Virtual Machine Monitor, VMM) abstracts the physical hardware, managing resources and creating virtual environments.
- **Resource Management:** Acts as a traffic cop, scheduling access to resources among competing VMs for fair distribution and performance.
- **Security Enforcement:** Serves as the root of trust, enforcing security policies (e.g., one VM cannot access another's memory/data).

### Cloud Service Availability Factors

Cloud services are vulnerable to events that disrupt availability:

- **Malicious Attacks:** DDoS attacks can overwhelm services (e.g., Akamai, Google).
- **Infrastructure Failures:** Power failures, lightning, and cooling system breakdowns can take down entire availability zones (e.g., the 2012 AWS outage).
- **Software Faults & Bugs:** "Hidden" bugs can prolong outages (e.g., in load balancers).
- **Complex Interactions (Instability):** Unintended coupling of independent controllers can lead to dynamic instability.

### Cloud Interoperability Challenges

- **Main Challenges:**
  - **Lack of Standards:** There are no universally accepted standards for cloud APIs, data formats, or interfaces; vendors use proprietary solutions.
  - **Heterogeneity:** Different providers use different hypervisors, storage models, and networking architectures, making migration difficult.
- **Impact on Cloud Ecosystems:**
  - **Vendor Lock-in:** Customers become dependent on a single provider's proprietary technology, limiting freedom of choice.
  - **Fragmented Market:** Hinders the creation of a global, open market for cloud services and complicates hybrid/federated cloud deployments.

### RAID 5 & RACS

#### RAID 5 (Redundant Array of Independent Disks, Level 5)

A storage technique for reliability and efficient data recovery.

- **Mechanism:** Block-level striping with distributed parity. Data is broken into blocks and distributed across disks. For each stripe, a parity block is computed (by XOR). Parity is stored on a different disk for each stripe, so it rotates across the array.
- **Benefit:** Withstands a single drive failure; the missing data is reconstructed from the remaining data and parity blocks.

#### RACS (Redundant Array of Cloud Storage)

Applies RAID 5 principles to cloud storage to avoid vendor lock-in and increase reliability.

- **Concept:** Stripes data across multiple cloud storage providers (e.g., Amazon S3, Google Cloud Storage).
- **Mechanism:** A proxy server acts as the "RAID controller," splitting user data into chunks, computing parity, and distributing the chunks to different cloud providers.
- **Benefit:** Avoids vendor lock-in. If one provider fails or raises prices, the data can be reconstructed from the chunks stored on the other clouds. Provides "cloud storage diversity."

### Vehicular Ad Hoc Networks (VANETs)

A specific type of mobile ad hoc network in which vehicles with on-board computers and wireless transceivers communicate with each other (V2V) and with roadside infrastructure (V2I).
- **Key Characteristics:**
  - **Dynamic Topology:** Vehicles move at high speeds, causing rapid changes in network connections.
  - **Communication Types:** Supports V2V for safety data and V2I for connecting to roadside units and the Internet.
  - **Power:** Rarely an issue, since nodes draw on the vehicle's battery.
- **Role in Cloud:** Often discussed in the context of Vehicular Clouds, where vehicle resources are coordinated to provide services.
- **Applications:** Safety (accident warnings), traffic efficiency (congestion monitoring), infotainment.
- **Example (Safety Warning):** A lead vehicle detects black ice and broadcasts a warning via V2V. Following vehicles receive the message, alert their drivers, and engage braking. The data is also sent via V2I to a traffic management cloud.