Stochastic Processes Cheatshee

Cheatsheet Content

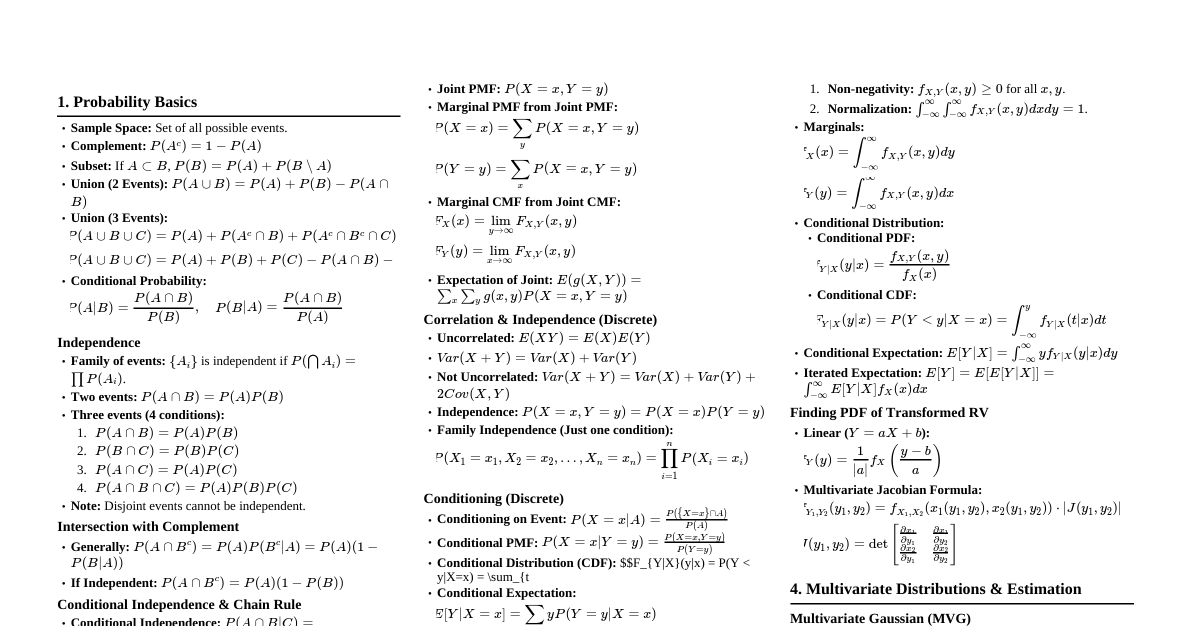

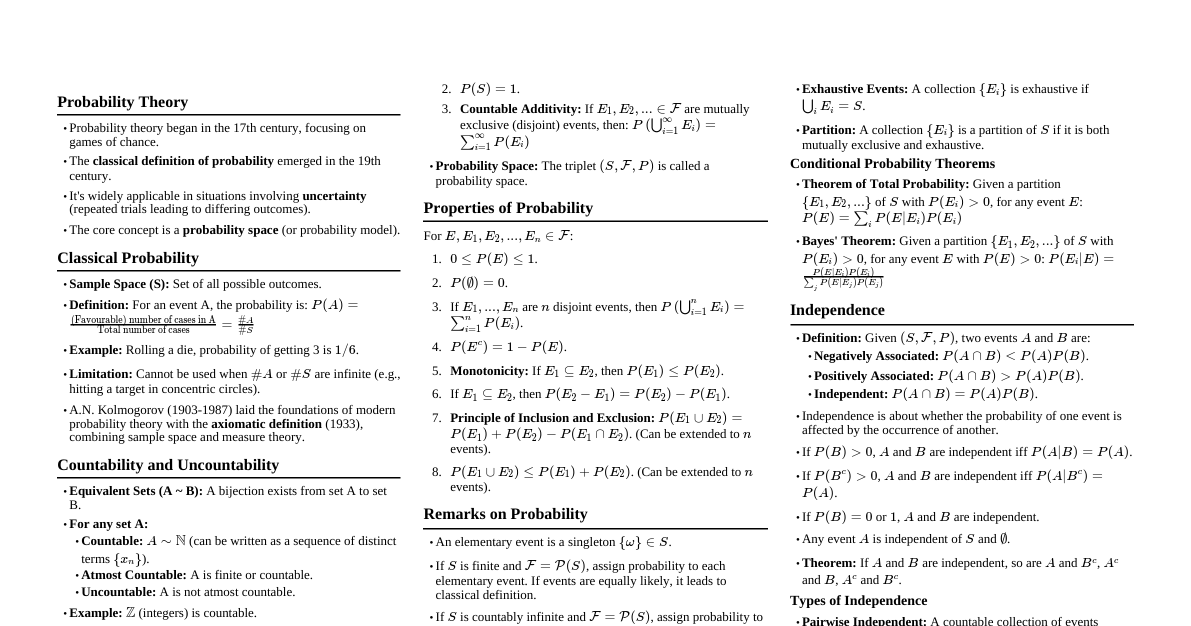

### Prerequisites: Probability Theory - **Probability Space:** $(\Omega, \mathcal{F}, P)$ where $\Omega$ is sample space, $\mathcal{F}$ is $\sigma$-algebra of events, $P$ is probability measure. - **Random Variable (RV):** A function $X: \Omega \to \mathbb{R}$ such that for every Borel set $B \in \mathcal{B}(\mathbb{R})$, $X^{-1}(B) \in \mathcal{F}$. - **Expectation:** - For discrete RV: $E[X] = \sum_x x P(X=x)$ - For continuous RV: $E[X] = \int_{-\infty}^{\infty} x f_X(x) dx$ - **Variance:** $Var(X) = E[(X - E[X])^2] = E[X^2] - (E[X])^2$ - **Conditional Expectation:** $E[X|\mathcal{G}]$ for a sub-$\sigma$-algebra $\mathcal{G} \subseteq \mathcal{F}$. - $E[X|Y=y]$: Expected value of $X$ given $Y=y$. - Properties: Linearity, Tower Property $E[E[X|\mathcal{G}]] = E[X]$, $E[XY|\mathcal{G}] = Y E[X|\mathcal{G}]$ if $Y$ is $\mathcal{G}$-measurable. - **Modes of Convergence:** - **Almost Sure (a.s.):** $X_n \to X$ a.s. if $P(\{\omega: X_n(\omega) \to X(\omega)\}) = 1$. - **In Probability:** $X_n \xrightarrow{P} X$ if $\lim_{n\to\infty} P(|X_n - X| > \epsilon) = 0$ for all $\epsilon > 0$. - **In $L^p$:** $X_n \xrightarrow{L^p} X$ if $\lim_{n\to\infty} E[|X_n - X|^p] = 0$. - **In Distribution:** $X_n \xrightarrow{D} X$ if $\lim_{n\to\infty} F_{X_n}(x) = F_X(x)$ at all continuity points of $F_X$. - **Laws of Large Numbers (LLN):** - **Weak LLN:** If $X_i$ are i.i.d. with $E[X_i] = \mu ### Stochastic Processes: Basic Concepts - **Definition:** A stochastic process is a collection of random variables $\{X_t\}_{t \in T}$ defined on a probability space $(\Omega, \mathcal{F}, P)$, where $T$ is an index set (time). - **Discrete Time:** $T = \{0, 1, 2, ...\}$ or $T = \{..., -1, 0, 1, ...\}$. - **Continuous Time:** $T = [0, \infty)$ or $T = \mathbb{R}$. - **State Space:** The set of all possible values that $X_t$ can take. - **Sample Path/Realization:** For a fixed $\omega \in \Omega$, the function $t \mapsto X_t(\omega)$. - **Filtration:** A sequence of $\sigma$-algebras $\{\mathcal{F}_t\}_{t \in T}$ such that $\mathcal{F}_s \subseteq \mathcal{F}_t \subseteq \mathcal{F}$ for $s ### Markov Chains (Discrete Time) - **Definition:** A sequence of RVs $\{X_n\}_{n=0}^\infty$ is a discrete-time Markov chain (DTMC) if for all $n \ge 0$ and states $i_0, ..., i_n, j$: $$P(X_{n+1}=j | X_n=i_n, ..., X_0=i_0) = P(X_{n+1}=j | X_n=i_n)$$ This is the **Markov Property**: "The future is conditionally independent of the past given the present." - **Homogeneous MC:** Transition probabilities are time-independent: $P(X_{n+1}=j | X_n=i) = P_{ij}$. - **Transition Matrix $P$:** $P_{ij} = P(X_{n+1}=j | X_n=i)$. $P$ is a stochastic matrix (rows sum to 1). - **Chapman-Kolmogorov Equations:** For $n, m \ge 0$: $$P_{ij}^{(n+m)} = \sum_k P_{ik}^{(n)} P_{kj}^{(m)}$$ In matrix form: $P^{(n+m)} = P^n P^m$. **Proof:** $$P(X_{n+m}=j | X_0=i) = \sum_k P(X_{n+m}=j, X_n=k | X_0=i)$$ $$ = \sum_k P(X_{n+m}=j | X_n=k, X_0=i) P(X_n=k | X_0=i)$$ $$ = \sum_k P(X_{n+m}=j | X_n=k) P(X_n=k | X_0=i) \quad (\text{Markov Property})$$ $$ = \sum_k P_{kj}^{(m)} P_{ik}^{(n)}$$ - **Communicating States:** $i \leftrightarrow j$ if $i \to j$ and $j \to i$. - **Irreducible MC:** All states communicate with each other. - **Recurrent State:** A state $i$ is recurrent if $P(\text{return to } i | X_0=i) = 1$. Otherwise, it's transient. - **Positive Recurrent:** A recurrent state $i$ is positive recurrent if $E[\text{time to return to } i | X_0=i] 0\}$. - **Aperiodic State:** $d(i)=1$. - **Ergodic State:** Aperiodic and positive recurrent. - **Stationary Distribution ($\pi$):** A probability vector such that $\pi P = \pi$ and $\sum_i \pi_i = 1$. - **Theorem:** For an irreducible, aperiodic DTMC, $\lim_{n\to\infty} P_{ij}^{(n)} = \pi_j$ for all $i,j$, where $\pi$ is the unique stationary distribution. - **Proof (Sketch):** Relies on the Ergodic Theorem for Markov Chains, which states that for any irreducible, positive recurrent Markov chain, the time average of a function of the state converges to the ensemble average with respect to the stationary distribution. For aperiodic chains, this implies convergence of transition probabilities. - **Reversible Markov Chains:** A MC with stationary distribution $\pi$ is reversible if $\pi_i P_{ij} = \pi_j P_{ji}$ for all $i,j$. (Detailed Balance Equations) ### Martingales - **Definition:** A sequence of RVs $\{M_n\}_{n=0}^\infty$ is a martingale with respect to a filtration $\{\mathcal{F}_n\}_{n=0}^\infty$ if: 1. $M_n$ is $\mathcal{F}_n$-measurable for all $n$. (Adapted) 2. $E[|M_n|] k}]$ for some $N$. $E[(M_{k+1} - M_k) \mathbb{I}_{T > k}] = E[E[(M_{k+1} - M_k) \mathbb{I}_{T > k} | \mathcal{F}_k]]$. Since $\mathbb{I}_{T > k}$ is $\mathcal{F}_k$-measurable (as $T$ is a stopping time), $= E[\mathbb{I}_{T > k} E[M_{k+1} - M_k | \mathcal{F}_k]] = E[\mathbb{I}_{T > k} (E[M_{k+1} | \mathcal{F}_k] - M_k)] = E[\mathbb{I}_{T > k} (M_k - M_k)] = 0$. Thus, $E[M_T] = E[M_0]$. - **Doob's Martingale Convergence Theorem:** If $M_n$ is a submartingale with $\sup_n E[|M_n|] ### Branching Processes - **Definition:** A sequence of RVs $X_n$ representing the size of a population at generation $n$. $X_0 = 1$. Each individual in generation $n$ produces $k$ offspring with probability $p_k$, independently. $$X_{n+1} = \sum_{i=1}^{X_n} Z_{n,i}$$ where $Z_{n,i}$ are i.i.d. with $P(Z_{n,i}=k) = p_k$. - **Generating Function:** $G(s) = E[s^Z] = \sum_{k=0}^\infty p_k s^k$. - **Mean Number of Offspring:** $\mu = E[Z] = G'(1)$. - **Generating Function for $X_n$:** $G_n(s) = E[s^{X_n}]$. $$G_{n+1}(s) = E[s^{X_{n+1}}] = E[E[s^{\sum_{i=1}^{X_n} Z_{n,i}} | X_n]] = E[(G(s))^{X_n}] = G_n(G(s))$$ So $G_n(s) = G(G(...G(s)...))$ ($n$ times). - **Probability of Extinction ($\rho$):** The smallest non-negative root of $s = G(s)$. - If $\mu \le 1$, then $\rho = 1$ (extinction is certain, unless $p_1=1$). - If $\mu > 1$, then $\rho 1$, $G'(1) > 1$, so $G(s)$ crosses the line $y=s$ from below at $s=\rho ### Poisson Process - **Definition (Counting Process):** A counting process $\{N(t)\}_{t \ge 0}$ is a Poisson Process with rate $\lambda > 0$ if: 1. $N(0)=0$. 2. $N(t)$ has independent increments (number of events in disjoint intervals are independent). 3. $N(t)$ has stationary increments (distribution of $N(t+s) - N(s)$ depends only on $t$). 4. $P(N(h)=1) = \lambda h + o(h)$ as $h \to 0$. 5. $P(N(h) \ge 2) = o(h)$ as $h \to 0$. - **Distribution:** $N(t) \sim \text{Poisson}(\lambda t)$, i.e., $P(N(t)=k) = e^{-\lambda t} \frac{(\lambda t)^k}{k!}$. **Proof (Sketch):** Let $P_k(t) = P(N(t)=k)$. $P_0(t+h) = P(N(t+h)=0) = P(N(t)=0, N(t+h)-N(t)=0)$ $= P_0(t) P(N(h)=0) = P_0(t) (1 - \lambda h - o(h))$. So $\frac{P_0(t+h) - P_0(t)}{h} = -\lambda P_0(t) - o(1) P_0(t)$. Taking limit as $h \to 0$: $P_0'(t) = -\lambda P_0(t)$. With $P_0(0)=1$, this implies $P_0(t) = e^{-\lambda t}$. Similarly for $P_k(t)$, we can derive a differential-difference equation: $P_k'(t) = -\lambda P_k(t) + \lambda P_{k-1}(t)$. Solving this yields the Poisson distribution. - **Inter-arrival Times:** Let $T_1, T_2, ...$ be the times between successive events. They are i.i.d. Exponential($\lambda$) RVs. **Proof:** $P(T_1 > t) = P(N(t)=0) = e^{-\lambda t}$. So $T_1 \sim \text{Exp}(\lambda)$. $P(T_2 > t | T_1=s) = P(N(t+s)-N(s)=0 | T_1=s) = P(N(t)=0) = e^{-\lambda t}$ by independent and stationary increments. So $T_2 \sim \text{Exp}(\lambda)$ and is independent of $T_1$. - **Sums of Poisson Processes:** If $N_1(t) \sim \text{Poisson}(\lambda_1 t)$ and $N_2(t) \sim \text{Poisson}(\lambda_2 t)$ are independent, then $N_1(t)+N_2(t) \sim \text{Poisson}((\lambda_1+\lambda_2)t)$. ### Continuous-Time Markov Chains (CTMC) - **Definition:** A stochastic process $\{X(t)\}_{t \ge 0}$ with a discrete state space $S$ is a CTMC if for all $t, s \ge 0$ and states $i, j, i_s$: $$P(X(t+s)=j | X(s)=i_s, X(u)=i_u \text{ for } u ### Brownian Motion - **Definition (Standard Brownian Motion $B(t)$):** A continuous-time stochastic process $\{B(t)\}_{t \ge 0}$ such that: 1. $B(0)=0$ a.s. 2. $B(t)$ has independent increments: For $0 \le s s$. 4. $B(t)$ has continuous sample paths a.s. - **Properties:** - $E[B(t)] = 0$ - $Var(B(t)) = t$ - $Cov(B(s), B(t)) = \min(s,t)$ **Proof:** $Cov(B(s), B(t)) = E[B(s)B(t)]$. Assume $s \le t$. $E[B(s)B(t)] = E[B(s)(B(t)-B(s)+B(s))] = E[B(s)(B(t)-B(s))] + E[B(s)^2]$. Since $B(s)$ and $B(t)-B(s)$ are independent and $E[B(t)-B(s)]=0$, $E[B(s)(B(t)-B(s))] = E[B(s)]E[B(t)-B(s)] = 0 \cdot 0 = 0$. So $Cov(B(s), B(t)) = E[B(s)^2] = Var(B(s)) = s$. - **General Brownian Motion:** $X(t) = \mu t + \sigma B(t)$, where $\mu \in \mathbb{R}$ is the drift and $\sigma > 0$ is the volatility. - $X(t) \sim N(\mu t, \sigma^2 t)$. - **Reflection Principle:** For $a>0$, $P(\max_{0 \le s \le t} B(s) \ge a) = 2 P(B(t) \ge a)$. **Proof (Sketch):** Consider the first time $\tau_a = \inf\{t : B(t) = a\}$. If $B(t) \ge a$, then $\tau_a \le t$. By the strong Markov property, the process $B(t) - B(\tau_a)$ for $t \ge \tau_a$ is also a Brownian motion. $P(B(t) \ge a \text{ and } \max_{0 \le s \le t} B(s) \ge a) = P(B(t) \ge a)$. $P(B(t) ### Stochastic Calculus (Brief Intro) - **Itô Integral:** For a predictable process $H(t)$ and Brownian motion $B(t)$, the Itô integral $\int_0^T H(s) dB(s)$ is defined as a limit of Riemann sums. - **Properties:** $E[\int_0^T H(s) dB(s)] = 0$. - **Itô Isometry:** $E[(\int_0^T H(s) dB(s))^2] = E[\int_0^T H(s)^2 ds]$. - **Itô's Lemma (1D):** If $X(t)$ is an Itô process $dX(t) = u(t,X(t)) dt + v(t,X(t)) dB(t)$, and $f(t,x)$ is a twice continuously differentiable function, then $Y(t) = f(t,X(t))$ is also an Itô process: $$dY(t) = \left(\frac{\partial f}{\partial t} + u \frac{\partial f}{\partial x} + \frac{1}{2} v^2 \frac{\partial^2 f}{\partial x^2}\right) dt + v \frac{\partial f}{\partial x} dB(t)$$ **Proof (Sketch):** Apply Taylor expansion to $f(t,X(t))$ up to second order, noting that $dt^2 = 0$, $dt dB(t) = 0$, and $dB(t)^2 = dt$. $df = \frac{\partial f}{\partial t} dt + \frac{\partial f}{\partial x} dX + \frac{1}{2} \frac{\partial^2 f}{\partial x^2} (dX)^2 + \frac{1}{2} \frac{\partial^2 f}{\partial t^2} (dt)^2 + \frac{\partial^2 f}{\partial t \partial x} dt dX + ...$ Substitute $dX = u dt + v dB$ and $(dX)^2 = (u dt + v dB)^2 = u^2 dt^2 + 2uv dt dB + v^2 dB^2 = v^2 dt$. This yields the formula.