Calculus I-III (Full Course)

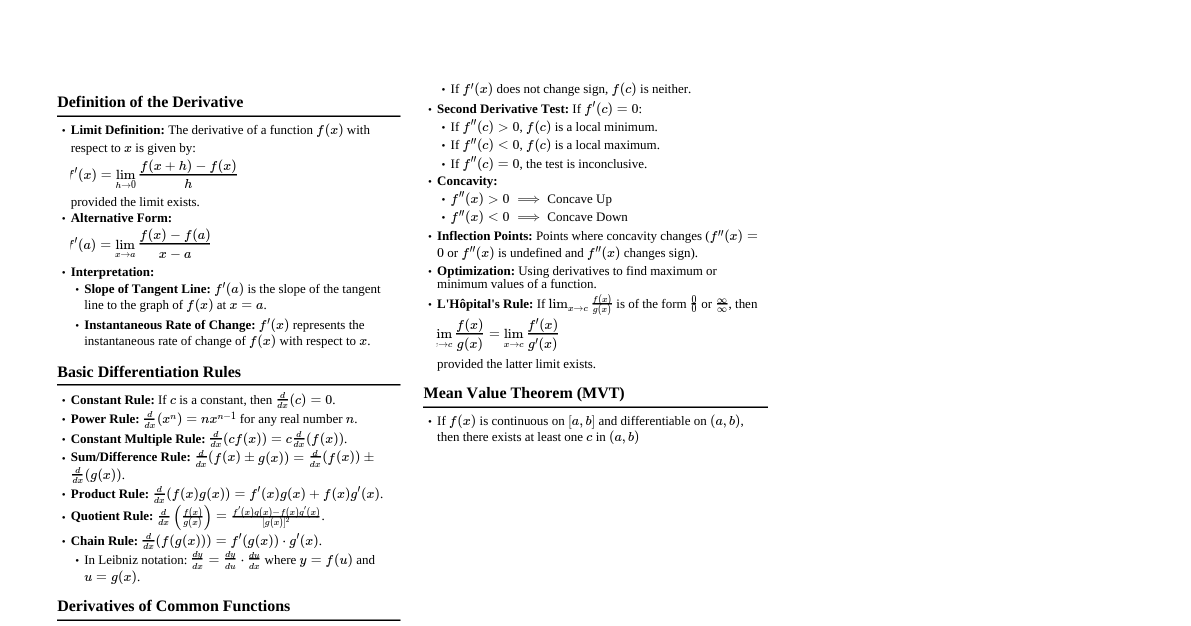

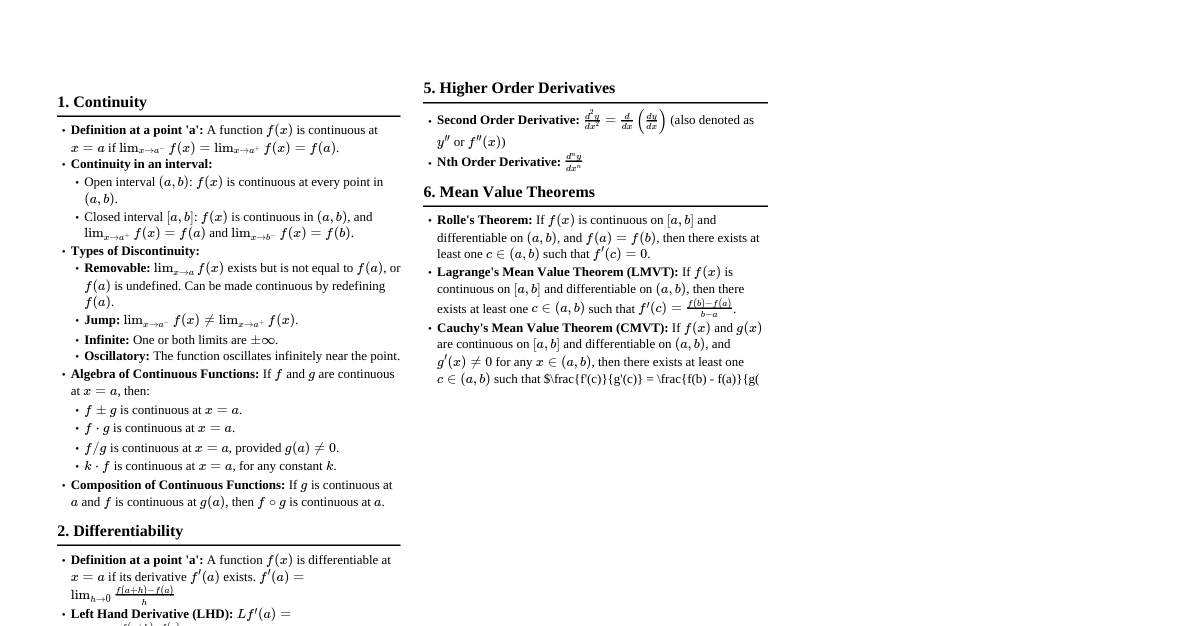

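### Limits and Continuity

#### Definitions

- **Limit of a Function:** We say $\lim_{x \to a} f(x) = L$ if for every $\epsilon > 0$ there exists a $\delta > 0$ such that if $0 < |x - a| < \delta$, then $|f(x) - L| < \epsilon$.
- **Continuity:** A function $f$ is continuous at $a$ if $\lim_{x \to a} f(x) = f(a)$.

#### Theorems

- **Sum Law:** If $\lim_{x \to a} f(x) = L$ and $\lim_{x \to a} g(x) = M$, then $\lim_{x \to a} [f(x) + g(x)] = L + M$.
  - **Proof:** Given $\epsilon > 0$, there exist $\delta_1 > 0$ and $\delta_2 > 0$ such that: if $0 < |x - a| < \delta_1$, then $|f(x) - L| < \epsilon/2$; and if $0 < |x - a| < \delta_2$, then $|g(x) - M| < \epsilon/2$. Let $\delta = \min(\delta_1, \delta_2)$. If $0 < |x - a| < \delta$, the triangle inequality gives $|f(x) + g(x) - (L + M)| \le |f(x) - L| + |g(x) - M| < \epsilon$.
- **Intermediate Value Theorem (IVT):** If $f$ is continuous on $[a, b]$ and $N$ is any number between $f(a)$ and $f(b)$, where $f(a) \neq f(b)$, then there exists a number $c$ in $(a, b)$ such that $f(c) = N$.
  - **Proof (Sketch):** Let $g(x) = f(x) - N$. If $f(a) < N < f(b)$, then $g(a) = f(a) - N < 0$ and $g(b) = f(b) - N > 0$. Since $g$ is continuous and changes sign on $[a, b]$, the completeness of the real numbers gives a point $c \in (a, b)$ with $g(c) = 0$, that is, $f(c) = N$. The case $f(a) > N > f(b)$ is symmetric.

### Differentiation

#### Definitions

- **Derivative:** The derivative of a function $f$ at a number $a$, denoted by $f'(a)$, is $f'(a) = \lim_{h \to 0} \frac{f(a+h) - f(a)}{h}$, provided this limit exists.
- **Interpretation:** $f'(a)$ represents the instantaneous rate of change of $f(x)$ with respect to $x$ at $x=a$, or the slope of the tangent line to the graph of $f$ at $(a, f(a))$.
- **Differentiability:** A function $f$ is differentiable at $a$ if $f'(a)$ exists. If $f$ is differentiable at $a$, then $f$ is continuous at $a$. The converse is not true (e.g., $f(x) = |x|$ at $x=0$).

#### Differentiation Rules

- **Constant Rule:** $\frac{d}{dx}(c) = 0$
- **Power Rule:** $\frac{d}{dx}(x^n) = nx^{n-1}$ for any real number $n$.
- **Constant Multiple Rule:** $\frac{d}{dx}(c f(x)) = c f'(x)$
- **Sum/Difference Rule:** $\frac{d}{dx}(f(x) \pm g(x)) = f'(x) \pm g'(x)$
- **Product Rule:** $\frac{d}{dx}(f(x) g(x)) = f'(x) g(x) + f(x) g'(x)$
  - **Proof:** Let $y = f(x)g(x)$. $\frac{dy}{dx} = \lim_{h \to 0} \frac{f(x+h)g(x+h) - f(x)g(x)}{h}$ $= \lim_{h \to 0} \frac{f(x+h)g(x+h) - f(x)g(x+h) + f(x)g(x+h) - f(x)g(x)}{h}$ $= \lim_{h \to 0} \left[ g(x+h) \frac{f(x+h) - f(x)}{h} + f(x) \frac{g(x+h) - g(x)}{h} \right]$. Since $g$ is differentiable, it is continuous, so $\lim_{h \to 0} g(x+h) = g(x)$. Hence $\frac{dy}{dx} = g(x) \lim_{h \to 0} \frac{f(x+h) - f(x)}{h} + f(x) \lim_{h \to 0} \frac{g(x+h) - g(x)}{h}$ $= g(x) f'(x) + f(x) g'(x)$.
- **Quotient Rule:** $\frac{d}{dx}\left(\frac{f(x)}{g(x)}\right) = \frac{f'(x) g(x) - f(x) g'(x)}{[g(x)]^2}$, where $g(x) \neq 0$.
  - **Proof:** Let $y = \frac{f(x)}{g(x)}$.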
$\frac{dy}{dx} = \lim_{h \to 0} \frac{\frac{f(x+h)}{g(x+h)} - \frac{f(x)}{g(x)}}{h} = \lim_{h \to 0} \frac{f(x+h)g(x) - f(x)g(x+h)}{h g(x+h)g(x)}$ $= \lim_{h \to 0} \frac{f(x+h)g(x) - f(x)g(x) + f(x)g(x) - f(x)g(x+h)}{h g(x+h)g(x)}$ $= \lim_{h \to 0} \frac{g(x) \frac{f(x+h) - f(x)}{h} - f(x) \frac{g(x+h) - g(x)}{h}}{g(x+h)g(x)}$. Since $g$ is differentiable, it is continuous, so $\lim_{h \to 0} g(x+h) = g(x)$. Hence $\frac{dy}{dx} = \frac{g(x) f'(x) - f(x) g'(x)}{[g(x)]^2}$.
- **Chain Rule:** $\frac{d}{dx}(f(g(x))) = f'(g(x)) g'(x)$ or $\frac{dy}{dx} = \frac{dy}{du} \frac{du}{dx}$ if $y=f(u)$ and $u=g(x)$.
  - **Proof (Sketch for $g'(x) \neq 0$):** $\frac{dy}{dx} = \lim_{\Delta x \to 0} \frac{\Delta y}{\Delta x} = \lim_{\Delta x \to 0} \frac{\Delta y}{\Delta u} \frac{\Delta u}{\Delta x}$. As $\Delta x \to 0$, $\Delta u = g(x+\Delta x) - g(x) \to 0$ because $g$ is continuous. So, $\lim_{\Delta x \to 0} \frac{\Delta y}{\Delta u} \frac{\Delta u}{\Delta x} = \left(\lim_{\Delta u \to 0} \frac{\Delta y}{\Delta u}\right) \left(\lim_{\Delta x \to 0} \frac{\Delta u}{\Delta x}\right) = \frac{dy}{du} \frac{du}{dx} = f'(u)g'(x) = f'(g(x))g'(x)$. A more rigorous proof handles the case where $\Delta u = 0$ for values of $\Delta x$ near 0.

#### Important Derivatives

- $\frac{d}{dx}(\sin x) = \cos x$
- $\frac{d}{dx}(\cos x) = -\sin x$
- $\frac{d}{dx}(\tan x) = \sec^2 x$
- $\frac{d}{dx}(e^x) = e^x$
- $\frac{d}{dx}(\ln x) = \frac{1}{x}$
- $\frac{d}{dx}(\arcsin x) = \frac{1}{\sqrt{1-x^2}}$
- $\frac{d}{dx}(\arctan x) = \frac{1}{1+x^2}$

#### Theorems

- **Rolle's Theorem:** Let $f$ be a function that satisfies the following three hypotheses:
  1. $f$ is continuous on the closed interval $[a, b]$.
  2. $f$ is differentiable on the open interval $(a, b)$.
  3. $f(a) = f(b)$.

  Then there is a number $c$ in $(a, b)$ such that $f'(c) = 0$.
  - **Proof:** Case 1: $f(x) = k$ (a constant) for all $x \in [a,b]$. Then $f'(x) = 0$ for all $x \in (a,b)$, so any $c \in (a,b)$ works. Case 2: $f(x)$ is not constant.
Since $f$ is continuous on $[a,b]$, by the Extreme Value Theorem, $f$ attains its maximum and minimum values on $[a,b]$. Since $f(a) = f(b)$ and $f$ is not constant, at least one of the maximum or minimum must occur at a point $c \in (a,b)$. Because $f$ has a local extremum at this interior point $c$ and is differentiable there, Fermat's Theorem gives $f'(c) = 0$.
- **Mean Value Theorem (MVT):** If $f$ is continuous on the closed interval $[a, b]$ and differentiable on the open interval $(a, b)$, then there exists a number $c$ in $(a, b)$ such that $f'(c) = \frac{f(b) - f(a)}{b - a}$.
  - **Proof:** Consider the function $g(x) = f(x) - \left( f(a) + \frac{f(b) - f(a)}{b - a}(x - a) \right)$. This function $g(x)$ represents the vertical distance between $f(x)$ and the secant line connecting $(a, f(a))$ and $(b, f(b))$. (1) $g(x)$ is continuous on $[a,b]$ because $f(x)$ and linear functions are continuous. (2) $g(x)$ is differentiable on $(a,b)$ because $f(x)$ and linear functions are differentiable. (3) $g(a) = f(a) - (f(a) + 0) = 0$. (4) $g(b) = f(b) - \left( f(a) + \frac{f(b) - f(a)}{b - a}(b - a) \right) = f(b) - (f(a) + f(b) - f(a)) = 0$. Since $g(a) = g(b) = 0$, by Rolle's Theorem, there exists a number $c \in (a,b)$ such that $g'(c) = 0$. Now $g'(x) = f'(x) - \frac{f(b) - f(a)}{b - a}$, so $g'(c) = f'(c) - \frac{f(b) - f(a)}{b - a} = 0$, which implies $f'(c) = \frac{f(b) - f(a)}{b - a}$.

### Applications of Differentiation

- **L'Hôpital's Rule:** If $\lim_{x \to a} \frac{f(x)}{g(x)}$ is of the form $\frac{0}{0}$ or $\frac{\pm\infty}{\pm\infty}$, and $f$ and $g$ are differentiable and $g'(x) \neq 0$ near $a$ (except possibly at $a$), then $\lim_{x \to a} \frac{f(x)}{g(x)} = \lim_{x \to a} \frac{f'(x)}{g'(x)}$, provided the latter limit exists or is $\pm\infty$.
  - **Proof (for the $\frac{0}{0}$ case, $a$ finite, $g'(x) \neq 0$):** We use Cauchy's Mean Value Theorem: if $f$ and $g$ are continuous on $[a,b]$ and differentiable on $(a,b)$ and $g'(x) \neq 0$ for $x \in (a,b)$, then there exists $c \in (a,b)$ such that $\frac{f'(c)}{g'(c)} = \frac{f(b) - f(a)}{g(b) - g(a)}$. Assume $\lim_{x \to a} f(x) = 0$ and $\lim_{x \to a} g(x) = 0$, and define (or redefine) $f(a) = g(a) = 0$ so that $f$ and $g$ are continuous at $a$. For $x > a$, applying Cauchy's MVT on $[a,x]$ gives $c_x \in (a,x)$ such that $\frac{f'(c_x)}{g'(c_x)} = \frac{f(x) - f(a)}{g(x) - g(a)} = \frac{f(x)}{g(x)}$. As $x \to a^+$, we have $c_x \to a^+$. Thus, $\lim_{x \to a^+} \frac{f(x)}{g(x)} = \lim_{c_x \to a^+} \frac{f'(c_x)}{g'(c_x)} = \lim_{x \to a^+} \frac{f'(x)}{g'(x)}$. A similar argument holds for $x \to a^-$. The other indeterminate forms can be converted to $\frac{0}{0}$ or $\frac{\infty}{\infty}$.
- **First Derivative Test:** Suppose $c$ is a critical number of a continuous function $f$.
  - If $f'(x)$ changes from positive to negative at $c$, then $f$ has a local maximum at $c$.
  - If $f'(x)$ changes from negative to positive at $c$, then $f$ has a local minimum at $c$.
  - If $f'(x)$ does not change sign at $c$ (e.g., $f'(x)$ is positive on both sides of $c$), then $f$ has no local extremum at $c$.
- **Second Derivative Test:** Suppose $f''(x)$ is continuous near $c$.
  - If $f'(c) = 0$ and $f''(c) > 0$, then $f$ has a local minimum at $c$.
  - If $f'(c) = 0$ and $f''(c) < 0$, then $f$ has a local maximum at $c$.

### Integration

#### Definitions

- **Antiderivative:** A function $F$ is an antiderivative of $f$ on an interval $I$ if $F'(x) = f(x)$ for all $x$ in $I$.
- **Indefinite Integral:** The indefinite integral of $f(x)$ is denoted by $\int f(x) dx = F(x) + C$, where $F$ is an antiderivative of $f$ and $C$ is the constant of integration.
- **Definite Integral (Riemann Sum):** The definite integral of $f$ from $a$ to $b$ is $\int_a^b f(x) dx = \lim_{n \to \infty} \sum_{i=1}^n f(x_i^*) \Delta x$, where $\Delta x = \frac{b-a}{n}$ and $x_i^*$ is a sample point in the $i$-th subinterval $[x_{i-1}, x_i]$.

#### Fundamental Theorem of Calculus (FTC)

- **FTC Part 1:** If $f$ is continuous on $[a, b]$, then the function $g(x) = \int_a^x f(t) dt$ is continuous on $[a, b]$ and differentiable on $(a, b)$, and $g'(x) = f(x)$.
  - **Proof:** $g'(x) = \lim_{h \to 0} \frac{g(x+h) - g(x)}{h} = \lim_{h \to 0} \frac{1}{h} \left( \int_a^{x+h} f(t) dt - \int_a^x f(t) dt \right)$ $= \lim_{h \to 0} \frac{1}{h} \int_x^{x+h} f(t) dt$. If $h > 0$, since $f$ is continuous, by the Extreme Value Theorem, $f$ attains a minimum $m$ and maximum $M$ on $[x, x+h]$. So, $m \le f(t) \le M$ for $t \in [x, x+h]$. Then $m h \le \int_x^{x+h} f(t) dt \le M h$, and $m \le \frac{1}{h} \int_x^{x+h} f(t) dt \le M$. By the IVT for continuous functions, there exists $c_h \in [x, x+h]$ such that $f(c_h) = \frac{1}{h} \int_x^{x+h} f(t) dt$. As $h \to 0$, $c_h \to x$. Since $f$ is continuous, $\lim_{h \to 0} f(c_h) = f(x)$. Thus, $g'(x) = f(x)$. A similar argument holds for $h < 0$.
- **FTC Part 2:** If $f$ is continuous on $[a, b]$ and $F$ is any antiderivative of $f$, then $\int_a^b f(x) dx = F(b) - F(a)$.

### Applications of Integration

- **Area between Curves:** $A = \int_a^b |f(x) - g(x)| dx$
- **Volumes of Solids of Revolution:**
  - **Disk Method:** $V = \int_a^b \pi [R(x)]^2 dx$ (revolving around x-axis)
  - **Washer Method:** $V = \int_a^b \pi ([R(x)]^2 - [r(x)]^2) dx$ (revolving around x-axis)
  - **Cylindrical Shells:** $V = \int_a^b 2\pi x f(x) dx$ (revolving around y-axis)
- **Arc Length:** $L = \int_a^b \sqrt{1 + [f'(x)]^2} dx$ or $L = \int_a^b \sqrt{1 + \left(\frac{dy}{dx}\right)^2} dx$
  - **Proof (Sketch):** Approximate the curve with small line segments. Each segment has length $\Delta s = \sqrt{(\Delta x)^2 + (\Delta y)^2} = \sqrt{1 + \left(\frac{\Delta y}{\Delta x}\right)^2} \Delta x$. As $\Delta x \to 0$, we get $ds = \sqrt{1 + \left(\frac{dy}{dx}\right)^2} dx$.
- **Surface Area of a Solid of Revolution:** $S = \int_a^b 2\pi y \sqrt{1 + \left(\frac{dy}{dx}\right)^2} dx$ (revolving $y=f(x)$ around x-axis)
- **Work:** $W = \int_a^b F(x) dx$
- **Average Value of a Function:** $f_{avg} = \frac{1}{b-a} \int_a^b f(x) dx$
- **Mean Value Theorem for Integrals:** If $f$ is continuous on $[a,b]$, then there exists a number $c$ in $[a,b]$ such that $f(c) = \frac{1}{b-a} \int_a^b f(x) dx$.
  - **Proof:** Since $f$ is continuous on $[a,b]$, by the EVT, $f$ attains a minimum value $m$ and a maximum value $M$ on $[a,b]$. So, $m \le f(x) \le M$ for all $x \in [a,b]$. Integrating over $[a,b]$: $\int_a^b m \, dx \le \int_a^b f(x) dx \le \int_a^b M \, dx$, that is, $m(b-a) \le \int_a^b f(x) dx \le M(b-a)$. Dividing by $(b-a)$: $m \le \frac{1}{b-a} \int_a^b f(x) dx \le M$. Let $k = \frac{1}{b-a} \int_a^b f(x) dx$. We have $m \le k \le M$. By the IVT, since $f$ is continuous and $k$ is a value between its minimum and maximum, there must exist a number $c \in [a,b]$ such that $f(c) = k$. Thus, $f(c) = \frac{1}{b-a} \int_a^b f(x) dx$.

### Sequences and Series

#### Definitions

- **Sequence:** An ordered list of numbers $a_1, a_2, \dots, a_n, \dots$.
- **Convergence of a Sequence:** A sequence $\{a_n\}$ converges to $L$ if $\lim_{n \to \infty} a_n = L$. Otherwise, it diverges.
- **Series:** The sum of the terms of a sequence, $\sum_{n=1}^\infty a_n$.
- **Partial Sums:** $S_N = \sum_{n=1}^N a_n$.
- **Convergence of a Series:** A series $\sum a_n$ converges if its sequence of partial sums $\{S_N\}$ converges to a finite limit $S$. Otherwise, it diverges.

#### Convergence Tests

- **Divergence Test:** If $\lim_{n \to \infty} a_n \neq 0$ or the limit does not exist, then $\sum a_n$ diverges. (Converse is false: if $\lim_{n \to \infty} a_n = 0$, the series may or may not converge, e.g., harmonic series $\sum \frac{1}{n}$).
  - **Proof:** Suppose $\sum a_n$ converges to $S$. Then $S_N \to S$ as $N \to \infty$. We have $a_N = S_N - S_{N-1}$.
So $\lim_{N \to \infty} a_N = \lim_{N \to \infty} (S_N - S_{N-1}) = \lim_{N \to \infty} S_N - \lim_{N \to \infty} S_{N-1} = S - S = 0$. Thus, if $\sum a_n$ converges, then $\lim_{n \to \infty} a_n = 0$. The contrapositive statement is the Divergence Test.
- **Integral Test:** If $f$ is a positive, continuous, and decreasing function on $[1, \infty)$ and $a_n = f(n)$, then $\sum_{n=1}^\infty a_n$ converges if and only if $\int_1^\infty f(x) dx$ converges.
  - **Proof (Sketch):** Compare areas of rectangles (left-hand and right-hand sums) with the area under the curve. For a decreasing function, $\int_1^{N+1} f(x) dx \le \sum_{i=1}^N f(i)$ and $\sum_{i=2}^N f(i) \le \int_1^N f(x) dx$. This implies $\int_1^\infty f(x) dx \le \sum_{n=1}^\infty a_n$ and $\sum_{n=1}^\infty a_n \le a_1 + \int_1^\infty f(x) dx$. If the integral converges, the partial sums are bounded above by $a_1 + \int_1^\infty f(x) dx$, and since the terms are positive, the series must converge. If the series converges, the integral is bounded above by the series value, and since $f(x)$ is positive, the integral must converge.
- **p-Series Test:** The series $\sum_{n=1}^\infty \frac{1}{n^p}$ converges if $p > 1$ and diverges if $p \le 1$. (Proven using the Integral Test.)
- **Comparison Test:** Suppose $\sum a_n$ and $\sum b_n$ are series with positive terms.
  1. If $\sum b_n$ converges and $a_n \le b_n$ for all $n$, then $\sum a_n$ converges.
  2. If $\sum b_n$ diverges and $a_n \ge b_n$ for all $n$, then $\sum a_n$ diverges.
- **Limit Comparison Test:** Suppose $\sum a_n$ and $\sum b_n$ are series with positive terms. If $\lim_{n \to \infty} \frac{a_n}{b_n} = L$ where $L$ is a finite, positive number ($0 < L < \infty$), then either both series converge or both diverge.
- **Alternating Series Test:** Suppose the alternating series $\sum_{n=1}^\infty (-1)^{n-1} b_n = b_1 - b_2 + b_3 - \dots$ ($b_n > 0$) satisfies:
  1. $b_n$ is decreasing ($b_{n+1} \le b_n$)
  2. $\lim_{n \to \infty} b_n = 0$

  Then the series converges.
  - **Proof (Sketch):** Consider the partial sums $S_{2N}$ and $S_{2N+1}$. $S_{2N} = (b_1 - b_2) + (b_3 - b_4) + \dots + (b_{2N-1} - b_{2N})$.
Since $b_n$ is decreasing, each term in parentheses is non-negative, so $S_{2N}$ is increasing. Also, $S_{2N} = b_1 - (b_2 - b_3) - \dots - (b_{2N-2} - b_{2N-1}) - b_{2N}$. Since the terms in parentheses are non-negative and $b_{2N} \ge 0$, $S_{2N} \le b_1$. So $\{S_{2N}\}$ is increasing and bounded above, thus it converges to some $S$. Next, $S_{2N+1} = S_{2N} + b_{2N+1}$. Since $\lim_{N \to \infty} b_{2N+1} = 0$, $\lim_{N \to \infty} S_{2N+1} = \lim_{N \to \infty} S_{2N} + 0 = S$. Since both even and odd partial sums converge to the same limit, the series converges.
- **Ratio Test:** For a series $\sum a_n$:
  1. If $\lim_{n \to \infty} \left|\frac{a_{n+1}}{a_n}\right| = L < 1$, the series converges absolutely.
  2. If $\lim_{n \to \infty} \left|\frac{a_{n+1}}{a_n}\right| = L > 1$ or $\lim_{n \to \infty} \left|\frac{a_{n+1}}{a_n}\right| = \infty$, the series diverges.
  3. If $\lim_{n \to \infty} \left|\frac{a_{n+1}}{a_n}\right| = 1$, the test is inconclusive.
- **Root Test:** For a series $\sum a_n$:
  1. If $\lim_{n \to \infty} \sqrt[n]{|a_n|} = L < 1$, the series converges absolutely.
  2. If $\lim_{n \to \infty} \sqrt[n]{|a_n|} = L > 1$ or $\lim_{n \to \infty} \sqrt[n]{|a_n|} = \infty$, the series diverges.
  3. If $\lim_{n \to \infty} \sqrt[n]{|a_n|} = 1$, the test is inconclusive.

#### Power Series

- **Definition:** A series of the form $\sum_{n=0}^\infty c_n (x-a)^n$.
- **Radius of Convergence (R):** For a given power series, there are three possibilities:
  1. The series converges only when $x=a$ ($R=0$).
  2. The series converges for all $x$ ($R=\infty$).
  3. There is a positive number $R$ such that the series converges if $|x-a| < R$ and diverges if $|x-a| > R$.
- **Interval of Convergence:** The set of all $x$ for which the series converges. The endpoints $x=a \pm R$ must be checked separately.

#### Taylor and Maclaurin Series

- **Taylor Series:** If $f$ has a power series representation (or is "analytic") at $a$, then $f(x) = \sum_{n=0}^\infty \frac{f^{(n)}(a)}{n!} (x-a)^n$.
- **Maclaurin Series:** A Taylor series with $a=0$: $f(x) = \sum_{n=0}^\infty \frac{f^{(n)}(0)}{n!} x^n$.
- **Taylor's Inequality:** If $|f^{(n+1)}(x)| \le M$ for $|x-a| \le d$, then the remainder $R_n(x)$ of the Taylor series satisfies $|R_n(x)| \le \frac{M}{(n+1)!}|x-a|^{n+1}$ for $|x-a| \le d$. This is used to show that $R_n(x) \to 0$ as $n \to \infty$, and thus that the Taylor series converges to $f(x)$.

#### Common Maclaurin Series

- $e^x = \sum_{n=0}^\infty \frac{x^n}{n!} = 1 + x + \frac{x^2}{2!} + \frac{x^3}{3!} + \dots$, for all $x$.
- $\sin x = \sum_{n=0}^\infty (-1)^n \frac{x^{2n+1}}{(2n+1)!} = x - \frac{x^3}{3!} + \frac{x^5}{5!} - \dots$, for all $x$.
- $\cos x = \sum_{n=0}^\infty (-1)^n \frac{x^{2n}}{(2n)!} = 1 - \frac{x^2}{2!} + \frac{x^4}{4!} - \dots$, for all $x$.
- $\frac{1}{1-x} = \sum_{n=0}^\infty x^n = 1 + x + x^2 + x^3 + \dots$, for $|x| < 1$.

### Parametric Equations and Polar Coordinates

#### Parametric Equations

- **Definition:** $x = f(t)$, $y = g(t)$.
- **Slope of Tangent:** $\frac{dy}{dx} = \frac{dy/dt}{dx/dt}$, provided $\frac{dx}{dt} \neq 0$.
- **Second Derivative:** $\frac{d^2y}{dx^2} = \frac{d}{dx}\left(\frac{dy}{dx}\right) = \frac{d/dt(dy/dx)}{dx/dt}$.
- **Arc Length:** $L = \int_a^b \sqrt{\left(\frac{dx}{dt}\right)^2 + \left(\frac{dy}{dt}\right)^2} dt$.

#### Polar Coordinates

- **Conversion:** $x = r \cos \theta$, $y = r \sin \theta$, $r^2 = x^2 + y^2$, $\tan \theta = \frac{y}{x}$.
- **Slope of Tangent:** For $r = f(\theta)$, $\frac{dy}{dx} = \frac{dy/d\theta}{dx/d\theta} = \frac{f'(\theta)\sin\theta + f(\theta)\cos\theta}{f'(\theta)\cos\theta - f(\theta)\sin\theta}$.
- **Area in Polar Coordinates:** $A = \int_a^b \frac{1}{2} [f(\theta)]^2 d\theta = \int_a^b \frac{1}{2} r^2 d\theta$.
- **Arc Length in Polar Coordinates:** $L = \int_a^b \sqrt{r^2 + \left(\frac{dr}{d\theta}\right)^2} d\theta$.

### Vectors and the Geometry of Space

#### Vectors

- **Vector:** A quantity with both magnitude and direction. Represented as $\vec{v} = \langle v_1, v_2, v_3 \rangle$.
- **Magnitude:** $|\vec{v}| = \sqrt{v_1^2 + v_2^2 + v_3^2}$.
- **Unit Vector:** $\hat{u} = \frac{\vec{v}}{|\vec{v}|}$.
- **Dot Product:** $\vec{a} \cdot \vec{b} = a_1 b_1 + a_2 b_2 + a_3 b_3 = |\vec{a}| |\vec{b}| \cos \theta$.
  - **Orthogonality:** $\vec{a} \cdot \vec{b} = 0 \iff \vec{a} \perp \vec{b}$ (if $\vec{a}, \vec{b} \neq \vec{0}$).
- **Cross Product:** $\vec{a} \times \vec{b} = \begin{vmatrix} \mathbf{i} & \mathbf{j} & \mathbf{k} \\ a_1 & a_2 & a_3 \\ b_1 & b_2 & b_3 \end{vmatrix}$.
  - **Properties:** $\vec{a} \times \vec{b}$ is orthogonal to both $\vec{a}$ and $\vec{b}$.
  - **Magnitude:** $|\vec{a} \times \vec{b}| = |\vec{a}| |\vec{b}| \sin \theta$.
  - **Area of Parallelogram:** The parallelogram formed by $\vec{a}$ and $\vec{b}$ has area $|\vec{a} \times \vec{b}|$.
  - **Parallelism:** $\vec{a} \times \vec{b} = \vec{0} \iff \vec{a} \parallel \vec{b}$ (if $\vec{a}, \vec{b} \neq \vec{0}$).
- **Scalar Triple Product:** $\vec{a} \cdot (\vec{b} \times \vec{c}) = \begin{vmatrix} a_1 & a_2 & a_3 \\ b_1 & b_2 & b_3 \\ c_1 & c_2 & c_3 \end{vmatrix}$.
  - **Volume of Parallelepiped:** The parallelepiped formed by $\vec{a}, \vec{b}, \vec{c}$ has volume $|\vec{a} \cdot (\vec{b} \times \vec{c})|$.

#### Lines and Planes in 3D

- **Equation of a Line:**
  - **Vector Equation:** $\vec{r}(t) = \vec{r}_0 + t\vec{v}$
  - **Parametric Equations:** $x = x_0 + at$, $y = y_0 + bt$, $z = z_0 + ct$
  - **Symmetric Equations:** $\frac{x-x_0}{a} = \frac{y-y_0}{b} = \frac{z-z_0}{c}$
- **Equation of a Plane:**
  - **Vector Equation:** $\vec{n} \cdot (\vec{r} - \vec{r}_0) = 0$
  - **Scalar Equation:** $a(x-x_0) + b(y-y_0) + c(z-z_0) = 0$
  - **General Form:** $ax + by + cz + d = 0$ (where $\vec{n} = \langle a, b, c \rangle$ is the normal vector).

#### Cylinders and Quadric Surfaces

- **Cylinders:** A surface consisting of all lines (rulings) that are parallel to a given line and pass through a given plane curve.
- **Quadric Surfaces:** General equation $Ax^2 + By^2 + Cz^2 + Dxy + Eyz + Fxz + Gx + Hy + Iz + J = 0$.
  - **Ellipsoid:** $\frac{x^2}{a^2} + \frac{y^2}{b^2} + \frac{z^2}{c^2} = 1$
  - **Elliptic Paraboloid:** $\frac{z}{c} = \frac{x^2}{a^2} + \frac{y^2}{b^2}$
  - **Hyperbolic Paraboloid:** $\frac{z}{c} = \frac{x^2}{a^2} - \frac{y^2}{b^2}$
  - **Cone:** $\frac{x^2}{a^2} + \frac{y^2}{b^2} = \frac{z^2}{c^2}$
  - **Hyperboloid of One Sheet:** $\frac{x^2}{a^2} + \frac{y^2}{b^2} - \frac{z^2}{c^2} = 1$
  - **Hyperboloid of Two Sheets:** $-\frac{x^2}{a^2} - \frac{y^2}{b^2} + \frac{z^2}{c^2} = 1$

### Multivariable Differentiation

#### Functions of Several Variables

- **Definition:** A function $f$ of two variables is a rule that assigns to each ordered pair $(x,y)$ in a domain $D$ a unique real number $f(x,y)$.
- **Level Curves:** The curves in the plane with equations $f(x,y) = k$ (where $k$ is a constant).
- **Limits and Continuity:**
  - **Limit:** $\lim_{(x,y) \to (a,b)} f(x,y) = L$ if for every $\epsilon > 0$ there exists a $\delta > 0$ such that if $0 < \sqrt{(x-a)^2 + (y-b)^2} < \delta$, then $|f(x,y) - L| < \epsilon$.
- **Second Derivatives Test:** Suppose the second partial derivatives of $f$ are continuous near $(a,b)$ and $f_x(a,b) = f_y(a,b) = 0$. Let $D = f_{xx}(a,b) f_{yy}(a,b) - [f_{xy}(a,b)]^2$.
  1. If $D > 0$ and $f_{xx}(a,b) > 0$, then $f(a,b)$ is a local minimum.
  2. If $D > 0$ and $f_{xx}(a,b) < 0$, then $f(a,b)$ is a local maximum.
  3. If $D < 0$, then $(a,b)$ is a saddle point of $f$.

### Multivariable Integration

#### Double Integrals

- **Definition (Riemann Sum):** $\iint_R f(x,y) dA = \lim_{m,n \to \infty} \sum_{i=1}^m \sum_{j=1}^n f(x_i^*, y_j^*) \Delta A$.
- **Fubini's Theorem:** If $f$ is continuous on the rectangle $R = [a,b] \times [c,d]$, then $\iint_R f(x,y) dA = \int_a^b \int_c^d f(x,y) dy dx = \int_c^d \int_a^b f(x,y) dx dy$.
- **Double Integrals over General Regions:**
  - **Type I (y-simple):** $D = \{(x,y) | a \le x \le b, g_1(x) \le y \le g_2(x)\}$. $\iint_D f(x,y) dA = \int_a^b \int_{g_1(x)}^{g_2(x)} f(x,y) dy dx$.
  - **Type II (x-simple):** $D = \{(x,y) | c \le y \le d, h_1(y) \le x \le h_2(y)\}$. $\iint_D f(x,y) dA = \int_c^d \int_{h_1(y)}^{h_2(y)} f(x,y) dx dy$.
- **Area:** $A(D) = \iint_D 1 dA$.
- **Polar Coordinates:** If $f$ is continuous on a polar region $R$, then $\iint_R f(x,y) dA = \iint_R f(r \cos\theta, r \sin\theta) r dr d\theta$.
  - The Jacobian for polar coordinates is $r$.
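
The polar change of variables can be sanity-checked numerically. The sketch below is illustrative only: the helper name `polar_double_integral`, the midpoint rule, and the test integrand $f(x,y) = x^2 + y^2$ on the unit disk are assumptions chosen for this example, not part of the cheatsheet. The exact value is $\int_0^{2\pi} \int_0^1 r^2 \cdot r \, dr \, d\theta = \frac{\pi}{2}$.

```python
import math


def polar_double_integral(f, r_max, n_r=400, n_theta=400):
    """Midpoint Riemann sum of f(x, y) over the disk of radius r_max.

    The sum is taken over a grid in (r, theta); each sample is weighted by
    r * dr * dtheta, where the factor r is the polar Jacobian.
    """
    dr = r_max / n_r
    dtheta = 2 * math.pi / n_theta
    total = 0.0
    for i in range(n_r):
        r = (i + 0.5) * dr  # midpoint of the i-th radial cell
        for j in range(n_theta):
            theta = (j + 0.5) * dtheta  # midpoint of the j-th angular cell
            x, y = r * math.cos(theta), r * math.sin(theta)
            total += f(x, y) * r * dr * dtheta
    return total


# Hypothetical test integrand: f(x, y) = x^2 + y^2 on the unit disk.
approx = polar_double_integral(lambda x, y: x**2 + y**2, 1.0)
exact = math.pi / 2
print(approx, exact)
```

Dropping the factor `r` from the weight makes the sum converge to $\frac{2\pi}{3}$ instead, which is a quick way to see why the Jacobian is needed.
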
#### Triple Integrals

- **Definition:** $\iiint_E f(x,y,z) dV = \lim_{l,m,n \to \infty} \sum_{i=1}^l \sum_{j=1}^m \sum_{k=1}^n f(x_i^*, y_j^*, z_k^*) \Delta V$.
- **Fubini's Theorem for Triple Integrals:** For a rectangular box $B = [a,b] \times [c,d] \times [r,s]$, $\iiint_B f(x,y,z) dV = \int_r^s \int_c^d \int_a^b f(x,y,z) dx dy dz$ (and 5 other orders).
- **Triple Integrals over General Regions:** Similar to double integrals, define regions as Type 1, 2, or 3 based on projection onto coordinate planes.
- **Volume:** $V(E) = \iiint_E 1 dV$.
- **Cylindrical Coordinates:** $x = r \cos\theta$, $y = r \sin\theta$, $z = z$.
  - $dV = r dz dr d\theta$.
- **Spherical Coordinates:** $x = \rho \sin\phi \cos\theta$, $y = \rho \sin\phi \sin\theta$, $z = \rho \cos\phi$.
  - $\rho^2 = x^2+y^2+z^2$, $0 \le \phi \le \pi$, $0 \le \theta \le 2\pi$.
  - $dV = \rho^2 \sin\phi d\rho d\phi d\theta$.
  - The Jacobian for spherical coordinates is $\rho^2 \sin\phi$.

#### Change of Variables in Multiple Integrals

- **Jacobian:** If $x=g(u,v)$ and $y=h(u,v)$, then the Jacobian of the transformation is $J = \frac{\partial(x,y)}{\partial(u,v)} = \begin{vmatrix} \frac{\partial x}{\partial u} & \frac{\partial x}{\partial v} \\ \frac{\partial y}{\partial u} & \frac{\partial y}{\partial v} \end{vmatrix}$.
- **Formula:** $\iint_R f(x,y) dA = \iint_S f(g(u,v), h(u,v)) \left|\frac{\partial(x,y)}{\partial(u,v)}\right| du dv$.

### Vector Calculus

#### Vector Fields

- **Definition:** A vector field $\vec{F}$ on $\mathbb{R}^2$ (or $\mathbb{R}^3$) is a function that assigns to each point $(x,y)$ a two-dimensional vector $\vec{F}(x,y)$ (or to $(x,y,z)$ a three-dimensional vector $\vec{F}(x,y,z)$).
- **Conservative Vector Field:** A vector field $\vec{F}$ is conservative if it is the gradient of some scalar function $f$, i.e., $\vec{F} = \nabla f$. The function $f$ is called a potential function for $\vec{F}$.
- **Test for Conservative Field:** If $\vec{F} = P\mathbf{i} + Q\mathbf{j}$ is a vector field on an open, simply-connected domain, and $P, Q$ have continuous first-order partial derivatives, then $\vec{F}$ is conservative if and only if $\frac{\partial P}{\partial y} = \frac{\partial Q}{\partial x}$.
  - In 3D, under the analogous hypotheses, if $\vec{F} = P\mathbf{i} + Q\mathbf{j} + R\mathbf{k}$, then $\vec{F}$ is conservative if and only if $\text{curl } \vec{F} = \vec{0}$ (i.e., $\frac{\partial R}{\partial y} = \frac{\partial Q}{\partial z}$, $\frac{\partial P}{\partial z} = \frac{\partial R}{\partial x}$, $\frac{\partial Q}{\partial x} = \frac{\partial P}{\partial y}$).

#### Line Integrals

- **Line Integral of a Scalar Function:** $\int_C f(x,y,z) ds = \int_a^b f(x(t), y(t), z(t)) \sqrt{\left(\frac{dx}{dt}\right)^2 + \left(\frac{dy}{dt}\right)^2 + \left(\frac{dz}{dt}\right)^2} dt$. Used for the mass of a wire, etc.
- **Line Integral of a Vector Field (Work):** $\int_C \vec{F} \cdot d\vec{r} = \int_a^b \vec{F}(\vec{r}(t)) \cdot \vec{r}'(t) dt$.
- **Fundamental Theorem for Line Integrals:** If $\vec{F} = \nabla f$ is a conservative vector field and $C$ is a smooth curve given by $\vec{r}(t)$, $a \le t \le b$, then $\int_C \vec{F} \cdot d\vec{r} = f(\vec{r}(b)) - f(\vec{r}(a))$.
  - **Proof:** Let $\vec{r}(t) = \langle x(t), y(t), z(t) \rangle$ for $a \le t \le b$. $\int_C \nabla f \cdot d\vec{r} = \int_a^b \nabla f(\vec{r}(t)) \cdot \vec{r}'(t) dt$ $= \int_a^b \left( \frac{\partial f}{\partial x}\frac{dx}{dt} + \frac{\partial f}{\partial y}\frac{dy}{dt} + \frac{\partial f}{\partial z}\frac{dz}{dt} \right) dt$. By the Chain Rule, the integrand is $\frac{d}{dt} f(x(t), y(t), z(t))$. So, $\int_a^b \frac{d}{dt} f(\vec{r}(t)) dt = f(\vec{r}(b)) - f(\vec{r}(a))$ by FTC Part 2.
- **Path Independence:** If $\vec{F}$ is conservative, the line integral $\int_C \vec{F} \cdot d\vec{r}$ is path independent.
- **Closed Loop:** If $\vec{F}$ is conservative and $C$ is a closed curve, $\oint_C \vec{F} \cdot d\vec{r} = 0$.
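
Path independence can be illustrated with a small numerical experiment. The sketch below rests on assumptions made for this example: the potential $f(x,y) = x^2 y$ (so $\vec{F} = \nabla f = \langle 2xy, x^2 \rangle$), the two paths from $(0,0)$ to $(1,1)$, and the helper name `line_integral` are all hypothetical. By the Fundamental Theorem for Line Integrals, both integrals should be close to $f(1,1) - f(0,0) = 1$.

```python
def line_integral(F, r, r_prime, a=0.0, b=1.0, n=2000):
    """Midpoint-rule approximation of the integral of F(r(t)) . r'(t) over [a, b]."""
    h = (b - a) / n
    total = 0.0
    for k in range(n):
        t = a + (k + 0.5) * h
        x, y = r(t)
        Fx, Fy = F(x, y)
        dx, dy = r_prime(t)
        total += (Fx * dx + Fy * dy) * h
    return total


def F(x, y):
    """Gradient of the assumed potential f(x, y) = x^2 * y."""
    return (2 * x * y, x**2)


# Path 1: straight segment r(t) = (t, t), with r'(t) = (1, 1).
straight = line_integral(F, lambda t: (t, t), lambda t: (1.0, 1.0))

# Path 2: parabola r(t) = (t, t^2), with r'(t) = (1, 2t).
parabola = line_integral(F, lambda t: (t, t * t), lambda t: (1.0, 2 * t))

# Potential difference f(1, 1) - f(0, 0).
potential_difference = 1.0**2 * 1.0 - 0.0

print(straight, parabola, potential_difference)
```

Swapping in a non-conservative field such as $\langle -y, x \rangle$ makes the two path integrals disagree (0 along the segment, $\frac{1}{3}$ along the parabola), which shows that the agreement above comes from $\vec{F}$ being a gradient.
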
#### Green's Theorem

- **Statement:** Let $C$ be a positively oriented, piecewise-smooth, simple closed curve in the plane and let $D$ be the region bounded by $C$. If $P$ and $Q$ have continuous partial derivatives on an open region that contains $D$, then $\oint_C P \, dx + Q \, dy = \iint_D \left(\frac{\partial Q}{\partial x} - \frac{\partial P}{\partial y}\right) dA$.
- **Proof (for a simple region):** Assume $D$ is a Type I region, $D = \{(x,y) | a \le x \le b, g_1(x) \le y \le g_2(x)\}$. $\iint_D -\frac{\partial P}{\partial y} dA = \int_a^b \int_{g_1(x)}^{g_2(x)} -\frac{\partial P}{\partial y} dy dx = \int_a^b [-P(x,y)]_{y=g_1(x)}^{y=g_2(x)} dx$ $= \int_a^b [-P(x,g_2(x)) + P(x,g_1(x))] dx = \int_a^b P(x,g_1(x)) dx - \int_a^b P(x,g_2(x)) dx$. Now consider $\oint_C P \, dx$. The curve $C$ consists of $C_1: y=g_1(x)$ from $a$ to $b$, $C_2$ (vertical line segment at $x=b$), $C_3: y=g_2(x)$ from $b$ to $a$, and $C_4$ (vertical line segment at $x=a$). $\int_{C_1} P \, dx = \int_a^b P(x,g_1(x)) dx$. $\int_{C_3} P \, dx = \int_b^a P(x,g_2(x)) dx = -\int_a^b P(x,g_2(x)) dx$. On $C_2$ and $C_4$, $dx=0$, so $\int_{C_2} P \, dx = 0$ and $\int_{C_4} P \, dx = 0$. Thus, $\oint_C P \, dx = \int_a^b P(x,g_1(x)) dx - \int_a^b P(x,g_2(x)) dx$, so $\oint_C P \, dx = \iint_D -\frac{\partial P}{\partial y} dA$. A similar argument, treating $D$ as a Type II region, shows that $\iint_D \frac{\partial Q}{\partial x} dA = \oint_C Q \, dy$. Summing these two results gives Green's Theorem.

#### Curl and Divergence

- **Curl:** For $\vec{F} = P\mathbf{i} + Q\mathbf{j} + R\mathbf{k}$, $\text{curl } \vec{F} = \nabla \times \vec{F} = \begin{vmatrix} \mathbf{i} & \mathbf{j} & \mathbf{k} \\ \frac{\partial}{\partial x} & \frac{\partial}{\partial y} & \frac{\partial}{\partial z} \\ P & Q & R \end{vmatrix}$.
  - Measures the tendency of a fluid to rotate. If $\text{curl } \vec{F} = \vec{0}$, $\vec{F}$ is irrotational.
- **Divergence:** For $\vec{F} = P\mathbf{i} + Q\mathbf{j} + R\mathbf{k}$, $\text{div } \vec{F} = \nabla \cdot \vec{F} = \frac{\partial P}{\partial x} + \frac{\partial Q}{\partial y} + \frac{\partial R}{\partial z}$.
  - Measures the tendency of a fluid to expand or compress. If $\text{div } \vec{F} = 0$, $\vec{F}$ is incompressible.

#### Surface Integrals

- **Surface Integral of a Scalar Function:** $\iint_S f(x,y,z) dS = \iint_D f(\vec{r}(u,v)) |\vec{r}_u \times \vec{r}_v| dA$.
  - For a surface $z = g(x,y)$ over a region $D$ in the $xy$-plane, $dS = \sqrt{1 + (\frac{\partial z}{\partial x})^2 + (\frac{\partial z}{\partial y})^2} dA$.
- **Surface Integral of a Vector Field (Flux):** $\iint_S \vec{F} \cdot d\vec{S} = \iint_S \vec{F} \cdot \vec{n} dS$.
  - If $S$ is given by $\vec{r}(u,v)$ over a parameter region $D$, then $\iint_S \vec{F} \cdot d\vec{S} = \iint_D \vec{F}(\vec{r}(u,v)) \cdot (\vec{r}_u \times \vec{r}_v) dA$ (when $\vec{r}_u \times \vec{r}_v$ points in the direction of the chosen orientation, e.g., the upward normal).
  - If $S$ is given by $z = g(x,y)$ with upward normal, then $\iint_S \vec{F} \cdot d\vec{S} = \iint_D (-P\frac{\partial z}{\partial x} - Q\frac{\partial z}{\partial y} + R) dA$.

#### Stokes' Theorem

- **Statement:** Let $S$ be an oriented piecewise-smooth surface that is bounded by a simple, closed, piecewise-smooth boundary curve $C$ with positive orientation. Let $\vec{F}$ be a vector field whose components have continuous partial derivatives on an open region in $\mathbb{R}^3$ that contains $S$. Then $\oint_C \vec{F} \cdot d\vec{r} = \iint_S \text{curl } \vec{F} \cdot d\vec{S}$.
- **Interpretation:** Relates a line integral around a boundary to a surface integral over the surface it bounds. Generalizes Green's Theorem to 3D.
- **Proof (Beyond scope of typical cheatsheet, uses generalized Green's theorem and parametrization).**

#### Divergence Theorem (Gauss's Theorem)

- **Statement:** Let $E$ be a simple solid region whose boundary surface $S$ is a closed, oriented piecewise-smooth surface. Let $\vec{n}$ be the outward unit normal vector of $S$.
Let $\vec{F}$ be a vector field whose components have continuous partial derivatives on an open region that contains $E$. Then $\iint_S \vec{F} \cdot d\vec{S} = \iiint_E \text{div } \vec{F} dV$.
- **Interpretation:** Relates the flux of a vector field across a closed surface to the triple integral of the divergence of the field over the volume it encloses.
- **Proof (Beyond scope of typical cheatsheet, uses generalized FTC and properties of triple integrals).**
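
Although the proof is omitted, the Divergence Theorem can be checked numerically on a simple case. The sketch below is an illustration under stated assumptions: the field $\vec{F} = \langle x^3, y^3, z^3 \rangle$, the unit ball as $E$, the midpoint rule, and all function names are choices made for this example. Both sides should be close to $\frac{12\pi}{5}$, since $\text{div } \vec{F} = 3\rho^2$ and $\iiint_E 3\rho^2 \cdot \rho^2 \sin\phi \, d\rho \, d\phi \, d\theta = \frac{12\pi}{5}$.

```python
import math


def F(x, y, z):
    """Assumed test field F = <x^3, y^3, z^3>."""
    return (x**3, y**3, z**3)


def div_F(x, y, z):
    """Divergence of the test field: 3x^2 + 3y^2 + 3z^2."""
    return 3 * (x**2 + y**2 + z**2)


def spherical_point(rho, phi, theta):
    """Convert spherical coordinates to Cartesian coordinates."""
    return (
        rho * math.sin(phi) * math.cos(theta),
        rho * math.sin(phi) * math.sin(theta),
        rho * math.cos(phi),
    )


def flux_through_unit_sphere(n=200):
    """Midpoint sum of F . (r_phi x r_theta) over the unit sphere.

    For the unit sphere, r_phi x r_theta = sin(phi) * <x, y, z>, which
    points outward.
    """
    dphi, dtheta = math.pi / n, 2 * math.pi / n
    total = 0.0
    for i in range(n):
        phi = (i + 0.5) * dphi
        for j in range(n):
            theta = (j + 0.5) * dtheta
            x, y, z = spherical_point(1.0, phi, theta)
            Fx, Fy, Fz = F(x, y, z)
            total += (Fx * x + Fy * y + Fz * z) * math.sin(phi) * dphi * dtheta
    return total


def divergence_over_unit_ball(n=60):
    """Midpoint sum of div F over the unit ball, with dV = rho^2 sin(phi)."""
    drho, dphi, dtheta = 1.0 / n, math.pi / n, 2 * math.pi / n
    total = 0.0
    for i in range(n):
        rho = (i + 0.5) * drho
        for j in range(n):
            phi = (j + 0.5) * dphi
            jacobian = rho**2 * math.sin(phi)
            for k in range(n):
                theta = (k + 0.5) * dtheta
                x, y, z = spherical_point(rho, phi, theta)
                total += div_F(x, y, z) * jacobian * drho * dphi * dtheta
    return total


flux = flux_through_unit_sphere()
volume_integral = divergence_over_unit_ball()
exact = 12 * math.pi / 5
print(flux, volume_integral, exact)
```

The two approximations agree only up to discretization error, so the comparison should use a tolerance rather than exact equality.
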