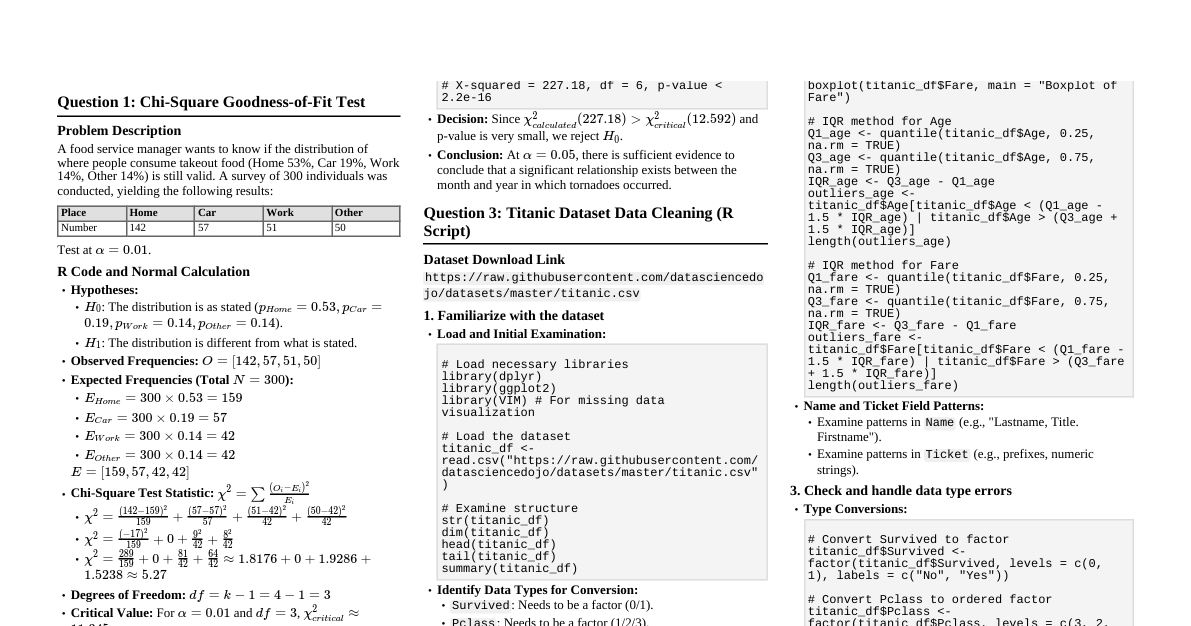

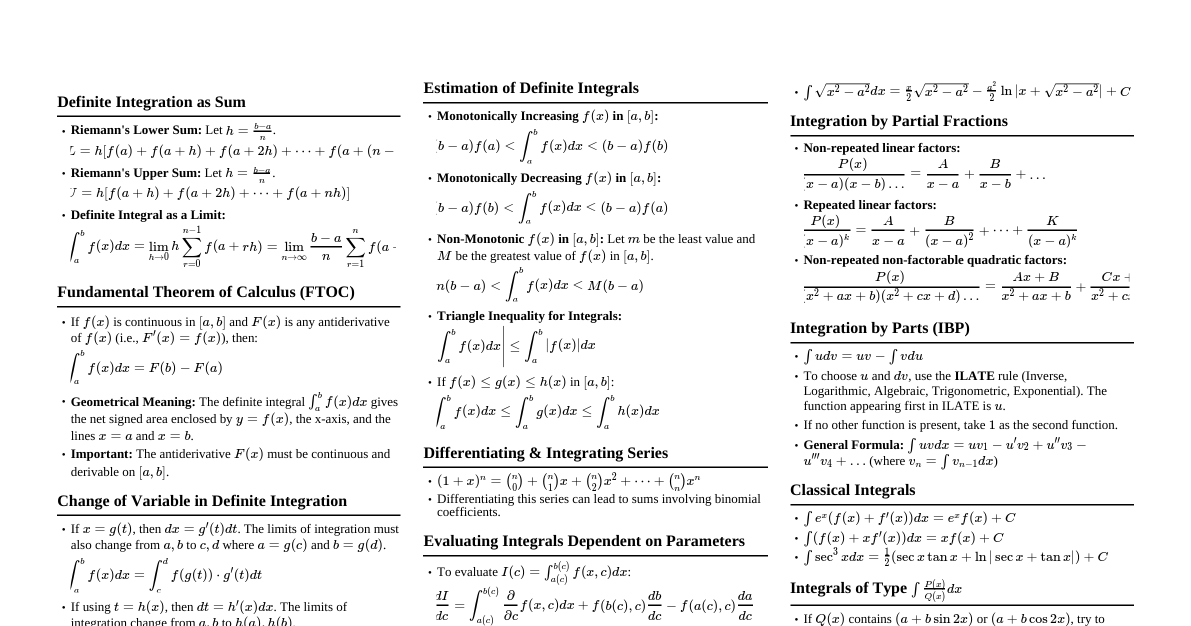

### Hypothesis Testing

Hypothesis testing is a statistical method for making inferences about a population parameter from sample data.

#### Null and Alternative Hypotheses

- **Null Hypothesis ($H_0$):** A statement of no effect or no difference, often representing the status quo.
- **Alternative Hypothesis ($H_1$ or $H_a$):** A statement that contradicts the null hypothesis, suggesting an effect or a difference.

#### Types of Errors

- **Type I Error (probability $\alpha$):** Rejecting $H_0$ when it is actually true (false positive).
- **Type II Error (probability $\beta$):** Failing to reject $H_0$ when it is false (false negative).
- **Power of a Test ($1 - \beta$):** The probability of correctly rejecting $H_0$ when it is false.

#### Likelihood Ratio Test (LRT)

The LRT is a general method for constructing hypothesis tests. It is based on the ratio of the maximum likelihood under the null hypothesis to the maximum likelihood over the full parameter space.

Let $L(\theta | x)$ be the likelihood function. The likelihood ratio is given by:

$$\Lambda(x) = \frac{\sup_{\theta \in \Theta_0} L(\theta | x)}{\sup_{\theta \in \Theta} L(\theta | x)}$$

where $\Theta_0$ is the parameter space under $H_0$ and $\Theta$ is the full parameter space. We reject $H_0$ if $\Lambda(x) \le c$ for some constant $c \in [0, 1]$.

### LRT for Two-Sample Problems

#### Independent Samples from Normal Distributions

Let $X_1, \dots, X_{n_1}$ be i.i.d. from $N(\mu_1, \sigma_1^2)$ and $Y_1, \dots, Y_{n_2}$ be i.i.d. from $N(\mu_2, \sigma_2^2)$, with the two samples independent of each other.

**Case I: $\sigma_1 = \sigma_2 = \sigma$ (known)**

- **Hypotheses:** $H_0: \mu_1 = \mu_2$ versus $H_1: \mu_1 \neq \mu_2$
- **LRT Decision Rule:** Reject $H_0$ if $|\bar{X} - \bar{Y}| > k$ for some constant $k$.
- **Power Function:** The probability of rejecting $H_0$ as a function of the true parameter values.
- **p-value:** The probability of observing data as extreme as, or more extreme than, the observed sample, assuming $H_0$ is true.
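
The Case I decision rule, power function, and p-value can be made concrete with a short sketch. It assumes the usual standardization: under $H_0$, $(\bar{X} - \bar{Y}) / (\sigma\sqrt{1/n_1 + 1/n_2})$ is standard normal, so a level $\alpha$ test takes $k = z_{\alpha/2}\,\sigma\sqrt{1/n_1 + 1/n_2}$. The function names and the sample data are hypothetical and chosen only for illustration.

```python
from math import sqrt
from statistics import NormalDist, fmean


def two_sample_z_test(x, y, sigma, alpha=0.05):
    """Two-sided test of H0: mu1 == mu2 with a known common sigma (Case I)."""
    n1, n2 = len(x), len(y)
    se = sigma * sqrt(1.0 / n1 + 1.0 / n2)  # standard deviation of X-bar minus Y-bar
    z = (fmean(x) - fmean(y)) / se
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    p_value = 2 * (1 - std_normal.cdf(abs(z)))
    # k is the threshold on |X-bar - Y-bar| from the decision rule
    return {"z": z, "k": z_crit * se, "p_value": p_value, "reject": abs(z) > z_crit}


def power(delta, sigma, n1, n2, alpha=0.05):
    """Probability of rejecting H0 when the true difference mu1 - mu2 is delta."""
    std_normal = NormalDist()
    se = sigma * sqrt(1.0 / n1 + 1.0 / n2)
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    shift = delta / se  # mean of the standardized statistic under the alternative
    return (1 - std_normal.cdf(z_crit - shift)) + std_normal.cdf(-z_crit - shift)


# Hypothetical data, for illustration only.
x = [5.1, 4.9, 5.6, 5.8, 6.0]
y = [4.2, 4.8, 4.4, 5.0, 4.1]
print(two_sample_z_test(x, y, sigma=0.5))
print(power(delta=0.5, sigma=0.5, n1=5, n2=5))
```

At `delta = 0` the power function returns the level $\alpha$, and it increases as $|\delta|$ grows, which is a quick sanity check on any implementation of this test.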

**Case II: $\sigma_1 \neq \sigma_2$ (known)**

- **Hypotheses:** $H_0: \mu_1 = \mu_2$ versus $H_1: \mu_1 \neq \mu_2$
- **LRT Decision Rule:** Reject $H_0$ if $|\bar{X} - \bar{Y}| > k$ for some constant $k$.

**Case III: $\sigma_1 = \sigma_2 = \sigma$ (unknown)**

- **Hypotheses:** $H_0: \mu_1 = \mu_2$ versus $H_1: \mu_1 \neq \mu_2$
- **LRT based on pooled variance:** The test statistic uses the pooled sample variance and follows a t-distribution with $n_1 + n_2 - 2$ degrees of freedom under $H_0$.

**Case IV: $\sigma_1 \neq \sigma_2$ (unknown)**

- **Hypotheses:** $H_0: \mu_1 = \mu_2$ versus $H_1: \mu_1 \neq \mu_2$
- This is the Behrens-Fisher problem, often approximated using Welch's t-test.

#### Paired Samples from Normal Distributions

Let $(X_i, Y_i)$, $i = 1, \dots, n$, be i.i.d. pairs from a bivariate normal distribution with marginals $N(\mu_1, \sigma_1^2)$ and $N(\mu_2, \sigma_2^2)$ and $\mathrm{cor}(X_i, Y_i) = \rho$.

- **Hypotheses:** $H_0: \mu_1 - \mu_2 = 0$ versus $H_1: \mu_1 - \mu_2 \neq 0$
- **LRT Test Statistic:** Based on the differences $D_i = X_i - Y_i$. Let $\bar{D}$ and $S_D$ be the sample mean and standard deviation of the differences.
- **Decision Rule:** Reject $H_0$ if $\left| \frac{\bar{D}}{S_D/\sqrt{n}} \right| > t_{n-1, \alpha/2}$.

### Large Sample Tests

#### Central Limit Theorem (CLT)

Let $X_1, \dots, X_n$ be a random sample from a population with mean $\mu$ and finite variance $\sigma^2 > 0$. Then, as $n \to \infty$, the limiting distribution of

$$Z_n = \frac{\bar{X} - \mu}{\sigma/\sqrt{n}}$$

is the standard normal distribution $N(0,1)$. That is, for every $z$,

$$\lim_{n \to \infty} P(Z_n \le z) = \int_{-\infty}^{z} \frac{1}{\sqrt{2\pi}} e^{-t^2/2} dt$$

#### Slutsky's Theorem

If $X_n \xrightarrow{d} X$ and $Y_n \xrightarrow{p} c$ (where $c$ is a constant), then:

1. $X_n + Y_n \xrightarrow{d} X + c$
2. $X_n Y_n \xrightarrow{d} cX$ (if $c = 0$, then $X_n Y_n \xrightarrow{p} 0$)
3. $X_n / Y_n \xrightarrow{d} X / c$ (if $c \neq 0$)

#### Wald Test

The Wald test is used for testing hypotheses about parameters whose estimators are asymptotically normally distributed.

- **Hypotheses:** $H_0: \theta = \theta_0$ versus $H_1: \theta \neq \theta_0$
- **Estimator:** Let $\hat{\theta}$ be an estimator of $\theta$ such that $\sqrt{n}(\hat{\theta} - \theta) \xrightarrow{d} N(0, v(\theta))$.
- **Wald Test Statistic:**

  $$T_n = \frac{\hat{\theta} - \theta_0}{\sqrt{v(\theta_0)/n}}$$

- If the asymptotic variance is unknown, a consistent estimator $\hat{v}(\hat{\theta})$ can be plugged in:

  $$T_n = \frac{\hat{\theta} - \theta_0}{\sqrt{\hat{v}(\hat{\theta})/n}}$$

- Under $H_0$, $T_n \xrightarrow{d} N(0,1)$.
- **Rejection Rule:** Reject $H_0$ if $|T_n| \ge z_{\alpha/2}$.

#### Wald Test for Bernoulli Proportion

Let $X_1, \dots, X_n$ be a random sample from a Bernoulli($p$) population.

- **Hypotheses:** $H_0: p = p_0$ versus $H_1: p \neq p_0$ or $H_1: p > p_0$
- **Estimator:** $\hat{p} = \bar{X}$, with asymptotic variance $v(p) = p(1-p)$
- **Wald Test Statistic:**

  $$Z = \frac{\hat{p} - p_0}{\sqrt{p_0(1-p_0)/n}}$$

- **Rejection Rule:** For $H_1: p \neq p_0$, reject if $|Z| \ge z_{\alpha/2}$. For $H_1: p > p_0$, reject if $Z \ge z_{\alpha}$.

#### Chi-Squared Test for Multinomial Distribution

Let $X_1, \dots, X_k$ be independent random variables, with $X_i \sim Bin(n_i, p_i)$. As every $n_i \to \infty$, the statistic

$$\sum_{i=1}^k \frac{(X_i - n_i p_i)^2}{n_i p_i (1-p_i)} \xrightarrow{d} \chi^2(k)$$

This is used to test $H_0: p_1 = p_2 = \dots = p_k = p$ against all alternatives.

**Case 1: $p$ is known**

$$T = \sum_{i=1}^k \frac{(X_i - n_i p)^2}{n_i p (1-p)}$$

Under $H_0$, $T \xrightarrow{d} \chi^2(k)$, since $T$ is the statistic above with every $p_i = p$ and nothing is estimated. Reject $H_0$ if $T \ge \chi^2_{k, \alpha}$.

**Case 2: $p$ is unknown**

Estimate $p$ by $\hat{p} = \frac{\sum X_i}{\sum n_i}$.

$$U = \sum_{i=1}^k \frac{(X_i - n_i \hat{p})^2}{n_i \hat{p} (1-\hat{p})}$$

Under $H_0$, $U \xrightarrow{d} \chi^2(k-1)$; one degree of freedom is lost by estimating $p$. Reject $H_0$ if $U \ge \chi^2_{k-1, \alpha}$.

### Chi-squared Goodness of Fit Test

This test checks whether observed frequencies match the frequencies expected under a specified distribution.

- **Hypotheses:** $H_0: X \sim F$ (specified distribution) versus $H_1: X \not\sim F$.
- Divide the data into $k$ disjoint intervals $A_1, \dots, A_k$.
- Let $Y_i$ be the observed number of data points in $A_i$.
- Let $p_i = P(X \in A_i)$ under $H_0$.
- Let $E_i = n p_i$ be the expected number of observations in $A_i$, where $n$ is the sample size.

#### Test Statistic

$$U = \sum_{i=1}^k \frac{(Y_i - E_i)^2}{E_i}$$

#### Degrees of Freedom

- If $F$ is completely specified (no unknown parameters), $U \xrightarrow{d} \chi^2(k-1)$.
- If $F$ involves $r$ unknown parameters estimated from the data (e.g., mean and variance of a normal distribution), $U \xrightarrow{d} \chi^2(k-r-1)$.

#### Rules of Thumb

- It is desirable to have $E_i \ge 5$ for each interval. If any $E_i < 5$, adjacent intervals are commonly merged until the condition holds.

### Interval Estimation

An interval estimate is a range of values used to estimate a population parameter.

#### Random Intervals

- A family of subsets $S(x) \subseteq \Theta$, indexed by the sample $x$, is called a family of random sets.
- If $S(x)$ is an interval $[L(x), U(x)]$, it is called a random interval, where $L(x)$ and $U(x)$ are functions of the sample $x$.
- $L(x)$ and $U(x)$ are the lower and upper bounds of the interval estimator.

#### Coverage Probability

The coverage probability of an interval estimator $[L(X), U(X)]$ for a parameter $\theta$ is the probability that the random interval covers the true parameter value $\theta$:

$$P_{\theta}(\theta \in [L(X), U(X)])$$

This probability can vary with $\theta$.

#### Confidence Coefficient

The confidence coefficient of an interval estimator is the infimum of its coverage probabilities over all possible values of $\theta$:

$$\inf_{\theta \in \Theta} P_{\theta}(\theta \in [L(X), U(X)])$$

An interval estimator with a confidence coefficient of $1-\alpha$ is called a **$1-\alpha$ confidence interval**.

#### Methods of Finding Interval Estimators

**1. Inverting a Test Statistic**

- There is a strong correspondence between hypothesis testing and interval estimation.
- If $A(\theta_0)$ is the acceptance region for a level $\alpha$ test of $H_0: \theta = \theta_0$, then the set

  $$C(x) = \{\theta_0 : x \in A(\theta_0)\}$$

  is a $1-\alpha$ confidence set.
- Conversely, if $C(X)$ is a $1-\alpha$ confidence set, then $A(\theta_0) = \{x : \theta_0 \in C(x)\}$ is the acceptance region for a level $\alpha$ test of $H_0: \theta = \theta_0$.
- **Example (Wald Confidence Interval):** By inverting the Wald test for $H_0: \theta = \theta_0$ vs. $H_1: \theta \neq \theta_0$, the $1-\alpha$ Wald confidence interval is:

  $$\hat{\theta} \pm z_{\alpha/2} \sqrt{\frac{\hat{v}(\hat{\theta})}{n}}$$

**2. Pivotal Methods**

- A random variable $Q = Q(X, \theta)$ is a **pivotal quantity (or pivot)** if its distribution does not depend on $\theta$.
- **Procedure:**
  1. Find a pivot $Q(X, \theta)$.
  2. Find constants $a$ and $b$ such that $P(a \le Q(X, \theta) \le b) = 1-\alpha$.
  3. Invert the inequalities to solve for $\theta$ in terms of $X$, $a$, and $b$, to obtain the confidence interval $[L(X), U(X)]$.
- If $Q(x, \theta)$ is a monotone function of $\theta$:
  - If increasing in $\theta$: $L(x,a) \le \theta \le U(x,b)$.
  - If decreasing in $\theta$: $L(x,b) \le \theta \le U(x,a)$.

#### Large Sample Pivot

- A pivot $Q_n(X, \theta)$ is a large sample pivot if its asymptotic distribution is free of all unknown parameters.
- If $Q_n(X, \theta) \xrightarrow{d} N(0,1)$, then we can find $a, b$ such that $P(a \le Q_n(X, \theta) \le b) \approx 1-\alpha$.
- For example, using the Wald test statistic as a large sample pivot:

  $$Q_n(X, \theta) = \frac{\hat{\theta} - \theta}{\sqrt{\hat{v}(\hat{\theta})/n}} \xrightarrow{d} N(0,1)$$

  Then $P\left(-z_{\alpha/2} \le \frac{\hat{\theta} - \theta}{\sqrt{\hat{v}(\hat{\theta})/n}} \le z_{\alpha/2}\right) \approx 1-\alpha$.
Rearranging gives the approximate $1-\alpha$ confidence interval:

$$\hat{\theta} - z_{\alpha/2} \sqrt{\frac{\hat{v}(\hat{\theta})}{n}} \le \theta \le \hat{\theta} + z_{\alpha/2} \sqrt{\frac{\hat{v}(\hat{\theta})}{n}}$$
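
As an illustration of this interval, the sketch below applies it to a Bernoulli proportion, assuming $\hat{\theta} = \hat{p}$ and the plug-in variance estimate $\hat{v}(\hat{p}) = \hat{p}(1-\hat{p})$. The function name `wald_interval` and the counts in the usage line are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def wald_interval(successes, n, alpha=0.05):
    """Approximate 1 - alpha Wald confidence interval for a Bernoulli proportion."""
    p_hat = successes / n                      # theta-hat = sample proportion
    z = NormalDist().inv_cdf(1 - alpha / 2)    # z_{alpha/2}
    half_width = z * sqrt(p_hat * (1 - p_hat) / n)  # z * sqrt(v-hat / n)
    return p_hat - half_width, p_hat + half_width


# Hypothetical counts: 42 successes in 120 trials.
lower, upper = wald_interval(42, 120)
print(f"95% Wald interval: [{lower:.3f}, {upper:.3f}]")
```

Because the interval relies on the normal approximation, its actual coverage can differ from $1-\alpha$ for small $n$ or for $p$ near 0 or 1. In the extreme case $\hat{p} \in \{0, 1\}$ the estimated variance is zero and the interval collapses to a single point.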