Discrete Mathematics

Cheatsheet Content

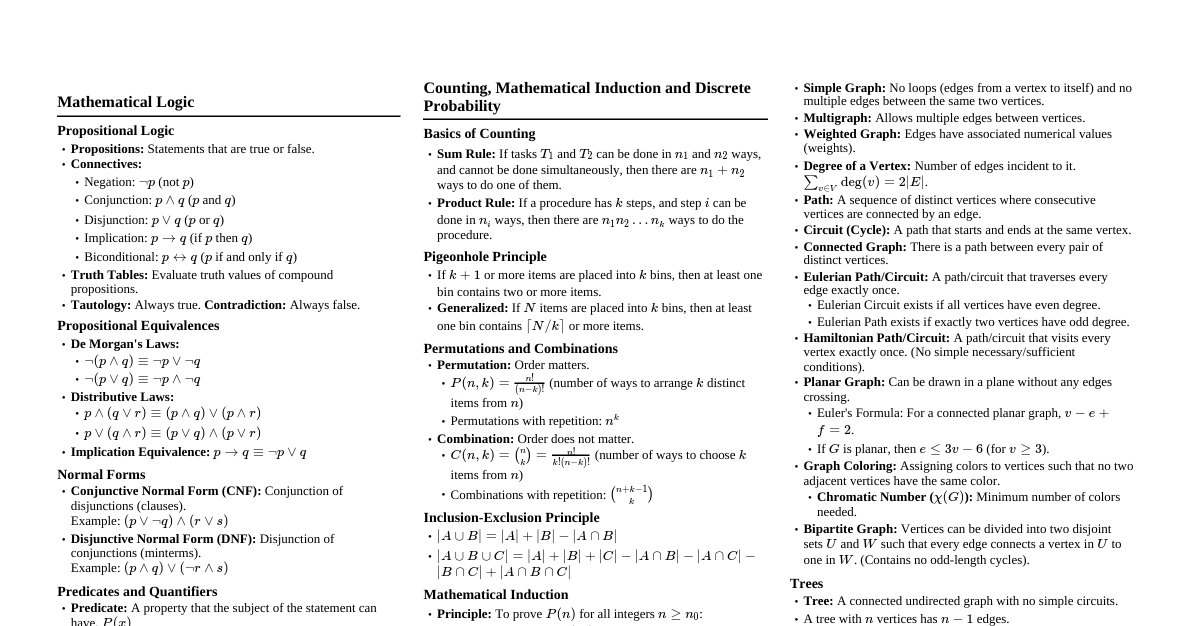

### Pigeonhole Principle The Pigeonhole Principle is a fundamental concept in discrete mathematics. #### Basic Principle If $f: X \to Y$ and $|X| > |Y|$, then there exist $x_1, x_2 \in X$ such that $x_1 \neq x_2$ and $f(x_1) = f(x_2)$. - **Analogy:** If there are more pigeons than pigeonholes, and every pigeon goes into a pigeonhole, then at least one pigeonhole must contain more than one pigeon. - **Example:** In any group of 8 people, some two of them were born on the same day of the week (since there are 7 days in a week). #### Extended Pigeonhole Principle For any finite sets $X$ and $Y$ and any positive integer $k$ such that $|X| > k \cdot |Y|$, if $f: X \to Y$, then there are at least $k+1$ distinct members $x_1, \dots, x_{k+1} \in X$ such that $f(x_1) = \dots = f(x_{k+1})$. - The basic principle is the case where $k=1$. - **Example:** If 15 pigeons are placed in 7 pigeonholes, at least one pigeonhole must contain $\lceil 15/7 \rceil = 3$ pigeons. #### Terminology - **Set:** A collection of distinct things (elements or members). - $P = \{\text{Annie, Batul, Charlie, Deja, Evelyn, Fawwaz, Gregoire, Hoon}\}$ - $D = \{\text{Sunday, Monday, Tuesday, Wednesday, Thursday, Friday, Saturday}\}$ - **Cardinality ($|X|$):** The number of members in a finite set $X$. - $|P|=8$, $|D|=7$. - **Function ($f: X \to Y$):** A rule that associates each member of $X$ with exactly one member of $Y$. $f(x)$ is the value of $f$ on argument $x$. ### Proof Techniques #### Formal Reasoning - Proofs require formal reasoning, starting with true statements and connecting them with logical inferences. - Mathematical language aims for precision, generality, and clarity, avoiding the imprecision of ordinary language. #### Types of Proofs 1. **Direct Proof:** - Assumes the proposition is true and builds on that assumption to establish the conclusion. - **Example (Odd Integers Theorem):** Every odd integer is equal to the difference between the squares of two integers. - An odd integer can be written as $2k+1$ for some integer $k$. - We want to find $m, n$ such that $2k+1 = m^2 - n^2$. - Observe that $(k+1)^2 - k^2 = (k^2 + 2k + 1) - k^2 = 2k+1$. - So, we can choose $m = k+1$ and $n = k$. 2. **Constructive Proof:** - Not only demonstrates that a thing exists but also shows how to find it (provides an algorithm). - The proof of the Odd Integers Theorem is constructive as it shows how to find $m$ and $n$ for any given odd integer. 3. **Nonconstructive Proof:** - Shows that something exists without showing how to find it. - The Pigeonhole Principle itself is nonconstructive; it asserts the existence of a pigeonhole with multiple pigeons but doesn't identify which one. 4. **Proof by Contradiction:** - Assumes the negation of the statement to be proven. - Derives a contradiction from this assumption, thereby proving the original statement must be true. - **Example ($\sqrt{2}$ is irrational):** - Assume $\sqrt{2}$ is rational, so $\sqrt{2} = a/b$ for integers $a, b$ with no common factors (at least one is odd). - Squaring both sides gives $2 = a^2/b^2 \implies 2b^2 = a^2$. - This means $a^2$ is even, so $a$ must be even. Let $a=2k$. - Substituting $a=2k$ into the equation gives $2b^2 = (2k)^2 \implies 2b^2 = 4k^2 \implies b^2 = 2k^2$. - This means $b^2$ is even, so $b$ must be even. - This contradicts the initial assumption that $a$ and $b$ have no common factors (they are both even). - Therefore, $\sqrt{2}$ must be irrational. 5. **Case Analysis:** - Breaks a general statement into restricted statements about several subclasses. - Proves each case individually to prove the general statement. - **Example (Ramsey Theory):** In any group of 6 people, there are either 3 who all know each other, or 3 who are all strangers to each other. - Pick an arbitrary person $X$. Of the remaining 5 people, $X$ either knows $\geq 3$ people or knows $\leq 2$ people (meaning $X$ does not know $\geq 3$ people). - **Case 1:** $X$ knows $\geq 3$ people. - **Case 2:** $X$ does not know $\geq 3$ people. - Each case is further broken down. 6. **Symmetry in Arguments:** - Can simplify proofs by recognizing when different cases are essentially identical, just with roles reversed. - Often makes arguments shorter and more revealing. #### Logical Equivalence - Two statements are equivalent if one is true whenever the other is true, and vice versa. - "P if and only if Q" (P iff Q) means "If P then Q" AND "If Q then P". - **Contrapositive:** "If not Q then not P" is logically equivalent to "If P then Q". - **Converse:** "If Q then P" (not equivalent to "If P then Q"). - **Inverse:** "If not P then not Q" (not equivalent to "If P then Q"). #### Well-Ordering Principle - Any nonempty set of nonnegative integers has a smallest element. - This principle is equivalent to the principle of mathematical induction. ### Mathematical Induction #### Principle of Mathematical Induction To prove that a predicate $P(n)$ holds for every number $n$ greater than or equal to some particular number $n_0$: 1. **Base Case:** Prove $P(n_0)$. 2. **Induction Hypothesis:** Let $n$ be an arbitrary but fixed number greater than or equal to $n_0$, and assume $P(n)$ is true. 3. **Induction Step:** Assuming the induction hypothesis, prove $P(n+1)$. - **Analogy:** Climbing a ladder. If you can get on the bottom rung ($P(n_0)$ is true), and if you can climb from any rung to the next higher rung (if $P(n)$ then $P(n+1)$), then you can reach any rung. #### Example (Sum of Powers of 2) Prove that for any $n \geq 0$, $\sum_{i=0}^{n-1} 2^i = 2^n - 1$. 1. **Base Case ($n=0$):** $\sum_{i=0}^{-1} 2^i = 0$ (empty sum). $2^0 - 1 = 1 - 1 = 0$. So $P(0)$ is true. 2. **Induction Hypothesis:** Assume $P(n)$ is true for some fixed but arbitrary $n \geq 0$. That is, $\sum_{i=0}^{n-1} 2^i = 2^n - 1$. 3. **Induction Step:** Prove $P(n+1)$, which is $\sum_{i=0}^{n} 2^i = 2^{n+1} - 1$. - Left side: $\sum_{i=0}^{n} 2^i = (\sum_{i=0}^{n-1} 2^i) + 2^n$. - By induction hypothesis: $(2^n - 1) + 2^n$. - This simplifies to $2 \cdot 2^n - 1 = 2^{n+1} - 1$. - Thus, $P(n+1)$ is true. #### Strong Induction To prove that a predicate $P(n)$ holds for every number $n$ greater than or equal to some particular number $n_0$: 1. **Base Cases:** Prove $P(m)$ for all $m$ such that $n_0 \leq m \leq n_1$, where $n_1$ is some number $\geq n_0$. (Often, multiple base cases are needed if the induction step refers to values far back). 2. **Induction Hypothesis:** Let $n \geq n_1$ be arbitrary but fixed, and assume that $P(m)$ holds whenever $n_0 \leq m \leq n$. 3. **Induction Step:** Assuming the induction hypothesis, show that $P(n+1)$ holds. - **Difference from Ordinary Induction:** The induction hypothesis assumes the truth of $P(m)$ for *all* values from $n_0$ up to $n$, not just $P(n)$. - **Equivalence:** Anything that can be proved using strong induction can also be proved using ordinary induction (perhaps less elegantly). #### Structural Induction - Generalizes proof by induction to non-numerical objects. - Used for sets defined inductively (base cases and constructor cases). - To prove a predicate $P(x)$ for all elements $x$ in an inductively defined set $S$: 1. **Base Cases:** Prove $P(b)$ for each base element $b \in S$. 2. **Constructor Cases:** For each $k$-place constructor operation $c$, if $x_1, \dots, x_k \in S$ and $P(x_1), \dots, P(x_k)$ all hold, then prove $P(c(x_1, \dots, x_k))$. - **Example (Balanced Strings of Parentheses - BSP):** Every BSP has equal numbers of left and right parentheses. - **Inductive Definition of BSPs:** - **Base Case:** The empty string $\lambda$ is a BSP. - **Constructor Cases:** - If $x$ is a BSP, then $(x)$ is a BSP. - If $x$ and $y$ are BSPs, then $xy$ is a BSP. - **Proof:** 1. **Base Case ($\lambda$):** The empty string has zero left parentheses and zero right parentheses, so they are equal. 2. **Constructor Case 1 ($(x)$):** Assume $x$ has an equal number of left and right parentheses (say $n$ of each) by induction hypothesis. Then $(x)$ has $n+1$ left parentheses and $n+1$ right parentheses, so they are equal. 3. **Constructor Case 2 ($xy$):** Assume $x$ has $n$ of each and $y$ has $m$ of each by induction hypothesis. Then $xy$ has $n+m$ left parentheses and $n+m$ right parentheses, so they are equal. ### Sets A set is an unordered collection of distinct objects, called **elements** or **members**. #### Set Notation - **Curly braces:** $\{1, 2, 3\}$ - **Membership:** $x \in S$ (x is a member of S), $x \notin S$ (x is not a member of S) - **Cardinality (size):** $|S|$ (number of elements in S) - **Empty Set:** $\emptyset$ or $\{\}$ (contains no objects), $|\emptyset| = 0$ - **Finite Set:** Can be listed in full, has a non-negative integer cardinality. - **Infinite Set:** Not finite (e.g., integers). #### Common Sets - $\mathbb{Z} = \{\dots, -2, -1, 0, 1, 2, \dots\}$ (integers) - $\mathbb{N} = \{0, 1, 2, 3, \dots\}$ (nonnegative integers, natural numbers) - $\mathbb{R}$ (real numbers) - $\mathbb{Q}$ (rational numbers) #### Subsets and Supersets - **Subset:** $A \subseteq B$ (A is a subset of B, possibly equal to B). - **Proper Subset:** $A \subset B$ (A is a subset of B, and $A \neq B$). - **Superset:** $B \supseteq A$ (B is a superset of A). - **Proper Superset:** $B \supset A$ (B is a proper superset of A). - **Power Set:** $\mathcal{P}(S)$ is the set of all subsets of $S$. - If $S = \{3, 17\}$, then $\mathcal{P}(S) = \{\emptyset, \{3\}, \{17\}, \{3, 17\}\}$. - If $|S|=n$, then $|\mathcal{P}(S)|=2^n$. #### Set Operations - **Union:** $A \cup B = \{x : x \in A \text{ or } x \in B\}$ - **Intersection:** $A \cap B = \{x : x \in A \text{ and } x \in B\}$ - **Complement:** $\bar{A} = \{x : x \notin A\}$ (relative to a defined universe $U$) - **Difference:** $A - B = A \cap \bar{B} = \{x : x \in A \text{ and } x \notin B\}$ #### Properties of Set Operations - **Associative Laws:** - $(A \cup B) \cup C = A \cup (B \cup C)$ - $(A \cap B) \cap C = A \cap (B \cap C)$ - **Commutative Laws:** - $A \cup B = B \cup A$ - $A \cap B = B \cap A$ - **Distributive Laws:** - $A \cap (B \cup C) = (A \cap B) \cup (A \cap C)$ - $A \cup (B \cap C) = (A \cup B) \cap (A \cup C)$ #### Cartesian Product - **Ordered Pair:** $(x, y)$ (order matters, $(x, y) \neq (y, x)$ unless $x=y$) - **Ordered n-tuple:** $(x_1, x_2, \dots, x_n)$ - **Cartesian Product:** $A \times B = \{(x, y) : x \in A \text{ and } y \in B\}$ - If $A = \{1, 2, 3\}$ and $B = \{-1, -2\}$, then $A \times B = \{(1, -1), (1, -2), (2, -1), (2, -2), (3, -1), (3, -2)\}$. - If $A$ and $B$ are finite, $|A \times B| = |A| \cdot |B|$. #### Infinite Sets - **Countably Infinite:** A set that has a bijection with the natural numbers $\mathbb{N}$. - Examples: $\mathbb{N}$, even numbers, odd numbers, $\mathbb{Z}$, $\mathbb{N} \times \mathbb{N}$. - All countably infinite sets have the same "size" (cardinality $\aleph_0$). - The union of countably many countably infinite sets is countably infinite. - **Countable:** A set that is either finite or countably infinite. - **Uncountable:** A set that is not countable. - Examples: $\mathcal{P}(\mathbb{N})$, real numbers $\mathbb{R}$. - $\mathcal{P}(S)$ is always "larger" than $S$ for any set $S$ (finite or infinite). This is proven by **diagonalization argument**. ### Relations and Functions #### Relations - **Definition:** A relation is a connection between things. Formally, a relation is a set of ordered $n$-tuples, a subset of the Cartesian product of sets. - **Binary Relation:** A relation between two things, represented by ordered pairs. $R \subseteq A \times B$. - **Example:** The "lives at" relation between people $P$ and addresses $A$ is a subset of $P \times A$. - **Inverse Relation:** $R^{-1} = \{(y, x) : (x, y) \in R\}$. Reverses the roles of the components. #### Properties of Binary Relations on a Set $S \times S$ - **Reflexive:** $R(x, x)$ holds for every $x \in S$. (e.g., equality, $\leq$) - **Reflexive Closure ($R^{refl}$):** $R \cup \{(x, x) : x \in S\}$. Adds all missing self-loops. - **Irreflexive:** $R(x, x)$ never holds for any $x \in S$. (e.g., $ ### Directed Graphs (Digraphs) A directed graph (digraph) is an ordered pair $(V, A)$, where $V$ is a non-empty set of **vertices** (or nodes) and $A$ is a subset of $V \times V$ representing **arcs** (or directed edges). - Arcs are written $v \to w$ rather than $(v, w)$. - A vertex can have an arc to itself (self-loop). - Between any pair of distinct vertices, there can be at most one arc in a given direction. #### Digraph Properties - **Walk:** A sequence of vertices $v_0, v_1, \dots, v_n$ such that $v_i \to v_{i+1} \in A$ for each $i ### Undirected Graphs An undirected graph (or simply graph) is a pair $(V, E)$, where $V$ is a set of **vertices** and $E$ is a set of **edges**. - An **edge** is a two-element subset of $V$, typically written $x-y$. - Edges have no direction. - No self-loops ($x-x$ is not allowed). - No multiple edges between the same two vertices. - **Endpoints:** $x$ and $y$ are endpoints of edge $x-y$. - **Incident:** Edge $x-y$ is incident on $x$ and $y$. #### Graph Properties - **Walk:** A series of vertices where successive vertices are joined by an edge. - **Path:** A walk that does not repeat any edge. - **Circuit:** A path that ends at its beginning. - **Cycle:** A circuit that repeats no vertex except the start/end vertex. - A trivial path consists of a single vertex. - **Connected:** Two vertices are connected if there is a path between them. - **Connected Components:** The equivalence classes of the "connected" relation. A graph is connected if it has only one component. - **Degree of a Vertex:** The number of edges incident on it. - The sum of the degrees of all vertices in any graph is always even (equal to twice the number of edges). #### Types of Graphs - **Complete Graph ($K_n$):** A graph with $n$ vertices where every pair of distinct vertices is connected by an edge. - $K_n$ has $n(n-1)/2$ edges. - **Tree:** A connected acyclic graph. - A tree with $n$ vertices has $n-1$ edges. - Removing an edge disconnects a tree. Adding an edge (without adding vertices) creates a cycle. - Between any two vertices in a tree, there is one and only one path. - **Forest:** An acyclic graph (its connected components are trees). - **Bipartite Graph:** A graph whose vertices can be divided into two disjoint sets $A$ and $B$ such that every edge connects a vertex in $A$ to one in $B$. - Bipartite graphs are 2-colorable. #### Graph Isomorphism - **Isomorphic Graphs:** Two graphs $G=(V,E)$ and $G'=(V',E')$ are isomorphic if there is a bijection $f: V \to V'$ such that $x-y \in E$ if and only if $f(x)-f(y) \in E'$. - Isomorphic graphs have the same number of vertices, edges, and vertices of each degree. - Determining if two graphs are isomorphic is a complex problem. #### Eulerian Circuits - **Eulerian Circuit:** A circuit that includes every edge exactly once. - **Euler's Theorem:** A connected graph has an Eulerian circuit if and only if every vertex has an even degree. #### Connectivity in Graphs - **Edge Connectivity:** The minimum number of edges that must be removed to disconnect the graph. - **Bridge:** An edge whose removal increases the number of connected components. - **Vertex Connectivity:** The minimum number of vertices that must be removed to disconnect the graph. - **Articulation Point:** A vertex whose removal increases the number of connected components. - **$k$-connected:** A graph with vertex connectivity of at least $k$. - A 1-connected graph is connected. - A 2-connected graph (biconnected) has no articulation points. - **Menger's Theorem:** Relates the minimum size of an edge/vertex cut to the maximum number of edge/vertex-disjoint paths. - **Edge Version:** Minimum size of an $(s,t)$-edge-cut equals maximum number of edge-disjoint paths from $s$ to $t$. - **Vertex Version:** Minimum size of an $(s,t)$-vertex-cut equals maximum number of vertex-disjoint paths from $s$ to $t$. - **Max-Flow-Min-Cut Theorem:** Generalization of Menger's theorem for weighted digraphs (networks). Maximum flow from source to destination equals minimum capacity of an edge-cut. ### Graph Coloring - **Coloring:** An assignment of colors to vertices such that adjacent vertices have different colors. A $k$-coloring uses $k$ colors. - **Chromatic Number ($\chi(G)$):** The minimum number of colors needed to color a graph $G$. - $\chi(K_n) = n$. - $\chi(\text{nontrivial tree}) = 2$. - $\chi(C_n) = 2$ if $n$ is even, $3$ if $n$ is odd. - If a graph has a $k$-clique (a $K_k$ subgraph), then $\chi(G) \geq k$. - The chromatic number is at most one more than the maximum degree of any vertex. - **Planar Graph:** A graph that can be drawn in the plane without any edges crossing. - **Four-Color Theorem:** Every planar graph is 4-colorable. - **Applications:** Scheduling problems, resource allocation (e.g., register allocation in compilers, gate assignment for flights). ### Finite Automata A finite automaton (or finite state machine) is a mathematical model of computation. It is a quintuple $(K, \Sigma, \Delta, s, F)$, where: - $K$: A finite set of **states**. - $\Sigma$: An **alphabet** (finite set of input symbols). - $\Delta$: A set of **labeled arcs** (transitions), $\Delta \subseteq K \times (\Sigma \cup \{\lambda\}) \times K$. $\lambda$ denotes the empty string. - $s \in K$: The **start state**. - $F \subseteq K$: The set of **final states** (or accepting states). #### Types of Finite Automata - **Deterministic Finite Automaton (DFA):** - For every state $q \in K$ and every symbol $a \in \Sigma$, there is exactly one transition $(q, a, q') \in \Delta$. - No $\lambda$-transitions. - Its behavior is fully determined by the input. - **Transition Function ($\delta$):** Maps a state and symbol to the next state, $\delta: K \times \Sigma \to K$. - **Nondeterministic Finite Automaton (NDFA):** - May have multiple transitions for a given state and symbol. - May have $\lambda$-transitions (transitions without consuming input). - Accepts an input string if there is *any* path from the start state to a final state that consumes the entire input. - DFAs are a proper subset of NDFAs. #### Languages and Acceptance - **Language:** A set of strings over an alphabet $\Sigma$. - **Acceptance:** An automaton $M$ accepts a string $w$ if there is a computation (sequence of transitions) from the start state $s$ with input $w$ that ends in a final state $f \in F$. - **$L(M)$:** The language accepted by automaton $M$. - **Equivalence:** Two finite automata are equivalent if they accept the same language. #### Subset Construction - A constructive proof that any language accepted by an NDFA can also be accepted by some DFA. - The states of the resulting DFA are subsets of the NDFA's states. - Can lead to an exponential blow-up in the number of states in the worst case, but often results in smaller DFAs if only reachable states are considered. #### Regular Languages - **Definition:** Languages that can be accepted by finite automata. - **Regular Expressions:** Strings that denote regular languages. - **Symbols:** Alphabet symbols (e.g., $a, b$), $\emptyset$ (empty language), $\lambda$ (empty string). - **Operators:** $\cup$ (union), $\cdot$ (concatenation), $*$ (Kleene star). - **Kleene Star ($L^*$):** The language containing all concatenations of zero or more strings from $L$. - **Relationship to Automata:** A language can be represented by a regular expression if and only if there exists a finite automaton that accepts the language. - **Closure Properties:** If $L_1$ and $L_2$ are regular languages, then $L_1 \cup L_2$, $L_1 \cdot L_2$ (concatenation), $L_1^*$ (Kleene star), $\bar{L_1}$ (complement), and $L_1 \cap L_2$ are also regular languages. #### Limitations of Finite Automata - Finite automata cannot recognize languages that require unbounded memory, such as strings with equal numbers of 'a's and 'b's. This is proven using the **pumping lemma**. ### Order Notation Describes the asymptotic behavior of functions, especially for analyzing algorithm runtime and space complexity. #### Basic Concepts - **Runtime Function ($f(n)$):** Maximum number of steps an algorithm takes on an input of size $n$. - **Size of Input:** Measure of space taken (e.g., length of string, number of bits for a number $\log_2 n$). - **Asymptotic Equivalence ($f(n) \sim g(n)$):** $\lim_{n \to \infty} \frac{f(n)}{g(n)} = 1$. Means $f$ and $g$ grow at roughly the same rate. #### Asymptotic Notations - **Big-O ($O(g(n))$):** Upper bound. $f(n) = O(g(n))$ if $\lim_{n \to \infty} \frac{f(n)}{g(n)} 0$ and $n_0 \geq 0$ such that for all $n \geq n_0$, $f(n) \leq c \cdot g(n)$. - Means $f$ grows no faster than $g$. - **Big-Omega ($\Omega(g(n))$):** Lower bound. $f(n) = \Omega(g(n))$ if $\lim_{n \to \infty} \frac{f(n)}{g(n)} > 0$. - Alternatively, there exist constants $c > 0$ and $n_0 \geq 0$ such that for all $n \geq n_0$, $f(n) \geq c \cdot g(n)$. - Means $f$ grows no slower than $g$. - **Big-Theta ($\Theta(g(n))$):** Tight bound. $f(n) = \Theta(g(n))$ if $f(n) = O(g(n))$ and $f(n) = \Omega(g(n))$. - Alternatively, there exist constants $c_1, c_2 > 0$ and $n_0 \geq 0$ such that for all $n \geq n_0$, $c_1 \cdot g(n) \leq f(n) \leq c_2 \cdot g(n)$. - Means $f$ grows at the same rate as $g$. - **Little-o ($o(g(n))$):** Strict upper bound. $f(n) = o(g(n))$ if $\lim_{n \to \infty} \frac{f(n)}{g(n)} = 0$. - Means $f$ grows strictly slower than $g$. - **Little-omega ($\omega(g(n))$):** Strict lower bound. $f(n) = \omega(g(n))$ if $\lim_{n \to \infty} \frac{f(n)}{g(n)} = \infty$. - Means $f$ grows strictly faster than $g$. #### Properties of Growth Rates - **Highest-Order Term Dominates:** In a sum of functions, the term with the highest growth rate determines the overall order. Lower-order terms can be dropped. - Example: $5n^3 + 8n + 2 = \Theta(n^3)$. - **Logarithmic Functions:** Grow slower than any polynomial function. - Any two logarithmic functions are $\Theta$ of each other (base doesn't matter asymptotically). - $\log n = o(n^k)$ for any $k > 0$. - **Polynomial Functions:** - $n^a$ grows slower than $n^b$ if $a 1$. - **Exponential Functions:** Grow faster than any polynomial function. - $c^n = o(d^n)$ if $c ### Counting Counting in discrete mathematics involves finding the number of elements in a set without enumerating them one by one. #### Basic Counting Principles - **Sum Rule:** If sets $A$ and $B$ are disjoint, then $|A \cup B| = |A| + |B|$. - Example: 12 sophomores + 9 juniors = 21 students. - **Product Rule:** For two sets $A$ and $B$, $|A \times B| = |A| \cdot |B|$. - Generalized Product Rule: For a sequence of $k$ elements, if there are $n_1$ choices for the first, $n_2$ for the second, ..., $n_k$ for the $k$-th, then there are $n_1 \cdot n_2 \cdot \dots \cdot n_k$ total choices. - Example: License plates (3 letters, 3 digits) = $26^3 \cdot 10^3$. - **Counting "Out of Order":** Sometimes reordering the selection process simplifies counting by avoiding complex case analysis. #### Permutations - **Permutation:** An arrangement of elements in a specific order. Formally, a bijection $p: \{0, \dots, |S|-1\} \to S$. - **Number of Permutations of a Set of Size $n$ ($n!$):** $n \cdot (n-1) \cdot \dots \cdot 1$. - $0! = 1$. - **Cyclically Equivalent Permutations:** Permutations that are rotations of each other. - Number of cyclically inequivalent permutations of a set of size $n$ is $(n-1)!$. - **Permutations of Subsets ($n P_k$):** Number of permutations of $k$ elements chosen from a set of $n$ distinct elements. - $n P_k = \frac{n!}{(n-k)!}$. - **Permutations of Multisets:** For a multiset $M=(S, \mu)$ of size $m$ (where $\mu(x)$ is the multiplicity of $x$), the number of distinct permutations is $\frac{m!}{\prod_{x \in S} \mu(x)!}$. - Example: ANAGRAM (7 letters, 3 A's) = $\frac{7!}{3!1!1!1!1!} = \frac{5040}{6} = 840$. #### Combinations - **Combination:** A selection of elements where order does not matter. - **Combinations of Subsets ($\binom{n}{k}$ or $n C_k$):** Number of ways to choose $k$ elements from a set of $n$ distinct elements (without replacement). - $\binom{n}{k} = \frac{n!}{k!(n-k)!}$. - Symmetrical: $\binom{n}{k} = \binom{n}{n-k}$. - **Partitions of a Set into Labeled Subsets:** Number of ways to partition a set of $n$ elements into $m$ disjoint labeled subsets of sizes $k_1, \dots, k_m$ is $\binom{n}{k_1, k_2, \dots, k_m} = \frac{n!}{k_1!k_2!\dots k_m!}$. - **Combinations with Replacement:** Choosing $k$ elements from $n$ types of elements (order doesn't matter, replacement allowed). - Number of ways: $\binom{k+n-1}{k}$. This is equivalent to "stars and bars". - **Partitions of an Integer:** Expressing a positive integer $n$ as the sum of $k$ smaller positive integers (the order of parts does not matter). - $p_k(n)$: Number of partitions of $n$ into $k$ parts. - $p_k(n) = p_{k-1}(n-1) + p_k(n-k)$ (recursive formula). - The number of partitions of $n$ into $k$ parts is equal to the number of partitions of $n$ where the largest part is $k$. (Young diagrams) ### Recurrence Relations A recurrence relation defines the terms of a sequence based on previous terms. They are crucial for analyzing algorithms. #### Definition An equation or set of equations that defines $a_n$ in terms of $a_i$ for $i 0$, then $T(n) = \Theta(n^e)$. 2. If $f(n) = \Theta(n^e)$, then $T(n) = \Theta(n^e \log n)$. 3. If $f(n) = \Omega(n^{e+\epsilon})$ for some $\epsilon > 0$, and $af(n/b) \leq c f(n)$ for some $c e$, then $T(n) = \Theta(n^d)$. ### Probability Probability quantifies the likelihood of events occurring in repeatable procedures. #### Basic Definitions - **Trial:** A repeatable procedure with a well-defined set of outcomes. - **Outcome:** A possible result of a trial. - **Sample Space ($S$):** The set of all possible outcomes of a trial. (For finite probability, $S$ is finite). - **Event ($E$):** Any subset of the sample space ($E \subseteq S$). - **Probability Function ($\text{Pr}$):** A function $\text{Pr}: \mathcal{P}(S) \to \mathbb{R}$ that assigns probabilities to events. #### Axioms of Finite Probability 1. $0 \leq \text{Pr}(A) \leq 1$ for any event $A$. 2. $\text{Pr}(\emptyset) = 0$ and $\text{Pr}(S) = 1$. 3. If $A$ and $B$ are disjoint events ($A \cap B = \emptyset$), then $\text{Pr}(A \cup B) = \text{Pr}(A) + \text{Pr}(B)$. #### Derived Properties - $\text{Pr}(\bar{A}) = 1 - \text{Pr}(A)$ (complement rule). - If $A \subseteq B$, then $\text{Pr}(A) \leq \text{Pr}(B)$. - **Inclusion-Exclusion Principle:** For any two events $A$ and $B$ (not necessarily disjoint), $\text{Pr}(A \cup B) = \text{Pr}(A) + \text{Pr}(B) - \text{Pr}(A \cap B)$. - For a set of disjoint events $A_1, \dots, A_n$, $\text{Pr}(\cup_{i=1}^n A_i) = \sum_{i=1}^n \text{Pr}(A_i)$. #### Equally Likely Outcomes If all outcomes in $S$ are equally likely, then for any event $E$: $\text{Pr}(E) = \frac{|E|}{|S|}$. - Example: Flipping a fair coin 4 times, probability of exactly 2 heads: $\binom{4}{2}/2^4 = 6/16 = 3/8$. #### Conditional Probability - **Definition:** The probability of event $A$ occurring given that event $B$ has occurred ($\text{Pr}(B) > 0$). $\text{Pr}(A|B) = \frac{\text{Pr}(A \cap B)}{\text{Pr}(B)}$. - **Example (Monty Hall Problem):** Switching doors increases the probability of winning. - **Prosecutor's Fallacy:** Confusing $\text{Pr}(A|B)$ with $\text{Pr}(B|A)$. #### Independence - **Independent Events:** Events $A$ and $B$ are independent if knowledge of one gives no information about the other. - $\text{Pr}(A \cap B) = \text{Pr}(A) \cdot \text{Pr}(B)$. - Equivalently, $\text{Pr}(A|B) = \text{Pr}(A)$ (if $\text{Pr}(B) > 0$). - **Pairwise Independent:** A set of events where every pair of events is independent. - **Mutually Independent:** A stronger condition where every event is independent of any combination of other events. - **Conditional Independence:** Events $A$ and $B$ are conditionally independent given $E$ if $\text{Pr}(A \cap B|E) = \text{Pr}(A|E) \text{Pr}(B|E)$. #### Law of Total Probability If events $S_1, \dots, S_n$ form a partition of the sample space $S$ (disjoint and their union is $S$), then for any event $A$: $\text{Pr}(A) = \sum_{i=1}^n \text{Pr}(A|S_i) \text{Pr}(S_i)$. ### Bayes' Theorem Bayes' Theorem provides a way to update the probability of a hypothesis given new evidence. #### Formula For events $A$ and $B$ (with $\text{Pr}(A)>0, \text{Pr}(B)>0$): $\text{Pr}(A|B) = \frac{\text{Pr}(B|A) \text{Pr}(A)}{\text{Pr}(B)}$. - $\text{Pr}(A|B)$: Posterior probability (probability of hypothesis $A$ given evidence $B$). - $\text{Pr}(B|A)$: Likelihood (probability of evidence $B$ given hypothesis $A$). - $\text{Pr}(A)$: Prior probability (initial probability of hypothesis $A$). - $\text{Pr}(B)$: Marginal probability of evidence $B$. #### Using Law of Total Probability for $\text{Pr}(B)$ If $A_1, \dots, A_n$ form a partition of the sample space, then $\text{Pr}(B) = \sum_{i=1}^n \text{Pr}(B|A_i) \text{Pr}(A_i)$. So, Bayes' Theorem can be written as: $\text{Pr}(A_j|B) = \frac{\text{Pr}(B|A_j) \text{Pr}(A_j)}{\sum_{i=1}^n \text{Pr}(B|A_i) \text{Pr}(A_i)}$. #### Applications - **Updating Beliefs:** Used to update the probability of a hypothesis (e.g., winning the Monty Hall game, probability of guilt given evidence). - **Naïve Bayes Classifier:** A machine learning algorithm that uses Bayes' Theorem with the assumption of conditional independence between features. Used in spam filtering, image recognition, etc. ### Random Variables and Expectation Random variables assign numerical values to outcomes of a trial, and expectation describes their average value. #### Random Variables - **Definition:** A function $X: S \to T$ that maps outcomes from a sample space $S$ to numerical values in a set $T$ (often $\mathbb{N}$ or $\mathbb{R}$). - **Bernoulli Variable:** A random variable that takes on values 0 or 1. - Characterized by probability $p$ of value 1 (success). - **Geometric Random Variable:** Represents the number of trials until the first success in a sequence of Bernoulli trials. - $\text{Pr}(X=n) = (1-p)^{n-1}p$. #### Probability Distributions - **Probability Mass Function (PMF):** $\text{PMF}_X(x) = \text{Pr}(X=x)$. Maps each possible value $x$ to its probability. - **Cumulative Distribution Function (CDF):** $\text{CDF}_X(x) = \text{Pr}(X \leq x) = \sum_{y \leq x} \text{PMF}_X(y)$. #### Expectation (Mean) - **Definition:** The weighted average of the possible values of a random variable $X$. $E(X) = \sum_{x \in T} \text{Pr}(X=x) \cdot x$. - **Alternative Formula:** $E(X) = \sum_{s \in S} \text{Pr}(s) \cdot X(s)$. - **Properties (Linearity of Expectation):** - $E(c) = c$ for a constant $c$. - $E(cX) = cE(X)$. - $E(X+Y) = E(X) + E(Y)$ (holds even if $X$ and $Y$ are not independent). - $E(\sum_i c_i X_i) = \sum_i c_i E(X_i)$. - **Examples:** - Expected value of a fair six-sided die roll: 3.5. - Expected value of a Bernoulli variable with probability $p$: $p$. - Expected value of a geometric random variable with probability $p$: $1/p$. #### Variance - **Definition:** A measure of how spread out the possible values of a random variable are. $\text{Var}(X) = E((X - E(X))^2) = E(X^2) - E(X)^2$. - **Properties:** - $\text{Var}(c) = 0$. - $\text{Var}(cX) = c^2 \text{Var}(X)$. - If $X$ and $Y$ are independent, $\text{Var}(X+Y) = \text{Var}(X) + \text{Var}(Y)$. - **Example:** Variance of a Bernoulli variable with probability $p$: $p(1-p)$. - **Example:** Variance of a geometric random variable with probability $p$: $(1-p)/p^2$. ### Modular Arithmetic A system of arithmetic for integers, where numbers "wrap around" when they reach a certain value, the modulus. #### Congruence - **Definition:** $x \equiv y \pmod{m}$ (read "$x$ is congruent to $y$ modulo $m$") if: 1. $x \pmod{m} = y \pmod{m}$ (remainders are equal when divided by $m$). 2. There exists an integer $k$ such that $x = km + y$. 3. $x - y$ is divisible by $m$. - **Congruence Classes (Residue Classes):** The set of integers that leave the same remainder $r$ when divided by $m$. Denoted $[r]$ or $x \pmod{m}$. - $\mathbb{Z}_m$: The set of $m$ congruence classes modulo $m$, i.e., $\{[0], [1], \dots, [m-1]\}$. - **Equivalence Relation:** Congruence modulo $m$ is an equivalence relation (reflexive, symmetric, transitive). #### Operations in $\mathbb{Z}_m$ - **Addition:** $[x] + [y] = [x+y]$. - **Subtraction:** $[x] - [y] = [x-y]$. - **Multiplication:** $[x] \cdot [y] = [x \cdot y]$. - **Exponentiation:** $[x]^n = [x^n]$. - **Cardinal Rule:** When performing modular arithmetic, you can replace any number $x$ with $x \pmod{m}$ at any point. However, this applies to the base of an exponent, not the exponent itself ($[x]^n \neq [x \pmod{m}]^{n \pmod{m}}$). #### Multiplicative Inverses - **Multiplicative Inverse:** An element $[y]$ is the multiplicative inverse of $[x]$ in $\mathbb{Z}_m$ if $[x] \cdot [y] = [1]$. Denoted $[x]^{-1}$. - **Existence:** A multiplicative inverse for $[x]$ exists in $\mathbb{Z}_m$ if and only if $\gcd(x, m) = 1$. - **Prime Modulus:** If $m$ is a prime number $p$, then every nonzero element in $\mathbb{Z}_p$ has a multiplicative inverse. $\mathbb{Z}_p$ is a **field**. - **Extended Euclidean Algorithm:** Used to find multiplicative inverses. If $\gcd(a, m) = 1$, it finds integers $x, y$ such that $ax + my = 1$. Then $ax \equiv 1 \pmod{m}$, so $[x]$ is $[a]^{-1}$. #### Repeated Squaring (Modular Exponentiation) - An efficient algorithm to compute $x^n \pmod{m}$. - It reduces the number of multiplications from $n-1$ to $\Theta(\log n)$ (number of bits in $n$). - **Process:** If $n$ is even, $x^n = (x^{n/2})^2$. If $n$ is odd, $x^n = x \cdot (x^{(n-1)/2})^2$. #### Discrete Logarithms - **Definition:** Given $y, b, m$, find $x$ such that $y \equiv b^x \pmod{m}$. This $x$ is the discrete logarithm of $y$ to base $b$ modulo $m$. - **Computational Difficulty:** Easy to compute modular exponentiation, but hard to compute discrete logarithms for large numbers. This asymmetry is the basis for public-key cryptography. ### Public Key Cryptography A system allowing secure communication between parties who have no prior shared secret, relying on computationally hard problems. #### Cryptography Basics - **Plaintext:** The original message. - **Ciphertext:** The encrypted message. - **Key:** Secret information used for encryption and decryption. - **Encryption:** Transforming plaintext into ciphertext using a key. - **Decryption:** Recovering plaintext from ciphertext using a key. - **Weak Cipher:** Easy to crack. #### Traditional Ciphers - **Caesar Cipher:** Shifts each letter by a fixed number of positions (key is the shift amount). Weak. - **Substitution Cipher:** Replaces each letter with another letter (key is a permutation of the alphabet). Weak (vulnerable to frequency analysis). - **One-Time Pad:** Key is a random sequence of bits as long as the message, combined with plaintext using XOR. Unbreakable if key is truly random and used only once, but clumsy to distribute keys. #### Diffie-Hellman Key Exchange A protocol allowing two parties (Alice and Bob) to establish a shared secret key over an insecure channel, without Eve (eavesdropper) being able to determine the key. 1. **Public Parameters (green, known to all):** A large prime $p$ and a generator $g$ (a number whose powers modulo $p$ generate a large group). 2. **Alice's Secret (red, known only to Alice):** Alice chooses a secret integer $a$. 3. **Bob's Secret (red, known only to Bob):** Bob chooses a secret integer $b$. 4. **Alice's Public Key (blue, sent over public channel):** Alice computes $A = g^a \pmod{p}$ and sends $A$ to Bob. 5. **Bob's Public Key (blue, sent over public channel):** Bob computes $B = g^b \pmod{p}$ and sends $B$ to Alice. 6. **Shared Secret Key (red, known only to Alice and Bob):** - Alice computes $K = B^a \pmod{p} = (g^b)^a \pmod{p} = g^{ab} \pmod{p}$. - Bob computes $K = A^b \pmod{p} = (g^a)^b \pmod{p} = g^{ab} \pmod{p}$. - Both arrive at the same shared secret $K$. #### Security Basis - **One-Way Function:** A function that is easy to compute but computationally hard to invert (find the input given the output). - **Discrete Logarithm Problem:** Finding $x$ in $y \equiv g^x \pmod{p}$ is computationally hard for large $p$. - **Computational Difficulty:** Eve knows $g, p, A, B$. To find $K$, she would need to find $a$ from $A=g^a \pmod{p}$ (or $b$ from $B=g^b \pmod{p}$), which is the discrete logarithm problem. This is assumed to be hard. - **RSA Cryptosystem:** Another public-key system relying on the difficulty of factoring large numbers into their prime factors. #### Practical Considerations - The security of these systems relies on the assumption that certain mathematical problems (like discrete logarithm or factoring) are hard, not on mathematical proof. - Key length (size of $p$) is chosen to make brute-force attacks infeasible within practical timeframes (e.g., 2048 bits for $p$).